My Blog Posts, in Reverse Chronological Order


Going Deeper Into Reinforcement Learning: Fundamentals of Policy Gradients

As I stated in my last blog post, I am feverishly trying to read more research papers. One category of papers that seems to be coming up a lot recently is policy gradients, a popular class of reinforcement learning algorithms that estimate a gradient for a function approximator. Thus, the purpose of this blog post is for me to explicitly write out the mathematical foundations of policy gradients so that I can gain understanding. In turn, I hope some of my explanations will be useful to a broader audience of AI students.

Assumptions and Problem Statement

In any type of research domain, we always have to make some set of assumptions. (By “we”, I refer to the researchers who write papers on this.) With reinforcement learning and policy gradients, the assumptions usually mean the episodic setting where an agent engages in multiple trajectories in its environment. As an example, an agent could be playing a game of Pong, so one episode or trajectory consists of a full start-to-finish game.

We define a trajectory \(\tau\) of length \(T\) as

\[\tau = (s_0, a_0, r_0, s_1, a_1, r_1, \ldots, s_{T-1}, a_{T-1}, r_{T-1}, s_T)\]where \(s_0\) comes from the starting distribution of states, \(a_i \sim \pi_\theta(a_i| s_i)\), and \(s_i \sim P(s_i | s_{i-1},a_{i-1})\) with \(P\) the dynamics model (i.e. how the environment changes). We actually ignore the dynamics when optimizing, since all we care about is getting a good gradient signal for \(\pi_\theta\) to make it better. If this isn’t clear now, it will be clear soon. Also, the reward can be computed from the states and actions, since it’s usually a function of \((s_i,a_i,s_{i+1})\), so it’s not technically needed in the trajectory.

What’s our goal here with policy gradients? Unlike algorithms such as DQN, which strive to find an excellent policy indirectly through Q-values, policy gradients perform a direct gradient update on a policy to change its parameters, which is what makes it so appealing. Formally, we have:

\[{\rm maximize}_{\theta}\; \mathbb{E}_{\pi_{\theta}}\left[\sum_{t=0}^{T-1}\gamma^t r_t\right]\]

- Note I: I put \(\pi_{\theta}\) under the expectation. This means the rewards are computed from a trajectory which was generated under the policy \(\pi_\theta\). We have to find “optimal” settings of \(\theta\) to make this work.

- Note II: we don’t need to optimize the expected sum of discounted rewards, though it’s the formulation I’m most used to. Alternatives include ignoring \(\gamma\) by setting it to one, extending \(T\) to infinity if the episodes are infinite-horizon, and so on.

The above raises the all-important question: how do we find the best \(\theta\)? If you’ve taken optimization classes before, you should know the answer already: perform gradient ascent on \(\theta\), so we have \(\theta \leftarrow \theta + \alpha \nabla_\theta f(\theta)\) where \(f(\theta)\) is the function being optimized and \(\alpha\) is the step size. Here, that’s the expected value of whatever sum of rewards formula we’re using.

Two Steps: Log-Derivative Trick and Determining Log Probability

Before getting to the computation of the gradient, let’s first review two mathematical facts which will be used later, and which are also of independent interest. The first is the “log-derivative” trick, which tells us how to insert a log into an expectation when starting from \(\nabla_\theta \mathbb{E}[f(x)]\). Specifically, we have:

\[\begin{align} \nabla_\theta \mathbb{E}[f(x)] &= \nabla_\theta \int p_\theta(x)f(x)dx \\ &= \int \frac{p_\theta(x)}{p_\theta(x)} \nabla_\theta p_\theta(x)f(x)dx \\ &= \int p_\theta(x)\nabla_\theta \log p_\theta(x)f(x)dx \\ &= \mathbb{E}\Big[f(x)\nabla_\theta \log p_\theta(x)\Big] \end{align}\]where \(p_\theta\) is the density of \(x\). Most of these steps should be straightforward. The main technical detail to worry about is exchanging the gradient with the integral. I have never been comfortable in knowing when we are allowed to do this or not, but since everyone else does this, I will follow them.
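To convince myself that the log-derivative trick holds, here is a minimal numerical sketch. Everything in it is an assumption chosen for illustration: \(x\) is a Bernoulli variable with logit \(\theta\), and `f` is an arbitrary test function. For this distribution the score is \(\nabla_\theta \log p_\theta(x) = x - \sigma(\theta)\), so the sampled average of \(f(x)\nabla_\theta \log p_\theta(x)\) can be compared against the gradient computed by hand.

```python
import math
import random

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def f(x):
    # Arbitrary test function of the sample (an assumption for this demo).
    return 3.0 if x == 1 else -1.0

def exact_gradient(theta):
    # E[f(x)] = p*f(1) + (1-p)*f(0) with p = sigmoid(theta),
    # so d/dtheta E[f(x)] = p*(1-p)*(f(1) - f(0)).
    p = sigmoid(theta)
    return p * (1.0 - p) * (f(1) - f(0))

def score(x, theta):
    # grad_theta log p_theta(x) for a Bernoulli with logit theta.
    return x - sigmoid(theta)

def score_function_estimate(theta, num_samples, rng):
    # Monte Carlo average of f(x) * grad_theta log p_theta(x).
    p = sigmoid(theta)
    total = 0.0
    for _ in range(num_samples):
        x = 1 if rng.random() < p else 0
        total += f(x) * score(x, theta)
    return total / num_samples

theta = 0.3
rng = random.Random(0)
exact = exact_gradient(theta)
estimate = score_function_estimate(theta, 200_000, rng)
print(exact, estimate)
```

The two printed numbers should agree up to sampling noise, which is the content of the last line of the derivation.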

Another technical detail we will need is the gradient of the log probability of a trajectory since we will later switch \(x\) from above with a trajectory \(\tau\). The computation of \(\nabla_\theta \log p_\theta(\tau)\) proceeds as follows:

\[\begin{align} \nabla_\theta \log p_\theta(\tau) &= \nabla_\theta \log \left(\mu(s_0) \prod_{t=0}^{T-1} \pi_\theta(a_t|s_t)P(s_{t+1}|s_t,a_t)\right) \\ &= \nabla_\theta \left[\log \mu(s_0)+ \sum_{t=0}^{T-1} (\log \pi_\theta(a_t|s_t) + \log P(s_{t+1}|s_t,a_t)) \right]\\ &= \nabla_\theta \sum_{t=0}^{T-1}\log \pi_\theta(a_t|s_t) \end{align}\]The probability of \(\tau\) decomposes into a chain of probabilities by the Markov Decision Process assumption, whereby the next action only depends on the current state, and the next state only depends on the current state and action. To be explicit, we use the functions that we already defined: \(\pi_\theta\) and \(P\) for the policy and dynamics, respectively. (Here, \(\mu\) represents the starting state distribution.) We also observe that when taking gradients, the dynamics disappear, because neither \(\mu\) nor \(P\) depends on \(\theta\)!
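As a sanity check that the dynamics really do drop out, here is a small sketch under assumptions of my own choosing: a tabular softmax policy with one row of logits per state, a made-up start distribution `mu`, made-up dynamics `P`, and one hand-picked trajectory. The analytic gradient uses only the policy scores, and it is compared against a finite-difference gradient of the full \(\log p_\theta(\tau)\), which does include `mu` and `P`.

```python
import math

def softmax(logits):
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    z = sum(exps)
    return [e / z for e in exps]

def log_prob_trajectory(theta, mu, P, states, actions):
    # log p(tau) = log mu(s_0) + sum_t [log pi(a_t|s_t) + log P(s_{t+1}|s_t,a_t)]
    total = math.log(mu[states[0]])
    for t, a in enumerate(actions):
        s, s_next = states[t], states[t + 1]
        total += math.log(softmax(theta[s])[a])
        total += math.log(P[s][a][s_next])
    return total

def grad_log_prob_trajectory(theta, states, actions):
    # Sum of policy scores only; mu and P never appear here.
    grad = [[0.0] * len(row) for row in theta]
    for t, a in enumerate(actions):
        s = states[t]
        probs = softmax(theta[s])
        for k, p in enumerate(probs):
            grad[s][k] += (1.0 if k == a else 0.0) - p
    return grad

# Hypothetical 2-state, 2-action problem (all numbers are illustrative).
theta = [[0.2, -0.1], [0.5, 0.0]]
mu = [0.6, 0.4]
P = [[[0.7, 0.3], [0.4, 0.6]], [[0.5, 0.5], [0.1, 0.9]]]
states, actions = [0, 1, 1, 0], [1, 0, 1]

analytic = grad_log_prob_trajectory(theta, states, actions)

# Central finite differences on the full log-probability.
eps = 1e-6
numeric = [[0.0, 0.0], [0.0, 0.0]]
for s in range(2):
    for a in range(2):
        theta[s][a] += eps
        up = log_prob_trajectory(theta, mu, P, states, actions)
        theta[s][a] -= 2 * eps
        down = log_prob_trajectory(theta, mu, P, states, actions)
        theta[s][a] += eps  # restore the original value
        numeric[s][a] = (up - down) / (2 * eps)

print(analytic)
print(numeric)
```

The two printed gradients should match to several decimal places even though the analytic version never looks at the dynamics.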

Computing the Raw Gradient

Using the two tools above, we can now get back to our original goal, which was to compute the gradient of the expected sum of (discounted) rewards. Formally, let \(R(\tau)\) be the reward function we want to optimize (i.e. maximize). Using the above two tricks, we obtain:

\[\nabla_\theta \mathbb{E}_{\tau \sim \pi_\theta}[R(\tau)] = \mathbb{E}_{\tau \sim \pi_\theta} \left[R(\tau) \cdot \nabla_\theta \left(\sum_{t=0}^{T-1}\log \pi_\theta(a_t|s_t)\right)\right]\]In the above, the expectation is with respect to the policy function, so think of it as \(\tau \sim \pi_\theta\). In practice, we need trajectories to get an empirical expectation, which estimates this actual expectation.

So that’s the gradient! Unfortunately, we’re not quite done yet. The naive way is to run the agent on a batch of episodes, get a set of trajectories (call it \(\hat{\tau}\)) and update with \(\theta \leftarrow \theta + \alpha \nabla_\theta \mathbb{E}_{\tau \in \hat{\tau}}[R(\tau)]\) using the empirical expectation, but this will be too slow and unreliable due to high variance on the gradient estimates. After one batch, we may exhibit a wide range of results: much better performance, equal performance, or worse performance. The high variance of these gradient estimates is precisely why there has been so much effort devoted to variance reduction techniques. (I should also add from personal research experience that variance reduction is certainly not limited to reinforcement learning; it also appears in many statistical projects which concern a bias-variance tradeoff.)
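To make the naive procedure concrete, here is a minimal sketch on a toy problem that I made up for illustration (it is not from any of the references): there is a single state, two actions, a reward of 1 for action 1 and 0 otherwise, and the policy is a Bernoulli with logit `theta`. The function names, batch size, and step size are all assumptions. Each update uses the empirical mean of \(R(\tau) \cdot \sum_t \nabla_\theta \log \pi_\theta(a_t|s_t)\) over a batch of episodes.

```python
import math
import random

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def sample_episode(theta, horizon, rng):
    """Roll out one episode; return (R(tau), sum_t grad log pi(a_t|s_t))."""
    p = sigmoid(theta)  # probability of picking action 1
    total_reward, total_score = 0.0, 0.0
    for _ in range(horizon):
        action = 1 if rng.random() < p else 0
        total_reward += float(action)  # reward 1 for action 1, else 0
        total_score += action - p      # score of a Bernoulli with logit theta
    return total_reward, total_score

def policy_gradient_estimate(theta, horizon, batch_size, rng):
    """Empirical mean of R(tau) * sum_t grad log pi over a batch of episodes."""
    total = 0.0
    for _ in range(batch_size):
        ret, score = sample_episode(theta, horizon, rng)
        total += ret * score
    return total / batch_size

rng = random.Random(0)
theta, alpha, horizon = 0.0, 0.05, 5
initial_prob = sigmoid(theta)
for _ in range(200):
    # Gradient ascent with the raw (no baseline) estimate.
    theta += alpha * policy_gradient_estimate(theta, horizon, 64, rng)
final_prob = sigmoid(theta)
print(initial_prob, final_prob)
```

On a problem this easy the probability of the rewarded action climbs toward one, but printing the individual batch estimates shows how noisy they are, which is the motivation for the baseline discussed next.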

How to Introduce a Baseline

The standard way to reduce the variance of the above gradient estimates is to insert a baseline function \(b(s_t)\) inside the expectation.

For concreteness, assume \(R(\tau) = \sum_{t=0}^{T-1}r_t\), so we have no discounted rewards. We can express the policy gradient in three equivalent, but perhaps non-intuitive ways:

\[\begin{align} \nabla_\theta \mathbb{E}_{\tau \sim \pi_\theta}\Big[R(\tau)\Big] \;&{\overset{(i)}{=}}\; \mathbb{E}_{\tau \sim \pi_\theta} \left[\left(\sum_{t=0}^{T-1}r_t\right) \cdot \nabla_\theta \left(\sum_{t=0}^{T-1}\log \pi_\theta(a_t|s_t)\right)\right] \\ &{\overset{(ii)}{=}}\; \mathbb{E}_{\tau \sim \pi_\theta} \left[ \sum_{t'=0}^{T-1} r_{t'} \sum_{t=0}^{t'}\nabla_\theta \log \pi_\theta(a_t|s_t)\right] \\ &{\overset{(iii)}{=}}\; \mathbb{E}_{\tau \sim \pi_\theta} \left[ \sum_{t=0}^{T-1} \nabla_\theta \log \pi_\theta(a_t|s_t) \left(\sum_{t'=t}^{T-1}r_{t'}\right) \right] \end{align}\]Comments:

- Step (i) follows from plugging in our chosen \(R(\tau)\) into the policy gradient we previously derived.

- Step (ii) follows from first noting that \(\nabla_\theta \mathbb{E}_{\tau}\Big[r_{t'}\Big] = \mathbb{E}_\tau\left[r_{t'} \cdot \sum_{t=0}^{t'} \nabla_\theta \log \pi_\theta(a_t|s_t)\right]\). The reason why this is true can be somewhat tricky to identify. I find it easiest to think of just re-defining \(R(\tau)\) as \(r_{t'}\) for some fixed time-step \(t'\). Then, we do the exact same computation above to get the final result, as shown in the equation of the “Computing the Raw Gradient” section. The main difference now is that since we’re considering the reward at time \(t'\), our trajectory under expectation stops at that time. More concretely, \(\nabla_\theta\mathbb{E}_{(s_0,a_0,\ldots,s_{T})}\Big[r_{t'}\Big] = \nabla_\theta\mathbb{E}_{(s_0,a_0,\ldots,s_{t'})}\Big[r_{t'}\Big]\). This is like “throwing away variables” when taking expectations due to “pushing values” through sums and summing over densities (which cancel out); I have another example later in this post which makes this explicit.

Next, we sum over both sides, for \(t' = 0,1,\ldots,T-1\). Assuming we can exchange the sum with the gradient, we get

\[\begin{align} \nabla_\theta \mathbb{E}_{\tau \sim \pi_\theta} \left[ R(\tau) \right] &= \nabla_\theta \mathbb{E}_{\tau \sim \pi_\theta} \left[ \sum_{t'=0}^{T-1} r_{t'}\right] \\ &= \sum_{t'=0}^{T-1}\nabla_\theta \mathbb{E}_{\tau^{(t')}} \Big[r_{t'}\Big] \\ &= \sum_{t'=0}^{T-1} \mathbb{E}_{\tau^{(t')}}\left[r_{t'} \cdot \sum_{t=0}^{t'} \nabla_\theta \log \pi_\theta(a_t|s_t)\right] \\ &= \mathbb{E}_{\tau \sim \pi_\theta}\left[ \sum_{t'=0}^{T-1} r_{t'} \cdot \sum_{t=0}^{t'} \nabla_\theta \log \pi_\theta(a_t|s_t)\right], \end{align}\]where \(\tau^{(t')}\) indicates the trajectory up to time \(t'\). (Full disclaimer: I’m not sure if this formalism with \(\tau\) is needed, and I think most people would do this computation without worrying about the precise expectation details.)

- Step (iii) follows from a nifty algebra trick. To simplify the subsequent notation, let \(f_t := \nabla_\theta \log \pi_\theta(a_t|s_t)\). In addition, ignore the expectation; we’ll only re-arrange the inside here. With this substitution and setup, the sum inside the expectation from Step (ii) turns out to be

\[\begin{align} r_0f_0 &+ \\ r_1f_0 &+ r_1f_1 + \\ r_2f_0 &+ r_2f_1 + r_2f_2 + \\ \cdots \\ r_{T-1}f_0 &+ r_{T-1}f_1 + r_{T-1}f_2 + \cdots + r_{T-1}f_{T-1} \end{align}\]In other words, each \(r_{t'}\) has its own row of \(f\)-values to which it gets distributed. Next, switch to the column view: instead of summing row-wise, sum column-wise. The first column is \(f_0 \cdot \left(\sum_{t=0}^{T-1}r_t\right)\). The second is \(f_1 \cdot \left(\sum_{t=1}^{T-1}r_t\right)\). And so on. Doing this means we get the desired formula after replacing \(f_t\) with its real meaning and hitting the expression with an expectation.
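Since this is exactly the kind of index juggling where typos creep in, here is a quick numerical check of the row-versus-column rearrangement. It is only a sketch: the \(f_t\) are treated as scalars and both lists are filled with arbitrary random numbers, which is enough because the identity is pure algebra.

```python
import random

rng = random.Random(0)
T = 6
r = [rng.uniform(-1.0, 1.0) for _ in range(T)]  # stand-ins for rewards r_t
f = [rng.uniform(-1.0, 1.0) for _ in range(T)]  # stand-ins for f_t (scalars here)

# Row view, as in Step (ii): each reward r_{t'} multiplies f_0, ..., f_{t'}.
row_wise = sum(r[tp] * sum(f[t] for t in range(tp + 1)) for tp in range(T))

# Column view, as in Step (iii): each f_t multiplies the rewards from t onward.
column_wise = sum(f[t] * sum(r[tp] for tp in range(t, T)) for t in range(T))

print(row_wise, column_wise)
```

The two sums agree up to floating-point rounding for any choice of `r` and `f`.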

Note: it is very easy to make a typo with these. I checked my math carefully and cross-referenced it with references online (which themselves have typos). If any readers find a typo, please let me know.

Using the above formulation, we finally introduce our baseline \(b\), which is a function of \(s_t\) (and not \(s_{t'}\), I believe). We “insert” it inside the term in parentheses:

\[\nabla_\theta \mathbb{E}_{\tau \sim \pi_\theta}[R(\tau)] = \mathbb{E}_{\tau \sim \pi_\theta} \left[ \sum_{t=0}^{T-1} \nabla_\theta \log \pi_\theta(a_t|s_t) \left(\sum_{t'=t}^{T-1}r_{t'} - b(s_t)\right) \right]\]At first glance, it doesn’t seem like this will be helpful, and one might wonder if this would cause the gradient estimate to become biased. Fortunately, it turns out that this is not a problem. This was surprising to me, because all we know is that \(b(s_t)\) is a function of \(s_t\). However, this is a bit misleading because usually we want \(b(s_t)\) to be the expected return starting at time \(t\), which means it really “depends” on the subsequent time steps. For now, though, just think of it as a function of \(s_t\).

Understanding the Baseline

In this section, I first go over why inserting \(b\) above doesn’t make our gradient estimate biased. Next, I will go over why the baseline reduces variance of the gradient estimate. These two capture the best of both worlds: staying unbiased and reducing variance. In general, any time you have an unbiased estimate and it remains so after applying a variance reduction technique, then apply that variance reduction!

First, let’s show that the gradient estimate is unbiased. We see that with the baseline, we can distribute and rearrange and get:

\[\nabla_\theta \mathbb{E}_{\tau \sim \pi_\theta}[R(\tau)] = \mathbb{E}_{\tau \sim \pi_\theta} \left[ \sum_{t=0}^{T-1} \nabla_\theta \log \pi_\theta(a_t|s_t) \left(\sum_{t'=t}^{T-1}r_{t'}\right) - \sum_{t=0}^{T-1} \nabla_\theta \log \pi_\theta(a_t|s_t) b(s_t) \right]\]Due to linearity of expectation, all we need to show is that for any single time \(t\), the expectation of \(\nabla_\theta \log \pi_\theta(a_t|s_t)\) multiplied by \(b(s_t)\) is zero. This is true because

\[\begin{align} \mathbb{E}_{\tau \sim \pi_\theta}\Big[\nabla_\theta \log \pi_\theta(a_t|s_t) b(s_t)\Big] &= \mathbb{E}_{s_{0:t},a_{0:t-1}}\Big[ \mathbb{E}_{s_{t+1:T},a_{t:T-1}} [\nabla_\theta \log \pi_\theta(a_t|s_t) b(s_t)]\Big] \\ &= \mathbb{E}_{s_{0:t},a_{0:t-1}}\Big[ b(s_t) \cdot \underbrace{\mathbb{E}_{s_{t+1:T},a_{t:T-1}} [\nabla_\theta \log \pi_\theta(a_t|s_t)]}_{E}\Big] \\ &= \mathbb{E}_{s_{0:t},a_{0:t-1}}\Big[ b(s_t) \cdot \mathbb{E}_{a_t} [\nabla_\theta \log \pi_\theta(a_t|s_t)]\Big] \\ &= \mathbb{E}_{s_{0:t},a_{0:t-1}}\Big[ b(s_t) \cdot 0 \Big] = 0 \end{align}\]Here are my usual overly-detailed comments (apologies in advance):

- Note I: this notation is similar to what I had before. The trajectory \(s_0,a_0,\ldots,a_{T-1},s_{T}\) is now represented as \(s_{0:T},a_{0:T-1}\). In addition, the expectation is split up, which is allowed. If this is confusing, think of the definition of the expectation with respect to at least two variables. We can write brackets in any appropriately enclosed location. Furthermore, we can “omit” the unnecessary variables in going from \(\mathbb{E}_{s_{t+1:T},a_{t:T-1}}\) to \(\mathbb{E}_{a_t}\) (see expression \(E\) above). Concretely, assuming we’re in discrete-land with actions in \(\mathcal{A}\) and states in \(\mathcal{S}\), this is because \(E\) evaluates to:

\[\begin{align} E &= \sum_{a_t\in \mathcal{A}}\sum_{s_{t+1}\in \mathcal{S}}\cdots \sum_{s_T\in \mathcal{S}} \underbrace{\pi_\theta(a_t|s_t)P(s_{t+1}|s_t,a_t) \cdots P(s_T|s_{T-1},a_{T-1})}_{p((a_t,s_{t+1},a_{t+1}, \ldots, a_{T-1},s_{T}))} (\nabla_\theta \log \pi_\theta(a_t|s_t)) \\ &= \sum_{a_t\in \mathcal{A}} \pi_\theta(a_t|s_t)\nabla_\theta \log \pi_\theta(a_t|s_t) \sum_{s_{t+1}\in \mathcal{S}} P(s_{t+1}|s_t,a_t) \sum_{a_{t+1}\in \mathcal{A}}\cdots \sum_{s_T\in \mathcal{S}} P(s_T|s_{T-1},a_{T-1})\\ &= \sum_{a_t\in \mathcal{A}} \pi_\theta(a_t|s_t)\nabla_\theta \log \pi_\theta(a_t|s_t) \end{align}\]This is true because of the definition of expectation, whereby we get the joint density over the entire trajectory, and then we can split it up like we did earlier with the gradient of the log probability computation. We can distribute \(\nabla_\theta \log \pi_\theta(a_t|s_t)\) all the way back to (but not beyond) the first sum over \(a_t\). Pushing sums “further back” results in a bunch of sums over densities, each of which sums to one. The astute reader will notice that this is precisely what happens with variable elimination for graphical models. (The more technical reason why “pushing values back through sums” is allowed has to do with abstract algebra properties of the sum function, which is beyond the scope of this post.)

- Note II: This proof above also works with an infinite-time horizon. In Appendix B of the Generalized Advantage Estimation paper (arXiv link), the authors do so with a proof exactly matching the above, except that \(T\) and \(T-1\) are now infinity.

- Note III: About the expectation going to zero, that’s due to a well-known fact about score functions, which are precisely the gradients of log probabilities. We went over this in my STAT 210A class last fall. It’s again the log derivative trick. Observe that:

\[\mathbb{E}_{a_t}\Big[\nabla_\theta \log \pi_\theta(a_t|s_t)\Big] = \int \frac{\nabla_\theta \pi_\theta(a_t|s_t)}{\pi_{\theta}(a_t|s_t)}\pi_{\theta}(a_t|s_t)da_t = \nabla_\theta \int \pi_{\theta}(a_t|s_t)da_t = \nabla_\theta \cdot 1 = 0\]where the penultimate step follows from how \(\pi_\theta\) is a density. This follows for all time steps, and since the gradient of the log gets distributed for each \(t\), it applies in all time steps. I switched to the continuous-land version for this, but it also applies with sums, as I just recently used in Note I.

The above shows that introducing \(b\) doesn’t cause bias.
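Here is a small discrete sketch of the fact from Note III, under the assumption of a softmax policy over four actions at one fixed state (the logits and the baseline value are arbitrary numbers I picked). For a softmax, the gradient of \(\log \pi_\theta(a|s)\) with respect to the logits is the one-hot vector for \(a\) minus the probability vector, so the expected score can be summed exactly.

```python
import math

def softmax(logits):
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    z = sum(exps)
    return [e / z for e in exps]

logits = [0.5, -1.2, 2.0, 0.1]  # illustrative policy parameters at one state
probs = softmax(logits)
num_actions = len(logits)

def score(a):
    # Gradient of log pi(a|s) with respect to the logits of a softmax policy.
    return [(1.0 if k == a else 0.0) - probs[k] for k in range(num_actions)]

# E_{a ~ pi}[grad log pi(a|s)], computed exactly by summing over actions.
expected_score = [
    sum(probs[a] * score(a)[k] for a in range(num_actions))
    for k in range(num_actions)
]

# Multiplying by any baseline value that depends only on the state keeps it at zero.
baseline_value = 7.3
baseline_term = [baseline_value * g for g in expected_score]
print(expected_score, baseline_term)
```

Every entry printed is zero up to floating-point rounding, regardless of the logits or the baseline value chosen.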

The last thing to cover is why its introduction reduces variance. I provide an approximate argument. To simplify notation, set \(R_t(\tau) = \sum_{t'=t}^{T-1}r_{t'}\). We focus on the inside of the expectation (of the gradient estimate) to analyze the variance. The technical reason for this is that expectations are technically constant (and thus have variance zero) but in practice we have to approximate the expectations with trajectories, and that has high variance.

The variance is approximated as:

\[\begin{align} {\rm Var}\left(\sum_{t=0}^{T-1}\nabla_\theta \log \pi_\theta(a_t|s_t) (R_t(\tau)-b(s_t))\right)\;&\overset{(i)}{\approx}\; \sum_{t=0}^{T-1} \mathbb{E}_\tau\left[\Big(\nabla_\theta \log \pi_\theta(a_t|s_t) (R_t(\tau)-b(s_t))\Big)^2\right] \\ \;&{\overset{(ii)}{\approx}}\; \sum_{t=0}^{T-1} \mathbb{E}_\tau \left[\Big(\nabla_\theta \log \pi_\theta(a_t|s_t)\Big)^2\right]\mathbb{E}_\tau\left[\Big(R_t(\tau) - b(s_t)\Big)^2\right] \end{align}\]Approximation (i) is because we are approximating the variance of a sum by computing the sum of the variances. This is not true in general, but if we can assume this, then by the definition of the variance \({\rm Var}(X) := \mathbb{E}[X^2]-(\mathbb{E}[X])^2\), we are left with the \(\mathbb{E}[X^2]\) term since we already showed that introducing the baseline doesn’t cause bias. Approximation (ii) is because we assume independence among the values involved in the expectation, and thus we can factor the expectation.

Finally, we are left with the term \(\mathbb{E}_{\tau} \left[\Big(R_t(\tau) - b(s_t)\Big)^2\right]\). If we are able to optimize our choice of \(b(s_t)\), then this is a least squares problem, and it is well known that the optimal choice of \(b(s_t)\) is to be the expected value of \(R_t(\tau)\). In fact, that’s why policy gradient researchers usually want \(b(s_t) \approx \mathbb{E}[R_t(\tau)]\) to approximate the expected return starting at time \(t\), and that’s why in the vanilla policy gradient algorithm we have to re-fit the baseline estimate each time to make it as close to the expected return \(\mathbb{E}[R_t(\tau)]\). At last, I understand.
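To see the effect of the baseline in numbers, here is a sketch on a one-step problem that I invented for this purpose: a single state, two actions with rewards 10 and 11, and a Bernoulli policy with logit `theta`. Because there are only two outcomes, the mean and variance of the single-sample estimate \(\nabla_\theta \log \pi_\theta(a) \cdot (r - b)\) can be computed exactly. It is only an illustration of the claim, not a proof of the approximate argument above.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

theta = 0.4
p = sigmoid(theta)            # probability of action 1
rewards = {0: 10.0, 1: 11.0}  # illustrative rewards; both are large and positive
action_probs = {0: 1.0 - p, 1: p}

def estimator_mean_and_variance(baseline):
    """Exact mean and variance of score(a) * (r(a) - baseline) under the policy."""
    mean, second_moment = 0.0, 0.0
    for a, prob in action_probs.items():
        g = (a - p) * (rewards[a] - baseline)  # score of a Bernoulli is a - p
        mean += prob * g
        second_moment += prob * g * g
    return mean, second_moment - mean * mean

expected_reward = sum(action_probs[a] * rewards[a] for a in action_probs)
mean_raw, var_raw = estimator_mean_and_variance(0.0)
mean_base, var_base = estimator_mean_and_variance(expected_reward)
print(mean_raw, var_raw)
print(mean_base, var_base)
```

Both versions have the same mean, namely the true gradient \(p(1-p)(11 - 10)\), but subtracting the expected reward shrinks the variance dramatically because the estimate no longer carries the large constant offset in the rewards.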

How accurate are these approximations in practice? My intuition is that they are actually fine, because recent advances in reinforcement learning algorithms, such as A3C, focus on the problem of breaking correlation among samples. If the correlation among samples is broken, then Approximation (i) becomes better, because I think the samples \(s_0,a_0,\ldots,a_{T-1},s_{T}\) are no longer generated from the same trajectory.

Well, that’s my intuition. If anyone else has a better way of describing it, feel free to let me know in the comments or by email.

Discount Factors

So far, we have assumed we wanted to optimize the expected return, or the expected sum of rewards. However, if you’ve studied value iteration and policy iteration, you’ll remember that we usually use discount factors \(\gamma \in (0,1]\). These empirically work well because the effect of an action many time steps later is likely to be negligible compared to other actions. Thus, it may not make sense to try and include raw distant rewards in our optimization problem. We therefore often impose a discount as follows:

\[\begin{align} \nabla_\theta \mathbb{E}_{\tau \sim \pi_\theta}[R(\tau)] &= \mathbb{E}_{\tau \sim \pi_\theta} \left[ \sum_{t=0}^{T-1} \nabla_\theta \log \pi_\theta(a_t|s_t) \left(\sum_{t'=t}^{T-1}r_{t'} - b(s_t)\right) \right] \\ &\approx \mathbb{E}_{\tau \sim \pi_\theta} \left[ \sum_{t=0}^{T-1} \nabla_\theta \log \pi_\theta(a_t|s_t) \left(\sum_{t'=t}^{T-1}\gamma^{t'-t}r_{t'} - b(s_t)\right) \right] \end{align}\]where the \(\gamma^{t'-t}\) serves as the discount, starting from 1, then getting smaller as time passes. (The first line above is a repeat of the policy gradient formula that I describe earlier.) As this is not exactly the “desired” gradient, this is an approximation, but it’s a reasonable one. This time, we now want our baseline to satisfy \(b(s_t) \approx \mathbb{E}[r_t + \gamma r_{t+1} + \cdots + \gamma^{T-1-t} r_{T-1}]\).
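The inner sum \(\sum_{t'=t}^{T-1}\gamma^{t'-t}r_{t'}\) is usually computed for all \(t\) at once with a single backward pass over the rewards. Here is a minimal sketch of that computation; the function name is my own, and the recursion it relies on is \(G_t = r_t + \gamma G_{t+1}\) with \(G_T = 0\).

```python
def discounted_rewards_to_go(rewards, gamma):
    """Return [G_0, ..., G_{T-1}] with G_t = sum_{t' >= t} gamma^(t'-t) * r_{t'}."""
    out = [0.0] * len(rewards)
    running = 0.0
    # Walk backwards so each G_t reuses G_{t+1}.
    for t in reversed(range(len(rewards))):
        running = rewards[t] + gamma * running
        out[t] = running
    return out

print(discounted_rewards_to_go([1.0, 0.0, 2.0], 0.5))  # [1.5, 1.0, 2.0]
print(discounted_rewards_to_go([1.0, 0.0, 2.0], 1.0))  # [3.0, 2.0, 2.0]
```

Setting `gamma` to one recovers the undiscounted reward-to-go from the first line of the equation above.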

Advantage Functions

In this final section, we replace the policy gradient formula with the following value functions:

\[Q^\pi(s,a) = \mathbb{E}_{\tau \sim \pi_\theta}\left[\sum_{t=0}^{T-1} r_t \;\Bigg|\; s_0=s,a_0=a\right]\] \[V^\pi(s) = \mathbb{E}_{\tau \sim \pi_\theta}\left[\sum_{t=0}^{T-1} r_t \;\Bigg|\; s_0=s\right]\]Both of these should be familiar from basic AI; see the CS 188 notes from Berkeley if this is unclear. There are also discounted versions, which we can denote as \(Q^{\pi,\gamma}(s,a)\) and \(V^{\pi,\gamma}(s)\). In addition, we can also consider starting at any given time step, as in \(Q^{\pi,\gamma}(s_t,a_t)\) which provides the expected (discounted) return assuming that at time \(t\), our state-action pair is \((s_t,a_t)\).

What might be new is the advantage function. For the undiscounted version, it is defined simply as:

\[A^\pi(s,a) = Q^\pi(s,a) - V^\pi(s)\]with a similar definition for the discounted version. Intuitively, the advantage tells us how much better action \(a\) would be compared to the return based on an “average” action.
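As a tiny worked example of the definition, suppose we already know \(Q^\pi(s,a)\) for three actions at one state, along with the policy’s action probabilities there (all numbers below are made up). The sketch uses the standard identity \(V^\pi(s) = \sum_a \pi(a|s) Q^\pi(s,a)\) and then subtracts to get the advantages.

```python
q_values = [1.0, 3.0, 2.0]      # hypothetical Q^pi(s, a) for a = 0, 1, 2
policy_probs = [0.2, 0.5, 0.3]  # hypothetical pi(a|s) at the same state

# V^pi(s) is the policy-weighted average of the Q-values.
value = sum(p * q for p, q in zip(policy_probs, q_values))

# A^pi(s, a) = Q^pi(s, a) - V^pi(s)
advantages = [q - value for q in q_values]

# The advantage averages to zero under the policy itself.
mean_advantage = sum(p * a for p, a in zip(policy_probs, advantages))
print(value, advantages, mean_advantage)
```

Here only action 1 has a positive advantage, meaning it does better than what the policy achieves on average from this state, and the policy-weighted average of the advantages is zero by construction.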

The above definitions look very close to what we have in our policy gradient formula. In fact, we can claim the following:

\[\begin{align} \nabla_\theta \mathbb{E}_{\tau \sim \pi_\theta}[R(\tau)] &= \mathbb{E}_{\tau \sim \pi_\theta} \left[ \sum_{t=0}^{T-1} \nabla_\theta \log \pi_\theta(a_t|s_t) \left(\sum_{t'=t}^{T-1}r_{t'} - b(s_t)\right) \right] \\ &{\overset{(i)}{=}}\; \mathbb{E}_{\tau \sim \pi_\theta} \left[ \sum_{t=0}^{T-1} \nabla_\theta \log \pi_\theta(a_t|s_t) \cdot \Big(Q^{\pi}(s_t,a_t)-V^\pi(s_t)\Big) \right] \\ &{\overset{(ii)}{=}}\; \mathbb{E}_{\tau \sim \pi_\theta} \left[ \sum_{t=0}^{T-1} \nabla_\theta \log \pi_\theta(a_t|s_t) \cdot A^{\pi}(s_t,a_t) \right] \\ &{\overset{(iii)}{\approx}}\; \mathbb{E}_{\tau \sim \pi_\theta} \left[ \sum_{t=0}^{T-1} \nabla_\theta \log \pi_\theta(a_t|s_t) \cdot A^{\pi,\gamma}(s_t,a_t) \right] \end{align}\]In (i), we replace terms with their expectations. This is not generally valid to do, but it should work in this case. My guess is that if you start from the second line above (after the “(i)”) and plug in the definition of the expectation inside and rearrange terms, you can get the first line. However, I have not had the time to check this in detail and it takes a lot of space to write out the expectation fully. The conditioning with the value functions makes it a bit messy and thus the law of iterated expectation may be needed.

Also from line (i), we notice that the value function is a baseline, and hence we can add it there without changing the unbiased-ness of the expectation. Then lines (ii) and (iii) are just for the advantage function. The implication of this formula is that the problem of policy gradients, in some sense, reduces to finding good estimates \(\hat{A}^{\pi,\gamma}(s_t,a_t)\) of the advantage function \(A^{\pi,\gamma}(s_t,a_t)\). That is precisely the topic of the paper Generalized Advantage Estimation.

Concluding Remarks

Hopefully, this is a helpful, self-contained, bare-minimum introduction to policy gradients. I am trying to learn more about these algorithms, and going through the math details is helpful. This will also make it easier for me to understand the increasing number of research papers that are using this notation.

I also have to mention: I remember a few years ago during the first iteration of CS 294-112 that I had no idea how policy gradients worked. Now, I think I have become slightly more enlightened.

Acknowledgements: I thank John Schulman for making his notes publicly available.

Update April 19, 2017: I have code for vanilla policy gradients in my reinforcement learning GitHub repository.

Keeping Track of Research Articles: My Paper Notes Repository

The number of research papers in Artificial Intelligence has reached unmanageable proportions. Conferences such as ICML, NIPS, ICLR, and others are getting record numbers of paper submissions. In addition, tens of AI-related papers get uploaded to arXiv every weekday. With all these papers, it can be easy to feel lost and overwhelmed.

Like many researchers, I think I do not read enough research papers. This year, I resolved to change that, so I started an open-source GitHub repository called “Paper Notes” where I list papers that I’ve read along with my personal notes and summaries, if any. Papers without such notes are currently on my TODO radar.

After almost three months, I’m somewhat pleased with my reading progress. There are a healthy number of papers (plus notes) listed, arranged by subject matter and then further arranged by year. Not enough for me, but certainly not terrible either.

I was inspired to make this by seeing Denny Britz’s similar repository, along with Adrian Colyer’s blog. My repository is similar to Britz’s, though my aim is not to list all papers in Deep Learning, but to write down the ones that I actually plan to read at some point. (I see other repositories where people simply list Deep Learning papers without notes, which seems pretty pointless to me.) Colyer’s blog posts represent the kind of notes that I’d like to take for each paper, but I know that I can’t dedicate that much time to fine-tuning notes.

Why did I choose GitHub as the backend for my paper management, rather than something like Mendeley? First, GitHub is the default place where (pretty much) everyone in AI puts their open-source stuff: blogs, code, you name it. I’m already used to GitHub, so Mendeley would have to provide some serious benefit for me to switch over. I also don’t need to use advanced annotation and organizing materials, given that the top papers are easily searchable online (including their BibTeX references). In addition, by making my Paper Notes repository online, I can show this as evidence to others that I’m reading papers. Maybe this will even impress a few folks, and I say this only because everyone wants to be noticed in some way; that’s partly Colyer’s inspiration for his blog. So I think, on balance, it will be useful for me to keep updating this repository.

Update December 29, 2021: I now don’t use this repository very much, if at all. Instead, since I got an iPad two years ago, I’ve started to rely a lot on Notability which lets me download and annotate PDFs with the iPad pen. So far this is a slightly easier system for me to track papers that I read. However, I will keep the GitHub repository available in case it is of use to readers.

What Biracial People Know

There’s an opinion piece in the New York Times by Moises Velasquez-Manoff which talks about (drum roll please) biracial people. As he mentions:

Multiracials make up an estimated 7 percent of Americans, according to the Pew Research Center, and they’re predicted to grow to 20 percent by 2050.

Thus, I suspect that sometime in the next few decades, we will start talking about race in terms of precise racial percentages, such as “100 percent White” or in rarer cases, “25 percent White, 25 percent Asian, 25 percent Black, and 25 percent Native American.” (Incidentally, I’m not sure why the article uses “Biracial” when “Multiracial” would clearly have been a more appropriate term; it was likely due to the Barack Obama factor.)

The phrase “precise racial percentages” is misleading. Since all humans came from the same ancestor, at some point in history we must have been “one race.” For the sake of defining these racial percentages, we can take a date, say 4000BC, when, presumably, the various races were sufficiently different, ensconced in their respective geographic regions, and when interracial marriages (or rape) were at a minimum. All humans alive at that point thus get a “100 percent [insert_race_here]” attached to them, and we do the arithmetic from there.

What usually happens in practice, though, is that we often default to describing one part of one race, particularly with people who are \(X\) percent Black, where \(X > 0\). This is a relic of the embarrassing “One Drop Rule” the United States had, but for now it’s probably — well, I hope — more for self-selecting racial identity.

Listing precise racial percentages would help us better identify people who are not easy to immediately peg in racial categories, which will increasingly become an issue as more and more multiracial people like me blur the lines between the races. In fact, this is already a problem for me even with single-race people: I sometimes cannot distinguish between Hispanics versus Whites. For instance, I thought Ted Cruz and Marco Rubio were 100 percent White.

Understanding race is also important when considering racial diversity and various ethical or sensitive questions over who should get “preferences.” For instance, I wonder if people label me as a “privileged white male” or if I get a pass for being biracial? Another question: for a job at a firm which has had a history of racial discrimination and is trying to make up for that, should the applicant who is 75 percent Black, 25 percent White, get a hair’s preference versus someone who is 25 percent Black and 75 percent White? Would this also apply if they actually have very similar skin color?

In other words, does one weigh more towards the looks or the precise percentages? I think the precise percentages method is the way schools, businesses, and government operate, despite how this isn’t the case in casual conversations.

Anyway, these are some of the thoughts that I have as we move towards a more racially diverse society, as multiracial people cannot have single-race children outside of adoption.

Back to the article: as one would expect, it discusses the benefits of racial diversity. I can agree with the following passage:

Social scientists find that homogeneous groups like [Donald Trump’s] cabinet can be less creative and insightful than diverse ones. They are more prone to groupthink and less likely to question faulty assumptions.

The caveat is that this assumes the people involved are equally qualified; a racially homogeneous (in whatever race), but extremely well-educated cabinet would be much better than a racially diverse cabinet where no one even finished high school. But controlling for quality, I can agree.

Diversity also benefits individuals, as the author notes. It is here where Mr. Velasquez-Manoff points out that Barack Obama was not just Black, but also biracial, which may have benefited his personal development. Multiracials make up a large fraction of the population in racially diverse Hawaii, where Obama was born (albeit, probably with more Asian-White overlap).

Yes, I agree that diversity is important for a variety of reasons. It is not easy, however:

It’s hard to know what to do about this except to acknowledge that diversity isn’t easy. It’s uncomfortable. It can make people feel threatened. “We promote diversity. We believe in diversity. But diversity is hard,” Sophie Trawalter, a psychologist at the University of Virginia, told me.

That very difficulty, though, may be why diversity is so good for us. “The pain associated with diversity can be thought of as the pain of exercise,” Katherine Phillips, a senior vice dean at Columbia Business School, writes. “You have to push yourself to grow your muscles.”

I cannot agree more.

Moving on:

Closer, more meaningful contact with those of other races may help assuage the underlying anxiety. Some years back, Dr. Gaither of Duke ran an intriguing study in which incoming white college students were paired with either same-race or different-race roommates. After four months, roommates who lived with different races had a more diverse group of friends and considered diversity more important, compared with those with same-race roommates. After six months, they were less anxious and more pleasant in interracial interactions.

Ouch, this felt like a blindsiding attack, and is definitely my main gripe with this article. In college, I had two roommates, both of whom have a different racial makeup than me. They both seemed to be relatively popular and had little difficulty mingling with a diverse group of students. Unfortunately, I certainly did not have a “diverse group of friends.” After all, if there were a prize in college for “least popular student” I would be a perennial contender. (As incredible as it may sound, in high school, where things were worse for me, I can remember a handful of people who might have been even lower on the social hierarchy.)

Well, I guess what I want to say is that, this attack notwithstanding, Mr. Velasquez-Manoff’s article brings up interesting and reasonably accurate points about biracial people. At the very least, he writes about concepts which are sometimes glossed over or under-appreciated nowadays in our discussions about race.

Understanding Generative Adversarial Networks

Over the last few weeks, I’ve been learning more about some mysterious thing called Generative Adversarial Networks (GANs). GANs originally came out of a 2014 NIPS paper (read it here) and have had a remarkable impact on machine learning. I’m surprised that, until I was the TA for Berkeley’s Deep Learning class last semester, I had never heard of GANs before.1

They certainly haven’t gone unnoticed in the machine learning community, though. Yann LeCun, one of the leaders in the Deep Learning community, had this to say about them during his Quora session on July 28, 2016:

The most important one, in my opinion, is adversarial training (also called GAN for Generative Adversarial Networks). This is an idea that was originally proposed by Ian Goodfellow when he was a student with Yoshua Bengio at the University of Montreal (he since moved to Google Brain and recently to OpenAI).

This, and the variations that are now being proposed is the most interesting idea in the last 10 years in ML, in my opinion.

If he says something like that about GANs, then I have no excuse for not learning about them. Thus, I read what is probably the highest-quality general overview available nowadays: Ian Goodfellow’s tutorial on arXiv, which he then presented in some form at NIPS 2016. This was really helpful for me, and I hope that later, I can write something like this (but on another topic in AI).

I won’t repeat what GANs can do here. Rather, I’m more interested in knowing how GANs are trained. Following now are some of the most important insights I gained from reading the tutorial:

-

Major Insight 1: the discriminator’s loss function is the cross entropy loss function. To understand this, let’s suppose we’re doing some binary classification with some trainable function \(D: \mathbb{R}^n \to [0,1]\) that we wish to optimize, where \(D\) indicates the estimated probability of some data point \(x_i \in \mathbb{R}^n\) being in the first class. To get the predicted probability of being in the second class, we just do \(1-D(x_i)\). The output of \(D\) must therefore be constrained in \([0,1]\), which is easy to do if we tack on a sigmoid layer at the end. Furthermore, let \((x_i,y_i) \in (\mathbb{R}^n, \{0,1\})\) be the input-label pairing for training data points.

The cross entropy between two distributions, which we’ll call \(p\) and \(q\), is defined as

\[H(p,q) := -\sum_i p_i \log q_i\]where \(p\) and \(q\) denote a “true” and an “empirical/estimated” distribution, respectively. Both are discrete distributions, hence we can sum over their individual components, denoted with \(i\). (We would need to have an integral instead of a sum if they were continuous.) Above, when I refer to a “distribution,” it means with respect to a single training data point, and not the “distribution of training data points.” That’s a different concept.

To apply this loss function to the current binary classification task, for (again) a single data point \((x_i,y_i)\), we define its “true” distribution as \(\mathbb{P}[y_i = 0] = 1\) if \(y_i=0\), or \(\mathbb{P}[y_i = 1] = 1\) if \(y_i=1\). Putting in 2-D vector form, it’s either \([1,0]\) or \([0,1]\). Intuitively, we know for sure which class this belongs to (because it’s part of the training data), so it makes sense for a probability distribution to be a “one-hot” vector.

Thus, for one data point \(x_1\) and its label, we get the following loss function, where here I’ve changed the input to be more precise:

\[H((x_1,y_1),D) = - y_1 \log D(x_1) - (1-y_1) \log (1-D(x_1))\]Let’s look at the above function. Notice that only one of the two terms is going to be zero, depending on the value of \(y_1\), which makes sense since it’s defining a distribution which is either \([0,1]\) or \([1,0]\). The other part is the estimated distribution from \(D\). In both cases (the true and predicted distributions) we are encoding a 2-D distribution with one value, which lets us treat \(D\) as a real-valued function.

That was for one data point. Summing over the entire dataset of \(N\) elements, we get something that looks like this:

\[H((x_i,y_i)_{i=1}^N,D) = - \sum_{i=1}^N y_i \log D(x_i) - \sum_{i=1}^N (1-y_i) \log (1-D(x_i))\]In the case of GANs, we can say a little more about what these terms mean. In particular, our \(x_i\)s only come from two sources: either \(x_i \sim p_{\rm data}\), the true data distribution, or \(x_i = G(z)\) where \(z \sim p_{\rm generator}\), the generator’s distribution, based on some input code \(z\). It might be \(z \sim {\rm Unif}[0,1]\) but we will leave it unspecified.

In addition, we want exactly half of the data to come from each of these two sources.

To apply this to the sum above, we need to encode this probabilistically, so we replace the sums with expectations, the \(y_i\) labels with \(1/2\), and we can furthermore replace the \(\log (1-D(x_i))\) term with \(\log (1-D(G(z)))\) under some sampled code \(z\) for the generator. We get

\[H((x_i,y_i)_{i=1}^\infty,D) = - \frac{1}{2} \mathbb{E}_{x \sim p_{\rm data}}\Big[ \log D(x)\Big] - \frac{1}{2} \mathbb{E}_{z} \Big[\log (1-D(G(z)))\Big]\]This is precisely the loss function for the discriminator, \(J^{(D)}\).
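To make the discriminator loss above concrete, here is a minimal NumPy sketch that estimates it from samples. The function name `discriminator_loss`, the `eps` guard against `log(0)`, and the sample values are my own illustrative assumptions; they are not from Goodfellow's tutorial.

```python
import numpy as np

def discriminator_loss(d_real, d_fake, eps=1e-12):
    """Monte Carlo estimate of the discriminator's cross-entropy loss.

    d_real: D(x) evaluated on samples x ~ p_data (values in [0, 1]).
    d_fake: D(G(z)) evaluated on generator samples (values in [0, 1]).
    eps:    small constant (an assumption here) to avoid log(0).
    """
    real_term = -0.5 * np.mean(np.log(d_real + eps))
    fake_term = -0.5 * np.mean(np.log(1.0 - d_fake + eps))
    return real_term + fake_term

# Hypothetical discriminator outputs, for illustration only.
d_real = np.array([0.9, 0.8, 0.95])   # confident that real samples are real
d_fake = np.array([0.1, 0.2, 0.05])   # confident that fake samples are fake

loss_confident = discriminator_loss(d_real, d_fake)
# A discriminator that always outputs 0.5 is guessing at chance.
loss_chance = discriminator_loss(np.full(3, 0.5), np.full(3, 0.5))
```

A discriminator that always outputs 0.5 gets a loss of \(\log 2\), and any discriminator that separates the two sources better than chance should score lower than that.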

-

Major Insight 2: understanding how gradient saturation may or may not adversely affect training. Gradient saturation is a general problem when gradients are too small (i.e. close to zero) to perform any learning. See Stanford’s CS 231n notes on gradient saturation here for more details. In the context of GANs, gradient saturation may happen due to poor design of the generator’s loss function, so this “major insight” of mine is also based on understanding the tradeoffs among different loss functions for the generator. This design, incidentally, is where we can be creative; the discriminator needs the cross entropy loss function above since it has a very specific function (to discriminate between two classes) and the cross entropy is the “best” way of doing this.

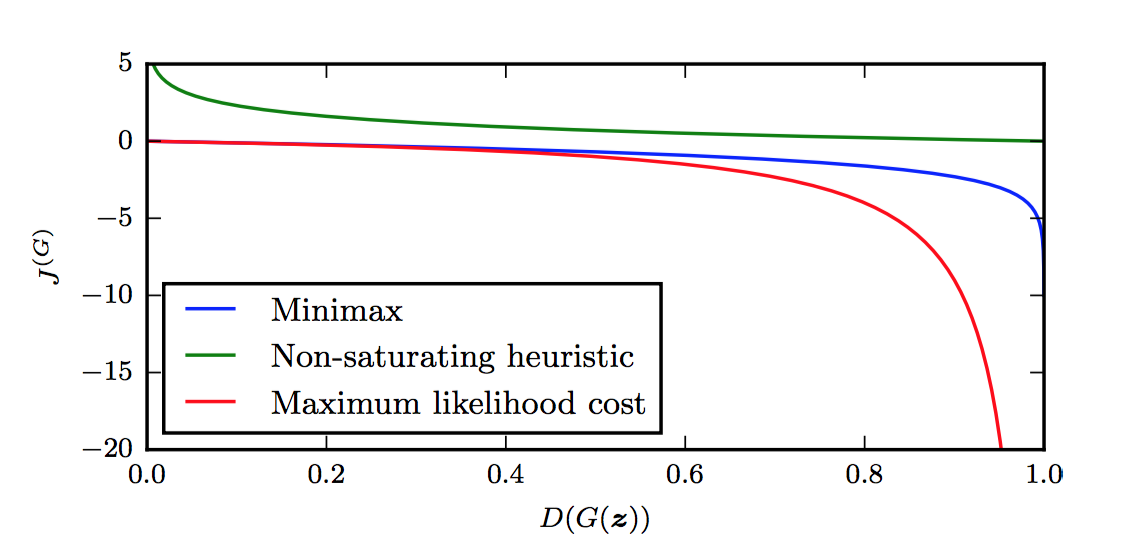

Using Goodfellow’s notation, we have the following candidates for the generator loss function, as discussed in the tutorial. The first is the minimax version:

\[J^{(G)} = -J^{(D)} = \frac{1}{2} \mathbb{E}_{x \sim p_{\rm data}}\Big[ \log D(x)\Big] + \frac{1}{2} \mathbb{E}_{z} \Big[\log (1-D(G(z)))\Big]\]The second is the heuristic, non-saturating version:

\[J^{(G)} = -\frac{1}{2}\mathbb{E}_z\Big[\log D(G(z))\Big]\]Finally, the third is the maximum likelihood version:

\[J^{(G)} = -\frac{1}{2}\mathbb{E}_z\left[e^{\sigma^{-1}(D(G(z)))}\right]\]What are the advantages and disadvantages of these generator loss functions? For the minimax version, it’s simple and allows for easier theoretical results, but in practice it’s not that useful, due to gradient saturation. As Goodfellow notes:

In the minimax game, the discriminator minimizes a cross-entropy, but the generator maximizes the same cross-entropy. This is unfortunate for the generator, because when the discriminator successfully rejects generator samples with high confidence, the generator’s gradient vanishes.

As suggested in Chapter 3 of Michael Nielsen’s excellent online book, the cross-entropy is a great loss function since it is designed in part to accelerate learning and avoid gradient saturation only up to when the classifier is correct (since we don’t want the gradient to move in that case!).

I’m not sure how to clearly describe this formally. For now, I will defer to Figure 16 in Goodfellow’s tutorial (see the top of this blog post), which nicely shows the value of \(J^{(G)}\) as a function of the discriminator’s output, \(D(G(z))\). Indeed, when the discriminator is winning, we’re at the left side of the graph, since the discriminator outputs the probability of the sample being from the true data distribution.

By the way, why is \(J^{(G)} = -J^{(D)}\) only a function of \(D(G(z))\) as suggested by the figure? What about the other term in \(J^{(D)}\)? Notice that of the two terms in the loss function, the first one is only a function of the discriminator’s parameters! The second part, which uses the \(D(G(z))\) term, depends on both \(D\) and \(G\). Hence, for the purposes of performing gradient descent with respect to the parameters of \(G\), only the second term in \(J^{(D)}\) matters; the first term is a constant that disappears after taking derivatives \(\nabla_{\theta^{(G)}}\).

The figure makes it clear that the generator will have a hard time doing any sort of gradient update at the left portion of the graph, since the derivatives are close to zero. The problem is that the left portion of the graph represents the most common case when starting the game. The generator, after all, starts out with basically random parameters, so the discriminator can easily tell what is real and what is fake.2

Let’s move on to the other two generator cost functions. The second one, the heuristically-motivated one, uses the idea that the generator’s gradient only depends on the second term in \(J^{(D)}\). Instead of flipping the sign of \(J^{(D)}\), they instead flip the target: changing \(\log (1-D(G(z)))\) to \(\log D(G(z))\). In other words, the “sign flipping” happens at a different part, so the generator still optimizes something “opposite” of the discriminator. From this re-formulation, it appears from the figure above that \(J^{(G)}\) now has desirable gradients in the left portion of the graph. Thus, the advantage here is that the generator gets a strong gradient signal so that it can quickly improve. The downside is that it’s not as easy to analyze, but who cares?

Finally, the maximum likelihood cost function has the advantage of being motivated based on maximum likelihood, which by itself has a lot of desirable properties. Unfortunately, the figure above shows that it has a flat slope in the left portion, though it seems to be slightly better than the minimax version since it decreases rapidly “sooner.” Though that might not be an “advantage,” since Goodfellow warns about high variance. That might be worth thinking about in more detail.
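As a rough numerical check on the saturation story, the sketch below evaluates the derivative of each of the three per-sample generator losses. One assumption of mine: I take the derivative with respect to the discriminator's pre-sigmoid logit \(a\), where \(D(G(z)) = \sigma(a)\), rather than with respect to \(D(G(z))\) itself as in the figure. The function name `generator_loss_grads` is also hypothetical.

```python
import numpy as np

def sigmoid(a):
    return 1.0 / (1.0 + np.exp(-a))

def generator_loss_grads(a):
    """Derivative of each per-sample generator loss w.r.t. the logit a,
    where D(G(z)) = sigmoid(a). Derived by hand from the three formulas."""
    d = sigmoid(a)
    return {
        # d/da [ 0.5 * log(1 - sigmoid(a)) ] = -0.5 * sigmoid(a)
        "minimax": -0.5 * d,
        # d/da [ -0.5 * log(sigmoid(a)) ] = -0.5 * (1 - sigmoid(a))
        "non_saturating": -0.5 * (1.0 - d),
        # sigma^{-1}(D(G(z))) = a, so d/da [ -0.5 * exp(a) ] = -0.5 * exp(a)
        "max_likelihood": -0.5 * np.exp(a),
    }

# Discriminator confidently rejects the sample: D(G(z)) is close to 0.
grads_rejected = generator_loss_grads(-6.0)
# Discriminator is fooled: D(G(z)) is close to 1.
grads_fooled = generator_loss_grads(6.0)

for name in grads_rejected:
    print(name, grads_rejected[name], grads_fooled[name])
```

Under this setup, when the discriminator is winning (large negative logit), the minimax and maximum likelihood gradients are both tiny while the non-saturating one stays near \(-1/2\). The maximum likelihood gradient also grows exponentially in the logit, which seems consistent with the high-variance warning, though I have not checked that connection carefully.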

One last note: the function \(J^{(G)}\), at least for the three cost functions here, does not depend directly on \(x\) at all! That’s interesting … and in fact, Goodfellow argues that makes GANs resistant to overfitting since it can’t copy from \(x\).

I wish more tutorials like this existed for other AI concepts. I particularly enjoyed the three exercises and the solutions within this tutorial on GANs. I have more detailed notes here in my Paper Notes GitHub repository (I should have started this repository back in 2013). I highly recommend this tutorial to anyone wanting to know more about GANs.

Update March 26, 2020: made a few clarifications.

-

Ian Goodfellow, the lead author on the GANs paper, was a guest lecturer for the class, where (obviously) he talked about GANs. ↩

-

Actually, the discriminator also starts out random, right? I think the discriminator has an easier job, though, since supervised learning is easier than generating realistic images (I mean, c’mon??) so perhaps the discriminator simply learns faster, and the generator has to spend a lot of time catching up. ↩

My Thoughts on CS 231n Being Forced To Take Down Videos

CS 231n: Convolutional Neural Networks for Visual Recognition is, in my biased opinion, one of the most important and thrilling courses offered by Stanford University. It has been taught twice so far and will appear again in the upcoming Spring quarter.

Due to its popularity, the course lectures for the second edition (Winter 2016) were videotaped and released online. This is not unusual among computer science graduate level courses due to high demand both inside and outside the university.

Unfortunately, as discussed in this rather large reddit discussion thread, Andrej Karpathy (one of the three instructors) was forced to pull down the lecture videos. He later clarified on his Twitter account that the reason had to do with the lack of captioning/subtitles in the lecture videos, which relates to a news topic I blogged about just over two years ago.

If you browse the reddit thread, you will see quite a lot of unhappy students. I just joined reddit and I was hoping to make a comment there, but reddit disables posting after six months. And after thinking about it, I thought it would make more sense to write some brief thoughts here instead.

To start, I should state upfront that I have no idea what happened beyond the stuff we can all read online. I don’t know who made the complaint, what the course staff did, etc.

Here’s my stance regarding class policies on watching videos:

If a class requires watching videos for whatever reason, then that video should have subtitles. Otherwise, no such action is necessary, though the course staff should attempt as much as is reasonable to have subtitles for all videos.

I remember two times when I had to face this problem of watching a non-subtitled video as a homework assignment: in an introductory Women’s, Gender, and Sexuality Studies course and an Africana Studies class about black athletes. For the former, we were assigned to watch a video about a transgender couple, and for the latter, the video was about black golfers. In both cases, the professors gave me copies of the movie (other students didn’t get these) and I watched one in a room myself with the volume cranked up and the other one with another person who told me what was happening.

Is that ideal? Well, no. To (new) readers of this blog, welcome to the story of my life!

More seriously, was I supposed to do something about it? The professors didn’t make the videos, which were a tiny portion of the overall courses. I didn’t want to get all up in arms about this, so in both cases, I brought it up with them and they understood my situation (and apologized).

Admittedly, my brief stance above is incomplete and belies a vast gray area. What if students are given the option of doing one of two “required” assignments: watching a video or reading a book? That’s a gray area, though I would personally lean that towards “required viewing” and thus “required subtitles.”

Class lecture videos also fall in a gray area. They are not required viewing, because students should attend lectures in person. Unfortunately, the lack of subtitles for these videos definitely puts deaf and hard of hearing students like myself at a disadvantage. I’ve lost count of the number of lectures that I wish I could have re-watched, but it is extraordinarily difficult for me to do so for non-subtitled videos.

Ultimately, however, as long as I can attend lectures and understand some of the material, I do not worry about whether lecture videos have subtitles. Just about every videotaped class that I have taken did not have subtitled lecture videos, with one exception: CS 267 from Spring 2016, after I had negotiated about it with Berkeley’s DSP.

Heck, the CS 294-129 class which I TA-ed for last semester — which is based on CS 231n! — had lecture videos. Were there captions? Nope.

Am I frustrated? Yes, but it’s understandable frustration due to the cost of adding subtitles. As a similar example, I’m frustrated at the identity politics practiced by the Democratic party, but it’s understandable frustration due to what political science instructs us to do, which is why I’m not planning to jump ship to another party.

Thus in my case, if I were a student in CS 231n, I would not be inclined to pressure the staff to pull the videos down. Again, this comes with the obvious caveat; I don’t know the situation and it might have been worse than I imagine.

As this discussion would imply, I don’t like pulling down lecture videos as “collateral damage”.1 I worry, however, if that’s in part because I’m too timid. Hypothetically and broadly speaking, if I have to take out my frustration (e.g. with lawsuits) on certain things, I don’t want to do this for something like lecture videos, which would make a number of folks angry at me, whether or not they openly express it.

On a more positive note … it turns out that, actually, the CS 231n lecture videos are online! I’m not sure why, but I’m happy. Using YouTube’s automatic captions, I watched one of the lectures and finally understood a concept that was critical and essential for me to know when I was writing my latest technical blog post.

Moreover, the automatic captions are getting better and better each year. They work pretty well on Andrej, who has a slight accent (Russian?). I dislike attending research talks if I don’t understand what’s going on, but given that so many are videotaped these days, whether at Berkeley or at conferences, maybe watching them offline is finally becoming a viable alternative.

-

In another case where lecture videos had to be removed, consider MIT’s Open Courseware and Professor Walter Lewin’s famous physics lectures. MIT removed the videos after it was found that Lewin had sexually harassed some of his students. Lewin’s harassment disgusted me, but I respectfully disagreed with MIT’s position about removing his videos, siding with then-MIT professor Scott Aaronson. In an infamous blog post, Professor Aaronson explained why he opposed the removal of the videos, which subsequently caused him to be the subject of a hate-rage/attack. Consequently, I am now a permanent reader of his blog. ↩

These Aren't Your Father's Hearing Aids

I am now wearing Oticon Dynamo hearing aids. The good news is that I’ve run many times with them and so far have not had issues with water resistance.

However, I wanted to bring up a striking point that made me realize how remarkably our world has changed in the last few years.

A few months ago, when I was first fitted with the hearing aids, my audiologist set the default volume level to be “on target” for me. The hearing aid is designed to provide different amounts of power to people depending on their raw hearing level. There’s a volume control on it which goes from “1” (weak) to “4” (powerful), which I can easily adjust as I wish. The baseline setting is “3”, but this baseline is what audiologists adjust on a case-by-case basis. This means my “3” (and thus, my “1” and “4” settings) may be more powerful, less powerful, or the same compared to the respective settings for someone else.

When my audiologist first fit the hearing aids for me, I felt that my left hearing aid was too quiet and my right one too loud by default, so she modified the baselines.

She also, critically, gave me about a week to adjust to the hearing aids, and I was to report back on whether its strength was correctly set.

During that week, I wore the hearing aids, but I then decided that I was originally mistaken about both hearing aids, since I had to repeatedly increase the volume for the left one and decrease the volume for the right one.

I reported back to my audiologist and said that she was right all along, and that my baselines needed to be back to their default levels. She was able to corroborate my intuition by showing me, amazingly, how often I had adjusted the hearing aid volume level, and in which direction.

Hearing aids are, apparently, now fitted with these advanced sensors so they can track exactly how you adjust them (volume controls or otherwise).

The lesson is that just about everything nowadays consists of sensors, a point which is highlighted in Thomas L. Friedman’s excellent book Thank You for Being Late. It is also a characteristic of what computer scientists refer to as the “Internet of Things.”

Obviously, these certainly aren’t the hearing aids your father wore when he was young.

Academics Against Immigration Executive Order

I just signed a petition, Academics Against Immigration Executive Order, to oppose the Trump administration’s recent executive order. You can find the full text here along with the names of those who have signed up. (Graduate students are in the “Other Signatories” category and may take a while to update.) I like this petition because it clearly lists the names of people so as to avoid claims of duplication and/or bogus signatures for anonymous petitions. There are lots of academic superstars on the list, including (I’m proud to say) my current statistics professor Michael I. Jordan and my statistics professor William Fithian from last semester.

The petition lists three compelling reasons to oppose the order, but let me just chime in with some extra thoughts.

I understand the need to keep our country safe. But in order to do so, there has to be a correct tradeoff in terms of security versus profiling (for lack of a better word) and in terms of costs versus benefits.

On the spectrum of security, to one end are those who deny the existence of radical Islam and the impact of religion on terrorism. On the other end are those who would happily ban an entire religion and place the blame and burden on millions of law-abiding people fleeing oppression. This order is far too close to the second end.

In terms of costs and benefits, I find an analogy to policing useful. Mayors and police chiefs shouldn’t be assigning their police officers uniformly throughout cities. The police should be targeted in certain hotspots of crime as indicated by past trends. That’s the most logical and cost-effective way to crack down on crime.

Likewise, if we are serious about stopping radical Islamic terrorism, putting a blanket ban on Muslims is like the “uniform policing strategy” and will also cause additional problems since Muslims would (understandably!) feel unfairly targeted. For instance, Iran is already promising “proportional responses”. I also have to mention that the odds of being killed by a refugee terrorist are so low that the amount of anxiety towards them does not justify the cost.

By the way, I’m still waiting for when Saudi Arabia — the source of 15 out of 19 terrorists responsible for 9/11 — gets on the executive order list. I guess President Trump has business dealings there? (Needless to say, that’s why conflict of interest laws exist.)

I encourage American academics to take a look at this order and (hopefully) sign the petition. I also urge our Secretary of Defense, James Mattis, to talk to Trump and get him to rescind and substantially revise the order. While I didn’t state this publicly to anyone, I have more respect for Mattis than anyone else in the Trump cabinet, and hopefully that will remain the case.

Understanding Higher Order Local Gradient Computation for Backpropagation in Deep Neural Networks

Introduction

One of the major difficulties in understanding how neural networks work is due to the backpropagation algorithm. There are endless texts and online guides on backpropagation, but most are useless. I read several explanations of backpropagation when I learned about it from 2013 to 2014, but I never felt like I really understood it until I took/TA-ed the Deep Neural Networks class at Berkeley, based on the excellent Stanford CS 231n course.

The course notes from CS 231n include a tutorial on how to compute gradients for local nodes in computational graphs, which I think is key to understanding backpropagation. However, the notes are mostly for the one-dimensional case, and their main advice for extending gradient computation to the vector or matrix case is to keep track of dimensions. That’s perfectly fine, and in fact that was how I managed to get through the second CS 231n assignment.

But this felt unsatisfying.

For some of the harder gradient computations, I had to test several different ideas before passing the gradient checker, and sometimes I wasn’t even sure why my code worked! Thus, the purpose of this post is to make sure I deeply understand how gradient computation works.

Note: I’ve had this post in draft stage for a long time. However, I just found out that the notes from CS 231n have been updated with a guide from Erik Learned-Miller on taking matrix/vector derivatives. That’s worth checking out, but fortunately, the content I provide here is mostly distinct from his material.

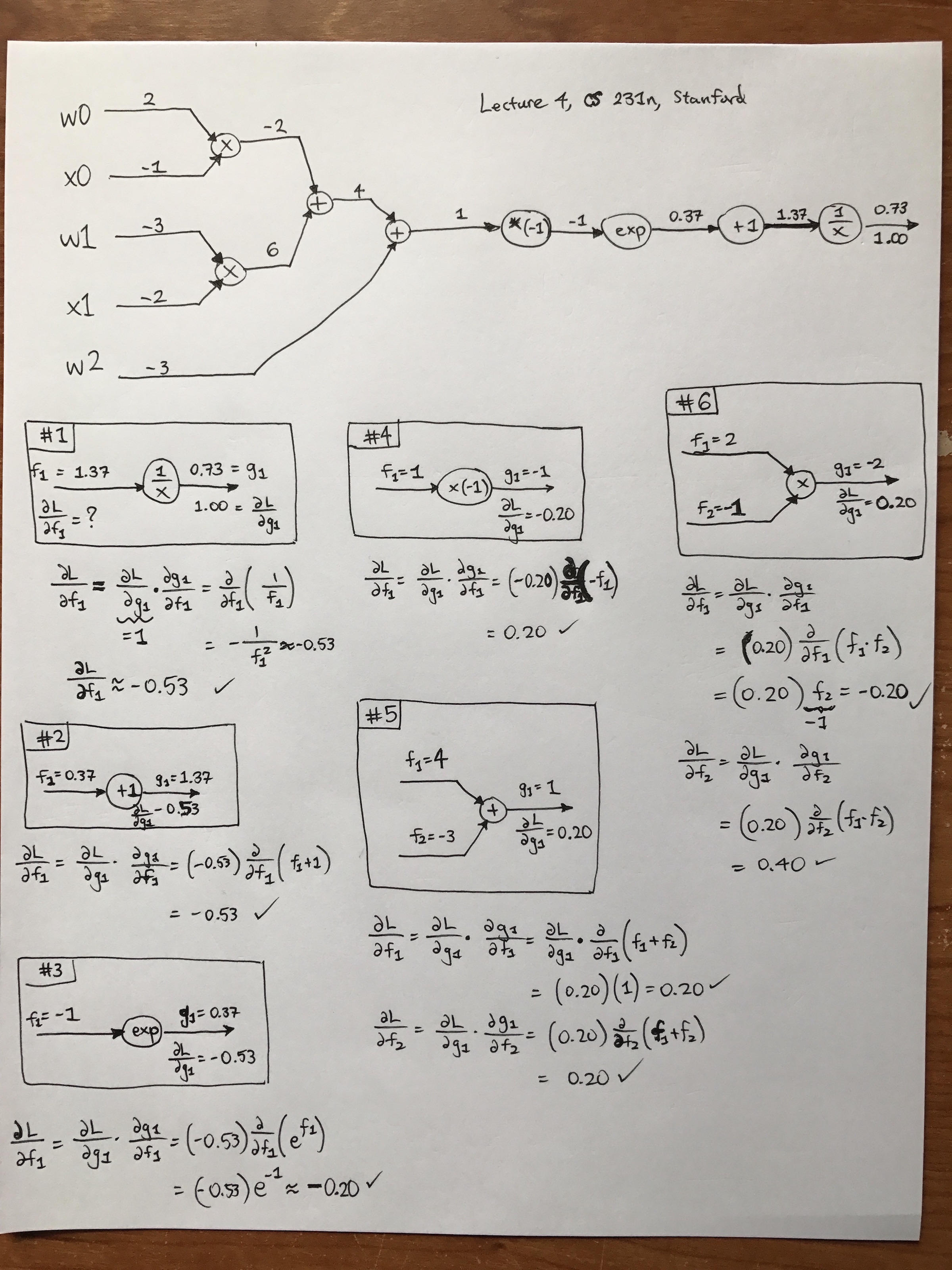

The Basics: Computational Graphs in One Dimension

I won’t belabor the details on one-dimensional graphs since I assume the reader has read the corresponding Stanford CS 231n guide. Another nice post is from Chris Olah’s excellent blog. For my own benefit, I reviewed derivatives on computational graphs by going through the CS 231n example with sigmoids (but with the sigmoid computation spread out among finer-grained operations). You can see my hand-written computations in the following image. Sorry, I have absolutely no skill in getting this up quickly using tikz, Inkscape, or other visualization tactics/software. Feel free to right-click and open the image in a new tab. Warning: it’s big. (But I have to say, the iPhone7 plus makes really nice images. I remember the good old days when we had to take our cameras to CVS to get them developed…)

Another note: from the image, you can see that this is from the fourth lecture of the CS 231n class. I watched that video on YouTube, which is excellent and of high quality. Fortunately, there are also automatic captions which are highly accurate. (There’s an archived reddit thread discussing how Andrej Karpathy had to take down the videos due to a related lawsuit I blogged about earlier, but I can see them just fine. Did they get back up somehow? I’ll write more about this at a later date.)

When I was going through the math here, I came up with several rules for myself:

-

There’s a lot of notation that can get confusing, so for simplicity, I always denoted inputs as \(f_1,f_2,\ldots\) and outputs as \(g_1,g_2,\ldots\), though in this example, we only have one output at each step. By doing this, I can view the \(g_1\)s as a function of the \(f_i\) terms, so the local gradient turns into \(\frac{\partial g_1}{\partial f_i}\) and then I can substitute \(g_1\) in terms of the inputs.

-

When doing backpropagation, I analyzed it node-by-node, and the boxes I drew in my image contain a number which indicates the order I evaluated them. (I skipped a few repeat blocks just as the lecture did.) Note that when filling in my boxes, I only used the node and any incoming/outgoing arrows. Also, the \(f_i\) and \(g_i\) keep getting repeated, i.e. the next step will have \(g_i\) equal to whatever the \(f_i\) was in the previous block.

-

Always remember that when we have arrows here, the part above the arrow contains the value of \(f_i\) (respectively, \(g_i\)) and below the arrow we have \(\frac{\partial L}{\partial f_i}\) (respectively \(\frac{\partial L}{\partial g_i}\)).

Hopefully this will be helpful to beginners using computational graphs.
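The node-by-node procedure can also be sketched in plain Python. This is a minimal example of a sigmoid applied to a small weighted sum and split into primitive operations, in the spirit of the example described above. The specific input values and variable names are my own illustrative choices, not a transcription of the hand-written image.

```python
import math

# Illustrative inputs and weights (assumed values, for demonstration only).
w0, x0, w1, x1, w2 = 2.0, -1.0, -3.0, -2.0, -3.0

# Forward pass: one primitive operation per node.
m0 = w0 * x0          # multiply node
m1 = w1 * x1          # multiply node
s = m0 + m1 + w2      # add node
n = -s                # negate node
e = math.exp(n)       # exp node
p = 1.0 + e           # add-constant node
out = 1.0 / p         # reciprocal node; out equals sigmoid(s)

# Backward pass: each line is (upstream gradient) * (local gradient).
d_out = 1.0                     # dL/d(out), taking L = out
d_p = d_out * (-1.0 / p ** 2)   # local gradient of 1/p is -1/p^2
d_e = d_p * 1.0                 # local gradient of (1 + e) is 1
d_n = d_e * e                   # local gradient of exp(n) is exp(n)
d_s = d_n * (-1.0)              # local gradient of -s is -1
d_w2 = d_s                      # add node passes the gradient through
d_w0, d_x0 = d_s * x0, d_s * w0 # multiply node swaps the inputs
d_w1, d_x1 = d_s * x1, d_s * w1
```

A useful sanity check is that `d_s` should match the closed-form sigmoid derivative `out * (1 - out)`, which is what collapsing all of the fine-grained nodes into a single sigmoid node would give.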

Vector/Matrix/Tensor Derivatives, With Examples

Now let’s get to the big guns — vectors/matrices/tensors. Vectors are a special case of matrices, which are a special case of tensors, the most generalized \(n\)-dimensional array. For this section, I will continue using the “partial derivative” notation \(\frac{\partial}{\partial x}\) to represent any derivative form (scalar, vector, or matrix).

ReLU

Our first example will be with ReLUs, because that was covered a bit in the CS 231n lecture. Let’s suppose \(x \in \mathbb{R}^3\), a 3-D column vector representing some data from a hidden layer deep into the network. The ReLU operation’s forward pass is extremely simple: \(y = \max\{0,x\}\), which can be vectorized using np.maximum (the elementwise maximum; np.max instead reduces an array to its largest entry).

The backward pass is where things get tricky. The input is a 3-D vector, and so is the output! Hence, taking the derivative of the function \(y(x): \mathbb{R}^3\to \mathbb{R}^3\) means we have to consider the effect of every \(x_i\) on every \(y_j\). The only way that’s possible is to use Jacobians. Using the example here, denoting the derivative as \(\frac{\partial y}{\partial x}\) where \(y(x)\) is a function of \(x\), we have:

\[\begin{align*} \frac{\partial y}{\partial x} &= \begin{bmatrix} \frac{\partial y_1}{\partial x_1} &\frac{\partial y_1}{\partial x_2} & \frac{\partial y_1}{\partial x_3}\\ \frac{\partial y_2}{\partial x_1} &\frac{\partial y_2}{\partial x_2} & \frac{\partial y_2}{\partial x_3}\\ \frac{\partial y_3}{\partial x_1} &\frac{\partial y_3}{\partial x_2} & \frac{\partial y_3}{\partial x_3} \end{bmatrix}\\ &= \begin{bmatrix} \frac{\partial}{\partial x_1}\max\{0,x_1\} &\frac{\partial}{\partial x_2}\max\{0,x_1\} & \frac{\partial}{\partial x_3}\max\{0,x_1\}\\ \frac{\partial}{\partial x_1}\max\{0,x_2\} &\frac{\partial}{\partial x_2}\max\{0,x_2\} & \frac{\partial}{\partial x_3}\max\{0,x_2\}\\ \frac{\partial}{\partial x_1}\max\{0,x_3\} &\frac{\partial}{\partial x_2}\max\{0,x_3\} & \frac{\partial}{\partial x_3}\max\{0,x_3\} \end{bmatrix}\\ &= \begin{bmatrix} 1\{x_1>0\} & 0 & 0 \\ 0 & 1\{x_2>0\} & 0 \\ 0 & 0 & 1\{x_3>0\} \end{bmatrix} \end{align*}\]The most interesting part of this happens when we expand the Jacobian and see that we have a bunch of derivatives, but they all evaluate to zero on the off-diagonal. After all, the effect (i.e. derivative) of \(x_2\) will be zero for the function \(\max\{0,x_3\}\). The diagonal term is only slightly more complicated: an indicator function (which evaluates to either 0 or 1) depending on the outcome of the ReLU. This means we have to cache the result of the forward pass, which is easy to do in the CS 231n assignments.

How does this get combined with the incoming (i.e. “upstream”) gradient, which is a vector \(\frac{\partial L}{\partial y}\)? We perform a matrix times vector operation with that and our Jacobian from above. Thus, the overall gradient of the loss function with respect to \(x\), which is what we wanted all along, is:

\[\frac{\partial L}{\partial x} = \begin{bmatrix} 1\{x_1>0\} & 0 & 0 \\ 0 & 1\{x_2>0\} & 0 \\ 0 & 0 & 1\{x_3>0\} \end{bmatrix} \cdot \frac{\partial L}{\partial y}\]This is as simple as doing mask * y_grad where mask is a numpy array with 0s and 1s depending on the value of the indicator functions, and y_grad is the upstream derivative/gradient. In other words, we can completely bypass the Jacobian computation in our Python code! Another option is to use y_grad[x <= 0] = 0, where x is the data that was passed in the forward pass (just before ReLU was applied). In numpy, this sets every entry at which the condition x <= 0 is true to zero, clearing out the gradients exactly where we need them cleared.
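To convince myself that the masking shortcut really matches the Jacobian product, here is a small NumPy sketch comparing the three approaches on a single 3-D vector. The input values and variable names other than mask, x and y_grad are made up for illustration.

```python
import numpy as np

# Illustrative forward-pass input and upstream gradient (assumed values).
x = np.array([1.5, -2.0, 0.3])
y_grad = np.array([0.1, -0.4, 0.7])   # dL/dy, given by the layer above

# Forward pass: elementwise ReLU.
y = np.maximum(0, x)

# Option 1: build the full (diagonal) Jacobian and multiply.
jacobian = np.diag((x > 0).astype(float))
x_grad_jacobian = jacobian @ y_grad

# Option 2: elementwise mask, no Jacobian needed.
mask = (x > 0).astype(float)
x_grad_mask = mask * y_grad

# Option 3: zero out entries in place (on a copy, to keep y_grad intact).
x_grad_inplace = y_grad.copy()
x_grad_inplace[x <= 0] = 0
```

All three give the same gradient; the only difference is that the first one materializes a matrix whose size is quadratic in the number of inputs, which is why real code sticks to the mask.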

In practice, we tend to use mini-batches of data, so instead of a single \(x \in \mathbb{R}^3\), we have a matrix \(X \in \mathbb{R}^{3 \times n}\) with \(n\) columns.[1] Denote the \(i\)th column as \(x^{(i)}\). Writing out the full Jacobian is too cumbersome in this case, but to visualize it, think of having \(n=2\) and then stacking the two samples \(x^{(1)},x^{(2)}\) into a six-dimensional vector. Do the same for the output \(y^{(1)},y^{(2)}\). The Jacobian turns out to again be a diagonal matrix, particularly because the derivative of the output \(y^{(j)}\) with respect to \(x^{(i)}\) is zero for \(i \ne j\). Thus, we can again use a simple masking, element-wise multiply on the upstream gradient to compute the gradient of the loss with respect to \(X\). In our code we don’t have to do any “stacking/destacking”; we can actually use the exact same code `mask * y_grad` with both of these being 2-D numpy arrays (i.e. matrices) rather than 1-D numpy arrays. The case is similar for larger minibatch sizes using \(n>2\) samples.
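As a quick check of the stacking argument, the sketch below builds the \(6\times 6\) Jacobian for \(n=2\) explicitly and compares it with the plain 2-D masking code. The random data and the variable names are assumptions made up for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
X = rng.standard_normal((3, 2))       # two samples stored as columns
Y_grad = rng.standard_normal((3, 2))  # made-up upstream gradient, same shape as X

# Same masking code as in the single-vector case, now on 2-D arrays.
X_grad = (X > 0) * Y_grad

# Stacked view: flatten column by column into six-dimensional vectors.
x_stacked = X.flatten(order="F")
y_grad_stacked = Y_grad.flatten(order="F")
jacobian = np.diag((x_stacked > 0).astype(float))  # 6 x 6 and diagonal

# Multiply, then undo the stacking to get back a 3 x 2 matrix.
X_grad_stacked = (jacobian @ y_grad_stacked).reshape(3, 2, order="F")
```

The two results agree, so the stacking is only a mental device and never needs to appear in the code.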

Remark: this process of computing derivatives is similar for other activation functions because they are also elementwise operations.

Affine Layer (Fully Connected), Biases

Now let’s discuss a layer which isn’t elementwise: the fully connected layer operation \(WX+b\). How do we compute gradients? To start, let’s consider one 3-D element \(x\) so that our operation is

\[\begin{bmatrix} W_{11} & W_{12} & W_{13} \\ W_{21} & W_{22} & W_{23} \end{bmatrix} \begin{bmatrix} x_1 \\ x_2 \\ x_3 \end{bmatrix} + \begin{bmatrix} b_1 \\ b_2 \end{bmatrix} = \begin{bmatrix} y_1 \\ y_2 \end{bmatrix}\]According to the chain rule, the local gradient with respect to \(b\) is

\[\frac{\partial L}{\partial b} = \underbrace{\frac{\partial y}{\partial b}}_{2\times 2} \cdot \underbrace{\frac{\partial L}{\partial y}}_{2\times 1}\]Since we’re doing backpropagation, we can assume the upstream derivative is given, so we only need to compute the \(2\times 2\) Jacobian. To do so, observe that

\[\frac{\partial y_1}{\partial b_1} = \frac{\partial}{\partial b_1} (W_{11}x_1+W_{12}x_2+W_{13}x_3+b_1) = 1\]and a similar case happens for the second component. The off-diagonal terms are zero in the Jacobian since \(b_i\) has no effect on \(y_j\) for \(i\ne j\). Hence, the local derivative is

\[\frac{\partial L}{\partial b} = \begin{bmatrix} 1 & 0 \\ 0 & 1 \end{bmatrix} \cdot \frac{\partial L}{\partial y} = \frac{\partial L}{\partial y}\]That’s pretty nice — all we need to do is copy the upstream derivative. No additional work necessary!

Now let’s get more realistic. How do we extend this when \(X\) is a matrix? Let’s continue the same notation as we did in the ReLU case, so that our columns are \(x^{(i)}\) for \(i \in \{1,2,\ldots,n\}\). Thus, we have:

\[\begin{bmatrix} W_{11} & W_{12} & W_{13} \\ W_{21} & W_{22} & W_{23} \end{bmatrix} \begin{bmatrix} x_1^{(1)} & \cdots & x_1^{(n)} \\ x_2^{(1)} & \cdots & x_2^{(n)} \\ x_3^{(1)} & \cdots & x_3^{(n)} \end{bmatrix} + \begin{bmatrix} b_1 & \cdots & b_1 \\ b_2 & \cdots & b_2 \end{bmatrix} = \begin{bmatrix} y_1^{(1)} & \cdots & y_1^{(n)} \\ y_2^{(1)} & \cdots & y_2^{(n)} \end{bmatrix}\]Remark: crucially, notice that the elements of \(b\) are repeated across columns.

How do we compute the local derivative? We can try writing out the derivative rule as we did before:

\[\frac{\partial L}{\partial b} = \frac{\partial y}{\partial b} \cdot \frac{\partial L}{\partial y}\]but the problem is that this isn’t matrix multiplication. Here, \(y\) is a function from \(\mathbb{R}^2\) to \(\mathbb{R}^{2\times n}\), and to evaluate the derivative, it seems like we would need a 3-D matrix for full generality.

Fortunately, there’s an easier way with computational graphs. If you draw out the computational graph and create nodes for \(Wx^{(1)}, \ldots, Wx^{(n)}\), you see that you have to write \(n\) plus nodes to get the output, each of which takes in one of these \(Wx^{(i)}\) terms along with adding \(b\). Then this produces \(y^{(i)}\). See my hand-drawn diagram:

This captures the key property of independence among the samples in \(X\). To compute the local gradients for \(b\), it therefore suffices to compute the local gradients for each of the \(y^{(i)}\) and then add them together. (The rule in computational graphs is to add incoming derivatives, which can be verified by looking at trivial 1-D examples.) The gradient is

\[\frac{\partial L}{\partial b} = \sum_{i=1}^n \frac{\partial y^{(i)}}{\partial b} \frac{\partial L}{\partial y^{(i)}} = \sum_{i=1}^n \frac{\partial L}{\partial y^{(i)}}\]See what happened? This immediately reduced to the same case we had earlier, with a \(2\times 2\) Jacobian being multiplied by a \(2\times 1\) upstream derivative. All of the Jacobians turn out to be the identity, meaning that the final derivative \(\frac{\partial L}{\partial b}\) is the sum of the columns of the upstream derivative matrix \(\frac{\partial L}{\partial Y}\). As a sanity check, this is a \((2\times 1)\)-dimensional vector, as desired. In numpy, one can do this with something similar to `np.sum(Y_grad)`, though you’ll need the `axis` argument (here `axis=1`, since the samples are stored as columns) to make sure the sum is across the appropriate dimension.
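Here is a small sketch of the bias gradient with a finite-difference check. The shapes follow the example above (samples as columns). The toy loss `L = sum(Y_grad * Y)` is an assumption chosen only so that its derivative with respect to `Y` equals the made-up `Y_grad`.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 4
W = rng.standard_normal((2, 3))
X = rng.standard_normal((3, n))
b = rng.standard_normal((2, 1))
Y_grad = rng.standard_normal((2, n))  # stands in for the upstream dL/dY

# Sum the upstream gradient over the columns (axis=1); keepdims keeps shape (2, 1).
b_grad = np.sum(Y_grad, axis=1, keepdims=True)

def loss(b_vec):
    """Toy loss whose gradient with respect to Y is exactly Y_grad."""
    return np.sum(Y_grad * (W @ X + b_vec))  # b_vec broadcasts across columns

# Centered finite differences, one bias component at a time.
eps = 1e-6
b_grad_numeric = np.zeros_like(b)
for i in range(b.shape[0]):
    step = np.zeros_like(b)
    step[i, 0] = eps
    b_grad_numeric[i, 0] = (loss(b + step) - loss(b - step)) / (2 * eps)
```

Without `axis=1`, `np.sum` would collapse everything to a single scalar, which is why the axis argument matters here.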

Affine Layer (Fully Connected), Weight Matrix

Going from biases, which are represented by vectors, to weights, which are represented by matrices, brings some extra difficulty due to that extra dimension.

Let’s focus on the case with one sample \(x^{(1)}\). For the derivative with respect to \(W\), we can ignore \(b\): it does not depend on \(W\), so differentiating the expression \(y^{(1)}=Wx^{(1)}+b\) with respect to \(W\) causes \(b\) to disappear, just like in the scalar case.

The harder part is dealing with the chain rule for the \(Wx^{(1)}\) expression, because we can’t write the expression “\(\frac{\partial}{\partial W} Wx^{(1)}\)”. The function \(Wx^{(1)}\) is a vector, and the variable we’re differentiating here is a matrix. Thus, we’d again need a 3-D like matrix to contain the derivatives.

Fortunately, there’s an easier way with the chain rule. We can still use the rule, except we have to sum over the intermediate components, as specified by the chain rule for higher dimensions; see the Wikipedia article for more details and justification. Our “intermediate component” here is the \(y^{(1)}\) vector, which has two components. We therefore have:

\[\begin{align} \frac{\partial L}{\partial W} &= \sum_{i=1}^2 \frac{\partial L}{\partial y_i^{(1)}} \frac{\partial y_i^{(1)}}{\partial W} \\ &= \frac{\partial L}{\partial y_1^{(1)}}\begin{bmatrix}x_1^{(1)}&x_2^{(1)}&x_3^{(1)}\\0&0&0\end{bmatrix} + \frac{\partial L}{\partial y_2^{(1)}}\begin{bmatrix}0&0&0\\x_1^{(1)}&x_2^{(1)}&x_3^{(1)}\end{bmatrix} \\ &= \begin{bmatrix} \frac{\partial L}{\partial y_1^{(1)}}x_1^{(1)} & \frac{\partial L}{\partial y_1^{(1)}} x_2^{(1)}& \frac{\partial L}{\partial y_1^{(1)}} x_3^{(1)}\\ \frac{\partial L}{\partial y_2^{(1)}} x_1^{(1)}& \frac{\partial L}{\partial y_2^{(1)}} x_2^{(1)}& \frac{\partial L}{\partial y_2^{(1)}} x_3^{(1)}\end{bmatrix} \\ &= \begin{bmatrix} \frac{\partial L}{\partial y_1^{(1)}} \\ \frac{\partial L}{\partial y_2^{(1)}}\end{bmatrix} \begin{bmatrix} x_1^{(1)} & x_2^{(1)} & x_3^{(1)}\end{bmatrix}. \end{align}\]We fortunately see that it simplifies to a simple matrix product! This seems to suggest the following rule: try to simplify any expressions to straightforward Jacobians, gradients, or scalar derivatives, and sum over as needed. Above, splitting the components of \(y^{(1)}\) allowed us to utilize the derivative \(\frac{\partial y_i^{(1)}}{\partial W}\) since \(y_i^{(1)}\) is now a real-valued function, thus enabling straightforward gradient derivations. It also meant the upstream derivative could be analyzed component-by-component, making our lives easier.
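The derivation above can be checked numerically. This sketch (random data, a toy linear loss, and all variable names are assumptions for illustration) forms the sum over the two components of \(y^{(1)}\), compares it with the outer product, and verifies both against finite differences.

```python
import numpy as np

rng = np.random.default_rng(0)
W = rng.standard_normal((2, 3))
x = rng.standard_normal(3)
b = rng.standard_normal(2)
y_grad = rng.standard_normal(2)  # stands in for the upstream dL/dy

# Chain rule, summing over the two components of y.
W_grad_sum = np.zeros_like(W)
for i in range(2):
    dyi_dW = np.zeros_like(W)
    dyi_dW[i, :] = x  # y_i only depends on row i of W
    W_grad_sum += y_grad[i] * dyi_dW

# The same thing as a single outer product: (2 x 1) times (1 x 3).
W_grad = np.outer(y_grad, x)

def loss(W_mat):
    """Toy loss whose gradient with respect to y is exactly y_grad."""
    return y_grad @ (W_mat @ x + b)

# Centered finite differences over every entry of W.
eps = 1e-6
W_grad_numeric = np.zeros_like(W)
for r in range(W.shape[0]):
    for c in range(W.shape[1]):
        step = np.zeros_like(W)
        step[r, c] = eps
        W_grad_numeric[r, c] = (loss(W + step) - loss(W - step)) / (2 * eps)
```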

A similar case holds when we have multiple columns \(x^{(i)}\) in \(X\). We would have another sum above, over the columns, but fortunately this can be re-written as the matrix multiplication \(\frac{\partial L}{\partial W} = \frac{\partial L}{\partial Y} X^T\).
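For the minibatch case, a short sketch (again with made-up random data and names) shows that summing the per-sample outer products over the columns matches a single matrix product of the upstream gradient with the transpose of \(X\).

```python
import numpy as np

rng = np.random.default_rng(0)
n = 5
X = rng.standard_normal((3, n))       # samples stored as columns
Y_grad = rng.standard_normal((2, n))  # stands in for the upstream dL/dY

# One outer product per sample, summed over the columns.
W_grad_sum = sum(np.outer(Y_grad[:, i], X[:, i]) for i in range(n))

# The same sum written as one matrix multiplication: (2 x n) times (n x 3).
W_grad = Y_grad @ X.T
```

The matrix form is what one would normally write in code, since it avoids the Python loop over samples.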

Convolutional Layers

How do we compute the convolutional layer gradients? That’s pretty complicated so I’ll leave that as an exercise for the reader. For now.

[1] In fact, \(X\) is in general a tensor. Sophisticated software packages will generalize \(X\) to be tensors. For example, we need to add another dimension to \(X\) with image data since we’ll be using, say, \(28\times 28\) data instead of \(28\times 1\) data (or \(3\times 1\) data in my trivial example here). However, for the sake of simplicity and intuition, I will deal with simple column vectors as samples within a matrix \(X\).

Keeper of the Olympic Flame: Lake Placid’s Jack Shea vs. Avery Brundage and the Nazi Olympics (Story of My Great-Uncle)

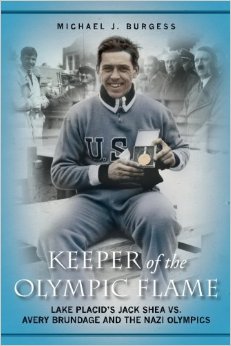

I just read Keeper of the Olympic Flame: Lake Placid’s Jack Shea vs. Avery Brundage and the Nazi Olympics. This is the story of Jack Shea, a speed-skater from Lake Placid, NY, who won two gold medals in the 1932 Winter Olympics (coincidentally, also in Lake Placid). Shea became a local hometown hero and helped to put Lake Placid on the map.

Then, a few years later, Jack Shea boycotted the 1936 Winter Olympics since they were held in Nazi Germany. Jack Shea believed – rightfully – that any regime that discriminated against Jews to the extent the Nazis did had no right to host such an event. Unfortunately, the man in charge of the decision, Avery Brundage, had the last call and decided to include Americans in the Olympics. (Due to World War II, the Winter Olympics would not be held again until 1948.) The book discusses Shea’s boycott – including the striking letter he wrote to Brundage – and then moves on to the 1980 Winter Olympics, which were also held in Lake Placid.

I enjoyed reading Keeper of the Olympic Flame to learn more about the history of the Winter Olympics and the intersection of athletics and politics.

The book also means a lot to me because Jack Shea was my great-uncle. For me, the pictures and stories within it are riveting. As I read the book, I often wondered about what life must have been like in those days, particularly for my distant relatives and ancestors.

I only met Jack Shea once, at a funeral for his sister (my great-aunt). Jack Shea died in a car accident in 2002 at the age of 91, presumably caused by a 36-year-old drunk motorist who escaped prosecution. This would be just weeks before his grandson, Jimmy Shea, won a gold medal in skeleton during the 2002 Salt Lake City Winter Olympics. I still remember watching Jimmy win the gold medal and showing everyone his picture of Jack Shea in his helmet. Later, I would personally meet Jimmy and other relatives in a post-Olympics celebration.

I wish I had known Jack Shea better, as he seemed like a high-character individual. I am glad that this book is here to partially make up for that.

Some Interesting Artificial Intelligence Research Papers I've Been Reading

One of the advantages of having a four-week winter break between semesters is that, in addition to reading interesting, challenging non-fiction books, I can also catch up on reading academic research papers. For the last few years, I’ve mostly read research papers by downloading them online, and then reading the PDFs on my laptop while taking notes using the Mac Preview app and its highlighting and note-taking features. For me, research papers are challenging to read, so the notes mean I can write stuff in plain English on the PDF.

However, there are a lot of limitations with the Preview app, so I thought I would try something different: how about making a GitHub repository which contains a list of papers that I have read (or plan to read) and where I can write extensive notes? The repository is now active. Here are some papers I’ve read recently, along with a one-paragraph summary for each of them. All of them are “current,” from 2016.

- Stochastic Neural Networks for Hierarchical Reinforcement Learning, arXiv, by Carlos Florensa et al. This paper proposes using Stochastic Neural Networks (SNNs) to learn a variety of low-level skills for a reinforcement learning setting, particularly one which has sparse rewards (i.e. usually getting 0s instead of +1s and -1s). One key assumption is that there is a pre-training environment, where the agent can learn skills before being deployed in “official” scenarios. In this environment, SNNs are used along with an information-theoretic regularizer to ensure that the skills learned are different. For the overall high-level policy, they use a separate (i.e. not jointly optimized) neural network, trained using Trust Region Policy Optimization. The paper benchmarks on a swimming environment, where the agent exhibits different skills in the form of different swimming directions. This wasn’t what I was thinking of when I thought about skills, but I guess it is OK for one paper. It would also help if I were more familiar with SNNs.

- #Exploration: A Study of Count-Based Exploration for Deep Reinforcement Learning, arXiv, by Haoran Tang et al. I think by count-based reinforcement learning, we refer to algorithms which explicitly keep track of \(N(s,a)\), state-action visitation counts, and which turn that into an exploration strategy. However, these are only feasible for small, finite MDPs where states will actually be visited more than once. This paper aims to extend count-based reinforcement learning to the high-dimensional setting by cleverly applying a hash function to map states to hash codes, which are then explicitly counted. Ideally, states which are similar to each other should have the same or similar hash codes. The paper reports the surprising fact (both to me and to them!) that such count-based RL, with an appropriate hash code of course, can reach near state of the art performance on complicated domains such as Atari 2600 games and continuous problems in the RLLab library.

-