My Blog Posts, in Reverse Chronological Order


Miscellaneous Prelim Review (Part 2)

This will probably be my last prelim review post. The topics I’ll cover in this post are convex optimization, statistical learning theory (broadly), and logic/planning. Actually, I wanted to make some detailed notes about Kalman filtering, but I think I’ve done more than enough here, and there are too many equations involved to write that quickly.

Convex Optimization

This part is based on sections 9.1 through 9.5 of Boyd and Vandenberghe’s book, freely available online. Stephen Boyd also has a lecture video on YouTube that I watched, which I found to be very helpful. (I can also understand Professor Boyd’s speech well.) The book is fine, I suppose, but it is really hard for me to read, so I made embarrassingly slow progress as I learned this material.

The main purpose of sections 9.1 through 9.5 is to discuss iterative algorithms for minimization. Formally, we have the problem of minimizing a convex function \(f(x)\) and need to find the optimal \(x = x^*\). As in almost all cases, we have to remember that \(x\) is generally a vector, not a scalar, while \(f\) is real-valued, so \(f : \mathbb{R}^n \to \mathbb{R}\). I had to keep reminding myself of this.

In most cases, we need to use iterative algorithms to find \(x^*\). The class of algorithms we use is known as descent algorithms because they generate points \(\{x^1,x^2,\ldots \}\) such that \(f(x^{k+1}) < f(x^k)\) unless we are already at the optimum. A little side-note: there is exactly one optimal \(x^*\) because we are actually assuming that \(f\) is strongly convex, not just convex (and strong convexity is not the same as strict convexity!). By strong convexity, we assume that there is a constant \(m > 0\) such that \(\nabla^2 f(x) \succeq mI\) for all \(x\) under consideration, which means \(\nabla^2 f(x) - mI\) is positive semidefinite.

A lot of our future analysis will depend on a concept known as the condition number of a matrix or a set. For a matrix, the condition number is the ratio of the largest singular value to the smallest singular value. For a symmetric positive definite matrix, we can use eigenvalues instead, and that is exactly our situation: the second-order characterization of convexity says the Hessian of \(f\) is positive semidefinite, and strong convexity makes it positive definite. The condition number of a convex set is the square of the ratio of its largest width to its smallest width. High condition numbers correspond to highly skewed or stretched sublevel sets (long, thin ellipsoids rather than round balls).

Here’s the descent algorithm. We repeat the following until some stopping criterion:

- Compute a (descent) direction to change \(x\), denoted \(\Delta x\)

- Compute a length or step size \(t\) to go in that direction using some form of line search

- Compute \(x \leftarrow x + t (\Delta x)\)

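There are two main ways to choose \(t\): exact line search (find \(\arg\min_{t > 0} f(x + t \Delta x)\)) or backtracking line search. For some reason, it took me a really long time to understand backtracking line search, but after looking at that figure in Boyd’s book for ages, I understand what it does now. We fix parameters \(\alpha \in (0, 1/2)\) and \(\beta \in (0, 1)\), start at \(t = 1\), and keep shrinking \(t \leftarrow \beta t\) until \(f(x + t \Delta x)\) lies below the upper bound \(f(x) + \alpha t \nabla f(x)^T \Delta x\). That figure has three curves in it, all plotted as functions of \(t\): one is \(f(x + t\Delta x)\), and the other two are straight lines through \(f(x)\) at \(t = 0\). The first line, \(f(x) + t\nabla f(x)^T\Delta x\), is the first-order approximation of \(f\) at \(x\), which (by convexity) lies below \(f\); the second line has its slope scaled down by \(\alpha\), so it lies above \(f\) for small enough \(t\). Backtracking is important because each iteration is cheap, and in practice it often works just as well as (or better than) exact line search.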
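Here is a minimal sketch of backtracking line search in plain Python, assuming vectors are stored as lists. The parameter names `alpha` and `beta` and their default values are my own illustrative choices for the two constants above, and the test function is a made-up quadratic.

```python
def backtracking_line_search(f, grad_fx, x, dx, alpha=0.25, beta=0.5):
    """Return a step size t satisfying f(x + t*dx) <= f(x) + alpha*t*grad_f(x)^T dx.

    f       : callable taking a list of floats
    grad_fx : gradient of f at x (list of floats)
    dx      : a descent direction, so grad_fx^T dx < 0
    """
    fx = f(x)
    slope = sum(g * d for g, d in zip(grad_fx, dx))  # directional derivative at t = 0
    t = 1.0
    # Shrink t geometrically until f lies below the line with slope alpha * slope.
    while f([xi + t * di for xi, di in zip(x, dx)]) > fx + alpha * t * slope:
        t *= beta
    return t


def f(x):
    return x[0] ** 2 + 10 * x[1] ** 2


x = [1.0, 1.0]
g = [2 * x[0], 20 * x[1]]   # gradient of f at x
dx = [-gi for gi in g]      # negative gradient direction
t = backtracking_line_search(f, g, x, dx)
print(t)  # 0.0625
```

Starting from \(t = 1\) overshoots badly along the steep \(x_2\) direction, so the search halves \(t\) four times before the sufficient-decrease condition holds.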

But the real difference among the various descent algorithms lies in how we pick \(\Delta x\). There are three options:

- Use \(-\nabla f(x)\). I mean, the gradient \(\nabla f(x)\) points in the direction of greatest increase of \(f\) at \(x\) (by definition) so why on earth would we not use the negative of that? This is gradient descent.

- Use the direction that makes the directional derivative as negative as possible, among directions of unit length in some pre-specified norm. Precisely, our first-order approximation of \(f\) at \(x+v\) is \(f(x+v) \approx f(x) + \nabla f(x)^Tv\), and we want to find \(\arg\min_v \{\nabla f(x)^Tv : \|v\| \le 1\}\). We need to restrict \(\|v\|\) because otherwise we could first pick a direction whose inner product with the gradient is negative, and then make it arbitrarily large. Also notice that we are not specifying the exact norm. This is steepest descent, and it equals gradient descent when \(\|\cdot\|\) is the \(\ell_2\) norm.

- Use \(-(\nabla^2 f(x))^{-1}\nabla f(x)\), i.e., the negative of the inverse of the Hessian, multiplied by the gradient. Whew! This comes from the second-order approximation \(f(x + \Delta x) \approx f(x) + \nabla f(x)^T\Delta x + \frac{1}{2}\Delta x^T \nabla^2 f(x) \Delta x\): just take the gradient with respect to \(\Delta x\), set it to zero, and solve. This is Newton’s Method.

Gradient descent is simple, and works perfectly (i.e., converges in one step with exact line search) when the sublevel sets are perfectly “isotropic,” that is to say, “equal in all directions,” and works well when they are roughly so. It’s bad when the condition number of the Hessian or the sublevel sets is high (e.g., in the 1000s). The classic example is the ellipsoid “bowl” where we have a 3-D bowl that is much wider in one direction than the other. Gradient descent with exact line search keeps going back and forth, zig-zagging toward the center, because each step ends at a point where the new gradient is orthogonal to the previous step. The stopping criterion for gradient descent is \(\|\nabla f(x)\|_2 \le \eta\) for some pre-specified \(\eta\).
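To see the zig-zagging numerically, here is a small sketch on the toy quadratic \(f(x) = \frac{1}{2}(x_1^2 + \gamma x_2^2)\) (my choice of example), whose Hessian \(\mathrm{diag}(1, \gamma)\) has condition number \(\gamma\). For a quadratic, exact line search has the closed form \(t = g^Tg / g^THg\); the function name and the tolerance `eta` are illustrative assumptions.

```python
def gd_exact_quadratic(gamma, x0, eta=1e-6, max_iter=100_000):
    """Gradient descent with exact line search on f(x) = 0.5 * (x1^2 + gamma * x2^2).

    Returns the number of iterations needed to reach ||grad f(x)||_2 <= eta.
    """
    x1, x2 = x0
    for k in range(max_iter):
        g1, g2 = x1, gamma * x2                 # gradient of f
        if (g1 * g1 + g2 * g2) ** 0.5 <= eta:   # stopping criterion from the text
            return k
        # Exact line search for a quadratic: t = g^T g / g^T H g with H = diag(1, gamma)
        t = (g1 * g1 + g2 * g2) / (g1 * g1 + gamma * g2 * g2)
        x1, x2 = x1 - t * g1, x2 - t * g2
    return max_iter


for gamma in (1, 10, 100):
    print(gamma, gd_exact_quadratic(gamma, (gamma, 1.0)))
```

With \(\gamma = 1\) (round sublevel sets) this converges in one step. Starting from \((\gamma, 1)\), each step shrinks the error by the factor \((\gamma - 1)/(\gamma + 1)\) and flips the sign of \(x_2\) (the zig-zag), so the iteration count grows roughly in proportion to the condition number.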

Steepest descent is a generalization of gradient descent in that we get to pick the norm that we use to measure the size of the step. A quick warning: there are actually two versions of \(\Delta x\). I tend to assume we are using the normalized version \(\Delta x_{\rm nsd}\), where the \(v\) we pick has norm bounded by one. There’s also the un-normalized version \(\Delta x_{\rm sd} = \|\nabla f(x)\|_{*} \Delta x_{\rm nsd}\), where \(\|\cdot\|_*\) is the dual norm, \(\|z\|_* = \sup\{z^Tv : \|v\| \le 1\}\), but I don’t understand how this actually works.

Steepest descent can work with the \(\ell_1\), \(\ell_2\), and quadratic norms. In the \(\ell_1\) norm, it is a form of coordinate descent (modifying one coordinate of \(x\) at a time): we find the component of \(\nabla f(x)\) that is largest in absolute value and set \(v\) to be zero everywhere except at that component, where it is \(\pm 1\) with the sign opposite to that of the gradient component. The derivation of \(\Delta x_{\rm nsd}\) for the quadratic norm \(\|z\|_P = (z^TPz)^{1/2}\) (with \(P\) symmetric positive definite) is more complicated (the un-normalized version is just \(-P^{-1}\nabla f(x)\)), but visualizing it is easier: we have a point \(x\), draw an ellipse around it (the unit ball of the norm), and then pick the direction in that ellipse that results in the greatest decrease. More intuition: extend as far as possible in the direction of \(-\nabla f(x)\) while staying inside that unit ball. It’s also worth noting that steepest descent in the quadratic norm is just gradient descent after the change of coordinates \(\bar{x} = P^{1/2}x\). In fact, this gives a useful test for a norm: steepest descent will perform well when the transformed sublevel sets (in terms of \(P^{1/2}x\)) have roughly “equal” isocontours, i.e., are well-conditioned, which is exactly the situation gradient descent likes.
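A minimal sketch of the two steepest descent directions described above, for 2-D vectors stored as lists. The function names are mine, and the quadratic-norm version writes out the \(2 \times 2\) inverse by hand to stay dependency-free. It also checks the relation \(\Delta x_{\rm sd} = \|\nabla f(x)\|_* \Delta x_{\rm nsd}\), using the fact that the dual of \(\|\cdot\|_P\) is \(\|z\|_* = (z^TP^{-1}z)^{1/2}\).

```python
import math


def l1_steepest_direction(grad):
    """Normalized steepest descent direction for the l1 norm: a signed coordinate vector."""
    i = max(range(len(grad)), key=lambda j: abs(grad[j]))  # largest |partial derivative|
    d = [0.0] * len(grad)
    d[i] = -1.0 if grad[i] > 0 else 1.0  # step against the sign of that component
    return d


def quad_norm_steepest_directions(grad, P):
    """Un-normalized and normalized steepest descent directions for ||z||_P = sqrt(z^T P z).

    P must be a 2x2 symmetric positive definite matrix given as nested lists.
    """
    (a, b), (c, d) = P
    det = a * d - b * c
    p_inv = [[d / det, -b / det], [-c / det, a / det]]
    # Un-normalized direction: dx_sd = -P^{-1} grad
    sd = [-(p_inv[0][0] * grad[0] + p_inv[0][1] * grad[1]),
          -(p_inv[1][0] * grad[0] + p_inv[1][1] * grad[1])]
    # Dual norm of the gradient: sqrt(grad^T P^{-1} grad) = sqrt(-grad^T dx_sd)
    dual = math.sqrt(-(grad[0] * sd[0] + grad[1] * sd[1]))
    nsd = [s / dual for s in sd]  # rescaled so that ||nsd||_P = 1
    return sd, nsd, dual


print(l1_steepest_direction([3.0, -5.0]))  # [0.0, 1.0]
sd, nsd, dual = quad_norm_steepest_directions([4.0, 8.0], [[2.0, 0.0], [0.0, 8.0]])
print(sd, nsd, dual)  # [-2.0, -1.0] [-0.5, -0.25] 4.0
```

With \(P = I\), the un-normalized direction reduces to \(-\nabla f(x)\), i.e., plain gradient descent.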

Newton’s method is a step up from gradient descent in that we use a second-order approximation of \(f\). The way I think of it is that gradient descent works with a plane in 3-D (e.g., for a 3-D “bowl” whose minimum we’re trying to reach), namely the tangent plane, which for convex \(f\) lies entirely below the bowl. Newton’s method instead works with another bowl that touches the original one at \(x\) and has the same curvature there, though it need not lie entirely above or below it.

The book mentions three “perspectives” on Newton’s method:

- Minimization of the second-order approximation of \(f\), which is how I see it.

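- Steepest descent in the Hessian norm: it’s like the quadratic norm described earlier, with \(P = \nabla^2 f(x)\). The Hessian is a really good “\(P\)” matrix to use because, near \(x^*\), the sublevel sets of \(f\) are well approximated by ellipsoids whose shape is determined by the Hessian, so the transformed sublevel sets are nearly round (low condition number)!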

- Solution of the linearized optimality condition. I did not understand this at first, but think of Newton’s method for approximating roots of a function \(f\), where we subtract \(f/f'\). In our case, we want to find the minimizer of \(f\), which means we want the roots of the derivative \(f'\), which involves \(f'/f''\). That’s exactly what we have here! In \(n\) dimensions, we linearize the optimality condition \(\nabla f(x + \Delta x) = 0\) as \(\nabla f(x) + \nabla^2 f(x)\Delta x = 0\) and solve for \(\Delta x\).

More facts about Newton’s method:

- If the original function is already quadratic, Newton’s method converges in one step.

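- It is invariant to affine changes of coordinates. If we run Newton’s method on \(\bar{f}(y) = f(Ty)\) for a nonsingular \(T\), starting at \(y^{(0)} = T^{-1}x^{(0)}\), then the iterates satisfy \(x^{(k)} = Ty^{(k)}\), so the relationship between the points remains the same.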

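- It uses the Newton decrement \(\lambda(x) = \left(\nabla f(x)^T \nabla^2 f(x)^{-1} \nabla f(x)\right)^{1/2}\) to determine when to stop, since \(\lambda(x)^2/2\) estimates \(f(x) - f(x^*)\); we stop once \(\lambda(x)^2/2 \le \epsilon\).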

- There is a damped phase versus a pure (quadratically convergent) phase. In the former, each iteration decreases \(f\) by at least some fixed amount \(\gamma > 0\) (this is good, since it bounds the number of damped steps). In the latter, the backtracking line search always picks \(t=1\), and the number of accurate digits roughly doubles at each iteration. Thus, there is no need to run that second phase more than, say, four times.

- Newton’s method still works with badly-conditioned sublevel sets of \(f\).

- The downside of Newton’s method compared to gradient or steepest descent is that (1) we have to compute the Hessian, and (2) we have to store it – remember that the Hessian will be \(n \times n\), whereas the gradient will only be \(n \times 1\).
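A minimal sketch of damped Newton’s method with backtracking line search and the Newton-decrement stopping rule, in plain Python for two variables. The non-quadratic test function \(f(x) = e^{x_1 + 3x_2 - 0.1} + e^{x_1 - 3x_2 - 0.1} + e^{-x_1 - 0.1}\) is just a small toy example (its minimizer is \((-\tfrac{1}{2}\ln 2, 0)\)), the second example is a made-up quadratic, and the parameter values are illustrative assumptions.

```python
import math


def newton(f, grad, hess, x, eps=1e-10, alpha=0.25, beta=0.5, max_iter=50):
    """Damped Newton's method for f: R^2 -> R.

    Returns the final point and the list of squared Newton decrements, one per iteration.
    """
    lam2_history = []
    for _ in range(max_iter):
        g = grad(x)
        (h11, h12), (h21, h22) = hess(x)
        det = h11 * h22 - h12 * h21
        # Newton step dx = -H^{-1} g, with the 2x2 inverse written out
        dx = [-(h22 * g[0] - h12 * g[1]) / det, -(-h21 * g[0] + h11 * g[1]) / det]
        lam2 = -(g[0] * dx[0] + g[1] * dx[1])  # lambda(x)^2 = g^T H^{-1} g
        lam2_history.append(lam2)
        if lam2 / 2 <= eps:                    # stopping rule: lambda^2 / 2 <= eps
            break
        # Backtracking line search; grad^T dx = -lambda^2 for the Newton step
        t, fx = 1.0, f(x)
        while f([x[0] + t * dx[0], x[1] + t * dx[1]]) > fx - alpha * t * lam2:
            t *= beta
        x = [x[0] + t * dx[0], x[1] + t * dx[1]]
    return x, lam2_history


# Non-quadratic example: f(x) = e^{x1+3x2-0.1} + e^{x1-3x2-0.1} + e^{-x1-0.1}
def terms(x):
    return (math.exp(x[0] + 3 * x[1] - 0.1),
            math.exp(x[0] - 3 * x[1] - 0.1),
            math.exp(-x[0] - 0.1))


def f(x):
    return sum(terms(x))


def grad(x):
    a, b, c = terms(x)
    return [a + b - c, 3 * a - 3 * b]


def hess(x):
    a, b, c = terms(x)
    return [[a + b + c, 3 * a - 3 * b], [3 * a - 3 * b, 9 * a + 9 * b]]


x_star, lam2s = newton(f, grad, hess, [-1.0, 1.0])
print(x_star, len(lam2s))  # close to (-ln(2)/2, 0)


# Quadratic example: f(x) = x1^2 + x1*x2 + 2*x2^2 - x1, minimized at (4/7, -1/7)
def qf(x):
    return x[0] ** 2 + x[0] * x[1] + 2 * x[1] ** 2 - x[0]


def qgrad(x):
    return [2 * x[0] + x[1] - 1, x[0] + 4 * x[1]]


def qhess(x):
    return [[2.0, 1.0], [1.0, 4.0]]


x_q, lam2_q = newton(qf, qgrad, qhess, [0.0, 0.0])
print(x_q, len(lam2_q))  # one Newton step, then the decrement is (numerically) zero
```

The quadratic case illustrates the first bullet: the full step \(t = 1\) passes the line search and lands exactly on the minimizer, so the second iteration only computes a zero decrement and stops.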

The usual disclaimers apply: we usually don’t know the constants (such as \(m\)) that appear in the convergence proofs, unfortunately.

Statistical Concepts and Logistic Regression

This part is closely related to what I wrote about linear regression and the least mean squares algorithm. I will be discussing logistic regression as well (for classification, not regression), but first we take a brief detour to discuss the third major class of problems, density estimation.

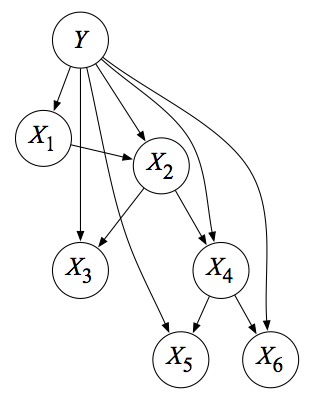

The problem is, given data, to find the appropriate model for it. The relatively easy case is if we assume we already have an idea of the distribution (e.g., Gaussian) and we just need to find the parameters (here, the mean and variance). We find the parameters via maximum likelihood. So in the IID Gaussian case, whose graphical model is \(N\) independent shaded circles, we take the sample mean and the sample covariance (normalized by \(N\), not \(N-1\)) as our MLEs. With the Bayesian approach, where we have a new \(\mu\) node pointing to all samples, we put a Gaussian prior on the mean \(\mu\), and because that prior is conjugate, the posterior mean is a weighted combination of the prior mean and the sample mean (the variance can be handled similarly with its own conjugate prior, e.g., an inverse-gamma). In the case of discrete data \(x\), we model these with multinomials. The resulting MLEs, which require Lagrange multipliers to solve (which gave me a huge headache at first), are just the sample proportions. For the Bayesian version, we use a Dirichlet prior. To extend the class of distributions we want to model, we can assume a mixture model, where \(p(x\mid \theta) = \sum_{i=1}^{k}\alpha_i f_i(x\mid \theta_i)\), where the \(\alpha_i\)s are mixing proportions that sum to one. This time, each observed data point \(x\) has its own hidden node pointing to it.
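A small sketch of these estimates for scalar data in plain Python. For the Bayesian part, it assumes (for simplicity) that the noise variance \(\sigma^2\) is known and the prior on \(\mu\) is \(\mathcal{N}(\mu_0, \tau^2)\); the function names and numbers are illustrative.

```python
def gaussian_mle(xs):
    """MLEs of the mean and variance of IID Gaussian data (the variance divides by N)."""
    n = len(xs)
    mu = sum(xs) / n
    var = sum((x - mu) ** 2 for x in xs) / n
    return mu, var


def posterior_mean_of_mu(xs, sigma2, mu0, tau2):
    """Posterior mean of mu given known variance sigma2 and prior N(mu0, tau2).

    The result is a weighted average of the sample mean and the prior mean;
    the weight on the data grows with the number of samples.
    """
    n = len(xs)
    xbar = sum(xs) / n
    w = (n / sigma2) / (n / sigma2 + 1 / tau2)
    return w * xbar + (1 - w) * mu0


def multinomial_mle(xs, k):
    """MLE of multinomial probabilities over categories 0..k-1: the sample proportions."""
    return [xs.count(j) / len(xs) for j in range(k)]


print(gaussian_mle([2.0, 4.0, 6.0, 8.0]))                          # (5.0, 5.0)
print(posterior_mean_of_mu([2.0, 4.0, 6.0, 8.0], 4.0, 0.0, 1.0))   # 2.5
print(multinomial_mle([0, 1, 1, 2], 3))                            # [0.25, 0.5, 0.25]
```

In the second call the data and the prior get equal weight, so the estimate lands halfway between the sample mean 5 and the prior mean 0; with more data, it moves toward the sample mean.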

There is an alternative strategy of estimation known as nonparametric density estimation. Here, we do not assume a fixed, finite-dimensional parameter \(\theta\); as our data grow, the nonparametric model grows to represent a wider class of distributions. With kernels, each data point contributes some probability mass around itself, and we add these contributions up and normalize. With Gaussian kernels and a fixed number of samples \(N\), this is really just a mixture model (\(N\) equally weighted Gaussians, one centered at each point), but the two approaches differ as the number of instances grows: the mixture model keeps its fixed number of components, while the kernel estimate adds one per data point.
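A minimal Gaussian kernel density estimate in plain Python, assuming a hand-picked bandwidth \(h\) (choosing \(h\) well is its own problem). Written this way, it is literally a mixture of \(N\) Gaussians with weights \(1/N\), means at the data points, and variance \(h^2\).

```python
import math


def gaussian_kde(data, h):
    """Return a density function: the average of Gaussian bumps of width h at each point."""
    n = len(data)
    norm = 1.0 / (n * h * math.sqrt(2 * math.pi))

    def density(x):
        return norm * sum(math.exp(-0.5 * ((x - xi) / h) ** 2) for xi in data)

    return density


p = gaussian_kde([-1.0, 0.0, 2.0], h=0.5)
# Riemann-sum check that the estimate integrates to (about) one over [-10, 10]
step = 0.01
total = sum(p(-10 + i * step) * step for i in range(2001))
print(total)  # approximately 1.0
```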

Tip: use the nonparametric approach if we have lots of data but no good idea of the model, and use the parametric version when we have little data and a good idea of its underlying distribution (it will converge faster). The line between the two methods does blur somewhat, for instance, when we have a mixture modeling problem where we have to dynamically estimate the number of components \(K\).

Finally, we can turn our attention towards the regression and classification problems. In both cases, we model \(p(y_n\mid x_n,\theta)\), where the subscript \(n\) indicates that we assume IID data. For linear regression, we assume \(y_n = \beta^Tx_n + \epsilon_n\), and have to find \(\beta\). The choice of distribution for \(\epsilon_n\) is what really determines the model; here we assume Gaussian noise, and that means the MLE of \(\beta\) is the OLS estimate. One way of extending linear regression to be more flexible is to use (conditional) mixtures. Here, the graphical model looks like that of the density estimation mixture model, except we also need the \(X_n\) node (which may or may not be connected to the mixture node \(Z_n\)). And, of course, we could always treat these from a Bayesian perspective, e.g., by placing a Gaussian prior on \(\beta\) and, if the noise variance is unknown, a conjugate (inverse-gamma) prior on the variance of the Gaussian error \(\epsilon_n\), whose mean is usually fixed at zero.
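A small sketch, for one input plus an intercept, of why the Gaussian-noise MLE is the OLS estimate: the Gaussian log likelihood is a constant minus the sum of squared residuals divided by \(2\sigma^2\), so maximizing one minimizes the other. The data and the fixed \(\sigma = 1\) are made up for illustration.

```python
import math


def ols_fit(xs, ys):
    """Closed-form OLS for y = b0 + b1 * x."""
    n = len(xs)
    xbar, ybar = sum(xs) / n, sum(ys) / n
    sxy = sum((x - xbar) * (y - ybar) for x, y in zip(xs, ys))
    sxx = sum((x - xbar) ** 2 for x in xs)
    b1 = sxy / sxx
    return ybar - b1 * xbar, b1


def gaussian_log_likelihood(b0, b1, xs, ys, sigma=1.0):
    """log p(y | x, beta) under y = b0 + b1 * x + eps, eps ~ N(0, sigma^2)."""
    rss = sum((y - b0 - b1 * x) ** 2 for x, y in zip(xs, ys))
    n = len(xs)
    return -0.5 * n * math.log(2 * math.pi * sigma ** 2) - rss / (2 * sigma ** 2)


xs = [0.0, 1.0, 2.0, 3.0]
ys = [1.1, 2.9, 5.2, 6.8]
b0, b1 = ols_fit(xs, ys)
print(b0, b1)  # approximately 1.09 and 1.94
best = gaussian_log_likelihood(b0, b1, xs, ys)
for d0, d1 in [(0.1, 0.0), (0.0, 0.1), (-0.05, 0.05)]:
    # any perturbation of the OLS solution lowers the Gaussian log likelihood
    print(gaussian_log_likelihood(b0 + d0, b1 + d1, xs, ys) < best)  # True
```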

We can also use nonparametric regression if we do not want to restrict the form of our conditional mean functions. Russell and Norvig cover this a bit in the nonparametric methods section of their textbook: each new prediction \(y\) is a weighted combination of the \(y_n\)s whose \(x_n\)s are “nearest” to the query point.

In the classification case, the distinction between generative and discriminative models is more apparent. I remember which way the arrows point by remembering the discriminative case (\(X_n \to Y_n\)) and then realizing that the generative case is the opposite. Use the generative approach if we want a full probabilistic model, and use discriminative classification if we only care about the decision boundary. The full model in the generative case also may help combat overfitting, so it is better with limited and partially observed data. Discriminative models have less bias because they make fewer assumptions, so they work better with lots of data (in fact, it’s a lot like how nearest neighbor will work best with lots of data).

These approaches are important for understanding the logistic regression algorithm, where we assume that the posterior probability \(p(y=0\mid x, \theta)\) for a binary classification problem has the logistic form (or arrives at that form), with \(\theta^Tx\) inside the sigmoid. Because the posterior depends on \(x\) only through that inner product, the “boundaries” of equal posterior probability are hyperplanes. In the generative case, we estimate means and covariances, which define \(\theta\) (and these are density estimation problems!) and thus the boundary implicitly, while in the discriminative case, we estimate \(\theta\) “directly,” without modeling the class-conditional densities. The standard pairing is “discriminative = logistic regression” and “generative = Naive Bayes,” and both are for classification. In fact, that’s why they are in the same chapter of Mike Jordan’s notes!

Again, logistic regression assumes we have the sigmoid function as the form for our posterior probability. We can assume this from the outset (discriminative), but we can also “inspire” this generatively. Here’s how: assume that we have two classes, and the class conditionals[1] are Gaussian with, and this is important, the same covariance matrix. Then the posterior \(P(Y = 0 \mid X, \theta)\) can be expressed as \((1+e^{-\beta^Tx - \gamma})^{-1}\), i.e., the exponent is an affine function of \(x\), which means that the boundaries of equal probability are hyperplanes. In the special case of equal mixing proportions, the \(P = 1/2\) boundary passes through the midpoint of the two means. A skewed mixing proportion shifts the boundary away from the more probable class, toward the less probable one.
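A one-dimensional numeric check of this claim, assuming class conditionals \(\mathcal{N}(\mu_0, \sigma^2)\) and \(\mathcal{N}(\mu_1, \sigma^2)\) with a shared variance and mixing proportions \(\pi_0, \pi_1 = 1 - \pi_0\). Working out the log ratio of the two weighted densities gives \(\beta = (\mu_0 - \mu_1)/\sigma^2\) and \(\gamma = (\mu_1^2 - \mu_0^2)/(2\sigma^2) + \log(\pi_0/\pi_1)\); the sketch compares that sigmoid with the posterior computed directly from Bayes’ rule. The numbers are arbitrary.

```python
import math


def normal_pdf(x, mu, var):
    return math.exp(-(x - mu) ** 2 / (2 * var)) / math.sqrt(2 * math.pi * var)


def posterior_bayes(x, mu0, mu1, var, pi0):
    """P(Y = 0 | x) computed directly from Bayes' rule."""
    p0 = pi0 * normal_pdf(x, mu0, var)
    p1 = (1 - pi0) * normal_pdf(x, mu1, var)
    return p0 / (p0 + p1)


def logistic_params(mu0, mu1, var, pi0):
    """beta and gamma such that P(Y = 0 | x) = 1 / (1 + exp(-(beta * x + gamma)))."""
    beta = (mu0 - mu1) / var
    gamma = (mu1 ** 2 - mu0 ** 2) / (2 * var) + math.log(pi0 / (1 - pi0))
    return beta, gamma


def posterior_sigmoid(x, beta, gamma):
    return 1.0 / (1.0 + math.exp(-(beta * x + gamma)))


mu0, mu1, var = 0.0, 2.0, 1.0
for pi0 in (0.5, 0.8):
    beta, gamma = logistic_params(mu0, mu1, var, pi0)
    boundary = -gamma / beta  # where P(Y = 0 | x) = 1/2
    print(pi0, boundary, posterior_bayes(0.7, mu0, mu1, var, pi0),
          posterior_sigmoid(0.7, beta, gamma))
```

With \(\pi_0 = 0.5\) the boundary sits at the midpoint \(x = 1\); with \(\pi_0 = 0.8\) it moves past the midpoint toward \(\mu_1\), enlarging the region assigned to the more probable class 0.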

In fact, the assumption of a Gaussian class conditional is not even necessary. We can get away with multinomials (this is another way of viewing the Naive Bayes classifier), or in fact, anything in the exponential family[2]! When I was learning about these in my undergraduate Bayesian statistics course, I never really got why the exponential families were that important. But here is one reason, I suppose. Note that these are still assumptions that add bias to the generative case.

We can extend the previous analysis to the general classification case with \(K\) classes. In that case, we use the softmax, \(P(Y = i \mid x, \theta) = e^{\beta_i^Tx}/\sum_j e^{\beta_j^Tx}\), which also results in linear boundaries: the boundary between classes \(i\) and \(j\) is where \(\beta_i^Tx = \beta_j^Tx\), so the input space gets carved into regions like the “slices” of a pie chart. Also, if we wanted maximum likelihood estimates in the generative setting, we could find them, because we have \(P(x\mid y,\theta)\) and \(P(y\mid \theta)\). Just combine those to get the joint and differentiate the log of it. For instance, in the two-Gaussian case, the MLEs for the means \(\mu_1\) and \(\mu_2\) are just the sample means of the elements in their respective classes (remember, we assume we know the training data labels), and the shared covariance is the pooled estimate, a weighted average of the two per-class sample covariances with weights proportional to class size. In the general case, we again write the formula and then separate the terms appropriately. Note: we will use \(\theta\) to represent a generic vector of weights. To be safe, whenever we write probabilities, add a conditioned \(\theta\).
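A small sketch of the two computational pieces of this paragraph in plain Python (the function names are mine): a numerically stable softmax, which for \(K = 2\) reduces to a sigmoid of the difference of the two scores, and the two-class Gaussian MLEs in one dimension, where the shared variance is the pooled estimate.

```python
import math


def softmax(scores):
    """Softmax of a list of scores beta_i^T x; subtracting the max avoids overflow."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    z = sum(exps)
    return [e / z for e in exps]


def fit_shared_variance_gaussians(x0s, x1s):
    """1-D MLEs for two classes with a shared variance (labels assumed known)."""
    n0, n1 = len(x0s), len(x1s)
    mu0, mu1 = sum(x0s) / n0, sum(x1s) / n1
    # Pooled variance: per-class MLE variances weighted by class size
    var = (sum((x - mu0) ** 2 for x in x0s) + sum((x - mu1) ** 2 for x in x1s)) / (n0 + n1)
    pi0 = n0 / (n0 + n1)
    return mu0, mu1, var, pi0


print(softmax([1.0, 2.0, 3.0]))
a, b = 0.3, -1.2
print(softmax([a, b])[0], 1 / (1 + math.exp(-(a - b))))  # equal up to rounding
print(fit_shared_variance_gaussians([0.0, 2.0], [4.0, 6.0]))  # (1.0, 5.0, 1.0, 0.5)
```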

Whew! Now we can talk about logistic regression, where the posterior class probability is fixed to be a sigmoid function. How do we find the best \(\theta\)? As usual, take logs, and maximize. This actually leads us to an LMS-like algorithm, \(\theta \leftarrow \theta + \rho(y_n - \mu_n)x_n\), and the only difference from LMS is that the prediction \(\theta^Tx_n\) is replaced by the conditional expectation of the class label, \(\mu_n = \mathbb{E}[y_n \mid x_n, \theta]\), which passes \(\theta^Tx_n\) through the sigmoid. For the batch version, we use iteratively reweighted least squares, which is Newton’s method applied to the (concave) log likelihood. In fact, there is a close connection between this method and the “normal” weighted least squares method, which started by assuming that each training input/output pair had an attached “weight”: this method can be written as

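\[\theta \leftarrow (X^TWX)^{-1}X^TWz\]

for what I thought was a pretty convoluted \(z\), but it actually turns out to be a linearization: \(z = X\theta + W^{-1}(y - \mu)\), where \(W\) is diagonal with entries \(\mu_n(1-\mu_n)\), which is the first-order approximation of the logit of \(y\) around the current fit. Interesting … I don’t really understand the full details of this, but having the knowledge of convex optimization at the top of this post really helped me.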
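A minimal IRLS sketch for one feature plus an intercept, in plain Python with the \(2 \times 2\) system solved by hand. It uses the convention \(\mu_n = P(y_n = 1 \mid x_n, \theta) = \sigma(\theta^Tx_n)\) with labels in \(\{0, 1\}\). The data set is made up (and deliberately not linearly separable, so the MLE is finite), and the fixed iteration count is an assumption rather than a proper convergence test.

```python
import math


def irls_logistic(xs, ys, iters=25):
    """Fit P(y = 1 | x) = sigmoid(theta0 + theta1 * x) by IRLS (i.e., Newton's method)."""
    theta = [0.0, 0.0]
    for _ in range(iters):
        a11 = a12 = a22 = b1 = b2 = 0.0
        for x, y in zip(xs, ys):
            eta = theta[0] + theta[1] * x
            mu = 1.0 / (1.0 + math.exp(-eta))
            w = mu * (1.0 - mu)          # diagonal entry of W
            z = eta + (y - mu) / w       # working response: linearized logit of y
            # Accumulate X^T W X and X^T W z for the feature vector (1, x)
            a11 += w
            a12 += w * x
            a22 += w * x * x
            b1 += w * z
            b2 += w * x * z
        det = a11 * a22 - a12 * a12
        # theta <- (X^T W X)^{-1} X^T W z
        theta = [(a22 * b1 - a12 * b2) / det, (a11 * b2 - a12 * b1) / det]
    return theta


xs = [0.0, 1.0, 2.0, 3.0, 4.0, 5.0]
ys = [0, 0, 1, 0, 1, 1]
theta = irls_logistic(xs, ys)
print(theta)
```

At the MLE, the gradient of the log likelihood, \(\sum_n (y_n - \mu_n)x_n\), should be (numerically) zero, which is a quick sanity check on the fit.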

For extending discriminative learning to multiple classes, again assume that \(P(Y = k \mid X,\theta)\) is represented by the softmax function, and a lot of our math follows for what is known as softmax regression.

Finally, thanks to Andrew Ng, I have a better idea of the connection between the logistic regression update (in the LMS-like form) and the perceptron: just replace the sigmoid in the update with a hard threshold (the “sign” function, or a 0/1 step function if the labels are 0 and 1), and the update turns into the perceptron.
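A side-by-side sketch of the two stochastic updates for labels in \(\{0, 1\}\) (the function names and the learning rate `rho` are illustrative): the only difference is whether the prediction passes through the sigmoid or through a hard threshold.

```python
import math


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def logistic_update(theta, x, y, rho):
    """LMS-like logistic regression step: theta += rho * (y - sigmoid(theta^T x)) * x."""
    mu = 1.0 / (1.0 + math.exp(-dot(theta, x)))
    return [t + rho * (y - mu) * xi for t, xi in zip(theta, x)]


def perceptron_update(theta, x, y, rho):
    """Same form, with the sigmoid replaced by a 0/1 threshold."""
    y_hat = 1 if dot(theta, x) >= 0 else 0
    return [t + rho * (y - y_hat) * xi for t, xi in zip(theta, x)]


theta, x = [0.0, 1.0], [1.0, 2.0]
print(perceptron_update(theta, x, 1, 0.5))  # [0.0, 1.0]: correct prediction, no change
print(perceptron_update(theta, x, 0, 0.5))  # [-0.5, 0.0]: mistake, move away from x
print(logistic_update(theta, x, 1, 0.5))    # small nudge even though the point is classified correctly
```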

More Statistical Learning Theory

Here’s a random assortment of notes from Mike Jordan’s book (which I think he has abandoned now).

First, the multivariate Gaussian is one of the most important distributions to understand, and I did not have an easy time learning about it. Fortunately, by now I can write out the formula and reason about it quite easily. Unfortunately, I don’t know how to derive it from first principles. I can explain “roughly” what it does, e.g., that the \(|\Sigma|^{1/2}\) in the normalizing constant comes from the fact that along each eigenvector of \(\Sigma\), the distribution has variance equal to the corresponding eigenvalue, and the determinant of a matrix is the product of its eigenvalues.

But anyway, there are a few important facts worth discussing about the multivariate Gaussian.

- There is a moment parameterization and a canonical parameterization. The former is what I always use, but we can transform it into the latter with the rules \(\Lambda = \Sigma^{-1}\) and \(\eta = \Sigma^{-1}\mu\) to get \(p(X\mid \eta, \Lambda)\).

- Given a matrix \(M\) that we partition into blocks \(E,F,G,\) and \(H\), the goal of block diagonalization is to find matrices \(A\) and \(B\) such that \(AMB\) is block diagonal, i.e., has zeros in the locations of \(F\) and \(G\). After a lot of algebra, we can arrive at the inverse of the partitioned matrix \(M\), and also derive a bunch of useful identities (the “matrix inversion lemma”) that I refuse to memorize.

- The reason why we go through this tedious algebra is that it gives us identities we can use when partitioning the multivariate Gaussian to get formulas for marginal and conditional probabilities. Specifically, we have \(x\in \mathbb{R}^n\) split into \(x_1\) and \(x_2\), and we want \(p(x_2)\) and \(p(x_1\mid x_2)\), where I’m eliding the parameters for simplicity. We obviously have the joint \(p(x_1,x_2)\), so we need to figure out how to split it cleverly. Once we’ve gone through the derivation, we will find that the moment parameterization lends itself to easy computations of marginals but hard ones for conditionals, and the reverse is true for the canonical parameterization. Importantly, the resulting marginals and conditionals are again Gaussian.
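A two-dimensional sketch (scalar \(x_1\) and \(x_2\)) of the partitioned formulas, using the standard results: in moment form, \(p(x_2) = \mathcal{N}(\mu_2, \Sigma_{22})\), and \(p(x_1 \mid x_2)\) has mean \(\mu_1 + \Sigma_{12}\Sigma_{22}^{-1}(x_2 - \mu_2)\) and variance \(\Sigma_{11} - \Sigma_{12}\Sigma_{22}^{-1}\Sigma_{21}\); in canonical form, conditioning just reads off \(\Lambda_{11}\) and \(\eta_1 - \Lambda_{12}x_2\). The numbers are arbitrary; the point is that both routes agree.

```python
def moment_to_canonical(mu, S):
    """Lambda = S^{-1}, eta = S^{-1} mu, for a 2x2 covariance S."""
    (s11, s12), (s21, s22) = S
    det = s11 * s22 - s12 * s21
    lam = [[s22 / det, -s12 / det], [-s21 / det, s11 / det]]
    eta = [lam[0][0] * mu[0] + lam[0][1] * mu[1], lam[1][0] * mu[0] + lam[1][1] * mu[1]]
    return eta, lam


def marginal_x2_moment(mu, S):
    """Marginal of x2 in moment form: just read off the block."""
    return mu[1], S[1][1]


def conditional_x1_moment(mu, S, x2):
    """Mean and variance of x1 given x2, via the moment (Schur complement) formulas."""
    mean = mu[0] + S[0][1] / S[1][1] * (x2 - mu[1])
    var = S[0][0] - S[0][1] * S[1][0] / S[1][1]
    return mean, var


def conditional_x1_canonical(eta, lam, x2):
    """Same conditional in canonical form: Lambda_{1|2} = Lambda_11, eta_{1|2} = eta_1 - Lambda_12 x2."""
    lam_c = lam[0][0]
    eta_c = eta[0] - lam[0][1] * x2
    return eta_c / lam_c, 1.0 / lam_c  # converted back to (mean, variance)


mu = [1.0, 2.0]
S = [[2.0, 1.0], [1.0, 3.0]]
eta, lam = moment_to_canonical(mu, S)
print(marginal_x2_moment(mu, S))                 # (2.0, 3.0)
print(conditional_x1_moment(mu, S, 4.0))         # mean 5/3, variance 5/3
print(conditional_x1_canonical(eta, lam, 4.0))   # same, up to rounding
```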

In addition to knowing that the marginals and the conditionals are Gaussian, it is worth remembering that a sum of independent Gaussians is Gaussian.

We can extend the mixture model discussion from the last section to the multivariate Gaussian setting, where the hidden variables indicate which multivariate Gaussian generated each point. Here, we have \(p(x\mid \theta) = \sum_i \pi_i \mathcal{N}(x\mid \mu_i, \Sigma_i)\), and assuming IID points, we want to find the \(\pi\), \(\mu\), and \(\Sigma\) parameters that maximize the log likelihood. This is usually done with Expectation-Maximization, which involves computing the probability that a particular component generated point \(x\), which is of obvious interest for classification. (This works because each hidden variable is an indicator, so its conditional expectation equals its conditional probability of being one.) One can also think of K-Means as a simplified version of EM. We use EM rather than maximizing the likelihood directly because our log likelihood has a sum inside the log, reflecting the probabilities of the point belonging to each of the possible components. In the previous section (on classification), we knew the class, so we effectively keep only one term in that summation, and the MLE follows easily.

One thing I didn’t quite realize earlier was that in EM for Gaussians, we can take the log likelihood, differentiate it with respect to \(\pi_i\) (or \(\mu_i\) or \(\Sigma_i\)), and end up with equations that match the EM updates. That’s interesting, and it suggests that our “heuristic” update formulas are not so bad: their fixed points satisfy the stationarity conditions of the log likelihood (the equations are coupled, though, so they don’t give a closed-form solution). Of course, one can also derive the update formulas “systematically” by appealing to the expected complete log likelihood, where we take expectations with respect to the hidden variables. (See my previous post for more information about this quantity.)

The E-step in general involves computing the expected complete log likelihood (which amounts to computing the posterior over the hidden variables), and the M-step in general involves maximizing the expected complete log likelihood with respect to \(\theta\). The full power of this terminology is not needed in the simple Gaussian example, but it is a useful exercise to ensure that we derive the same update formulas we developed “heuristically.” In general, the expected complete log likelihood does not suffer from the same “coupling” of variables as the original log likelihood.
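A minimal EM sketch for a one-dimensional mixture of Gaussians in plain Python (the data, initialization, and fixed iteration count are made up for illustration). The E-step computes the responsibilities \(\tau_{nk} = P(z_n = k \mid x_n, \theta)\), which are exactly the expected values of the indicator variables, and the M-step plugs them into weighted versions of the usual MLE formulas. It also records the log likelihood, which EM should never decrease.

```python
import math


def normal_pdf(x, mu, var):
    return math.exp(-(x - mu) ** 2 / (2 * var)) / math.sqrt(2 * math.pi * var)


def em_gmm_1d(xs, pis, mus, variances, iters=50):
    """EM for a 1-D Gaussian mixture; returns final parameters and the log-likelihood trace."""
    n, k = len(xs), len(pis)
    log_liks = []
    for _ in range(iters):
        # E-step: responsibilities tau[i][j] = P(z_i = j | x_i, theta)
        taus = []
        ll = 0.0
        for x in xs:
            weighted = [p * normal_pdf(x, m, v) for p, m, v in zip(pis, mus, variances)]
            total = sum(weighted)
            ll += math.log(total)
            taus.append([w / total for w in weighted])
        log_liks.append(ll)
        # M-step: weighted MLEs using the responsibilities as soft counts
        nk = [sum(t[j] for t in taus) for j in range(k)]
        pis = [nk[j] / n for j in range(k)]
        mus = [sum(t[j] * x for t, x in zip(taus, xs)) / nk[j] for j in range(k)]
        variances = [sum(t[j] * (x - mus[j]) ** 2 for t, x in zip(taus, xs)) / nk[j]
                     for j in range(k)]
    return pis, mus, variances, log_liks


data = [-2.1, -1.9, -2.0, -2.2, -1.8, 3.0, 3.2, 2.8, 3.1, 2.9]
pis, mus, variances, log_liks = em_gmm_1d(data, [0.5, 0.5], [-1.0, 1.0], [1.0, 1.0])
print(pis, mus, variances)
```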

Finally, we consider the “mixture of experts” case, which is when we have a mixture model for the purposes of regression or classification. Mike Jordan’s notes appear to be missing some figures, so it’s a little hard to see what he’s trying to do, but I think the first figure represents a “V”-shaped set of data, and we need to fit two different regressions to it. The key is figuring out where to split, which is our “EM-like” task. In the mixture of experts, the M-step breaks into separate maximization problems, one for the gating network and one for the experts.

Logic and Planning

I discussed this earlier and had a chance to re-read all of that stuff. My main purpose in this section is to highlight how everything in this section connects. I don’t want to just learn propositional logic, then first order logic, etc.; I want to describe them in terms of each other, and to discuss all the similarities and differences among them (and the algorithms they inspire). But this won’t be long because Stuart Russell isn’t on the prelim committee this time (hint hint…).

But first, a laundry list of facts that really confused me the first time:

- Literals are the building blocks of clauses: a literal is an atomic sentence (a single proposition symbol), possibly with a “negation” symbol in front. That’s it: think of literals as either \(A\) or \(\neg A\).

- Predicates are really functions that output a True or a False. Predicates are – in my opinion – the backbone of first order logic.

- Be sure to realize that \(\alpha \Rightarrow \beta\) is the same thing as \(\neg \alpha \vee \beta\). This is probably the most important thing to remember to understand Horn and definite clauses, and why we can apply Modus Ponens to them.

Now we can talk about the connections. Here they are:

- One can convert from first order logic to propositional logic (propositionalization) by instantiating universal and existential quantifiers.

- Forward and backward chaining play a role in both propositional and first order logic. They are algorithms for determining entailment when we assume that our knowledge base consists of Horn clauses (prop.) or first order definite clauses (FOL). This is a simplifying assumption, but it is often easy to convert databases to this format. The reason why Horn or definite clauses are needed is that a definite clause is a disjunction of literals with exactly one positive literal, such as \(\neg p_1 \vee \cdots \vee \neg p_k \vee q\), which is equivalent to the implication \((p_1 \wedge \cdots \wedge p_k) \Rightarrow q\), and that exactly fits the format of the Modus Ponens and Generalized Modus Ponens rules. Note: we use these when we do not want to use the full power of resolution.

- As an alternative, say we do not have definite clauses and are just looking for a satisfying assignment to a conjunction of clauses (i.e., a sentence in CNF). Then in both types of logic, we have the option of backtracking and local search. Both of these have close analogues in the Constraint Satisfaction Problem domain. In backtracking search, we have similar versions of the “minimum remaining values” and “least constraining value” heuristics. In local search, we start with a full, though not typically satisfying, assignment to a problem in CNF form, and we repeatedly pick a variable in an unsatisfied clause and flip it; this is the same as in CSPs when we start with a full assignment and use the min-conflicts heuristic to adjust values.

- PDDL (Chapter 10) is a simplified language that uses first-order logic “materials” (e.g., predicates, quantifiers, etc.) to encode a search problem (remember Chapter 3!)[3]. Since we’re encoding a search problem, we need to define the actions we can take, and those must have preconditions and effects, which involve adding or removing some fluents. A fluent, by the way, is a ground atomic sentence whose truth value can change over time, and a state is represented as a conjunction of fluents. Again, the really important thing to know about Chapter 10 is that it is really another case of the general search problems. One can also make plans using a logical agent.

- Knowledge representation (Chapter 12) is all about encoding “real-world” stuff in first order logic. Our strategy to represent these is formally called ontological engineering. The chapter discusses categorizing objects, categories (make them into objects!), and events.

- Let’s go over the different kinds of algorithms:

- Backtracking search: when we incrementally look for assignments to stuff, and then “backtrack” when we have seen some “problems”, e.g., impossible situations (and this can be used for entailment as well!). There are heuristics for this. We do this in CSPs and searching for satisfying assignments in propositional logic. We can also transform a classical planning case to a propositional case and turn it over to the backtracking solver, but this is not practical.

- Local search: we start with a complete assignment, and move variables around until we get to a solution. We do this with CSPs and propositional logic.

- Forward chaining and backward chaining are algorithms for deciding entailment in the two logics. We do not use these in CSPs or classical planning. The FOL case is more complicated due to the need to perform unification (among other factors), but we have general heuristics for improving them.

- In PDDL, we do forward (progression) search and backward (regression) search to look for a sequence of actions that reaches the goal. Forward search is similar to backtracking search in that we can search over actions with heuristics and backtrack if needed. Backward search can avoid irrelevant states, though.
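To make two of these algorithms concrete, here is a plain-Python sketch (my own simplified versions, not code from the book) of propositional forward chaining over definite clauses, using a count of unsatisfied premises per clause, and of WalkSAT-style local search over CNF clauses written as lists of signed integers (`3` means \(x_3\), `-3` means \(\neg x_3\)). The parameters `p`, `max_flips`, and `seed` are illustrative.

```python
import random
from collections import deque


def fc_entails(clauses, facts, query):
    """Forward chaining. Each clause is (premises, conclusion), i.e. (p1 and ... and pk) => q."""
    count = [len(set(premises)) for premises, _ in clauses]  # unsatisfied premises per clause
    inferred = set()
    agenda = deque(facts)
    while agenda:
        p = agenda.popleft()
        if p == query:
            return True
        if p in inferred:
            continue
        inferred.add(p)
        for i, (premises, conclusion) in enumerate(clauses):
            if p in premises:
                count[i] -= 1
                if count[i] == 0:          # all premises known: Modus Ponens fires
                    agenda.append(conclusion)
    return False


def walksat(clauses, n_vars, p=0.5, max_flips=10_000, seed=0):
    """Local search for a satisfying assignment of a CNF; returns a model or None."""
    rng = random.Random(seed)
    model = {v: rng.random() < 0.5 for v in range(1, n_vars + 1)}

    def satisfied(clause):
        return any(model[abs(lit)] == (lit > 0) for lit in clause)

    def num_unsat_after_flip(v):
        model[v] = not model[v]
        n = sum(not satisfied(c) for c in clauses)
        model[v] = not model[v]
        return n

    for _ in range(max_flips):
        unsat = [c for c in clauses if not satisfied(c)]
        if not unsat:
            return model
        clause = rng.choice(unsat)
        if rng.random() < p:
            var = abs(rng.choice(clause))  # random-walk move
        else:
            # greedy move (min-conflicts flavor): flip whatever leaves the fewest unsatisfied clauses
            var = min((abs(lit) for lit in clause), key=num_unsat_after_flip)
        model[var] = not model[var]
    return None


kb = [(["P"], "Q"), (["L", "M"], "P"), (["B", "L"], "M"), (["A", "P"], "L"), (["A", "B"], "L")]
print(fc_entails(kb, ["A", "B"], "Q"))  # True
cnf = [[1, 2], [-1, 3], [-2, -3], [1, -3]]
print(walksat(cnf, 3))
```

Note that WalkSAT can only ever report “satisfiable”: returning `None` after `max_flips` does not prove that no model exists.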

[1] These are \(p(x\mid y)\) because we are conditioning on the class \(y\).

[2] A distribution that can be expressed as \(p(x\mid \eta) = h(x) \exp\{\eta^Tx - a(\eta)\}\) is in the exponential family.

[3] The book never really makes this clear, but PDDL is not actually First Order Logic; it reminds me of it because the syntax was apparently designed to be similar.

Miscellaneous Prelim Review (Part 1)

Here is a random assortment of notes I created to wrap up some of the remaining material I need to know. It’s “part 1” because I have another part coming up later.

Information Theory

This part, Chapter 2 from Cover and Thomas, is a bunch of definitions and straightforward theorems (i.e., those that follow directly from definitions):

- Entropy: \(H(X) = -\sum_{x} p(x)\log_2 p(x) = -\mathbb{E}[\log_2 p(X)]\), where \(x\) is a realization of variable \(X\). It’s the amount of uncertainty inherent in a random variable. If we get to choose the distribution of \(X\) over a fixed set of values, the entropy is highest if we make the distribution uniform, and lowest if we make it “peaked.” In the extreme case, if we set \(Pr(X = 0) = 1\), then \(H(X) = 0\).

- Mutual Information: \(I(X;Y) = H(X) - H(X\mid Y)\). It’s the decrease in the entropy of \(X\) upon learning the value of \(Y\). Note that \(I(X;X) = H(X)\) since the second term will be zero. Alternatively, we can write it as

\[I(X;Y) = \sum_x \sum_y p(x,y) \log_2 \left(\frac{p(x,y)}{p(x)p(y)}\right)\]

Note that \(I(X;Y) = I(Y;X)\), so it is symmetric.

- Relative Entropy (KL-divergence): \(D(p \,\|\, q) = \sum_{x} p(x)\log_2 \left( \frac{p(x)}{q(x)} \right)\). This is a non-symmetric measure of the difference between distributions \(p\) and \(q\). We can also interpret it as the average number of additional bits we need to encode samples from \(p\) if we use a code designed for the (inferior) approximation \(q\). It is infinity if there exists an \(x\) such that \(p(x) > 0\) but \(q(x) = 0\).

All three of the above quantities are non-negative.
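A small plain-Python sketch of the three quantities for discrete distributions given as lists (a joint distribution as a nested list with rows indexed by \(x\)), using base-2 logs so everything is in bits. The example distributions are arbitrary.

```python
import math


def entropy(p):
    """H(X) in bits; terms with p(x) = 0 contribute 0."""
    return sum(-px * math.log2(px) for px in p if px > 0)


def mutual_information(joint):
    """I(X;Y) in bits from a joint table joint[x][y]."""
    px = [sum(row) for row in joint]
    py = [sum(col) for col in zip(*joint)]
    return sum(pxy * math.log2(pxy / (px[i] * py[j]))
               for i, row in enumerate(joint)
               for j, pxy in enumerate(row) if pxy > 0)


def kl_divergence(p, q):
    """D(p || q) in bits; infinite if p puts mass where q does not."""
    total = 0.0
    for pi, qi in zip(p, q):
        if pi == 0:
            continue
        if qi == 0:
            return math.inf
        total += pi * math.log2(pi / qi)
    return total


print(entropy([0.5, 0.5]), entropy([1.0, 0.0]))          # 1.0 0.0 (uniform vs. peaked)
print(mutual_information([[0.5, 0.0], [0.0, 0.5]]))      # 1.0, since I(X;X) = H(X)
print(mutual_information([[0.25, 0.25], [0.25, 0.25]]))  # 0.0 for independent X, Y
p, q = [0.5, 0.5], [0.25, 0.75]
print(kl_divergence(p, q), kl_divergence(q, p))          # different values: not symmetric
```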

Another concept that plays a huge role in information theory is the following:

- Jensen’s inequality: for a convex function \(f\), \(f(\mathbb{E}[X]) \le \mathbb{E}[f(X)]\). For a concave function, like the logarithm, we flip the inequality (and since the log is strictly concave, equality holds only if \(X\) is constant). I find it easiest to remember this rule by expanding out the equations for binary random variables. Let’s say \(X\) takes on values 0 and 1 with probability a half each. Then we have \(f(\mathbb{E}[X]) = f(.5 \times 0 + .5 \times 1)\) and \(\mathbb{E}[f(X)] = .5 \times f(0) + .5 \times f(1)\), and can directly relate this to the definition of convexity.

Based on the previous discussion, we can define and infer things like:

-

Joint entropy \(H(X,Y) = - \sum_x \sum_y p(x,y) \log_2 p(x,y) = H(X) + H(Y\mid X)\).

-

Conditional entropy, conditional KL divergence, conditional mutual information. For the sake of simplicity, I will not write all the rules here, but here is the one for conditional entropy: \(H(Y\mid X) = -\sum_x p(x) H(Y \mid X=x) = -\mathbb{E}_p [\log_2 p(Y\mid X)]\). Note that \(H(Y\mid X) \ne H(X\mid Y)\).

-

The chain rule for entropy, relative entropy, and mutual information. Unlike normal probability, these sum the components rather than multiply, which makes sense because all three cases involve logarithms. Again, I won’t write all the rules here, but will note that entropy is the easiest to relate to probability because we literally copy formulas from probability, but use sums instead of products. For the chain rule with mutual information, just pretend we don’t have \(Y\) and follow the probability convention (but sum up). Then stick the \(Y\)’s after the semicolon, but before the conditioning bar. For KL-divergence, it’s the same (split up the joint into a marginal and product, but do this for both distributions, then use two “D” terms.

-

A theorem: \(H(X) \le \log_2|\mathcal{X}|\), where \(\mathcal{X}\) represents the range of variable \(X\), and equality here holds if and only if \(X\) is a uniform random variable.

-

Also one thing that tripped me up the first time I saw it was this consequence of Jensen’s inequality:

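\[\sum_{x \in A} p(x) \log\left( \frac{q(x)}{p(x)}\right) \le \log \left( \sum_{x \in A} p(x) \frac{q(x)}{p(x)} \right)\]where \(A\) is the support of \(p\) (the set of \(x\) with \(p(x) > 0\)). This really means

\[\mathbb{E}_p\left[\log \left(\frac{q(x)}{p(x)}\right)\right] \le \log \left( \mathbb{E}_p\left[ \frac{q(x)}{p(x)} \right] \right)\]and the right-hand side equals \(\log \sum_{x \in A} q(x) \le \log 1 = 0\), which is exactly the step used to show that \(D(p \| q) \ge 0\).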

A final thought on this section: an alternative interpretation of entropy is that it is a lower bound on the average number of bits required to represent the random variable. It’s not “the minimum number of bits” because random variables take on different values with different probabilities, so we may wish to allocate more bits to the low probability events. We also need to make it clear how we encode, so that we can compare different encodings. Example 1.1.2 from Cover and Thomas will clarify: here, we have eight horses, and they each win with some specified probability. If we wanted to encode the random variable \(H\) indicating the horse that won, we could use three bits in the standard way. But this is suboptimal if the distribution is \((1/2,1/4,1/8,1/16,1/64,1/64,1/64,1/64)\), like it is in the example, because we should allocate fewer bits to the higher probability horses, and more to the ones that are less likely to win. It’s possible to encode \(H\) so that the average number of bits to represent it is two, which exactly matches the entropy.
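Here is a small sketch checking the horse race numbers. The codewords below form one prefix-free code with lengths 1, 2, 3, 4, 6, 6, 6, 6; I believe they match the code in the book, but treat the exact bit strings as illustrative. Its average length equals the entropy of 2 bits.

```python
import math
from fractions import Fraction

# Win probabilities of the eight horses (Cover and Thomas, Example 1.1.2).
probs = [Fraction(1, 2), Fraction(1, 4), Fraction(1, 8), Fraction(1, 16),
         Fraction(1, 64), Fraction(1, 64), Fraction(1, 64), Fraction(1, 64)]
# Illustrative prefix-free code: shorter codewords for likelier horses.
codes = ["0", "10", "110", "1110", "111100", "111101", "111110", "111111"]

def is_prefix_free(codewords):
    """True if no codeword is a prefix of a different codeword."""
    return not any(a != b and b.startswith(a) for a in codewords for b in codewords)

entropy_bits = -sum(float(p) * math.log2(float(p)) for p in probs)
avg_len = sum(p * len(c) for p, c in zip(probs, codes))  # exact, as a Fraction

print(entropy_bits, avg_len, is_prefix_free(codes))  # 2.0 2 True
```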

Decision Trees

Decision trees are one of the simplest nontrivial classifiers1 that have strong performance in practical tasks. The hypothesis space is the set of trees. For each \(n\)-dimensional sample \(x\), we classify it by propagating \(x\) down the tree. At each node, we test an attribute \(x_i\), and depending on that value, send the sample left or right. Once we send it down the tree far enough, it will land in some “classifier” node that labels the class of that element.

Great, so how do we train such trees from labeled data \(\{(x^{(i)},y^{(i)})\}_{i=1}^N\) with \(x^{(i)} = (x_1,x_2,\ldots,x_n)^{(i)}\)? For that, we use an information-theoretic criterion: we want to select the attribute to test that will result in the purest possible subsets, where purity is defined based on entropy. Formally, at each point of the tree, we have a set of data and a candidate set of attributes. We pick the attribute that maximizes the information gain of the data.

Let’s precisely define this for boolean decision trees. At a decision tree’s node, we have \(p\) positive and \(n\) negative samples. The entropy of the random variable describing the output is the entropy of a binary random variable with probability \(p/(p+n)\); to simplify the subsequent notation, denote this as \(B(p/(p+n))\). Suppose attribute \(A\) splits the data into \(d\) subsets, where subset \(k\) has \(p_k\) positive and \(n_k\) negative samples. We define the gain of \(A\) as follows:

\[Gain(A) = B\left(\frac{p}{p+n}\right) - \sum_{k=1}^d \left( \frac{p_k+n_k}{p+n}\right) B\left(\frac{p_k}{p_k+n_k}\right)\]For each subset, we weigh its entropy by the probability that a sample lands in it. Otherwise, consider a useless attribute that keeps the same proportion of positive and negative examples in each of its \(d\) subsets: without the weighting, the remainder would be \(d\) copies of \(B(p/(p+n))\), so splitting on \(A\) would appear to increase the entropy of the goal predicate on the data. With the weighting, its gain is exactly zero, as it should be.
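The gain formula translates almost directly into code. A minimal sketch, assuming each node and branch is summarized by its (positive, negative) counts rather than by raw data (a representation I chose for brevity); it also reproduces the XOR situation, where each attribute alone has zero gain.

```python
import math

def B(q):
    """Entropy in bits of a Boolean variable that is true with probability q."""
    if q in (0.0, 1.0):
        return 0.0
    return -(q * math.log2(q) + (1 - q) * math.log2(1 - q))

def gain(p, n, subsets):
    """Information gain of a split.

    p, n: positive and negative counts at the node.
    subsets: list of (p_k, n_k) counts, one pair per branch.
    """
    remainder = sum((pk + nk) / (p + n) * B(pk / (pk + nk))
                    for pk, nk in subsets if pk + nk > 0)
    return B(p / (p + n)) - remainder

# XOR with one sample per input pattern: (0,0)->-, (0,1)->+, (1,0)->+, (1,1)->-
print(gain(2, 2, [(1, 1), (1, 1)]))  # 0.0: splitting on x1 alone looks useless
print(gain(1, 1, [(0, 1), (1, 0)]))  # 1.0: x2 then splits the x1=0 branch perfectly
```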

There are other impurity measures, such as the Gini impurity measure \(\sum_{k=1}^K p(x_k)(1-p(x_k))\) if the output takes on \(K\) realizations. This is not the same as the measure of income inequality! I think the “CART” category of decision trees uses the Gini measure, whereas the “C4.5” and “C5” trees use entropy to measure impurity, though I think the boundaries between those categories are a bit blurry. Misclassification error is a poor splitting criterion because, unlike Gini impurity and entropy, it does not give higher weight to splits that produce purer children: it is not strictly concave in the class proportions, so two splits can tie on misclassification error even when one of them creates a pure child.
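To see the difference concretely, here is a sketch comparing two hypothetical splits of a node with 400 positive and 400 negative samples (the counts are made up for illustration). Both splits have the same weighted misclassification error, but split B produces a pure child, and only the Gini impurity rewards that.

```python
def gini(q):
    """Gini impurity of a two-class node whose positive fraction is q."""
    return 2 * q * (1 - q)  # q(1-q) + (1-q)q

def misclass(q):
    """Misclassification error of a two-class node that predicts its majority class."""
    return min(q, 1 - q)

def weighted_impurity(impurity, subsets):
    """Size-weighted impurity of the children; subsets is a list of (pos, neg) counts."""
    total = sum(p + n for p, n in subsets)
    return sum((p + n) / total * impurity(p / (p + n)) for p, n in subsets)

split_a = [(300, 100), (100, 300)]
split_b = [(200, 400), (200, 0)]  # second child is pure
for name, split in [("A", split_a), ("B", split_b)]:
    print(name, weighted_impurity(misclass, split), weighted_impurity(gini, split))
# A: misclassification 0.25, Gini 0.375
# B: misclassification 0.25, Gini about 0.333
```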

Here are some things to think about:

-

Trees can overfit, so what happens in most realistic algorithms is that we build the large tree first, then prune away nodes with only leaf descendants that do not contribute much to the information gain (e.g., using tests of statistical significance to see if the gain is significant enough). This is not the same as stopping early while building the tree. The classic example is the XOR data. If we have a lot of XOR data that we want to split, we will find that the information gain of both attributes is zero. We do not want to stop early because the next step will involve splitting on the second part of the XOR, which splits the data perfectly.

-

In practice, information gain can overstate how useful an attribute is: an attribute that maps each element to a unique value (such as an ID number) splits the data into pure singleton subsets and so has maximal gain, yet it is useless for classifying new samples.

-

We may have missing data. A simple but bad strategy is to ignore all training data points that have missing data. An alternative is to “fill in” those values probabilistically based on the distribution of values of those variables in the other samples considered for a particular decision tree.

-

We may want to use decision trees for regression if the output is continuous-valued. One option is to use a decision tree normally up to a certain depth, and after that, fit a (linear) regression on only those data points that reach that particular leaf node, using only the attributes that have not yet been tested.

How would we train such a regression tree? HTF suggest a greedy algorithm (which also assumes continuous attributes, by the way): at each node, find the attribute and a split point that minimize the sum of squared errors of the two resulting regions. HTF also assume that once we get to a region, we will approximate the samples with just one value, rather than doing a full-blown regression on it, which makes the problem a lot easier since under the sum of squared errors criterion, that value is the mean of the samples at that node. To avoid overfitting, they suggest weakest link pruning: we iteratively pick the internal (non-leaf) node that, upon its removal (and subsequent collapsing of the tree), results in the smallest increase in the sum of squared errors criterion. This is pretty cool, and it’s similar to what Russell and Norvig describe.
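A minimal sketch of the greedy split search for a single continuous attribute, assuming each region is predicted by its mean as in HTF's setup. A full regression tree would repeat this over every attribute, keep the best (attribute, threshold) pair, recurse on both sides, and then apply weakest link pruning; none of that is shown. The helper names are hypothetical.

```python
def sse(values):
    """Sum of squared errors when every value is predicted by the mean."""
    mean = sum(values) / len(values)
    return sum((v - mean) ** 2 for v in values)

def best_split(xs, ys):
    """Return (threshold, total_sse) of the best binary split on one attribute.

    Tries a threshold halfway between each pair of consecutive distinct
    sorted x values and scores it by the SSE of the two resulting regions.
    """
    pairs = sorted(zip(xs, ys))
    best = (None, float("inf"))
    for i in range(1, len(pairs)):
        if pairs[i - 1][0] == pairs[i][0]:
            continue  # no threshold separates identical x values
        left = [y for _, y in pairs[:i]]
        right = [y for _, y in pairs[i:]]
        total = sse(left) + sse(right)
        if total < best[1]:
            best = ((pairs[i - 1][0] + pairs[i][0]) / 2, total)
    return best

print(best_split([1, 2, 3, 10, 11, 12], [1.0, 1.2, 0.8, 5.0, 5.2, 4.8]))
# threshold 6.5, SSE about 0.16
```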

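Finally, here’s a rather interesting connection between boolean decision trees and propositional logic that I failed to realize at first: we can label each path from the root to a positive leaf as \(Path_i\), where each path is the conjunction of the attribute tests along it, and so the goal is expressed as:

\[Goal \iff (Path_1 \vee Path_2 \vee \cdots )\]Thus, a decision tree is a propositional sentence in disjunctive normal form. Conversely, any function in propositional logic can be written in that form (in the worst case, one path per row of its truth table), so any such function can be expressed as a decision tree!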

Nearest Neighbor

This classifier is easy to describe: for each test point, we look at the \(k\) nearest points according to Euclidean (or other) distance metrics and classify the test point as the majority class among those \(k\). This becomes a problem in high dimensions, since “distance” becomes a less reliable measure of similarity due to a combination of (1) noisy and irrelevant features, and (2) the rather intriguing fact that the higher we go in dimensions, the farther apart our points tend to be. For uniformly distributed data in the unit hypercube \([0,1]^d\), a sub-cube that captures a fraction \(r\) of the points needs edge length \(r^{1/d}\), so to find the same \(k\) nearest neighbors we must search an ever larger fraction of the range of every coordinate as \(d\) grows.
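The hypercube argument is easy to check numerically. This sketch assumes points uniformly distributed in \([0,1]^d\) and computes the edge length \(r^{1/d}\) of a sub-cube that captures a fraction \(r = 0.01\) of them.

```python
def edge_length(fraction, dim):
    """Edge of a sub-cube that holds `fraction` of uniform points in [0, 1]^dim."""
    return fraction ** (1.0 / dim)

for d in (1, 2, 10, 100):
    print(d, round(edge_length(0.01, d), 3))
# 1 0.01
# 2 0.1
# 10 0.631
# 100 0.955
```

So in 10 dimensions, a "neighborhood" holding just 1% of the data already spans about 63% of the range of each coordinate.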

Let’s now restrict our focus to the 1-nearest-neighbor case. On the surface, this might seem to be unreliable, since we’re only using one closest point and it might overfit the data (see examples of plots showing 1-nearest neighbor versus 5-nearest neighbor). But a famous result called the Cover-Hart Theorem tells a different story, saying that the asymptotic error rate of the 1-nearest neighbor classifier is never more than twice the error of the Bayes’ classifier (according to HTF), where the Bayes’ classifier assigns \(\arg_y \max P(y\mid X=x)\). While it sounds nice, it is an asymptotic result: the argument relies on the nearest training point converging to the test point as the training set grows, which is true in the limit, but not for a finite training set.

Here’s another interesting fact about nearest neighbors that I found surprising. Researchers used nearest neighbors to achieve the best performance (at that time) on a handwritten digit recognition problem. The digits themselves are \(16 \times 16\) grayscale images, i.e., points in \(\mathbb{R}^{256}\)-space. A classifier would have to work in high dimensional space and be invariant to rotations, scaling, etc. The way they did this was by defining manifolds in \(\mathbb{R}^{256}\)-space. For instance, there is a one-dimensional curve where points on that curve represent different rotated versions of the “3” digit2. Then there can be another curve representing a different three. One idea is to take the Euclidean distance of the two closest points \(p_1\) and \(p_2\) which lie on separate curves. Unfortunately, this may result in heavily rotated images being equivalent (the classic disaster: confusing a “6” with a “9”), so the ingenious solution is to approximate each curve by its tangent line at the original image and measure the distance between the two tangent lines instead. That’s the intuition: in reality, the “one-dimensional curves” would be manifolds taking into account additional invariance factors.

Having motivated nearest neighbors, let’s discuss some of its drawbacks. One problem is that it needs to store all the training instances, and for each new test point to classify, it needs to iterate through all of those to find the \(k\) closest neighbors. If this linear scan, \(O(Nn)\) per query for \(N\) training points in \(n\) dimensions, is unacceptable, then we can speed up the process of finding nearest neighbors with the following two strategies:

-

We can use \(k\)-d trees, or more accurately, \(n\)-d trees if our data is \(n\)-dimensional, so that we don’t confuse this with the \(k\) in the \(k\)-nearest neighbors. At each node, this tree picks a dimension \(i\) and splits the examples at their median value \(m\) along that dimension, so that all examples with \(x_i < m\) go to the left subtree, and the rest go to the right subtree. The dimensions are typically chosen based on the widest spread of values.

Thus, if we are doing 1-nearest neighbor, for a given new point \(x\), we find its nearest neighbor by querying the \(k\)-d tree to see where it would be located (i.e., it’s like we are inserting it in the tree). We proceed until we hit a leaf, and declare that as the best node found given the current information. But we have to be careful. The nearest neighbor of \(x\) might not be on the same side of a splitting hyperplane! We need to “backtrack”: on the way back up, we compare the distance between \(x\) and each node’s splitting hyperplane with the distance to the best point found so far, and if the hyperplane is closer, there may be nearest neighbor candidates on the other side, so we search that subtree too. Check the Wikipedia page for more details. They have a nice description and an animation.

The downside with \(k\)-d trees is that with many dimensions, the backtracking test rarely rules out the subtrees that could potentially contain “that nearest neighbor,” so we end up visiting most of the tree. To extend this algorithm to multiple neighbors, we maintain a list of the best candidates found so far and compare hyperplane distances against the farthest of them.

-

We can use locality sensitive hashing, which hashes “similar” values in high dimensional spaces to the same hash buckets. Then, using only the elements in the query’s bucket, we can perform exact nearest neighbor search via brute force comparisons. Since it is hard to design a single hash function that reliably puts near neighbors in the same bucket, we can try \(M\) hash functions independently to get \(M\) buckets, then take the union of all those elements to arrive at the set which we will use for exact comparisons. Russell and Norvig seem to suggest that each of those \(M\) hash functions be a projection down to a line, with the buckets being segments of that line. I guess that makes sense.

The downside with locality sensitive hashing is that it, unlike the use of \(k\)-d trees, is an approximate nearest neighbors search.
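Below is a compact sketch of a \(k\)-d tree for 1-nearest neighbor with the backtracking step, assuming points are tuples of floats and Euclidean distance. It follows the median split and widest-spread heuristic described above; the class and function names are my own, and it has no support for incremental insertion. The usage checks it against brute force.

```python
import math
import random

class Node:
    """One k-d tree node: a stored point, its splitting axis, and two subtrees."""
    def __init__(self, point, axis, left, right):
        self.point = point
        self.axis = axis
        self.left = left
        self.right = right

def build(points):
    """Build a k-d tree, splitting at the median of the widest-spread dimension."""
    if not points:
        return None
    dims = range(len(points[0]))
    axis = max(dims, key=lambda a: max(p[a] for p in points) - min(p[a] for p in points))
    points = sorted(points, key=lambda p: p[axis])
    mid = len(points) // 2
    return Node(points[mid], axis, build(points[:mid]), build(points[mid + 1:]))

def nearest(node, query, best=None):
    """1-nearest neighbor of `query` under Euclidean distance, with backtracking."""
    if node is None:
        return best
    if best is None or math.dist(query, node.point) < math.dist(query, best):
        best = node.point
    diff = query[node.axis] - node.point[node.axis]
    near, far = (node.left, node.right) if diff < 0 else (node.right, node.left)
    best = nearest(near, query, best)
    # Backtrack: the far side can only contain a closer point if the splitting
    # hyperplane is closer to the query than the best point found so far.
    if abs(diff) < math.dist(query, best):
        best = nearest(far, query, best)
    return best

random.seed(0)
pts = [(random.random(), random.random()) for _ in range(200)]
tree = build(pts)
q = (0.5, 0.5)
print(nearest(tree, q) == min(pts, key=lambda p: math.dist(p, q)))  # True
```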

Nearest neighbors has an interesting connection with perceptrons. Kernelized perceptrons learn in a similar way to nearest neighbors, especially with Gaussian kernels, which place a Gaussian “bump” around each stored training point. In other words, a kernelized perceptron classifies by a weighted vote of similarities to training points, much like distance-weighted nearest neighbors. Nearest neighbors, unlike plain (non-kernelized) perceptrons, can use fancy similarity functions, as exemplified by the handwritten digit recognition example.

HTF also emphasize the connection between nearest neighbors and least squares.

(Artificial) Neural Networks

Neural networks are a natural extension of the perceptron that I’ve written about in detail before, since perceptrons form the basic building blocks for each node. Like the perceptron (and regression, for that matter), we develop a classifier by updating weights. For neural networks, we use backpropagation to update the weights. At a high level, this means for each training instance, we “feed” it to the network so that it classifies it. Then, we propagate the “error” backwards through the network to update weights. The weight update for those connecting to the output layer is the same as that of logistic regression3, assuming we’re using the sigmoid nonlinear function. The real challenge comes when we compute weight updates for those connecting input or hidden layers to other hidden layers. But in fact, the gradient for the loss at inner nodes is computed the same way as the gradient at the output layer, except we apply the chain rule multiple times. I’m not going to write the derivation here since it would take too much time; I just did it by hand.
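Since I skipped the derivation, here is a small NumPy sketch that at least checks it numerically. It assumes a network with one hidden layer, sigmoid units everywhere, no bias terms, and the loss \(\tfrac12 (y - \text{out})^2\) (my choices for brevity). The backpropagated gradients are compared against central finite differences.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def loss_and_grads(W1, W2, x, y):
    """Forward and backward pass for a 1-hidden-layer sigmoid network.

    Assumptions (for brevity): no bias terms, loss = 0.5 * sum((y - out)^2).
    Returns the loss and its gradients (gradient descent subtracts these).
    """
    h = sigmoid(W1 @ x)                   # hidden activations
    out = sigmoid(W2 @ h)                 # output activations
    loss = 0.5 * np.sum((y - out) ** 2)
    # Output layer: same form as the logistic-regression update.
    delta_out = (out - y) * out * (1 - out)
    grad_W2 = np.outer(delta_out, h)
    # Hidden layer: the chain rule pushes delta_out back through W2.
    delta_hidden = (W2.T @ delta_out) * h * (1 - h)
    grad_W1 = np.outer(delta_hidden, x)
    return loss, grad_W1, grad_W2

def numerical_grad(f, W, eps=1e-6):
    """Central-difference estimate of df/dW, one entry at a time."""
    grad = np.zeros_like(W)
    for idx in np.ndindex(W.shape):
        W_plus, W_minus = W.copy(), W.copy()
        W_plus[idx] += eps
        W_minus[idx] -= eps
        grad[idx] = (f(W_plus) - f(W_minus)) / (2 * eps)
    return grad

rng = np.random.default_rng(0)
W1 = rng.normal(size=(3, 2))  # 2 inputs -> 3 hidden units
W2 = rng.normal(size=(1, 3))  # 3 hidden units -> 1 output
x, y = np.array([1.0, -0.5]), np.array([1.0])

loss, g1, g2 = loss_and_grads(W1, W2, x, y)
num1 = numerical_grad(lambda W: loss_and_grads(W, W2, x, y)[0], W1)
num2 = numerical_grad(lambda W: loss_and_grads(W1, W, x, y)[0], W2)
print(np.max(np.abs(num1 - g1)), np.max(np.abs(num2 - g2)))  # both should be tiny
```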

Neural networks have an intriguing property: provided there are sufficiently many hidden nodes, even a network with a single hidden layer can approximate any continuous function (of the input, on a bounded domain) with arbitrarily high accuracy. The key ingredient is at least one hidden layer with non-linear activation functions. Otherwise, if a NN just has an input layer directly connected to an output layer, it fails to learn even a simple XOR function.

There are many extensions to NNs. We could use recurrent NNs, convolutional NNs (popular for computer vision now), etc. We can use activation functions other than sigmoids, such as ReLUs, which mitigate the “vanishing gradient” problem of sigmoids. Note that we focus on the problem of learning from a fixed structure, i.e., like parameter estimation for a graphical model with the nodes and edges fixed. Learning the structure is much more complicated.

Principal Components Analysis

Principal Components Analysis (PCA) is a way of mapping high dimensional data into a reduced dimensional space, where the reduction is a “best approximation” of the original data. Formally, if \(x_i \in \mathbb{R}^n\) but we really think they lie in \(\mathbb{R}^k\) where \(k \ll n\), then there is probably a process such that \(x_i = \Lambda z_i + \mu_i\) for \(z_i \in \mathbb{R}^k\), where \(\mu_i \in \mathbb{R}^n\) is some added noise.

Obviously, there are many advantages to dimensionality reduction, so the question is how we do this in a sound way. PCA does this by iteratively choosing the direction (a unit vector) that preserves as much of the variance in the data as possible, among directions orthogonal to those already chosen4. In other words, we’d like to project the data onto a subspace so that the variance is maximized. To do this, PCA uses an eigendecomposition, which can make it expensive, but it does not need Expectation-Maximization. (The dimensionality reduction technique that uses E-M is called “factor analysis.”)

It’s easiest to derive PCA in the two dimensional case with \(N\) data points \(\{(x_1,x_2)^{(i)}\}_{i=1}^N\) where the data have zero mean and each coordinate has unit variance. In the first step, we solve for the (unit) direction vector \(u\):

\[\max_u \sum_{i=1}^N (u^Tx^{(i)})^2 = \max_u \|Xu\|_2^2 = \max_u u^T(X^TX)u\]where \(X\) is the matrix where each row is a training instance \(x^{(i)}\), and the maximum is over unit vectors \(u\). Note that \(X^TX = \sum_{i=1}^N x^{(i)}(x^{(i)})^T\), and also, if the data are centered, then it is \(N\) times the (maximum likelihood) sample covariance matrix.

In fact, this is a standard optimization problem: we maximize a quadratic form \(u^TAu\) with \(A = X^TX\) subject to \(\|u\|_2 = 1\). Setting the gradient of the Lagrangian \(u^TAu - \lambda(u^Tu - 1)\) to zero gives \(Au = \lambda u\), so any stationary point is an eigenvector of \(A\), and at such a point the objective equals \(u^TAu = \lambda\). Hence the maximum is attained by the unit eigenvector of \(A\) with the largest eigenvalue. Because \(X^TX\) is a symmetric matrix of reals, its eigenvalues are real and its eigenvectors can be chosen to be of unit norm and orthogonal to each other.

Given \(u_1\), the best vector so far, we know that \(x_i = u_1 z_i + \mu_i\) is our “process”, where \(z_i\) is a scalar. Since \(u_1^Tu_1 = 1\), it follows that to project all the \(x_i\) points down to the one dimensional space characterized by \(u_1\), we compute \(u_1^Tx_i\).

But normally we need more dimensions than that. How do we find the “best” set of vectors \(u_1,\ldots,u_k\) for that? We take the eigenvectors with the \(k\) largest eigenvalues. These form the principal components of the data: they are mutually orthogonal, and the projections of the data onto them are mutually uncorrelated. (I’m not actually sure why this works: intuitively it does, but I don’t have a proof.) And when we need to project our data, we remember our “process” and add the new eigenvectors as columns of a matrix \(U\) so that \(x_i = Uz_i + \mu_i\), where \(z_i \in \mathbb{R}^k\), and \(k\) is the number of columns of \(U\). Again, \(U\) has orthonormal columns (\(U^TU = I\)), so ignoring the noise (which is deliberate, since it’s noise!) our projection is \(U^Tx_i\) for all \(x_i\) points.
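A minimal NumPy sketch of this recipe, assuming the rows of X are data points: center the data, eigendecompose \(X^TX\), and keep the eigenvectors with the largest eigenvalues. The function name pca_eig is my own. As a side check on the uncorrelatedness claim: \(Z^TZ = U^TX^TXU\) is diagonal when the columns of \(U\) are orthonormal eigenvectors of \(X^TX\), so the (centered) projected coordinates have zero sample covariance with each other.

```python
import numpy as np

def pca_eig(X, k):
    """Top-k principal directions of X (rows = data points) via eigh on X^T X."""
    Xc = X - X.mean(axis=0)                   # center the data
    evals, evecs = np.linalg.eigh(Xc.T @ Xc)  # symmetric input; eigenvalues ascending
    order = np.argsort(evals)[::-1][:k]       # indices of the k largest eigenvalues
    U = evecs[:, order]                       # n x k, orthonormal columns
    Z = Xc @ U                                # row i is U^T x_i
    return U, Z

rng = np.random.default_rng(0)
t = rng.normal(size=500)
X = np.column_stack([t, t]) + 0.05 * rng.normal(size=(500, 2))  # stretched along (1, 1)
U, Z = pca_eig(X, 1)
print(U[:, 0])  # close to (0.707, 0.707), up to sign
```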

We can find those eigenvectors by diagonalization or SVD of \(X^TX\). SVD would work since that’s a real, symmetric, positive semidefinite matrix, so its eigenvalues are the same as its singular values, and we can thus rank them easily.

There is an alternative way we can derive PCA, using the “process” I explained earlier. We can define \(f(z_i) = Vz_i + \mu_i\) and use that as our approximation of \(x_i\). Thus, our objective would be to find

\[\min_{V} \sum_{i=1}^N \|x_i - f(z_i) \|_2^2 = \min_{V} \sum_{i=1}^N \|x_i - V(V^Tx_i) \|_2^2\]where I just put the \((V^Tx_i)\) to represent the lower dimensional approximation data (here \(V\) has orthonormal columns and the noise term is ignored). To find \(V\), we can again resort to SVD: \(X = UDV^T\), where \(X\) is again the (centered) matrix with rows as training instances. Then the columns of \(V\) form the vectors of the principal components. (Sorry for the \(U\) and \(V\) confusion; Ng and HTF use different formulations.) Technically, we only take the first \(K\) columns from \(V\) if we want a set of \(K\) vectors for the projections, which I find is neat (if we want more, just add more columns!). Since \(XV = UD\), then \(UD\) consists of the projected points of \(x_i\), one for each row; keeping only the first \(K\) columns of \(V\) gives \(XV_K = U_KD_K\), which usually has fewer columns than the \(x_i\) have components. There’s a lot of matrix stuff going on here; draw this on a piece of paper to understand better.
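The SVD route can be checked the same way. This sketch, on random centered data, verifies \(XV_K = U_KD_K\) and that the reconstruction error \(\sum_i \|x_i - V_K V_K^T x_i\|_2^2\) equals the sum of the discarded squared singular values (a standard linear algebra fact I am adding here, not something from the sources above).

```python
import numpy as np

rng = np.random.default_rng(1)
X = rng.normal(size=(100, 5)) @ rng.normal(size=(5, 5))  # rows are training instances
X = X - X.mean(axis=0)                                   # center first

U, d, Vt = np.linalg.svd(X, full_matrices=False)  # X = U diag(d) V^T
K = 2
V_K = Vt[:K].T                  # first K principal directions (columns of V)
projected = X @ V_K             # row i is the K-dim representation of x_i
assert np.allclose(projected, U[:, :K] * d[:K])  # XV_K = U_K D_K

reconstruction = projected @ V_K.T  # V_K (V_K^T x_i) for every row
error = np.sum((X - reconstruction) ** 2)
print(error, np.sum(d[K:] ** 2))    # equal up to rounding
```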

HTF present an example of PCA using the Procrustes Transformation, but I don’t really understand how PCA relates to it. I guess because both involve rotations and scaling of the data?

-

In fact, the ability to describe the classifier to lawyers means that companies can use these classifiers to “discriminate” without concern. What companies would have to do is explain the classifier and their rationale (e.g., if a person is in X category, we have to do Y due to previous data, etc.). ↩

-

Admittedly, I am skeptical of how they can claim that a one-dimensional curve represents various rotated aspects of a digit, but if you buy that argument, then everything else follows from that. ↩

-

Recall how we do a stochastic gradient update of a single weight \(w_j\) in logistic regression. For a given training instance \((x,y)\), where \(x\) is \(N\)-dimensional and \(y\) is a scalar, we compute

\[\frac{\partial}{\partial w_j}(y-h_w(x))^2 = \frac{\partial}{\partial w_j}\left(y - \frac{1}{1 + e^{-w^Tx}} \right)^2\]assuming we’re using the \(L_2\) loss function. Then we eventually get (absorbing the constant factor of 2 into the learning rate \(\alpha\))

\[w_j \leftarrow w_j + \alpha (y-h_w(x))h_w(x)(1-h_w(x))x_j\]which uses the fact that the derivative of the logistic function is itself multiplied by the quantity “one minus itself.” ↩

-

The easiest way to understand this is to look for figures that plot data along with vectors that indicate the PCA dimensions. Typically there will be two vectors chosen, indicating two “best directions” that capture the data. ↩

Perceptrons, SVMs, and Kernel Methods

In this post, we’ll discuss the perceptron and the support vector machine (SVM) classifiers, which are both error-driven methods that make direct use of training data to adjust the classification boundary. They do not “build a model,” which is what a BayesNet-based algorithm such as Naive Bayes would do, which means we can make fewer assumptions about the data.

We’ll also talk about kernels, which allow us to efficiently compute dot products of high-dimensional feature vectors without actually computing those feature vectors.

The Perceptron

The perceptron learning algorithm relies on classification via the sign of the dot product. Given a binary classification problem of vectors in \(\mathbb{R}^n\), the perceptron algorithm computes one parameter vector \(w \in \mathbb{R}^n\). Given an arbitrary sample \(x_i\) with features1 \(f(x_i) \in \mathbb{R}^n\), we classify this as +1 if \(w \cdot f(x_i) \ge 0\) and -1 otherwise. Assuming we’re doing supervised learning, we will know the true label \(y^{(i)} \in \{-1,1\}\). If \({\rm sign}(w \cdot f(x_i)) = y^{(i)}\), then we don’t do anything. Otherwise, we must adjust the weight vector \(w \leftarrow w + y^{(i)}\cdot f(x_i)\). This will change the direction of the vector, thus shifting the classification boundary. It’s easiest to understand how this works by realizing that \(w \cdot f(x) = 0\) represents the decision boundary, which is orthogonal to \(w\) by definition of the dot product and divides up the feature vector space into “halves,” where one has dot products with \(w\) positive, and the other negative.
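A minimal sketch of the binary perceptron in NumPy. It assumes the feature vectors are the rows of F and, for this example only, appends a constant feature 1 to each so that the boundary need not pass through the origin. The toy data is made up.

```python
import numpy as np

def train_perceptron(F, y, epochs=100):
    """Binary perceptron. F: rows are feature vectors f(x_i); y: labels in {-1, +1}."""
    w = np.zeros(F.shape[1])
    for _ in range(epochs):
        mistakes = 0
        for f, label in zip(F, y):
            prediction = 1 if w @ f >= 0 else -1
            if prediction != label:
                w = w + label * f  # w <- w + y_i f(x_i)
                mistakes += 1
        if mistakes == 0:          # a full pass with no mistakes: converged
            break
    return w

# Toy separable data; the last feature is an assumed constant (bias) feature.
F = np.array([[2.0, 1.0, 1.0], [3.0, 2.0, 1.0], [-1.0, -2.0, 1.0], [-2.0, 0.0, 1.0]])
y = np.array([1, 1, -1, -1])
w = train_perceptron(F, y)
print(w, np.where(F @ w >= 0, 1, -1))
```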

In the general case, there will be multiple classes, so we will have multiple weight vectors \(w_1, \ldots, w_k\) for a \(k\)-way classification problem. In that case, whenever we have a training instance \(x_i\), we assign the class based on \(\arg_j \max w_j \cdot f(x_i)\). If \(x_i\) was actually in class \(j\), we are done; otherwise it should have been in class \(j'\) so we need to adjust two weight vectors with \(w_j \leftarrow w_j - f(x_i)\) and \(w_{j'} \leftarrow w_{j'} + f(x_i)\). We add to the appropriate class, and subtract from the wrong class.

What are the problems with the perceptron as we just described? Well, if the data isn’t linearly separable, the algorithm will “thrash” around and never converge2. Two other (related) problems: it can overfit the data, or not find a suitable boundary. For the latter case, think of linearly separable data, but with one outlier that causes the linear boundary to drastically shift. It may be wise to allow one “error” in order to get a \(w\) that generalizes better.

There is a modification of the perceptron known as the Margin-Infused Relaxed Algorithm (MIRA), which updates in the same direction as the perceptron, but with the minimum magnitude necessary (technically, we add one to leave some slack, but whatever) to force the classifier to classify the current sample correctly (if it was not already correct). This means that the update could be smaller or greater than the perceptron update, but unlike the perceptron, MIRA (before any capping) will always classify an example correctly right after updating on it. In practice, we cap the amount that a single training example can change the weight vector, so the scale factor \(\tau\) is at most a pre-specified \(C\).
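Here is a sketch of a single multiclass MIRA step. The step size below, \(\tau = \min\left(C, \frac{(w_{j} - w_{j'}) \cdot f + 1}{2\, f \cdot f}\right)\) with \(j\) the guessed class and \(j'\) the true class, is the common form that I am assuming here; the "+1" is the slack mentioned above. Uncapped, it leaves the true class ahead by exactly 1; with a small cap \(C\), the example can remain misclassified.

```python
import numpy as np

def mira_update(W, f, true_class, C):
    """One MIRA step for a multiclass linear classifier (assumed standard form).

    W: (num_classes, num_features) weights, one row per class.
    f: feature vector of the current example.
    Returns a new weight matrix (the input is not modified).
    """
    guess = int(np.argmax(W @ f))
    if guess == true_class:
        return W
    # Smallest step making the true class win by a margin of 1, capped at C.
    tau = ((W[guess] - W[true_class]) @ f + 1.0) / (2.0 * (f @ f))
    tau = min(C, tau)
    W = W.copy()
    W[true_class] += tau * f
    W[guess] -= tau * f
    return W

W = np.array([[1.0, 0.0], [0.0, 0.0]])
f = np.array([1.0, 1.0])
print(np.argmax(mira_update(W, f, 1, C=np.inf) @ f))  # 1: now correct
print(np.argmax(mira_update(W, f, 1, C=0.1) @ f))     # 0: the cap limits the step
```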

As an alternative to the multiway classification perceptron, one can use the perceptron for ranking (e.g., website ranking), which has only one weight vector. It’s useful if we want to consider data points \(x\) and classes \(y\) together in a single vector \(f(x,y)\). The decision rule is

\[\arg_y \max f(x,y) \cdot w\]and the update rule is

\[w \leftarrow w + f(x,y^*) - f(x,y)\]where for a data point \(x\), \(y\) was the predicted class but \(y^*\) was the actual class. Now the weights are interpreted as the importance of each feature component to each class.

The Kernelized Perceptron

We can create more complicated classification boundaries with perceptrons by using kernelization3. Suppose \(w\) starts off as the zero vector. Then we notice in the general \(k\)-way classification problem that we only add or subtract \(f(x_i)\) vectors to \(w\). In other words, with \(N\) samples in the training data, \(w_j = \sum_{i=1}^N \alpha_{i,j}f(x_i)\) where all the \(\alpha\) variables are integers. This means learning all the alphas would be enough to reconstruct the weight vectors.

How do we make a classification decision? For a given training instance (or even an entirely new sample) \(x\), we would assign it the class based on whatever \(j\) (for weight vector \(w_j\)) that maximizes the following: \(\left( \sum_{i=1}^N \alpha_{i,j}f(x_i) \right) \cdot f(x) = \sum_{i=1}^N \alpha_{i,j} (f(x_i) \cdot f(x))\). We can re-express the dot product: \(f(x_i) \cdot f(x) \rightarrow K(x_i,x)\), where we have introduced a kernel function \(K\). Kernels allow us to “map” vectors \(x_i\) and \(x\) into a higher dimensional space, where we would then “take the dot product,” without actually transforming the features into the higher dimensional space.

Here’s an example: if we let \(K(x_i,x) = (x_i \cdot x)^2\), then we have mapped \(x_i\) and \(x\) into a higher dimensional space that includes squared components of \(x_i\) and \(x\), which can give linearly separable boundaries in that space even if the original feature space was not, e.g., the positive examples formed a disk and were surrounded by the negative examples (this particular case also needs a constant feature, which the shifted version \((x_i \cdot x + 1)^2\) provides). As a general rule, the more features we have, the more likely we have linearly separable data, unless two of the exact same \(x\)’s have different classes, for whatever reason. Of course, we will need more examples to learn correctly (growth is roughly quadratic in the number of features), and when doing classification, we will need to compute all the \(K(\cdot,\cdot)\) values. Classification is slower still when many of the alpha counts are nonzero.
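A sketch of the dual (kernelized) perceptron for the binary case, storing one signed count \(\alpha_i\) per training point instead of \(w\). Since the homogeneous kernel \((x_i \cdot x)^2\) has no constant feature, this example assumes the shifted kernel \((x_i \cdot x + 1)^2\) so that a disk of positive points surrounded by negative points is separable. The ring-shaped data is made up.

```python
import numpy as np

def quadratic_kernel(a, b):
    """Shifted quadratic kernel (a . b + 1)^2 (assumed here; includes a constant feature)."""
    return (a @ b + 1.0) ** 2

def train_kernel_perceptron(X, y, kernel, epochs=100):
    """Dual binary perceptron; alpha[i] is the signed count of updates on x_i."""
    n = len(X)
    G = np.array([[kernel(X[i], X[j]) for j in range(n)] for i in range(n)])  # Gram matrix
    alpha = np.zeros(n)
    for _ in range(epochs):
        mistakes = 0
        for i in range(n):
            score = alpha @ G[:, i]         # sum_j alpha_j K(x_j, x_i)
            if (1 if score >= 0 else -1) != y[i]:
                alpha[i] += y[i]            # same as w <- w + y_i f(x_i)
                mistakes += 1
        if mistakes == 0:
            break
    return alpha

def predict(alpha, X_train, kernel, x):
    score = sum(a * kernel(xi, x) for a, xi in zip(alpha, X_train) if a != 0)
    return 1 if score >= 0 else -1

angles = np.linspace(0, 2 * np.pi, 8, endpoint=False)
circle = np.column_stack([np.cos(angles), np.sin(angles)])
X = np.vstack([0.5 * circle, 1.5 * circle])  # inner ring positive, outer ring negative
y = np.array([1] * 8 + [-1] * 8)
alpha = train_kernel_perceptron(X, y, quadratic_kernel)
print([predict(alpha, X, quadratic_kernel, x) for x in X])
```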

There are two popular classes of kernels:

-

The polynomial kernel has the form \(K(x,y) = (x^Ty + c)^d\) for degree \(d\). For vectors of dimension \(n\), this kernel will map them to an \(O(n^d)\)-dimensional space! Expanding the kernel out for the simple case of \(d=2\), we get

\[(x^Ty + c)^2 = \sum_{i=1}^n\sum_{j=1}^n (x_ix_j)(y_iy_j) + \sum_{i=1}^n (\sqrt{2c}x_i)(\sqrt{2c}y_i) + c^2\]This is the equivalent of a dot product of features that contain elements \(x_ix_j\), \(\sqrt{2c}x_i\), and \(c\) (not \(c^2\); watch out!).

-

The Gaussian kernel, also known as the radial-basis function (RBF) kernel maps elements into an infinite-dimensional feature space. It is \(K(x,y) = \exp(-\frac{1}{2\sigma^2}\|x-y\|_2^2)\). Probably more than any other kernel, classifying with this one is a lot like nearest neighbor because it clearly measures a similarity function, weighing “closer” examples more in our classification decisions. As \(\sigma \to 0\), the kernel becomes a lookup table, and our training accuracy for a perceptron trained with this is 100 percent (except in the weird case of two exact same points getting different labels) but our validation and test set accuracy will be horrible.
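To double-check the \(d=2\) polynomial kernel expansion above, this sketch builds the explicit feature vector (all \(x_ix_j\), then \(\sqrt{2c}\,x_i\), then \(c\)) and compares its dot product to the kernel value. The function names are mine.

```python
import numpy as np

def poly2_kernel(x, y, c):
    return (x @ y + c) ** 2

def poly2_features(x, c):
    """Explicit feature map phi with phi(x) . phi(y) = (x^T y + c)^2."""
    pairwise = np.outer(x, x).ravel()              # all x_i x_j (n^2 terms)
    linear = np.sqrt(2 * c) * x                    # sqrt(2c) x_i
    return np.concatenate([pairwise, linear, [c]])  # constant feature is c, not c^2

rng = np.random.default_rng(0)
x, y, c = rng.normal(size=3), rng.normal(size=3), 2.0
print(poly2_kernel(x, y, c), poly2_features(x, c) @ poly2_features(y, c))  # equal up to rounding
```

The explicit vector has \(n^2 + n + 1\) entries, which is the \(O(n^d)\) blow-up for \(d = 2\) that the kernel avoids computing.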

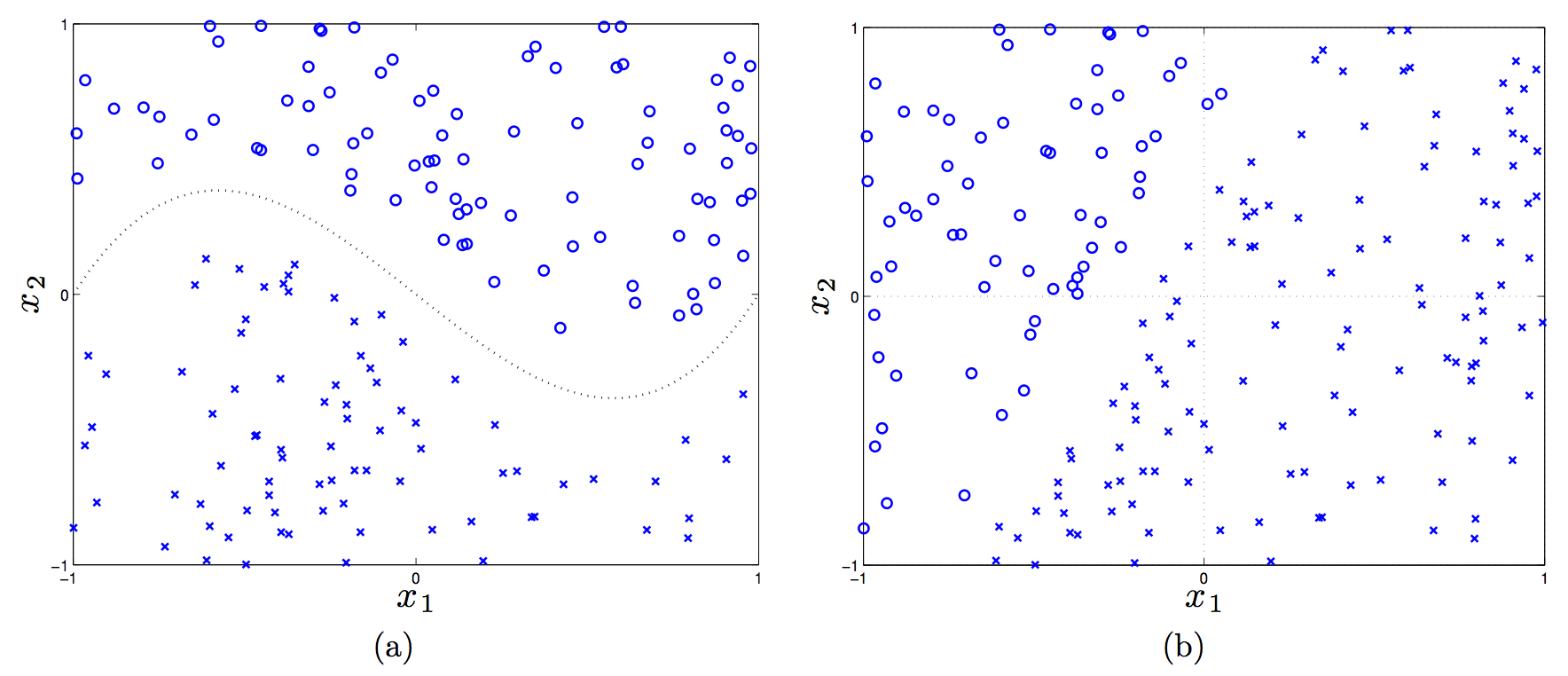

To test my understanding of kernels in more detail, I looked at (as usual) an old CS 188 handout. It had the following image:

(In the first plot, the dotted line is \(f(x_1) = x_1^3 - x_1\).)

Let’s consider linear, shifted linear, quadratic, and cubic kernels (see the handout for details on these), and see if any of them can linearly separate the data in the two plots.

Plot (a) requires a third-order polynomial to separate the data, so only the cubic kernel will work, because that will map feature vectors \(x \to \phi(x)\) to have \(x_1^3\) in it. Then we’d just adjust the weights to set that to have nonzero weight.

In plot (b), a linear kernel is enough, but there has to be a bias term in there! (That actually tricked me.) Without a bias, in the 2-D case here, the decision rule is \({\rm sign}(w^Tx)\), and a 2-D vector \(w\) must “emanate” out of the origin, which means the perpendicular line to it crosses the origin.

Kernels, Formalized

The preceding discussion motivates the following question: how do we know if a function \(K\) is a valid kernel? First, the official definition of a kernel is that it is a function \(K(x,y) = \phi(x)^T\phi(y)\) that computes an inner product in a Hilbert Space. Normally, I prefer thinking of the inner product \(\langle \phi(x),\phi(y) \rangle\) as the normal dot product (as I wrote earlier), but more generally, we should use the terminology of the inner product. Inner products satisfy symmetry, bilinearity, and positive definiteness. A Hilbert Space is an inner product space that is complete with respect to the norm defined by the inner product.

Since we are only dealing with real-valued vectors, our inputs live in \(\mathbb{R}^n\), and when the feature space is finite-dimensional, the inner product is the normal vector dot product. To test whether a function is a kernel, we invoke a simplified form of Mercer’s Theorem: let function \(K : \mathbb{R}^n \times \mathbb{R}^n \to \mathbb{R}\) be given. Then for \(K\) to be a valid Mercer kernel, it is necessary and sufficient that for any finite set of points \(\{x^{(1)}, \ldots, x^{(m)}\}\) the corresponding kernel matrix is (symmetric) positive semi-definite. The element \(K_{ij}\) of the kernel matrix is the value \(K(x^{(i)},x^{(j)})\). (Sometimes the kernel matrix is called the Gram matrix.)
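Mercer's condition suggests a cheap empirical test: build the kernel matrix on some sample points and look at its smallest eigenvalue. Passing on a finite sample does not prove that a function is a kernel, but failing disproves it. The sketch contrasts the Gaussian kernel with \(-\|x-y\|_2^2\), which is not a valid kernel (its kernel matrix has zero trace, so a nonzero one must have a negative eigenvalue). The helper names are my own.

```python
import numpy as np

def gram(kernel, points):
    """Kernel (Gram) matrix with entries K_ij = kernel(x_i, x_j)."""
    return np.array([[kernel(a, b) for b in points] for a in points])

def looks_psd(K, tol=1e-9):
    """Symmetric with no eigenvalue below -tol (allowing for round-off)."""
    return bool(np.allclose(K, K.T) and np.linalg.eigvalsh(K).min() >= -tol)

def rbf(a, b, sigma=1.0):
    return np.exp(-np.sum((a - b) ** 2) / (2 * sigma ** 2))

def neg_sq_dist(a, b):
    return -np.sum((a - b) ** 2)  # NOT a valid kernel

rng = np.random.default_rng(0)
pts = rng.normal(size=(20, 3))
print(looks_psd(gram(rbf, pts)))          # True
print(looks_psd(gram(neg_sq_dist, pts)))  # False
```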

To prove one direction, that if \(K\) is a kernel corresponding to a feature mapping \(\phi\), then its kernel matrix for any points \(x^{(1)},\ldots,x^{(m)}\) must be symmetric positive semi-definite, we proceed as follows. First, it’s going to be symmetric due to the dot product (or more accurately, inner product) being commutative. Next, for any \(z \in \mathbb{R}^m\), writing \(\phi_k\) for the \(k\)-th component of \(\phi\), we have

\[\begin{align} z^TKz &= \sum_{i=1}^m\sum_{j=1}^m K_{ij}z_iz_j \\ &= \sum_{i=1}^m\sum_{j=1}^m z_i \phi(x^{(i)})^T\phi(x^{(j)})z_j \\ &= \sum_{i=1}^m\sum_{j=1}^m z_i \sum_{k} \phi_k(x^{(i)})\phi_k(x^{(j)})z_j \\ &= \sum_{k}\sum_{i=1}^m\sum_{j=1}^m z_i \phi_k(x^{(i)}) \phi_k(x^{(j)}) z_j\\ &= \sum_{k} \left( \sum_{i=1}^m z_i\phi_k(x^{(i)})\right)^2 \ge 0 \end{align}\]where the last step uses the fact we indirectly showed earlier, \(\sum_i\sum_j a_ib_ia_jb_j = (a^Tb)^2 \ge 0\). It’s a little tricky because we are keeping \(k\), a component of \(\phi\), fixed, and ranging across different \(\phi\) vectors.

This fact about positive semi-definiteness makes it easy to see that the following are also valid kernels:

- Addition: \(K_1 + K_2\)

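- Multiplication: \(K_1 K_2\), i.e., \(K(x,y) = K_1(x,y)K_2(x,y)\) (this one relies on the Schur product theorem: the elementwise product of two positive semi-definite matrices is positive semi-definite)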

- Scalar: \(cK\) for a constant \(c \ge 0\)

We can use kernels for perceptrons (as previously discussed), support vector machines (as we will discuss), principal components analysis, and other methods such as linear regression.

Let’s discuss the linear regression case. In the general (regularized) case, the objective is:

\[\arg_w \min \|y - Xw\|_2^2 + \lambda \|w\|_2^2\]where \(X\) is the \(n \times m\) matrix of training instances, where each training instance is a row (this is different from what I usually think of it, but it makes more sense in regression). The \(y \in \mathbb{R}^n\) vector has the true labels. By taking derivatives, we see that the optimal \(w\) is

\[w = (X^TX + \lambda I)^{-1}X^Ty = X^T(XX^T + \lambda I)^{-1}y\]In the last step above, I used the clever trick I learned from CS 281A that for \(\lambda > 0\) and a matrix \(A\) that is \(d\times n\), we have \((AA^T + \lambda I)^{-1}A = A(A^TA + \lambda I)^{-1}\). But wait, what does this mean? We can express \(w\) as

\[w = X^T(XX^T+\lambda I)^{-1}y = \sum_{i=1}^n \alpha_i x_i\]with \(\alpha = (XX^T + \lambda I)^{-1}y\), since the columns of \(X^T\) (not \(X\), be careful!) are the actual training instances, so \(w\) lies in their span and we can write it as a linear combination of them.
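To convince myself the two forms of \(w\) agree, here is a small NumPy sketch with random data (the sizes and \(\lambda\) are arbitrary assumptions). It solves the \(m \times m\) primal system, then the \(n \times n\) system for \(\alpha = (XX^T + \lambda I)^{-1}y\), and forms \(w = X^T\alpha = \sum_i \alpha_i x_i\). It uses `np.linalg.solve` rather than forming explicit inverses, which is the usual numerically safer choice.

```python
import numpy as np

rng = np.random.default_rng(2)
n, m, lam = 8, 3, 0.5            # n training rows, m features, ridge penalty lambda > 0
X = rng.normal(size=(n, m))
y = rng.normal(size=n)

# Primal form: an m x m system.
w_primal = np.linalg.solve(X.T @ X + lam * np.eye(m), X.T @ y)

# Dual form: an n x n system involving only XX^T (inner products between rows).
alpha = np.linalg.solve(X @ X.T + lam * np.eye(n), y)
w_dual = X.T @ alpha             # w = sum_i alpha_i x_i

print(np.allclose(w_primal, w_dual))   # True
```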

When we are faced with a new test instance \(x_{\rm new}\) to regress on, we compute:

\[f(x_{\rm new}) = (x_{\rm new})^Tw = (x_{\rm new})^T \left(\sum_{i=1}^n \alpha_i x_i \right) = \sum_{i=1}^n \alpha_i \langle x_i, x_{\rm new}\rangle\]We have kernels again! This is more accurately known as kernelized linear regression. In fact, we use kernels even before we test on new examples (i.e., during training). Why? The matrix \(XX^T\) is itself a kernel matrix: it consists of dot products between the training instances, and computing \(\alpha = (XX^T + \lambda I)^{-1}y\) during training requires only that matrix, so we can swap in any kernel matrix in its place.
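Here is a minimal kernelized (ridge) regression sketch, assuming a Gaussian (RBF) kernel with bandwidth 1 and \(\lambda = 10^{-2}\) (both arbitrary choices, not from the text), fit to noisy samples of \(\sin x\). Training only touches the Gram matrix (which plays the role of \(XX^T\)), and prediction only touches kernel values \(k(x_i, x_{\rm new})\). The helper names `rbf_kernel`, `linear_kernel`, `fit`, and `predict` are hypothetical.

```python
import numpy as np

def rbf_kernel(A, B, bandwidth=1.0):
    """Gram matrix with entries exp(-||a_i - b_j||^2 / (2 bandwidth^2))."""
    sq = np.sum(A**2, axis=1)[:, None] + np.sum(B**2, axis=1)[None, :] - 2 * A @ B.T
    return np.exp(-sq / (2 * bandwidth**2))

def linear_kernel(A, B):
    """Plain inner products: recovers ordinary ridge regression."""
    return A @ B.T

def fit(X, y, kernel, lam):
    """alpha = (K + lam I)^{-1} y, where K = k(X, X) replaces XX^T."""
    K = kernel(X, X)
    return np.linalg.solve(K + lam * np.eye(len(X)), y)

def predict(X_train, alpha, X_new, kernel):
    """f(x_new) = sum_i alpha_i k(x_i, x_new), for each row of X_new."""
    return kernel(X_new, X_train) @ alpha

rng = np.random.default_rng(3)
X = rng.uniform(-3, 3, size=(40, 1))
y = np.sin(X[:, 0]) + 0.05 * rng.normal(size=40)

alpha = fit(X, y, rbf_kernel, lam=1e-2)
X_test = np.array([[0.0], [1.5]])
preds = predict(X, alpha, X_test, rbf_kernel)
print(preds)   # should be close to sin(0) = 0 and sin(1.5), about 0.997
```

Passing `linear_kernel` instead of `rbf_kernel` gives the same predictions as the primal ridge solution \(x_{\rm new}^Tw\).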

I may end up reading more of Tom Mitchell’s slides on this, because this was quite illuminating to me.

Support Vector Machines

We now switch gears to Support Vector Machines (SVMs), which are possibly the best “off-the-shelf” classifier because they combine the kernel trick with the concept of a maximum-margin separator. Thus, we know immediately that – like in the perceptron – we must find some way to express the optimization problem in terms of dot products.

To begin the derivation, we define the functional margin of a weight vector \((w,b)\) (note: we keep the intercept term separate now) with respect to training instance \(x^{(i)}\) to be \(\gamma^{(i)} = y^{(i)}(w^Tx^{(i)}+b)\), where the class label \(y^{(i)} \in \{-1,1\}\), and across the entire dataset, \(\gamma\) is just the minimum of all the functional margins. Ideally, we would like the functional margin to be relatively large, as that would indicate a strong, “robust” boundary between the two classes.

We can formulate SVMs with the following “optimization” problem:

\[\max_{\gamma,w,b} \gamma\]such that

\[y^{(i)}(w^Tx^{(i)}+b) \ge \gamma,\quad \forall i\]along with the restriction that \(\|w\|_2 = 1\). Without it, we could make the functional margin arbitrarily large just by scaling \((w,b)\) up, which does not change the decision boundary; with \(\|w\|_2 = 1\), the functional margin equals the geometric margin (the actual distance to the boundary).

Unfortunately, the \(\|w\|_2 = 1\) constraint is non-convex, so this problem is hard to optimize directly. Instead, we transform it into an equivalent one as follows:

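\[\min_{w,b} \frac{1}{2}\|w\|_2^2\]such that for all training instances \(i \in \{1,2,\ldots,m\}\), we have the constraint \(y^{(i)}(w^Tx^{(i)} + b) \ge 1\). This fixes the scale of \((w,b)\) so that the functional margin is one. The geometric margin is then \(1/\|w\|_2\), so maximizing it is the same as minimizing \(\frac{1}{2}\|w\|_2^2\).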

We are done, but it is better to approach the problem from the dual perspective so that we can take advantage of kernels. In general, the dual optimum \(d^*\) is only a lower bound on the primal optimum \(p^*\) (weak duality), but for this problem strong duality holds, \(d^* = p^*\), so we can equivalently solve the problem by maximizing the dual4. We rewrite the constraints as \(g_i(w) = 1-y^{(i)}(w^Tx^{(i)}+b) \le 0\) and construct the Lagrangian as:

\[\mathcal{L}(w,b,\alpha) = \frac{1}{2}\|w\|_2^2 - \sum_{i=1}^{m} \alpha_i (y^{(i)}(w^Tx^{(i)}+b) - 1)\]Setting the derivatives of \(\mathcal{L}\) with respect to \(w\) and \(b\) to zero gives \(w = \sum_{i=1}^m \alpha_i y^{(i)} x^{(i)}\) and \(\sum_{i=1}^m \alpha_i y^{(i)} = 0\). Substituting these back in (after some algebra, which took me a while because the many indices kept tripping me up), and recalling that we need to maximize the result, we pose the dual optimization problem:

\[\max_\alpha \sum_{j=1}^m \alpha_j - \frac{1}{2}\sum_{j=1}^m\sum_{k=1}^m \alpha_j \alpha_k y^{(j)} y^{(k)}\langle x^{(j)}, x^{(k)}\rangle\]such that \(\alpha_i \ge 0\) for all \(i\), and \(\sum_{i=1}^m \alpha_iy^{(i)} = 0\). Fortunately, this is a concave maximization problem (i.e., a convex optimization problem), so any local maximum is a global maximum.
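To sanity-check the dual, here is a tiny made-up example: two positives \((2,2), (3,3)\) and two negatives \((0,0), (-1,-1)\). Working it out by hand, only the closest opposite-class pair matters, giving \(\alpha = (0.25, 0.25, 0, 0)\), \(w = (0.5, 0.5)\), and \(b = -1\). The sketch evaluates the dual objective above at this \(\alpha\) and \(\frac{1}{2}\|w\|_2^2\) at the matching \(w\); since both points are feasible and the values agree, weak duality implies both are optimal (so \(d^* = p^*\) here).

```python
import numpy as np

# Hypothetical toy data: two positives, two negatives along the line x1 = x2.
X = np.array([[2.0, 2.0], [0.0, 0.0], [3.0, 3.0], [-1.0, -1.0]])
y = np.array([1.0, -1.0, 1.0, -1.0])

alpha = np.array([0.25, 0.25, 0.0, 0.0])   # dual solution worked out by hand
w = (alpha * y) @ X                        # w = sum_i alpha_i y_i x_i -> [0.5, 0.5]
b = 1.0 - w @ X[0]                         # from y_0 (w^T x_0 + b) = 1  -> -1.0

K = X @ X.T                                # linear kernel: K_jk = <x_j, x_k>
dual_obj = alpha.sum() - 0.5 * (alpha * y) @ K @ (alpha * y)
primal_obj = 0.5 * w @ w

print(dual_obj, primal_obj)                    # 0.25 0.25
print(np.all(y * (X @ w + b) >= 1 - 1e-12))    # True: primal feasible
print(np.all(alpha >= 0), np.isclose(alpha @ y, 0.0))   # True True: dual feasible
```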

Notice that we have \(\alpha\) variables again, though these have a different interpretation than the ones in the kernelized perceptron. Watch out! And yes, kernels appear once again. Nice!

Now let’s see what happens when we have trained and are going to assign a class to a new instance \(x_{\rm new}\). We perform the \({\rm sign}(w^Tx_{\rm new}+b)\) computation, which can equivalently be expressed as

\[w^Tx_{\rm new}+b = \left(\sum_{i=1}^m \alpha_i y^{(i)}x^{(i)}\right)^Tx_{\rm new} + b = \sum_{i=1}^m \alpha_i y^{(i)} \langle x^{(i)},x_{\rm new}\rangle + b\]Once again, we have kernels! Incidentally, it looks like we might have to do a lot of computation to classify a single point, but in fact, most of the \(\alpha_i\)s will be zero. The few that are nonzero correspond to the training instances called support vectors, which lie exactly on the margin (functional margin one). This follows from the Karush-Kuhn-Tucker complementary slackness condition \(\alpha_i g_i(w) = 0\): \(\alpha_i\) can be nonzero only if \(g_i(w) = 0\). The fact that we may not need to do much computation means SVMs gain some of the advantages of parametric models.
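A minimal sketch of the prediction rule, reusing a made-up four-point dataset whose dual solution \(\alpha = (0.25, 0.25, 0, 0)\), \(b = -1\) was worked out by hand. The decision value is computed from kernel evaluations against the support vectors only, and compared with the explicit \(w^Tx_{\rm new} + b\). The linear kernel here stands in for any kernel; `decision` is a hypothetical helper name.

```python
import numpy as np

def linear_kernel(a, b):
    return float(a @ b)

# Hypothetical toy data and its hand-derived dual solution.
X = np.array([[2.0, 2.0], [0.0, 0.0], [3.0, 3.0], [-1.0, -1.0]])
y = np.array([1.0, -1.0, 1.0, -1.0])
alpha = np.array([0.25, 0.25, 0.0, 0.0])
b = -1.0

support = np.flatnonzero(alpha > 1e-8)   # indices of the support vectors: [0, 1]

def decision(x_new, kernel=linear_kernel):
    """sum_i alpha_i y_i k(x_i, x_new) + b, summing only over support vectors."""
    return sum(alpha[i] * y[i] * kernel(X[i], x_new) for i in support) + b

w = (alpha * y) @ X                      # explicit w, for comparison only
for x_new in (np.array([1.0, 0.0]), np.array([3.0, 1.0])):
    print(decision(x_new), w @ x_new + b, np.sign(decision(x_new)))
# -0.5 -0.5 -1.0
# 1.0 1.0 1.0
```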

In the above problem, we – just like in the kernelized perceptron and kernelized regression – have formulated the problem so that, both during training and classification of new examples, the data enter via inner products, allowing us to use kernels.

What happens when we do not have linearly separable data? Rather than come up with a more complicated or longer feature vector (which might risk overfitting), we can reformulate the problem using slack variables (for \(\ell_1\)-regularization) and an additional, controllable parameter \(C\):

\[\min_{w,b} \frac{1}{2}\|w\|_2^2 + C \sum_{i=1}^m \xi_i\]such that for all \(i \in \{1,2,\ldots,m\}\), we have \(y^{(i)}(w^Tx^{(i)}+b) \ge 1 - \xi_i\) and \(\xi_i \ge 0\). Thus, samples are permitted to have a (functional) margin less than one.
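For any fixed \((w,b)\), the smallest slacks satisfying both constraints are \(\xi_i = \max(0,\, 1 - y^{(i)}(w^Tx^{(i)}+b))\), i.e., the hinge loss. The sketch below evaluates the soft-margin objective that way on made-up data with one point inside the margin; the \((w,b)\) is just an example, not claimed to be optimal for this data.

```python
import numpy as np

def soft_margin_objective(w, b, X, y, C):
    """0.5 ||w||^2 + C sum_i xi_i, with each xi_i set to its smallest feasible value."""
    margins = y * (X @ w + b)                # functional margins y_i (w^T x_i + b)
    xi = np.maximum(0.0, 1.0 - margins)      # xi_i >= 1 - margin_i and xi_i >= 0
    return 0.5 * w @ w + C * xi.sum(), xi

X = np.array([[2.0, 2.0], [0.0, 0.0], [1.5, 1.5], [-1.0, -1.0]])
y = np.array([1.0, -1.0, 1.0, -1.0])
w, b = np.array([0.5, 0.5]), -1.0

obj, xi = soft_margin_objective(w, b, X, y, C=1.0)
print(xi)    # xi = [0, 0, 0.5, 0]: only the third point has margin below one
print(obj)   # 0.75
```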

Rather surprisingly, the only change to the dual is that the \(\alpha_i \ge 0\) constraints turn into \(0 \le \alpha_i \le C\) constraints, so we can apply the same principles (roughly speaking) as we did in the linearly separable case. In addition, the way we find \(b\) changes, but generally we don’t really worry about the intercept too much when going through the derivation. It’s really \(w\) that matters most to me.
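The text doesn’t say how to actually solve the dual. One standard choice, swapped in here and not from the text, is Sequential Minimal Optimization (SMO, due to John Platt): repeatedly pick a pair \((\alpha_i, \alpha_j)\), solve the two-variable subproblem in closed form, and clip to the box \([0, C]\). Updating a pair keeps \(\sum_i \alpha_i y^{(i)} = 0\) satisfied. This is a simplified sketch with a linear kernel; the partner heuristic and the defaults for `tol`, `max_passes`, and `max_iter` are assumptions.

```python
import numpy as np

def smo(X, y, C=10.0, tol=1e-3, max_passes=5, max_iter=10_000):
    """Simplified SMO sketch for the soft-margin SVM dual (linear kernel)."""
    m = len(y)
    K = X @ X.T
    alpha, b = np.zeros(m), 0.0
    passes = iters = 0
    while passes < max_passes and iters < max_iter:
        iters += 1
        changed = 0
        for i in range(m):
            E = K @ (alpha * y) + b - y          # errors f(x_k) - y_k for all k
            # Skip alpha_i unless it violates the KKT conditions (within tol).
            if not ((y[i] * E[i] < -tol and alpha[i] < C) or (y[i] * E[i] > tol and alpha[i] > 0)):
                continue
            # Try partners j in order of decreasing |E_i - E_j| (a common heuristic).
            for j in np.argsort(-np.abs(E[i] - E), kind="stable"):
                if j == i:
                    continue
                ai, aj = alpha[i], alpha[j]
                if y[i] != y[j]:
                    L, H = max(0.0, aj - ai), min(C, C + aj - ai)
                else:
                    L, H = max(0.0, ai + aj - C), min(C, ai + aj)
                eta = 2 * K[i, j] - K[i, i] - K[j, j]
                if L == H or eta >= 0:
                    continue
                aj_new = np.clip(aj - y[j] * (E[i] - E[j]) / eta, L, H)
                if abs(aj_new - aj) < 1e-8:
                    continue
                ai_new = ai + y[i] * y[j] * (aj - aj_new)   # preserves sum_i alpha_i y_i
                # Update the intercept so the changed point(s) satisfy the KKT conditions.
                b1 = b - E[i] - y[i] * (ai_new - ai) * K[i, i] - y[j] * (aj_new - aj) * K[i, j]
                b2 = b - E[j] - y[i] * (ai_new - ai) * K[i, j] - y[j] * (aj_new - aj) * K[j, j]
                if 0 < ai_new < C:
                    b = b1
                elif 0 < aj_new < C:
                    b = b2
                else:
                    b = (b1 + b2) / 2
                alpha[i], alpha[j] = ai_new, aj_new
                changed += 1
                break
        passes = passes + 1 if changed == 0 else 0
    return alpha, b

# Hypothetical toy data: positives (2,2), (3,3); negatives (0,0), (-1,-1).
X = np.array([[2.0, 2.0], [0.0, 0.0], [3.0, 3.0], [-1.0, -1.0]])
y = np.array([1.0, -1.0, 1.0, -1.0])
alpha, b = smo(X, y, C=10.0)
print(alpha, b)   # expected: alpha close to [0.25, 0.25, 0, 0], b close to -1
```

With a large \(C\) this behaves like the hard-margin SVM on separable data; with a small \(C\), every \(\alpha_i\) stays in \([0, C]\) by construction.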

-

This is important. When we call things \(x\), we usually refer to the raw data, but what the classifier needs is a set of features for each sample. Some people elide this distinction by treating \(x\) directly as the features, so be careful. ↩

-

Even when the data is linearly separable, the perceptron is only guaranteed to converge in the binary classification case. Here’s a key theorem: suppose the (binary) data are separable with margin \(\gamma\) and the maximum norm of a training sample is \(R\). Then the perceptron converges with at most \(O(R^2/\gamma^2)\) updates. ↩

-

Here’s some intuition: we’re trying to combine the best of nearest neighbor approaches with perceptron approaches by using the former’s ability to use fancy “similarity” functions along with the latter’s ability to explicitly learn from data. ↩

-

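Technically, the equality \(d^* = p^*\) requires strong duality. It holds here because the objective is convex and the constraints are affine (and feasible when the data are separable); the optimal primal and dual variables then satisfy the Karush-Kuhn-Tucker conditions. ↩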

Markov Decision Processes and Reinforcement Learning

In this post, we’ll review Markov Decision Processes and Reinforcement Learning. This material is from Chapters 17 and 21 in Russell and Norvig (2010).

Markov Decision Processes

The general idea with this situation is that we are an agent in some world, and we have a set of states, actions (for each state), a transition probability (which we may not actually know), and a reward function. The goal for a rational agent is to maximize the expected sum of (discounted) rewards \(\mathbb{E}[\sum_t \gamma^t R(s_t)]\), where \(0 \le \gamma \le 1\) is our discount factor; we usually set it equal to one only in toy cases.

This is the setup of a Markov Decision Process (MDP), with the added constraint that the probability of going to another state depends only on the current state and action (the Markov property), which is why we call this a Markov Decision Process. Since the ultimate goal is to maximize the expected utility, we will need to learn a policy function \(\pi\) that maps states to actions. Finally, while not strictly necessary, it is common to “spice up” the MDP problem by assuming that actions are non-deterministic. In the canonical grid-world example described in the book (and in a lot of undergraduate AI classes, for that matter), we assume that if we try to move North, we succeed with probability 0.8. Hence, the transition probability \(P(s' \mid s, a) = P(s,a,s')\) is nontrivial, where \(s\) and \(s'\) are states, and \(a\) is the action we took at state \(s\).

In order to understand how to get an optimal policy, we first need to define the value \(V^*(s)\) of a state, which is the expected utility we will get if we start at state \(s\) and play optimally. (The asterisk here indicates optimality.) There are, sadly, two common formulations for \(V^*(s)\). The first, from R & N, assumes that the reward aspect of the formula is defined in terms of a state only:

\[V^*(s) = R(s) + \gamma \max_a \sum_{s'} P(s,a,s') V^*(s')\]The second formulation, which Berkeley’s CS 188 class uses1, defines reward in terms of a state-action-successor triple:

\[V^*(s) = \max_a \sum_{s'} P(s,a,s') [R(s,a,s') + \gamma V^*(s')]\]Both of these equation sets can be called the Bellman Equations, which characterize the optimal values (but we will generally need some other way of computing them, as we show shortly). In general, I will utilize the second formulation, but the formulations are not fundamentally different.

It is also common to define a new quantity called a Q-value with respect to state-action pairs:

\[Q^*(s,a) = \sum_{s'} P(s,a,s')[R(s,a,s') + \gamma V^*(s')]\]In words, \(Q^*(s,a)\) is the expected utility of starting at state \(s\), taking action \(a\), and thereafter playing optimally. We can therefore relate the \(V\)s and \(Q\)s with the following equation:

\[V^*(s) = \max_a Q^*(s,a)\]I find it easiest to think of these in terms of expectimax trees with chance nodes. The “normal” nodes correspond to \(V\)s, and the “chance” nodes correspond to \(Q\)s. Both nodes have multiple successors: the \(V\)s because we have choices of actions, and \(Q\)s because, even if we commit to an action, the actual outcome is generally non-deterministic, so we will not know what state we end up in. (I guess we can also view states as the “normal” nodes here.)
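The relationship between \(V^*\) and \(Q^*\) is easy to check on a tiny hypothetical MDP (made up for illustration, not from the book): two states, action 0 = “stay” (deterministic), action 1 = “switch” (succeeds with probability 0.8, otherwise stays), reward 1 for landing in state 1, and \(\gamma = 0.9\). Solving the Bellman equations by hand gives \(V^*(1) = 1/(1-0.9) = 10\) and \(V^*(0) = 0.8(1 + 0.9 \cdot 10) + 0.2(0.9\,V^*(0))\), so \(V^*(0) = 8/0.82\). The sketch computes \(Q^*\) from \(V^*\) and confirms \(V^*(s) = \max_a Q^*(s,a)\).

```python
import numpy as np

gamma = 0.9
P = np.zeros((2, 2, 2))                  # P[s, a, s']
P[0, 0, 0] = P[1, 0, 1] = 1.0            # action 0 ("stay") is deterministic
P[0, 1] = [0.2, 0.8]                     # action 1 ("switch") succeeds with prob 0.8
P[1, 1] = [0.8, 0.2]
R = np.zeros((2, 2, 2))
R[:, :, 1] = 1.0                         # R[s, a, s'] = 1 if s' == 1, else 0

def q_from_v(V):
    """Q(s, a) = sum_{s'} P(s, a, s') [R(s, a, s') + gamma V(s')]."""
    return np.einsum("ijk,ijk->ij", P, R + gamma * V[None, None, :])

V_star = np.array([8 / 0.82, 10.0])      # solved by hand for this MDP
Q_star = q_from_v(V_star)
print(np.allclose(Q_star.max(axis=1), V_star))   # True: V*(s) = max_a Q*(s, a)
print(Q_star.argmax(axis=1))                     # [1 0]: switch in state 0, stay in state 1
```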

Given these definitions, how do we figure out the optimal policy? There are two common tactics:

-

Value Iteration is an iterative algorithm that computes values of states indexed by a \(k\), as in \(V_k(s)\), which can also be thought of as the best value of state \(s\) assuming the game ends in \(k\) time-steps. This is not the actual policy itself, but these values are used to determine the optimal policy2.

Starting with \(V_0(s) = 0\) for all \(s\), value iteration performs the following update:

\[V_{k+1}(s) \leftarrow \max_a \sum_{s'} P(s,a,s') [R(s,a,s') + \gamma V_{k}(s')]\]and repeats until convergence (in practice, until the values change by less than some small threshold).

To make this intuitive, during each step, we have a vector of \(V_k(s)\) values, and then we do a one-ply expectimax computation to get the next vector. Note that expectimax could compute all of the values we need, but it would take too long because of repeated states and potentially infinite-depth trees.

To prove that value iteration converges to \(V^*\) (and that this fixed point is unique!), we appeal to the fact that the update is a contraction in the max norm with factor \(\gamma\), and \(\gamma^k\) goes to zero as long as \(\gamma < 1\). Said another way, the “\(V_k\) tree” and the “\(V_{k+1}\) tree” differ only in their last layer, but that last layer’s contribution shrinks exponentially.

-

Policy Iteration is generally an improvement over Value Iteration because policies often converge long before the values do, so we alternate between policy evaluation and policy improvement steps. In the first step, we assume we are given a policy \(\pi\) and have to figure out the expected utilities of each state when executing \(\pi\). These values are characterized by the following equations:

\[V^\pi(s) = \sum_{s'} P(s,\pi(s),s') [R(s,\pi(s),s') + \gamma V^\pi(s')]\]Note that the max operator is gone because \(\pi(s)\) gives us our action. Thus, for \(n\) states these are \(n\) linear equations in \(n\) unknowns, which we can solve in \(O(n^3)\) time with standard linear-algebra methods3.

It is actually rather easy to do policy improvement from Q-values:

\[\pi^*(s) = \arg\max_a Q^*(s,a)\]Alternatively, we can use the more complicated expectimax form:

\[\pi^*(s) = \arg\max_a \sum_{s'} P(s,a,s')[R(s,a,s') + \gamma V^*(s')]\]Within policy iteration, we plug in the current policy’s values \(V^\pi\) in place of \(V^*\). The two steps iterate until the policy stops changing, and, like value iteration, policy iteration converges to the optimal policy. Also, these equations are really policy extraction, in the sense that given values, we know how to get a policy.
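Value iteration followed by policy extraction can be sketched in a few lines of NumPy. The MDP is a hypothetical two-state example (action 0 “stays” deterministically, action 1 “switches” with probability 0.8, reward 1 for landing in state 1, \(\gamma = 0.9\)), for which the Bellman equations give \(V^* = (8/0.82,\ 10)\) by hand. The stopping threshold `tol` is an assumption.

```python
import numpy as np

def value_iteration(P, R, gamma, tol=1e-10, max_iters=10_000):
    """V_{k+1}(s) = max_a sum_{s'} P(s,a,s') [R(s,a,s') + gamma V_k(s')], with V_0 = 0."""
    V = np.zeros(P.shape[0])
    for _ in range(max_iters):
        Q = np.einsum("ijk,ijk->ij", P, R + gamma * V[None, None, :])
        V_new = Q.max(axis=1)
        if np.max(np.abs(V_new - V)) < tol:   # stop once the values barely change
            return V_new
        V = V_new
    return V

def extract_policy(P, R, gamma, V):
    """pi(s) = argmax_a sum_{s'} P(s,a,s') [R(s,a,s') + gamma V(s')]."""
    return np.einsum("ijk,ijk->ij", P, R + gamma * V[None, None, :]).argmax(axis=1)

P = np.zeros((2, 2, 2))                  # P[s, a, s'] for the hypothetical MDP
P[0, 0, 0] = P[1, 0, 1] = 1.0
P[0, 1] = [0.2, 0.8]
P[1, 1] = [0.8, 0.2]
R = np.zeros((2, 2, 2))
R[:, :, 1] = 1.0                         # reward 1 for landing in state 1

V = value_iteration(P, R, gamma=0.9)
print(V)                                 # approximately [9.7561, 10.0]
print(extract_policy(P, R, 0.9, V))      # [1 0]
```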

In general, policy iteration seems to be a slightly better bet than value iteration, though I guess the latter could be simpler to implement in some cases since it only involves the value updating part?
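For comparison, here is a minimal policy iteration sketch on a hypothetical two-state MDP (action 0 “stays”, action 1 “switches” with probability 0.8, reward 1 for landing in state 1, \(\gamma = 0.9\); by hand, \(V^* = (8/0.82,\ 10)\)). Policy evaluation solves the linear system \(V = r_\pi + \gamma P_\pi V\) exactly with `np.linalg.solve`, and improvement is a greedy one-step lookahead. Starting from “always stay”, it should take two evaluations to stabilize.

```python
import numpy as np

def policy_evaluation(P, R, gamma, pi):
    """Solve V = r_pi + gamma P_pi V exactly (no max, so it is a linear system)."""
    n = P.shape[0]
    idx = np.arange(n)
    P_pi = P[idx, pi]                          # row s is P(s, pi(s), .)
    r_pi = np.sum(P_pi * R[idx, pi], axis=1)   # expected one-step reward under pi
    return np.linalg.solve(np.eye(n) - gamma * P_pi, r_pi)

def policy_iteration(P, R, gamma):
    pi = np.zeros(P.shape[0], dtype=int)       # start from "always take action 0"
    while True:
        V = policy_evaluation(P, R, gamma, pi)
        Q = np.einsum("ijk,ijk->ij", P, R + gamma * V[None, None, :])
        new_pi = Q.argmax(axis=1)
        if np.array_equal(new_pi, pi):         # policy stable: done
            return pi, V
        pi = new_pi

P = np.zeros((2, 2, 2))                        # P[s, a, s'] for the hypothetical MDP
P[0, 0, 0] = P[1, 0, 1] = 1.0
P[0, 1] = [0.2, 0.8]
P[1, 1] = [0.8, 0.2]
R = np.zeros((2, 2, 2))
R[:, :, 1] = 1.0

pi, V = policy_iteration(P, R, gamma=0.9)
print(pi, V)   # [1 0] and values approximately [9.7561, 10.0]
```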

If we do not know exactly what state we are in, we do not have a notion of \(s\) here, so we need to change our representation of the problem. This is the Partially Observable Markov Decision Process (POMDP) case. We augment the MDP with a sensor model \(P(e \mid s)\) and treat states as belief states. In a discrete POMDP with \(n\) states, the belief state \(b\) is an \(n\)-dimensional vector whose components are the probabilities of being in each state. The belief state update for a particular component (state) \(s'\) looks a lot like an HMM update:

\[b(s') \propto P(e \mid s') \sum_{s} P(s' \mid s, a) b(s)\]The cycle is: we choose an action from \(b\), observe evidence \(e\), compute a new belief state using the above formula, and repeat. Fortunately, the optimal action for a POMDP only depends on the current belief state, allowing us to have our policy \(\pi\) be a mapping from a belief state to an action.
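The belief update is a predict-then-weight-then-normalize step, just like HMM filtering. Here is a minimal NumPy sketch; the transition and sensor numbers are made up, and the array layout (`T[a][s, s']` \(= P(s' \mid s, a)\), `O[s', e]` \(= P(e \mid s')\)) is an assumption of this sketch.

```python
import numpy as np

def belief_update(b, a, e, T, O):
    """b'(s') proportional to P(e | s') * sum_s P(s' | s, a) b(s)."""
    predicted = T[a].T @ b             # sum_s P(s' | s, a) b(s), for every s'
    unnormalized = O[:, e] * predicted
    return unnormalized / unnormalized.sum()

T = np.array([[[0.7, 0.3],
               [0.2, 0.8]]])           # one action (a = 0); T[a][s, s'] = P(s' | s, a)
O = np.array([[0.9, 0.1],
              [0.2, 0.8]])             # O[s', e] = P(e | s')
b = np.array([0.5, 0.5])               # uniform initial belief

b_new = belief_update(b, a=0, e=0, T=T, O=O)
print(b_new)   # approximately [0.7864, 0.2136]
```

By hand: the prediction step gives \((0.45, 0.55)\), weighting by \(P(e{=}0 \mid s')\) gives \((0.405, 0.11)\), and normalizing by \(0.515\) gives the result above.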