My Blog Posts, in Reverse Chronological Order

One-Shot Visual Imitation Learning via Meta-Learning

A follow-up paper to the one I discussed in my previous post is One-Shot Visual Imitation Learning via Meta-Learning. The idea is, again, to train neural network parameters \(\theta\) on a distribution of tasks such that the parameters are easy to fine-tune to new tasks sampled from the distribution. In this paper, the focus is on imitation learning from raw pixels and showing the effectiveness of a one-shot imitator on a physical PR2 robot.

Recall that the original MAML paper showed the algorithm applied to supervised regression (for sinusoids), supervised classification (for images), and reinforcement learning (for MuJoCo). This paper shows how to use MAML for imitation learning, and the extension is straightforward. First, each imitation task \(\mathcal{T}_i \sim p(\mathcal{T})\) contains the following information:

-

A trajectory \(\tau = \{o_1,a_1,\ldots,o_T,a_T\} \sim \pi_i^*\), consisting of a sequence of observations and actions from an expert policy \(\pi_i^*\). Remember, this is imitation learning, so we can assume an expert. Also, note that the expert policy is task-specific.

-

A loss function \(\mathcal{L}(a_{1:T},\hat{a}_{1:T}) \to \mathbb{R}\) providing feedback on how closely our actions match the expert’s.

Since the focus of the paper is on “one-shot” learning, we assume we only have one trajectory available for the “inner” gradient update portion of meta-training for each task \(\mathcal{T}_i\). However, if you recall from MAML, we actually need at least one more trajectory for the “outer” gradient portion of meta-training, as we need to compute a “validation error” for each sampled task. This is not the overall meta-test time evaluation, which relies on an entirely new task sampled from the distribution (and which only needs one trajectory, not two or more). Yes, the terminology can be confusing. When I refer to “test time evaluation” I always refer to when we have trained \(\theta\) and we are doing few-shot (or one-shot) learning on a new task that was not seen during training.

All the tasks in this paper use continuous control, so the loss function for optimizing our neural network policy \(f_\theta\) can be described as:

\[\mathcal{L}_{\mathcal{T}_i}(f_\theta) = \sum_{\tau^{(j)} \sim p(\mathcal{T}_i)} \sum_{t=1}^T \| f_\theta(o_t^{(j)}) - a_t^{(j)} \|_2^2\]where the first sum normally has one trajectory only, hence the “one-shot learning” terminology, but we can easily extend it to several sampled trajectories if our task distribution is very challenging. The overall objective is now:

\[{\rm minimize}_\theta \sum_{\mathcal{T}_i\sim p(\mathcal{T})} \mathcal{L}_{\mathcal{T}_i} (f_{\theta_i'}) = \sum_{\mathcal{T}_i\sim p(\mathcal{T})} \mathcal{L}_{\mathcal{T}_i} \Big(f_{\theta - \alpha \nabla_\theta \mathcal{L}_{\mathcal{T}_i}(f_\theta)}\Big)\]and one can simply run Adam to update \(\theta\).
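To make the split between the “inner” and “outer” demonstrations concrete, here is a minimal NumPy sketch that just evaluates the objective above. It assumes a linear policy \(f_\theta(o) = \theta o\) in place of the paper’s convolutional network, and the function names (`bc_loss`, `bc_grad`, `adapt`, `meta_objective`) are my own, not the paper’s. Each task supplies one demonstration for the inner update and a second one for the validation loss.

```python
import numpy as np

def bc_loss(theta, obs, act):
    """Sum over time steps of ||f_theta(o_t) - a_t||^2 for a linear policy."""
    resid = obs @ theta.T - act          # shape (T, action_dim)
    return float(np.sum(resid ** 2))

def bc_grad(theta, obs, act):
    """Gradient of bc_loss with respect to theta, shape (action_dim, obs_dim)."""
    resid = obs @ theta.T - act
    return 2.0 * resid.T @ obs

def adapt(theta, demo, alpha):
    """One inner gradient step on a single demonstration (the 'one shot')."""
    obs, act = demo
    return theta - alpha * bc_grad(theta, obs, act)

def meta_objective(theta, tasks, alpha):
    """Outer objective: validation loss after adapting on the training demo.

    `tasks` is a list of (train_demo, val_demo) pairs, where each demo is an
    (obs, act) tuple of arrays with shapes (T, obs_dim) and (T, action_dim).
    """
    total = 0.0
    for train_demo, val_demo in tasks:
        theta_i = adapt(theta, train_demo, alpha)   # theta_i' in the math
        total += bc_loss(theta_i, *val_demo)        # scored on the second demo
    return total
```

An optimizer such as Adam would then minimize `meta_objective` with respect to `theta`, which requires differentiating through `adapt`; this sketch only computes the value being minimized.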

This paper uses two new techniques for better performance: a two-headed architecture, and a bias transformation.

-

Two-Headed Architecture. Let \(y_t^{(j)}\) be the vector of post-activation values just before the last fully connected layer which maps to motor torques. The last layer has parameters \(W\) and \(b\), so the inner loss function \(\mathcal{L}_{\mathcal{T}_i}(f_\theta)\) can be re-written as:

\[\mathcal{L}_{\mathcal{T}_i}(f_\theta) = \sum_{\tau^{(j)} \sim p(\mathcal{T}_i)} \sum_{t=1}^T \| Wy_t^{(j)} + b- a_t^{(j)} \|_2^2\]where, I suppose, we should write \(\phi = (\theta, W, b)\) and re-define \(\theta\) to be all the parameters used to compute \(y_t^{(j)}\).

In this paper, the test-time single demonstration of the new task is normally provided as a sequence of observations (images) and actions. However, they also experiment with the more challenging case of removing the provided actions for that single test-time demonstration. They simply remove the action and use this inner loss function:

\[\mathcal{L}_{\mathcal{T}_i}(f_\theta) = \sum_{\tau^{(j)} \sim p(\mathcal{T}_i)} \sum_{t=1}^T \| Wy_t^{(j)} + b\|_2^2\]This is still a bit confusing to me. I’m not sure why this loss function leads to the desired outcome. It’s also a bit unclear how the two-headed architecture training works. After another read, maybe only the \(W\) and \(b\) are updated in the inner portion?

The two-headed architecture seems to be beneficial on the simulated pushing task, with performance improving by about 5-6 percentage points. That may not sound like a lot, but this was in simulation and they were able to test with 444 total trials.

The other confusing part is that if we assume we’re allowed to have access to expert actions, then the real-world experiment actually used the single-headed architecture, and not the two-headed one. So there wasn’t a benefit to the two-headed one assuming we have actions. Without actions, of course, the two-headed one is our only option.

-

Bias Transformation. After a certain neural network layer (in this paper, the 2D spatial softmax applied after the convolutions that process the images), they concatenate a vector of learned parameters to that layer’s output. They claim that

[…] the bias transformation increases the representational power of the gradient, without affecting the representation power of the network itself. In our experiments, we found this simple addition to the network made gradient-based meta-learning significantly more stable and effective.

However, the paper doesn’t seem to show too much benefit to using the bias transformation. A comparison is reported in the simulated reaching task, with a dimension of 10, but it could be argued that performance is similar without the bias transformation. For the two other experimental domains, I don’t think they reported with and without the bias transformation.

Furthermore, neural networks already have biases. So is there some particular advantage to having more biases packed in one layer, and furthermore, with that layer being the same spot where the robot configuration is concatenated with the processed image (like what people do with self-supervision)? I wish I understood. The math that they use to justify the gradient representation claim makes sense; I’m just missing a tiny step to figure out its practical significance.
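As far as I can tell, the “without affecting the representation power” half of the claim is easy to check: in the forward pass, concatenating a parameter vector before a fully connected layer is the same as an ordinary layer whose bias has been re-parameterized. The sketch below verifies this numerically. The function names and shapes are illustrative assumptions, and `z` stands in for the concatenated parameter vector.

```python
import numpy as np

def layer_with_bias_transformation(x, z, W, b):
    """Fully connected layer applied to the features x concatenated with z."""
    return W @ np.concatenate([x, z]) + b

def equivalent_plain_layer(x, z, W, b):
    """Same output, written as a plain layer on x with bias (W_z z + b)."""
    W_x, W_z = W[:, :x.size], W[:, x.size:]
    return W_x @ x + (W_z @ z + b)

rng = np.random.default_rng(0)
x = rng.normal(size=4)        # features, e.g. from the spatial softmax
z = rng.normal(size=10)       # bias-transformation parameters (dimension 10)
W = rng.normal(size=(3, 14))  # weights of the next layer
b = rng.normal(size=3)
same = np.allclose(layer_with_bias_transformation(x, z, W, b),
                   equivalent_plain_layer(x, z, W, b))
```

What changes is the gradient step: the effective bias \(W_z z + b\) is now updated through \(z\), \(W_z\), and \(b\) together, rather than through \(b\) alone. Whether that matters much in practice is exactly the part I am unsure about.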

They ran their setups on three experimental domains: simulated reaching, simulated pushing, and (drum roll please) real robotic tasks. For these domains, they seem to have tested up to 5.5K demonstrations for reaching and 8.5K for pushing. For the real robot, they used 1.3K demonstrations (ouch, I wonder how long that took!). The results certainly seem impressive, and I agree that this paper is a step towards generalist robots.

Model-Agnostic Meta-Learning

One of the recent landmark papers in the area of meta-learning is MAML: Model-Agnostic Meta-Learning. The idea is simple yet surprisingly effective: train neural network parameters \(\theta\) on a distribution of tasks so that, when faced with a new task, they can be rapidly adjusted through just a few gradient steps. In this post, I’ll briefly go over the notation and problem formulation for MAML, and meta-learning more generally.

Here’s the notation and setup, mostly following the paper:

-

The overall model \(f_\theta\) is what MAML is optimizing, with parameters \(\theta\). We denote \(\theta_i'\) as weights that have been adapted to the \(i\)-th task through one or more gradient steps. Since MAML can be applied to classification, regression, reinforcement learning, and imitation learning (plus even more stuff!) we generically refer to \(f_\theta\) as mapping from inputs \(x_t\) to outputs \(a_t\).

-

A task \(\mathcal{T}_i\) is defined as a tuple \((T_i, q_i, \mathcal{L}_{\mathcal{T}_i})\), where:

-

\(T_i\) is the time horizon. For (IID) supervised learning problems like classification, \(T_i=1\). For reinforcement learning and imitation learning, it’s whatever the environment dictates.

-

\(q_i\) is the transition distribution, defining a prior over initial observations \(q_i(x_1)\) and the transitions \(q_i(x_{t+1}\mid x_{t},a_t)\). Again, we can generally ignore this for simple supervised learning. Also, for imitation learning, this reduces to the distribution over expert trajectories.

-

\(\mathcal{L}_{\mathcal{T}_i}\) is a loss function that maps the sequence of network inputs \(x_{1:T}\) and outputs \(a_{1:T}\) to a scalar value indicating the quality of the model. For supervised learning tasks, this is almost always the cross entropy or squared error loss.

-

Tasks are drawn from some distribution \(p(\mathcal{T})\). For example, we can have a distribution over the abstract concept of doing well at “block stacking tasks”. One task could be about stacking blue blocks. Another could be about stacking red blocks. Yet another could be stacking blocks that are numbered and need to be ordered consecutively. Clearly, the performance of meta-learning (or any alternative algorithm, for that matter) on optimizing \(f_\theta\) depends on \(p(\mathcal{T})\). The more diverse the distribution’s tasks, the harder it is for \(f_\theta\) to quickly learn new tasks.

The MAML algorithm specifically finds a set of weights \(\theta\) that are easily fine-tuned to new, held-out tasks (for testing) by optimizing the following:

\[{\rm minimize}_\theta \sum_{\mathcal{T}_i\sim p(\mathcal{T})} \mathcal{L}_{\mathcal{T}_i} (f_{\theta_i'}) = \sum_{\mathcal{T}_i\sim p(\mathcal{T})} \mathcal{L}_{\mathcal{T}_i} \Big(f_{\theta - \alpha \nabla_\theta \mathcal{L}_{\mathcal{T}_i}(f_\theta)}\Big)\]This assumes that \(\theta_i' = \theta - \alpha \nabla_\theta \mathcal{L}_{\mathcal{T}_i}(f_\theta)\). It is also possible to take multiple gradient steps, not just one. Note that the number of steps is separate from the number of “shots”: in \(K\)-shot learning, \(\theta_i'\) is computed from \(K\) examples of the task (per class, for classification), using one or more gradient updates. However, “one shot” is cooler than “few shot” and also easier to write, so we’ll stick with that.

Let’s look at the loss function above. We are optimizing over a sum of loss functions across several tasks. But we are evaluating the (outer-most) loss functions while assuming we made gradient updates to our weights \(\theta\). What if the loss function were like this:

\[{\rm minimize}_\theta \sum_{\mathcal{T}_i\sim p(\mathcal{T})} \mathcal{L}_{\mathcal{T}_i} (f_{\theta})\]This means \(f_\theta\) would be capable of learning how to perform well across all these tasks. But there’s no guarantee that this will work on held-out tasks, and generally speaking, unless the tasks are very closely related, it shouldn’t work. (I’ve tried doing some similar stuff in the past with the Atari 2600 benchmark where a “task” was “doing well on game X”, and got networks to optimize across several games, but generalization was not possible without fine-tuning.) Also, even if we were allowed to fine-tune, it’s very unlikely that one or a few gradient steps would lead to solid performance. MAML should do better precisely because it optimizes \(\theta\) so that it can adapt to new tasks with just a few gradient steps.

MAML is an effective algorithm for meta-learning, and one of its advantages over other algorithms such as \({\rm RL}^2\) is that it is parameter-efficient. The gradient updates above do not introduce extra parameters. Furthermore, the actual optimization over the full model \(\theta\) is also done via SGD

\[\theta = \theta - \beta \left( \nabla_\theta \sum_{\mathcal{T}_i\sim p(\mathcal{T})} \mathcal{L}_{\mathcal{T}_i} \Big(f_{\theta - \alpha \nabla_\theta \mathcal{L}_{\mathcal{T}_i}(f_\theta)}\Big) \right)\]again introducing no new parameters. (The update is actually Adam if we’re doing supervised learning, and TRPO if doing RL, but SGD is the foundation of those and it’s easier for me to write the math. Also, even though the outer updates may be complex, I think the inner part, where we have \(f_{\theta - \alpha \nabla_\theta \mathcal{L}_{\mathcal{T}_i}(f_\theta)}\), is always vanilla SGD, but I could be wrong.)

I’d like to emphasize a key point: the above update mandates two instances of \(\mathcal{L}_{\mathcal{T}_i}\). One of these, the one in the subscript used to get \(\theta_i'\), should involve the \(K\) training instances from the task \(\mathcal{T}_i\) (or more specifically, \(q_i\)). The outer-most loss function should be computed on testing instances, also from task \(\mathcal{T}_i\). This is important because we want our ultimate evaluation to be done on testing instances.

Another important point is that we do not use those “testing instances” for evaluating meta-learning algorithms, as that would be cheating. For testing, one takes an entirely held-out set of test tasks, adjusts \(\theta\) using the few examples allowed per task (one in the case of one-shot learning, etc.) and then evaluates according to whatever metric is appropriate for the task distribution.
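Here is a small NumPy sketch of the whole loop, under strong simplifying assumptions: the model is linear, the loss is squared error, and the tasks are toy regression problems that I made up (`make_task` is hypothetical). With those choices the gradient through the inner step can be written by hand, since the Jacobian of \(\theta_i'\) with respect to \(\theta\) is the identity minus \(\alpha\) times the Hessian of the inner loss. Real implementations would rely on automatic differentiation instead.

```python
import numpy as np

def loss(theta, X, y):
    """Squared-error loss of a linear model on one batch."""
    r = X @ theta - y
    return float(r @ r)

def grad(theta, X, y):
    """Gradient of `loss` with respect to theta."""
    return 2.0 * X.T @ (X @ theta - y)

def adapt(theta, X_tr, y_tr, alpha):
    """One inner gradient step on the task's training instances."""
    return theta - alpha * grad(theta, X_tr, y_tr)

def meta_loss(theta, tasks, alpha):
    """Outer objective: loss on testing instances after the inner step."""
    return sum(loss(adapt(theta, *tr, alpha), *te) for tr, te in tasks)

def meta_grad(theta, tasks, alpha):
    """Exact gradient of `meta_loss`, differentiating through the inner step."""
    g = np.zeros_like(theta)
    for (X_tr, y_tr), (X_te, y_te) in tasks:
        theta_i = adapt(theta, X_tr, y_tr, alpha)
        # d(theta_i)/d(theta) = I - alpha * (Hessian of the inner loss)
        jac = np.eye(theta.size) - alpha * 2.0 * X_tr.T @ X_tr
        g += jac.T @ grad(theta_i, X_te, y_te)
    return g

def make_task(rng, dim=3, n=5):
    """Hypothetical task: noiseless linear regression with random weights."""
    w = rng.normal(size=dim)
    X_tr, X_te = rng.normal(size=(n, dim)), rng.normal(size=(n, dim))
    return (X_tr, X_tr @ w), (X_te, X_te @ w)

rng = np.random.default_rng(0)
train_tasks = [make_task(rng) for _ in range(3)]
theta = np.zeros(3)
for _ in range(100):
    theta = theta - 1e-3 * meta_grad(theta, train_tasks, alpha=0.01)  # outer SGD

# Meta-test: adapt on a held-out task's training instances, score on its test ones.
(X_tr, y_tr), (X_te, y_te) = make_task(rng)
held_out_loss = loss(adapt(theta, X_tr, y_tr, alpha=0.01), X_te, y_te)
```

Note the two data splits at play: within each training task, the inner step sees the training instances and the outer loss sees the testing instances, while the held-out task at the end plays no role in fitting `theta`.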

In a subsequent post, I will further investigate several MAML extensions.

Zero-Shot Visual Imitation

In this post, I will further investigate one of the papers I discussed in an earlier blog post: Zero-Shot Visual Imitation (Pathak et al., 2018).

For notation, I denote states and actions at some time step \(t\) as \(s_t\) and \(a_t\), respectively, if they were obtained through the agent exploring in the environment. A hat symbol, \(\hat{s}_t\) or \(\hat{a}_t\), refers to a prediction made from some machine learning model.

Basic forward (left) and inverse (right) model designs.

Recall the basic forward and inverse model structure (figure above). A forward model takes in a state-action pair and predicts the subsequent state \(\hat{s}_{t+1}\). An inverse model takes in a current state \(s_t\) and some goal state \(s_g\), and must predict the action that will enable the agent to go from \(s_t\) to \(s_g\).

-

It’s easiest to view the goal input to the inverse model as either the very next state \(s_{t+1}\), or the final desired goal of the trajectory, but some papers also use \(s_g\) as an arbitrary checkpoint (Agrawal et al., 2016, Nair et al., 2017, Pathak et al., 2018). For the simplest model, it probably makes most sense to have \(s_g = s_{t+1}\) but I will use \(s_g\) to maintain generality. It’s true that \(s_g\) may be “far” from \(s_t\), but the inverse model can predict a sequence of actions if needed.

-

If the states are images, these models tend to use convolutions to get a lower dimensional featurized state representation. For instance, inverse models often process the two input images through tied (i.e., shared) convolutional weights to obtain \(\phi(s_t)\) and \(\phi(s_{t+1})\), upon which they’re concatenated and then processed through some fully connected layers.

As I discussed earlier, there are a number of issues related to this basic forward/inverse model design, most notably about (a) the high dimensionality of the states, and (b) the multi-modality of the action space. To be clear on (b), there may be many (or no) action(s) that let the agent go from \(s_t\) to \(s_g\), and the number of possibilities increases with a longer time horizon, if \(s_g\) is many states in the future.

Let’s understand how the model proposed in Zero-Shot Visual Imitation mitigates (b). Their inverse model takes in \(s_g\) as an arbitrary checkpoint/goal state and must output a sequence of actions that allows the agent to arrive at \(s_g\). To simplify the discussion, let’s suppose we’re only interested in predicting one step in the future, so \(s_g = s_{t+1}\). Their predictive physics design is shown below.

The basic one-step model, assuming that our inverse model just needs to predict one action. The convolutional layers for the inverse model use the same tied network convolutional weights. The action loss is the cross-entropy loss (assuming discrete actions), and is not written in detail due to cumbersome notation.

The main novelty here is that our predicted action \(\hat{a}_t\) from the inverse model is provided as input to the forward model, along with the current state \(s_t\). We then try to obtain \(s_{t+1}\), the actual state that was encountered during the agent’s exploration. This loss \(\mathcal{L}(s_{t+1}, \hat{s}_{t+1})\) is the standard Euclidean distance and is added to the action prediction loss \(\mathcal{L}(a_t,\hat{a}_t)\), which is the usual cross-entropy (for discrete actions).

Why is this extra loss function from the successor states used? It’s because we mostly don’t care which action we took, so long as it leads to the desired next state. Thus, we really want \(\hat{s}_{t+1} \approx s_{t+1}\).
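The combined objective can be sketched in a few lines of NumPy. This is a toy version under my own assumptions, not the authors’ architecture: both models are single linear maps (`inverse_W` and `forward_W` are hypothetical), states are flat vectors rather than images, and the forward model receives the predicted action as a softmax probability vector so that the state loss stays differentiable with respect to the inverse model.

```python
import numpy as np

def softmax(logits):
    """Numerically stable softmax over a 1-D array of logits."""
    shifted = logits - np.max(logits)
    exp = np.exp(shifted)
    return exp / exp.sum()

def forward_consistency_loss(s_t, s_next, a_t, inverse_W, forward_W):
    """Return (total, action_loss, state_loss) for one transition.

    s_t, s_next : state vectors of equal length
    a_t         : index of the discrete action actually taken
    inverse_W   : maps concat(s_t, s_next) to action logits
    forward_W   : maps concat(s_t, action probabilities) to a predicted state
    """
    # Inverse model: predict the action from the current state and the goal.
    probs = softmax(inverse_W @ np.concatenate([s_t, s_next]))
    action_loss = -np.log(probs[a_t])              # cross-entropy vs. a_t

    # Forward model: fed the *predicted* action, not the ground-truth one.
    s_hat = forward_W @ np.concatenate([s_t, probs])
    state_loss = np.linalg.norm(s_next - s_hat)    # Euclidean distance

    return action_loss + state_loss, action_loss, state_loss
```

If the inverse model picks a different action that still lands in \(s_{t+1}\), the action loss penalizes it but the state loss does not, which is the intended effect of adding the second term.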

Two extra long-winded comments:

-

There’s some subtlety with making this work. The state loss \(\mathcal{L}(s_{t+1}, \hat{s}_{t+1})\) treats \(s_{t+1}\) as ground truth, but that assumes we took action \(a_t\) from state \(s_t\). If we instead took \(\hat{a}_t\) from \(s_t\), and \(\hat{a}_t \ne a_t\), then it seems like the ground-truth should no longer be \(s_{t+1}\)?

Assuming we’ve trained long enough, then I understand why this will work, because the inverse model will predict \(\hat{a}_t = a_t\) most of the time, and hence the forward model loss makes sense. But one has to get to that point first. In short, the forward model training must assume that the given action will actually result in a transition from \(s_t\) to \(s_{t+1}\).

The authors appear to mitigate this with pre-training the inverse and forward models separately. Given ground truth data \(\mathcal{D} = \{s_1,a_1,s_2,\ldots,s_N\}\), we can pre-train the forward model with this collected data (no action predictions) so that it is effective at understanding the effect of actions.

This would also enable better training of the inverse model, which (as the authors point out) depends on an accurate forward model to be able to check that the predicted action \(\hat{a}_t\) has the desired effect in state-space. The inverse model itself can also be pre-trained entirely on the ground-truth data while ignoring \(\mathcal{L}(s_{t+1}, \hat{s}_{t+1})\) from the training objective.

I think this is what the authors did, though I wish there were a few more details.

-

A surprising aspect of the forward model is that it appears to predict the raw states \(s_{t+1}\), which could be very high-dimensional. I’m surprised that this works, given that (Agrawal et al., 2016) explicitly avoided this by predicting lower-dimensional features. Perhaps it works, but I wish the network architecture was clear. My guess is that the forward model processes \(s_t\) to be a lower dimensional vector \(\psi(s_t)\), concatenates it with \(\hat{a}_t\) from the inverse model, and then up-samples it to get the original image. Brandon Amos describes up-sampling in his excellent blog post. (Note: don’t call it “deconvolution.”)

Now how do we extend this for multi-step trajectories? The solution is simple: make the inverse model a recurrent neural network. That’s it. The model still predicts \(\hat{a}_t\) and we use the same loss function (summing across time steps) and the same forward model. For the RNN, the convolutional layers \(\phi\) take in the current state and, at every step, the goal state \(s_g\). The RNN also takes in \(h_{i-1}\) and \(a_{i-1}\), the previous hidden unit and the previous action (not the predicted action, that would be a bit silly when we have ground truth).

The multi-step trajectory case, visualizing several steps out of many.
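To pin down what goes into the recurrent inverse model at each step, here is a minimal NumPy sketch of a teacher-forced rollout. It is a guess at the data flow only: the cell is a plain tanh RNN, the features `feats` and `goal_feat` are assumed to come from the shared encoder \(\phi\), and the parameter names are mine.

```python
import numpy as np

def rnn_inverse_rollout(feats, goal_feat, actions, params, num_actions):
    """Per-step action logits for one trajectory, teacher-forced on true actions.

    feats     : array (T, d) of encoded states phi(s_i)
    goal_feat : array (d,) holding phi(s_g), fed in at every step
    actions   : length-T sequence of ground-truth discrete action indices
    params    : (W_in, W_h, W_out) with shapes
                (H, 2 * d + num_actions), (H, H), (num_actions, H)
    """
    W_in, W_h, W_out = params
    h = np.zeros(W_h.shape[0])
    prev_action = np.zeros(num_actions)         # no previous action at step one
    logits = []
    for feat, a in zip(feats, actions):
        x = np.concatenate([feat, goal_feat, prev_action])
        h = np.tanh(W_in @ x + W_h @ h)         # h_i from h_{i-1} and the inputs
        logits.append(W_out @ h)                # prediction for the action at step i
        prev_action = np.eye(num_actions)[a]    # ground truth, not the prediction
    return np.stack(logits)
```

At test time there is no ground-truth action to feed back, so the previous predicted action would have to take its place.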

Thoughts:

-

Why not make the forward model recurrent?

-

Should we weight shorter-term actions more heavily instead of summing everything equally, as they appear to be doing?

-

How do we actually decide the length of the action vector to predict? Or said in a better way, when do we decide that we’ve attained \(s_g\)?

Fortunately, the authors answer that last thought by training a deep neural network that can learn a stopping criterion. They say:

We sample states at random, and for every sampled state make positives of its temporal neighbors, and make negatives of the remaining states more distant than a certain margin. We optimize our goal classifier by cross-entropy loss.

So, states “close” to each other are positive samples, whereas “farther” samples are negative. Sure, that makes sense. By distance I assume simple Euclidean distance on raw pixels? I’m generally skeptical of Euclidean distance but it might be necessary if the forward model also optimizes the same objective. I also assume this is applied after each time step, testing whether \(s_i\) at time \(i\) has reached \(s_g\). Thus, it is not known ahead of time how many actions the RNN must be able to predict before the goal is reset.
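For concreteness, here is one way the training pairs for such a goal classifier could be generated. This sketch assumes that both “temporal neighbors” and the margin are measured in time steps along a single trajectory, which is my reading of the quote rather than something I can confirm. The function and parameter names are hypothetical.

```python
import random

def goal_classifier_pairs(num_states, neighbor_window, margin, num_anchors, seed=0):
    """Return (anchor, other, label) index triples for one trajectory.

    label 1: `other` is within `neighbor_window` steps of the anchor.
    label 0: `other` is more than `margin` steps away from the anchor.
    States in between are skipped, since they are ambiguous.
    """
    rng = random.Random(seed)
    pairs = []
    for _ in range(num_anchors):
        i = rng.randrange(num_states)        # sample an anchor state at random
        for j in range(num_states):
            gap = abs(i - j)
            if gap <= neighbor_window:       # temporal neighbor (includes i itself)
                pairs.append((i, j, 1))
            elif gap > margin:               # beyond the margin
                pairs.append((i, j, 0))
    return pairs
```

A binary classifier trained with cross-entropy on the corresponding image pairs would then serve as the stopping criterion.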

An alternative is mentioned about treating stopping as an action. There’s some resemblance between this and DDO’s option termination criterion.

Additionally, we have this relevant comment on OpenReview:

The independent goal recognition network does not require any extra work concerning data or supervision. The data used to train the goal recognition network is the same as the data used to train the PSF. The only prior we are assuming is that nearby states to the randomly selected states are positive and far away are negative which is not domain specific. This prior provides supervision for obtaining positive and negative data points for training the goal classifier. Note that, no human supervision or any particular form of data is required in this self-supervised process.

Yes, this makes sense.

Now let’s discuss the experiments. The authors test several ablations of their model:

-

An inverse model with no forward model at all (Nair et al., 2017). This is different from their earlier paper which used a forward model for regularization purposes (Agrawal et al., 2016). The model in (Nair et al., 2017) just used the inverse model for predicting an action given current image \(I_t\) and (critically!) a goal image \(I_{t+1}'\) specified by a human.

-

A more sophisticated inverse model with an RNN, but no forward model. Think of my most recent hand-drawn figure above, except without the forward portion. Furthermore, this baseline also does not use the action \(a_i\) as input to the RNN structure.

-

An even more sophisticated model where the action history is now input to the RNN. Otherwise, it is the same as the one I just described above.

Thus, none of their three ablations use the forward consistency model, and all are trained solely by minimizing \(\mathcal{L}(a_t,\hat{a}_t)\). I suppose this is reasonable, and to be fair, testing these out in physical trials takes a while. (Training should be less cumbersome because data collection is the bottleneck. Once they have data, they can train all of their ablations quickly.) Finally, note that all these inverse models take \((s_t,s_g)\) as input, and \(s_g\) is not necessarily \(s_{t+1}\). I remember this from the greedy planner in (Agrawal et al., 2016).

The experiments are: navigating a short mobile robot throughout rooms and performing rope manipulation with the same setup from (Nair et al., 2017).

-

Indoor navigation. They show the model an image of the target goal, and check if the robot can use it to arrive there. This obviously works best when few actions are needed; otherwise, waypoints are necessary. However, for results to be interesting enough, the target image should not have any overlap with the starting image.

The actions are: (1) move forward 10cm, (2) turn left, (3) turn right, and (4) stand still. They use several “tricks” such as using action repeats, applying a reset maneuver, etc. A ResNet acts as the image processing pipeline, and then (I assume) the ResNet output is fed into the RNN along with the hidden layer and action vector.

Indeed, it seems like their navigating robot can reach goal states and is better than the baselines! They claim their robot learns first to turn and then to move to the target. To make results more impressive, they tested all this on a different floor from where the training data was collected. Nice! The main downside is that they conducted only eight trials for each method, which might not be enough to be entirely convincing.

Another set of experiments tests imitation learning, where the goal images are far away from the robot, thus mandating a series of checkpoint images specified by a human. Every fifth image in a human demonstration was provided as a waypoint. (Note: this doesn’t mean the robot will take exactly five steps for each waypoint even if it was well trained, because it may take four or six or some other number of actions before it deems itself close enough to the target.) Unfortunately, I have a similar complaint as earlier: I wish there were more than just three trials.

-

Rope manipulation. They claim almost a 2x performance boost over (Nair et al., 2017) while using the same training data of 60K-70K interaction pairs. That’s the benefit of building upon prior work. They surprisingly never say how many trials they have, and their table reports only a “bootstrapped standard deviation”. Looking at (Nair et al., 2017), I cannot find where the 35.8% figure comes from (I see 38% in that paper but that’s not 35.8%…).

According to OpenReview comments they also trained the model from (Agrawal et al., 2016) and claim 44% accuracy. This needs to be in the final version of the paper. The difference from (Nair et al., 2017) is that (Agrawal et al., 2016) jointly train a forward model (not to enforce dynamics, but just as a regularizer), while (Nair et al., 2017) do not have any forward model.

Despite the lack of detail in some areas of the paper (where’s the appendix?!?), I certainly enjoyed reading it and would like to try out some of this stuff.

A Critical Comparison of Three Half Marathons I Have Run

I have now run in three half marathons: the Berkeley Half Marathon (November 2017), the Kaiser Permanente San Francisco Half Marathon (February 2018), and the Oakland Half Marathon (March 2018).

To be clear, the Kaiser Permanente San Francisco half marathon is not the same as a separate set of San Francisco races in the summers. The Oakland Half Marathon is also technically the “Kaiser Permanente […]” but since there’s only one main set of Oakland races a year — known as the “Running Festival” — we can be more lenient in our naming convention.

All these races are popular, and the routes are relatively flat and therefore great for setting PRs. I would be happy to run any of these again. In fact, I probably will, for all three!

In this post, I’ll provide some brief comments on each of the races. Note that:

-

When I list registration fees, it’s not always a clear-cut comparison since prices jack up closer to race day. I think I managed to get an “early bird” deal for all these races, so hopefully the prices are somewhat comparable. Also, I include taxes in the fee I list.

-

By “packet pickup” I refer to when runners pick up whatever racing material is needed (typically a timing chip, bib, sometimes gear as well) a day or two before the actual race. These pickup events also involve some deals for food and running equipment from race sponsors. Below is a picture that I took of the Oakland packet pickup:

-

While I list “pros” and “cons” of the races, most are minor in the grand scheme of things, and this review is for those who might be picky. I reiterate that I will probably run in all of these again the next time around.

OK, let’s get started!

Berkeley Half Marathon

- Website: here.

- Price I paid: about 100 dollars, including a 10 dollar bib shipping fee.

Pros:

-

The race has a great “local feel” to it, with lots of Berkeley students and residents either running in the race or cheering us on as spectators. I saw a number of people that I knew, mostly other student runners, and it was nice to say hi to them. There was also a cool drumming band which played while we were entering the portion of the race close to the San Francisco Bay.

-

The course is mostly flat, and enters a few Berkeley neighborhoods (again, a great local feel to it). There’s also a relatively straight section at roughly the 8-11 mile range by the San Francisco Bay, which lets you see the runners ahead of you as you enter that portion (for extra motivation). As I discussed two years ago, I regularly run by this area so I was used to the view, but I can see it being attractive for those who don’t use the same routes.

-

There are lots of pacers, for half-marathon finish times of 1:27, 1:35 (2x), 1:45, 1:55, etc.

-

The post-race food sampling selection was fantastic! There were the obligatory water bottles and bananas, but I also had tasty Power Crunch protein bars, Muscle Milk (this is clearly bad for you, but never mind), pretzels, cookies, coffee, etc. There was also beer, but I didn’t have any.

-

Post-race deals are excellent. I used them to order some Power Crunch bars at a discount.

-

The packet pickup had some decent free food samples. The race shirt is interesting — it’s a different style from prior years and feels somewhat odd but I surprisingly like it, and I’ll be wearing it both to school and for when I run in my own time.

Cons:

-

There’s a $10 bib mailing fee, and I realize now that it’s pointless to pay for it because we also have to pick up a timing chip during packet pickup, and that’s when we could have gotten the bibs. Thus, there seems to be no advantage to paying for the bib to be mailed. Furthermore, I wish the timing chip were attached to the bib; we had to tie it within our shoelaces. I think it’s far easier to stick it on the bib.

-

The starting location is a bit awkwardly placed in the center of the city, though to be fair, I’m not sure of a better spot. Certainly it’s less convenient for drop-offs and Uber rides compared to, say, Golden Gate Park.

-

There were seven water stops, one of which had electrolytes and GU energy chews. (Unfortunately, when running, I actually dropped two out of the four GU chews I was given … please use the longer, thinner packages that the Oakland race uses!!) The other two races offered richer goodies at the aid stations so next time, I’ll bring my own energy stuff.

-

It was the most expensive of the races I’ve run in, though the difference isn’t that much, especially if you avoid making the mistake of getting your bib mailed to you.

-

The photography selection after the race is excellent, but it’s expensive and most of it is concentrated near the end of the race when it’s crowded, so most pictures weren’t that interesting.

Kaiser Permanente San Francisco Half Marathon

- Website: here.

- Price I paid: about $80.

Upsides:

-

The race route is great! I enjoyed running through Golden Gate Park and seeing the Japanese Tea Garden, the California Academy of Sciences, and so on. There’s also a very long, straight section in the second half of the race (longer than Berkeley’s!) by the ocean where you can again see the runners ahead of you on their way back.

-

There’s a great selection of post-race sampling, arguably on par with Berkeley though there’s no beer. There were water bottles and bananas, along with CLIF Whey protein bars, Ocho candy, some coffee/caffeine-based drinks, etc.

-

The price is the cheapest of the three, which is surprising since I figured things in San Francisco would be more expensive. I suspect it has to do with much of the race being in Golden Gate Park, and the course is set so that there isn’t a need to close many roads. On a related note, it’s also easy to drop off and pick up racers.

-

You have to finish the race to get your shirt. Of course this is minor, but I believe it’s not a good idea to wear the official race shirt on race day. Incidentally, there’s no packet pickup, which means we don’t get free samples or deals, but it’s probably better for me since I would have had to Uber a long distance there and back. You get the bib and timing chip mailed in advance, and the timing chip is (thankfully) attached to the bib.

Downsides:

-

No pacers. I don’t normally try to stick to a pacer during my races, but I think they’re useful.

-

While there was a great selection of post-race food sampling, there was no beer offered, in contrast to the Berkeley and Oakland races.

-

With regard to post-race photographs, my comments are basically identical to those for the Berkeley race.

-

All the aid stations had electrolytes (I think Nuun) in addition to water. It was a bit unclear to me which cups corresponded to what beverage, though in retrospect I should have realized that the “blank” cups had water and cups with a lightning sign on them had the electrolytes. The drinks situation is better than the Berkeley race, but the downside is that there were no GU energy chews, so perhaps it’s a wash with respect to the aid stations?

-

It felt like there were fewer people cheering us on when we raced, particularly compared to the Berkeley race.

-

I don’t think there were as many post-race discount deals. I was hoping that there were some deals for the CLIF whey protein bars, which would have been the analogue of the Power Crunch discount for the Berkeley race. The discount deals also lasted only a week, compared to two months for Berkeley’s post-race stuff.

Oakland Running Festival Half Marathon

- Website: here.

- Price I paid: about $90.

Upsides:

-

The race started at 9:45am, whereas the Berkeley and San Francisco races each started at about 8:10am. While I consider myself a morning person, that’s for work. If I want to set a half marathon PR, a 9:45am starting time is far better.

-

The Oakland race easily has the best aid stations compared to the other two races. Not only were there electrolytes at each station, but some also had bananas, GU gels, and GU chews (yes, GU has a lot of products!). Throughout the race I consumed two half-bananas (easy to eat since you can squeeze them), one GU gel, and one GU chew package, which contained about eight chews. This was very helpful!

-

There were lots of spectators and locals cheering us on, possibly as much as the Berkeley race had.

-

The view of Lake Merritt is excellent, and it’s probably the main visual attraction. Other than that, the race runs through the city of Oakland, mostly in the business sector. Also, this was the only one of the three races where a marathon was simultaneously offered, so there were a few marathoners mixed in with us.

-

There’s a great package pickup (which I showed a photo of earlier), which probably had as many deals as the Berkeley package pickup. We had to show up to the pickup to get the bib and the timing chip (attached to the bib). While I was there, I bought several GU products that I’ll use for my future long-distance training sessions.

-

Each runner got tickets for two free Lagunitas Beer cups. We had this offering after the race, but one was enough for me. I’m not sure how people can down two servings quickly.

-

There were pacers for various distances.

-

Race photos are free, which is definitely refreshing compared to the other two races. Disclaimer: I’m writing this post one day after the race occurred, and I won’t be able to download the photos for a few days, so the quality may be worse on a per-photo basis.

-

Unfortunately, I don’t think there are any post-race deals. Hopefully something will show up in my inbox soon so I can turn this into an “upside.” Update 03/27/2018: heh, a day later, I get an email in my inbox showing that there are some race deals. Excellent! The deals seem to be just as good as the other races, so I’ll put it as an upside.

Downsides:

-

The race scenery is probably less appealing than the Berkeley or San Francisco races. The route mostly weaves through the city roads, and there aren’t clear views of the Bay. Also, the turn near the end of the race when we see Lake Merritt again is narrow and awkwardly placed, and it’s also hilly, which is not what I want to see at the 12th and 13th mile checkpoints.

-

The post-race food sampling was probably weaker compared to the other two, though it’s debatable. There were water bottles, as you can see in my photo below, along with bananas and some peanut butter bars and energy drinks. I think the other races had more, and I was disappointed when the Oakland website said that racers would “receive bagels” because I didn’t see any! On the positive side, I got a free package of GU stroopwafel, so again, it’s debatable.

-

The race isn’t as good at storing your sweats. At Berkeley, we could save our sweats in the Berkeley high school gym, and it was easy for us to retrieve our bags after the race. For Oakland, they were stored in a small tent and we had to stand in line for a while before a volunteer could find our stuff.

The finish line of the Oakland races (including the half marathon).

Conclusion

I’m really happy that I started running half marathons. I’m signed up to run the San Francisco Second Half-Marathon in July. If you’re interested in training with me, let me know.

Self Supervision and Building Visual Predictive Models

I enjoy reading robotics and deep reinforcement learning papers that cleverly apply self-supervision to learn some task. There’s something oddly appealing about an agent “semi-randomly” acting in a world and learning something useful out of the data it collects. Some papers, for instance, build visual predictive models, which are those that enable the agent to anticipate the future states of the world, which may be raw images (or more commonly, a latent feature representation of them). Said another way, the agent learns an internal physics model. The agent can then use it to plan because it knows the effect of its actions, so it can run internal simulations and pick the action that results in the most desirable outcome.

In this blog post, I’ll discuss a few papers about self-supervision and visual predictive models by providing a brief description of their contributions. A subsequent blog post will discuss the papers’ relationships to each other in further detail.

Paper 1: Learning Visual Predictive Models of Physics for Playing Billiards (ICLR 2016)

“Billiards” in this paper refers to a generic, 2-D simulated environment of balls that move and bounce around walls according to the laws of physics. As the authors correctly point out, this is an environment that easily enables extensive experiments: altering the number of balls, changing their sizes or colors, and so forth.

While the agent “sees” a 2-D image of the environment, that is not the direct input to the neural network nor is it what the neural network predicts.

-

The input consists of the past four “glimpses” of the object, and the applied forces (which we assume known and tracked). The glimpses should be the 128x128 RGB image of the environment, but perhaps “blacking out” everything except the object. (I’m not sure about the technical details, but the idea is intuitive.) Thus, the same network is used for each of the balls in the environment, which the authors call an “object-centric” model. As one would expect, the input image is passed through a series of convolutional layers and then the forces are concatenated with that feature representation.

-

The output is the object’s predicted velocity for the current and subsequent (up to \(h\)) times. It is not the standard latent feature representation that other visual predictive models normally apply, because in billiards, they assume it is enough to know the displacements of the balls to track them.

The model is trained by minimizing

\[\sum_{k=1}^h w_k\|\tilde{u}_{t+k} - u_{t+k}\|_2^2\]where \(w_k\) is a weighting factor that is larger for shorter-term (smaller \(k\)) time steps. Good, this makes sense.
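To make the objective concrete, here is a minimal sketch of the weighted multi-step loss in plain Python. The exponentially decaying schedule in `decaying_weights` is my own assumption for illustration (the post only says that \(w_k\) is larger for smaller \(k\)), and the function names and toy velocities are hypothetical.

```python
def weighted_prediction_loss(predicted, actual, weights):
    """Compute sum_k w_k * ||u_pred_k - u_true_k||_2^2 over the horizon."""
    total = 0.0
    for w_k, u_hat, u in zip(weights, predicted, actual):
        squared_error = sum((a - b) ** 2 for a, b in zip(u_hat, u))
        total += w_k * squared_error
    return total


def decaying_weights(horizon, decay=0.5):
    # Assumed schedule: w_k = decay^(k-1), so near-term errors count more.
    return [decay ** k for k in range(horizon)]


# Toy 2-D velocities for a horizon of h = 3 steps.
predicted = [(1.0, 0.0), (1.0, 1.0), (0.0, 2.0)]
actual = [(1.0, 0.0), (1.0, 0.0), (0.0, 0.0)]
loss = weighted_prediction_loss(predicted, actual, decaying_weights(3))
print(loss)  # 1.5 = 1.0 * 0 + 0.5 * 1 + 0.25 * 4
```

Note how the largest error (at the third step) contributes the same as a much smaller error would at the first step, which is the whole point of the weighting.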

The authors show that they are able to predict the trajectories of balls, and that this can be generalized and also used for planning.

Paper 2: Learning to Poke by Poking: Experiential Learning of Intuitive Physics (NIPS 2016)

I discussed this paper in a previous blog post. Heh, you can tell that I’m interested in this stuff.

Paper 3: Learning Hand-Eye Coordination for Robotic Grasping with Deep Learning and Large-Scale Data Collection (IJRR 2017)

This is the famous (or infamous?) “arm-farm” paper from Google. The dataset here is MASSIVE; I don’t know of a self-supervision paper with real robots that contains this much data. The authors collected 800,000 (semi-)random grasp attempts over two months by running up to 14 robots in parallel. In fact, even this somewhat understates the total amount of data: each grasp consists of \(T\) training data points of the form \((I_t^i, p_T^i - p_t^i, \ell_i)\), each of which contains the current camera image, the vector from the current pose to the one that is eventually reached, and the success of the grasp.
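As a rough illustration of why one grasp attempt yields \(T\) training points, here is a small sketch that expands a single attempt into tuples of the form \((I_t, p_T - p_t, \ell)\). The function name, the use of strings as stand-ins for images, and the 3-D pose tuples are all hypothetical simplifications on my part.

```python
def grasp_to_training_points(images, poses, success):
    """Expand one grasp attempt into one training point per time step.

    images:  list of T camera images (any objects; strings in the toy example)
    poses:   list of T gripper poses, each a tuple of floats
    success: label for the whole attempt, shared by all T points
    """
    final_pose = poses[-1]  # p_T, the pose that is eventually reached
    points = []
    for image, pose in zip(images, poses):
        # Vector from the current pose to the final pose: p_T - p_t.
        motion = tuple(f - p for f, p in zip(final_pose, pose))
        points.append((image, motion, success))
    return points


images = ["img0", "img1", "img2"]
poses = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (1.0, 2.0, 0.0)]
points = grasp_to_training_points(images, poses, success=True)
```

Every point inherits the same success label, so a single labeled attempt supervises the network at every intermediate pose.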

The data then enables the robot to effectively learn hand-eye coordination by continuous visual servoing, without the need for camera calibration. Given a camera image of the workspace, and independently of the calibration or robot pose, the trained CNN predicts the probability that the motion of the gripper results in successful grasps.

During data collection, the labels (either a successful grasp or not) must be automatically supplied. The authors do this by (a) checking if the gripper closed or not, and (b) an image subtraction test, comparing the image before and after the object was grasped. This makes sense to me. The first test is used, and then the second is a backup to check for small objects. I can see how it might fail, though, such as if the robot grasped the wrong object or pushed the target object to the side rather than picking it up, either of which would result in a different image than the starting one.

The use of robots running in parallel means that each can collect a diverse dataset on its own, in part due to different actions and in part due to different material properties of each gripper. This is an application of the A3C concept from Deep Reinforcement Learning for real, physical robotics.

There are a lot of things that I like from this paper, but one that really seems intriguing for future AI applications is that the data enabled the robots to learn different grasping strategies for different types of objects, such as the soft vs hard difference the authors observed.

Paper 4: Learning to Act by Predicting the Future (ICLR 2017)

I discussed this paper in a previous blog post.

Paper 5: Combining Self-Supervised Learning and Imitation for Vision-Based Rope Manipulation (ICRA 2017)

The same architectural idea from the “Learning to Poke” paper is used in this one to jointly learn forward and inverse dynamics models. Instead of poking, the robot learns rope manipulation, a complicated task to model with hard-coded physics.

In my opinion, one of the weaknesses in the “Learning to Poke” paper was the greedy planner. The planner saw the current and goal images, and had to infer the intermediate actions. This prevented the robot from learning longer-horizon tasks, because the goal image could be quite different from the current one. In this paper, the authors allow for longer-horizon learning by providing one human demonstration of the task. The demonstration consists of a sequence of images, each of which is fed into the neural network model at the corresponding time step. Thus, the goal image should be the one that corresponds to the next time step, which appears to be more tractable.

They ran their Baxter robot autonomously for 500 hours, collecting 60,000 training data points.

Paper 6: Curiosity-Driven Exploration by Self-Supervised Prediction (ICML 2017)

They build on top of an existing RL algorithm, A3C, by modifying the reward function so that at each time step \(t\), the reward is \(r_t^{i}+r_t^{e}\) instead of just \(r_t^{e}\), where \(r_t^{i}\) is the curiosity reward and \(r_t^{e}\) is the reward from the environment.

In sparse-reward environments, such as the Doom environment from OpenAI that they use (and, I might add, the recent robotics environments, also from OpenAI), the environment reward is zero almost everywhere, except for 1 at the goal. This makes the problem effectively intractable for off-the-shelf RL algorithms. Hence, by building a predictive model, given current and subsequent states \(s_t\) and \(s_{t+1}\), they can assign the curiosity reward to be

\[r_t^i = \frac{\eta}{2}\|\hat{\phi}(s_{t+1}) - \phi(s_{t+1})\|_2^2\]which measures the error between the predicted and the actual latent representation of the successor state. The inverse dynamics model takes in \((s_t,s_{t+1})\) during training and predicts \(a_t\). The forward dynamics model predicts the latent successor state \(\hat{\phi}(s_{t+1})\) shown above.
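Here is a minimal sketch of how the curiosity bonus combines with the environment reward, assuming the latent features are given as plain lists of floats. The value of `eta` and the function names are placeholders I picked for illustration, not the paper’s settings.

```python
def curiosity_reward(predicted_features, actual_features, eta=0.01):
    """r^i_t = (eta / 2) * ||phi_hat(s_{t+1}) - phi(s_{t+1})||_2^2."""
    squared_error = sum(
        (p - a) ** 2 for p, a in zip(predicted_features, actual_features)
    )
    return 0.5 * eta * squared_error


def total_reward(intrinsic, extrinsic):
    # The agent is trained on r^i_t + r^e_t instead of r^e_t alone.
    return intrinsic + extrinsic


# A surprising transition: the forward model's prediction is far off.
surprised = curiosity_reward([1.0, 2.0], [1.0, 0.0], eta=0.5)
# A familiar transition: the prediction matches, so there is no bonus.
familiar = curiosity_reward([1.0, 0.0], [1.0, 0.0], eta=0.5)
print(total_reward(surprised, 0.0), total_reward(familiar, 0.0))
```

With a sparse environment reward of zero, the agent is driven entirely by the prediction error, which vanishes once the forward model has learned a transition.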

They argue that their form of curiosity has three benefits: solving tasks with sparse rewards, exploring the environment, and learning skills that can be reused and applied in different scenarios. One interesting conjecture from the third claim is that if the agent simply does the same thing over and over again, the curiosity reward will go down to zero because the agent is stuck in the same latent space. Only by “learning” new actions that substantially change the latent space will the agent then be able to obtain new rewards.

The results on Doom and Mario environments are impressive.

Paper 7: Zero-Shot Visual Imitation (ICLR 2018)

Wait, zero-shot visual imitation (learning)? How is this possible?

First, let’s be clear on their technical definition: “zero-shot” means that they are still allowed to observe a demonstration of the task, but it has to be only the state space (i.e., images), so actions are not included. The second part of the definition means that expert demonstrations (regardless of states or actions) are not allowed during training.

OK, that makes sense. So … the robot just sees the images of the demo at inference time, and must imitate it. That’s a high bar. The key must be to develop a sufficient prior — but how? By having the agent move (semi-)randomly to learn physics, of course!

In terms of the visual predictive model, the paper does a nice job describing four different models, starting from the ICRA 2017 rope manipulation paper and moving towards the one they use for their experiments. Their final model conditions on the final goal and uses recurrent neural networks, and is augmented with a separate neural network that predicts whether the goal has been attained or not.

The paper presents two sets of experiments. One is a navigation task using a mobile robot, and the other is a rope manipulation task using the Baxter robot. With zero-shot visual imitation, the Baxter robot doubles the performance of rope manipulation compared to the results from ICRA 2017. Thus, if I’m thinking about rope manipulation benchmarks, I better check out this paper and not the ICRA 2017 one. I also assume that zero-shot visual imitation would result in better poking performance than “Learning to Poke” if the poking requires long-term planning.

Results for the navigation agent are also impressive.

This is not a deep reinforcement learning paper, though one could argue for the use of Deep RL as an alternative to self-supervision. Indeed, that was a point raised by one of the reviewers.

Additional References

Here are a few additional papers that are somewhat related to the above, and which I don’t have time to write about in detail … yet.

-

Unsupervised Learning for Physical Interaction through Video Prediction is another interesting paper on imagining the future based on predicting pixel motion.

-

One-Shot Visual Imitation Learning via Meta-Learning allows robots to learn how to perform tasks with a single demonstration. It’s somewhat related to the “Zero-Shot Visual Imitation” paper, except the two papers use very different solutions for different problems. I’d like to compare them in more detail later.

-

Reinforcement Learning with Unsupervised Auxiliary Tasks works by having a reinforcement learning agent optimize a series of “pseudo” loss functions in addition to its main objective function.

-

Diversity is All You Need argues that by using entropy correctly, an agent can automatically learn useful skills in an environment. It’s related to the “Curiosity” paper in discovering new skills.

Admitted to Berkeley? Congratulations, But ...

As I write this post, UC Berkeley is hosting its “visit days” program for admitted EECS PhD students. This is a three-day event that lets admitted students see the department, meet people, and get a (tiny) flavor of what Berkeley is like. Those interested in some history may enjoy my blog post about visit days four years ago.

If you’re an admitted student, congratulations! It’s super-competitive to get in. When I applied, the acceptance rate was roughly 5 percent, and the competition has undoubtedly increased since then. This is definitely true for those applying to work in Artificial Intelligence. I’ve seen statistics from BAIR director Trevor Darrell showing that the number of AI applicants has soared in recent years, to the point where the corresponding acceptance rate is now less than three percent.

It’s technically true that you’re not tied to a specific area when you apply, and that’s what the department probably advertises to admitted students. Do not, however, take this as implying that you can apply in an area you’re not interested in but think is “less competitive” and then pivot to AI. If you want to do fundamental AI research (and not just use it in an application) you must apply in AI — otherwise, I highly doubt the faculty will be interested in working with you when they already have the cream of the crop to consider from other applicants.

That being said, here are some related thoughts regarding graduate school, visit days, and so forth, which might be of use to admitted students:

-

You must come to visit days. You will learn a lot about the professors who are interested in working with you based on your assigned one-on-one meetings. I don’t know the details on how those assignments are made, but it’s a good bet that if a professor wants to work with you, then you’ll have a one-on-one meeting with him or her.

-

On a related note, if there’s a faculty member you desperately want to work with, then not only do you need to talk to him or her during visit days, you also need a firm commitment that he or she is willing to advise you without qualifications. This is particularly true for the “rock-star” faculty who get swarmed with emails from top-tier students asking to work with them. Get commitments done early.

-

You also want to be in touch with the students in your target lab(s). If you accept your offer, consistently communicate with them well before the official start of your PhD. This might mean just occasional emails over the summer, or (better) being remotely involved in an ongoing research project that can lead to a fast paper during your first semester. The point is, you want to be in the loop in what the other students are doing. This also includes incoming students — you’ll want to take the same classes as those in your research area, so that you can collaborate on homework and (ideally) research.

These previous points imply the following: you do not want to be spending your first year (or two) trying to “explore” or “get incubated” into research. Your goal must be to do outstanding research in your area of interest from day one.

It’s easy to experience euphoria upon getting your offer of admission. I don’t mean to rain on this, but there’s life beyond getting the admission offer, and you want to make a sound and informed decision on something that will impact you forever. Again, if you got accepted to Berkeley, congratulations! I hope you seriously consider attending, as it’s one of the top computer science schools. Just ensure that you were admitted to your area of interest, and furthermore, that it is crystal clear that the professors you want to work with are willing to advise you from day one.

Learning to Poke by Poking: Experiential Learning of Intuitive Physics

One of the things I’m most excited about nowadays is that physical robots now have the capability to repeatedly execute trajectories to gather data. This can then be fed into a learning algorithm to subsequently learn complex manipulation tasks. In this post, I’ll talk about a paper which does exactly that: the NIPS 2016 paper Learning to Poke by Poking: Experiential Learning of Intuitive Physics. (arXiv link; project website) Yes, it’s experiential, not experimental, which I originally thought was a typo, heh.

The main idea of the paper is that by repeatedly poking objects, a robot can then “learn” (via Deep Learning) an internal model of physics. The motivation for the paper came out of how humans seem to possess this “internal physics” stuff:

Humans can effortlessly manipulate previously unseen objects in novel ways. For example, if a hammer is not available, a human might use a piece of rock or back of a screwdriver to hit a nail. What enables humans to easily perform such tasks that machines struggle with? One possibility is that humans possess an internal model of physics (i.e. “intuitive physics” (Michotte, 1963; McCloskey, 1983)) that allows them to reason about physical properties of objects and forecast their dynamics under the effect of applied forces.

I think it’s a bit risky to try and invoke human reasoning in a NIPS paper, but it seems to have worked out here (and the paper has been cited a fair amount).

The methodology can be summarized as:

In our setup (see Figure 1), a Baxter robot interacts with objects kept on a table in front of it by randomly poking them. The robot records the visual state of the world before and after it executes a poke in order to learn a mapping between its actions and the accompanying change in visual state caused by object motion. To date our robot has interacted with objects for more than 400 hours and in process collected more than 100K pokes on 16 distinct objects.

Now, how does the Deep Learning stuff work to actually develop this internal model? To describe this, we need to understand two things: the data collection and the neural network architecture(s).

First, for data collection, they randomly poke objects in a workstation and collect the tuple of: before image, after image, and poke. The first two are just the images from the robot sensors and the “poke” is a tuple with information about the poke point, direction and length. Second, they train two models: a forward model to predict the next state given the current state and the applied force, and an inverse model to predict the action given the initial and target state. A state, incidentally, could be the raw image from the robot’s sensors, or it could be some processed version of it.

I’d like to go through the architecture in more detail. If we assume naively that the forward and inverse models are trained separately, we get something like this:

Visualization of the forward and inverse models. Here, we assume the forward and inverse models are trained separately. Thus, the forward model takes a raw image and action as input, and has to predict the full image of the next state. In the inverse model, the start and goal images are input, and it needs to predict the action that takes the environment to the goal image.

where the two models are trained separately and act on raw images from the robot’s sensors (perhaps 1080x1080 pixels).

Unfortunately, this kind of model has a number of issues:

- In the forward model, predicting a full image is very challenging. It is also not what we want. Our goal is for the forward model to predict a more abstract event. To use their example, we want to predict that pushing a glass over a counter will result in the abstract event of “shattered glass.” We don’t need to know the precise pixel location of every shard of glass.

- The inverse model has to deal with ambiguity: there are multiple actions that may lead to a resulting goal state, or perhaps no action at all can possibly lead to the next state.

All these factors require some re-thinking in terms of our model architecture (and training protocol). One obvious alternative the authors suggest is to avoid acting on image space and just feed all images into a CNN trained on ImageNet data and extract some intermediate layer. The problem is that it’s unclear if object classification and object manipulation mandate a similar set of features. One would also need to fine-tune the ImageNet-trained network somehow, which would make this more task-specific (e.g., for a different workstation setup, you’d need to fine-tune again).

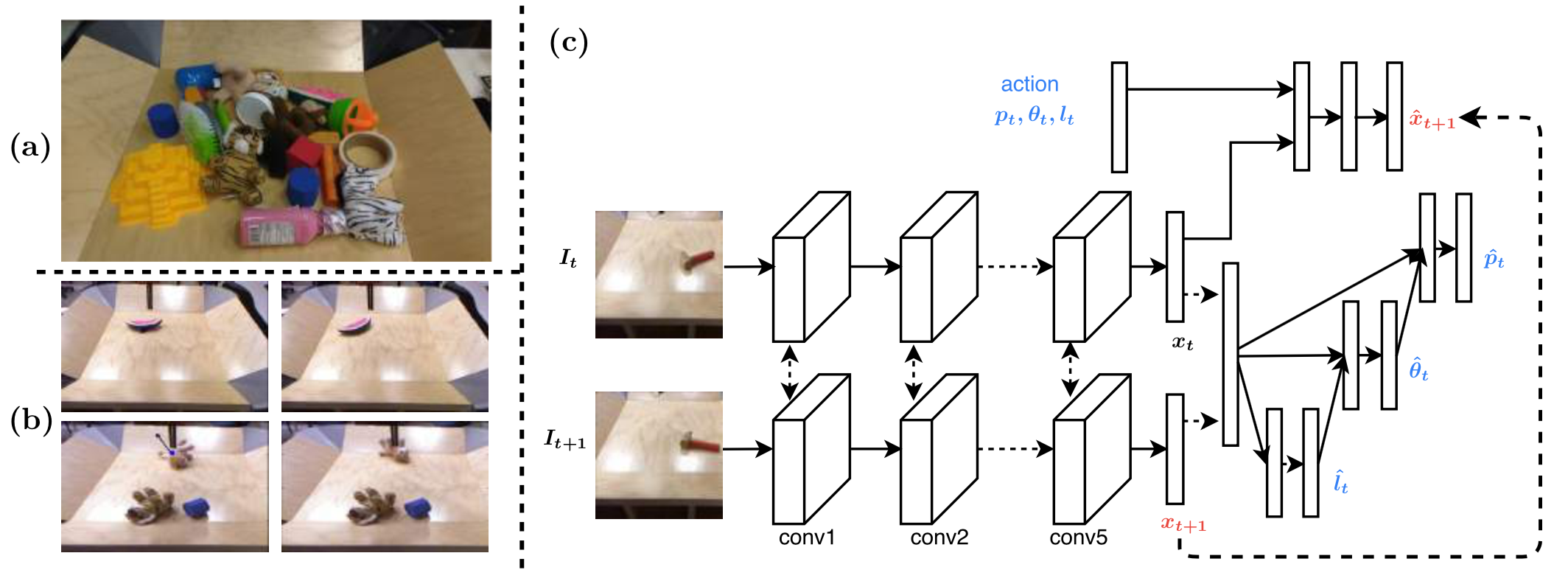

Figure from their paper describing (a) objects used, (b) before/after image pairs, (c) the network.

Their solution, shown above, involves the following:

-

Two consecutive images \(I_t, I_{t+1}\) are separately passed through a CNN and then the outputs \(x_t, x_{t+1}\) (i.e., the latent feature representations) are concatenated.

-

To complete the inverse model, \((x_t, x_{t+1})\) are used to conditionally estimate the poke length, poke angle, and then poke location. We can measure the prediction accuracy since all the relevant information was automatically collected in the training data.

As to why we need to predict conditionally: I’m assuming it’s so that we can get “more reasonable” metrics since knowing the poke length may adjust the angle required, etc., but I’m not sure. (The project website actually shows a network which doesn’t rely on this conditioning … well OK, it’s probably not a huge factor.) Update 03/29/2018: actually, it’s probably because it reduces the number of trainable weights.

Also, the three poke attributes are technically continuous, but the authors simply discretize.

-

For the forward model, the action \((p_t, \theta_t, l_t)\) along with the latent feature representation \(x_t\) of image \(I_t\) is concatenated and fed through its own neural network, to predict \(x_{t+1}\), which in fact we already know as we have passed it through the inverse model!

By integrating both networks together, and making use of the randomly-generated training data to provide labels for both the forward and inverse model, they can simply rely on one loss function to train:

\[L_{\rm joint} = L_{\rm inv}(u_t, \hat{u}_t, W) + \lambda L_{\rm fwd}(x_{t+1}, \hat{x}_{t+1}, W)\]where \(\lambda > 0\) is a hyperparameter. They show that using the forward model is better than ignoring it by setting \(\lambda = 0\), so it is advantageous to simultaneously learn the task feature space and forecast the outcome of actions.
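To see how the two terms fit together, here is a toy sketch of the joint loss for a single training example. I’m assuming a cross-entropy-style inverse loss over one discretized poke attribute and a squared-error forward loss in feature space; those specific choices, the default `lam=0.1`, and all function names are my own illustrative assumptions rather than details confirmed above.

```python
import math


def inverse_loss(predicted_probs, true_bin):
    """Negative log-likelihood of the true (discretized) poke attribute."""
    return -math.log(predicted_probs[true_bin])


def forward_loss(x_pred, x_true):
    """Squared error between predicted and actual latent features x_{t+1}."""
    return sum((p - t) ** 2 for p, t in zip(x_pred, x_true))


def joint_loss(predicted_probs, true_bin, x_pred, x_true, lam=0.1):
    # L_joint = L_inv + lambda * L_fwd; lam = 0 ignores the forward model.
    return inverse_loss(predicted_probs, true_bin) + lam * forward_loss(
        x_pred, x_true
    )


loss_value = joint_loss(
    predicted_probs=[0.5, 0.5],  # inverse model's belief over two angle bins
    true_bin=0,
    x_pred=[1.0, 1.0],  # forward model's predicted features
    x_true=[0.0, 1.0],  # features of the actual next image
)
print(loss_value)  # log(2) + 0.1 * 1.0
```

Because both terms are functions of the same shared features, gradients from the forward term act as a regularizer on the representation that the inverse model uses.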

To evaluate their model, they supply their robot with a goal image \(I_g\) and ask it to apply the necessary pokes to reach the goal from the current starting state \(I_0\). This by itself isn’t enough: what if \(I_0\) and \(I_g\) are almost exactly the same? To make results more convincing, the authors:

- set \(I_0\) and \(I_g\) to be sufficiently different in terms of pixels, thus requiring a sequence of pokes.

- use novel objects not seen in the (automatically-generated) training data.

- test different styles of pokes for different objects.

- compare against a baseline of a “blob model” which uses a template-based object detector and then uses the vector difference to compute the poke.

One question I have pertains to their greedy planner. They claim they can provide the goal image \(I_g\) into the learned model, so that the greedy planner sees input \((I_t,I_g)\) to execute a poke, then sees the subsequent input \((I_{t+1},I_g)\) for the next poke, and so on. But wasn’t the learned model trained on consecutive images \((I_t,I_{t+1})\) instead of \((I_t,I_g)\) pairs?
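For concreteness, here is how I picture the greedy planning loop, sketched on a toy 1-D world where the “image” is just an object position. The `toy_*` functions are hypothetical stand-ins for the learned inverse model and the real robot, and the stopping test is my own assumption.

```python
def greedy_poke_loop(inverse_model, step, start, goal, reached, max_pokes=10):
    """Repeatedly query the inverse model with (current, goal) and execute."""
    state = start
    actions = []
    for _ in range(max_pokes):
        if reached(state, goal):
            break
        action = inverse_model(state, goal)  # input is the pair (I_t, I_g)
        state = step(state, action)          # the world returns I_{t+1}
        actions.append(action)
    return state, actions


# Toy stand-ins: each poke can move the object by at most one unit.
def toy_inverse_model(state, goal):
    return max(-1.0, min(1.0, goal - state))


def toy_step(state, action):
    return state + action


def toy_reached(state, goal):
    return abs(state - goal) < 1e-6


final_state, actions = greedy_poke_loop(
    toy_inverse_model, toy_step, start=0.0, goal=2.5, reached=toy_reached
)
print(final_state, actions)  # 2.5 [1.0, 1.0, 0.5]
```

In the toy world the inverse model happily accepts a far-away goal, which is exactly the part I’m unsure about for a network trained only on consecutive image pairs.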

The results are impressive, showing that the robot is successfully able to learn a variety of pokes even with this greedy planner. One possible caveat is that their blob baseline seems to be just as good as (if not better than, due to lower variance) the joint model when poking/pushing objects that are far apart.

Their strategy of combining networks and conducting self-supervised learning with large-scale, near-automatic data collection is increasingly common in Deep Learning and Robotics research, and I’ll keep this in mind for my current and future projects. I’ll also keep in mind their comments regarding generalization: many real and simulated robots are trained to achieve a specific goal, but they don’t really develop an underlying physics model that can generalize. This work is one step in the direction of improved generalization.

Sample-Efficient Reinforcement Learning: Maximizing Signal Extraction in Sparse Environments

Sample efficiency is a huge problem in reinforcement learning. Popular general-purpose algorithms, such as vanilla policy gradients, are effectively performing random search in the environment[1], and may be no better than Evolution Strategies, which is more explicit about acting randomly (I mean, c’mon). The sample-efficiency problem is exacerbated when environments contain sparse rewards, such as when the reward consists of just a binary signal indicating success or failure.

To be clear, the reward signal is an integral design parameter of a reinforcement learning environment. While it’s possible to engage in reward shaping (indeed, there is a long line of literature on just this topic!) the problem is that this requires heavy domain-specific engineering. Furthermore, humans are notoriously bad at specifying even our own preferences; how can we expect ourselves to define accurate reward functions in complicated environments? Finally, many environments are most naturally specified by the binary success signal introduced above, such as whether or not an object is inserted into the appropriate goal state.

I will now summarize two excellent papers from OpenAI (plus a few Berkeley people) that attempt to improve sample efficiency in reinforcement learning environments with sparse rewards: Hindsight Experience Replay (NIPS 2017) and Overcoming Exploration in Reinforcement Learning with Demonstrations (ICRA 2018). Both preprints were updated in February so I encourage you to check the latest versions if you haven’t already.

Hindsight Experience Replay

Hindsight Experience Replay (HER) is a simple yet effective idea to improve the signal extracted from the environment. Suppose we want our agent (a simulated robot, say) to reach a goal \(g\), which is achieved if the configuration reaches the defined goal configuration within some tolerance. For simplicity, let’s just say that \(g \in \mathcal{S}\), so the goal is a specific state in the environment.

When the robot rolls out its policy, it obtains some trajectory and reward sequence

\[(s_0, a_0, r_0, s_1, a_1, r_1, \ldots, s_{T-1}, a_{T-1}, r_{T-1}, s_{T}) \sim \pi_b, P, R\]achieved from the current behavioral policy \(\pi_b\), internal environment dynamics \(P\), and (sparse) reward function \(R\). Clearly, in the beginning, our agent’s final state \(s_T\) will not match the goal state \(g\), so that all the rewards \(r_t\) are zero (or -1, as done in the HER paper, depending on how you define the “non-success” reward).

The key insight of HER is that during those failed trajectories, we still managed to learn something: how to get to the final state of the trajectory, even if it wasn’t what we wanted. So, why not use the actual final state \(s_T\) and treat it as if it was our goal? We can then add the transitions into the experience replay buffer, and run our usual off-policy RL algorithm such as DDPG.
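Here is a minimal sketch of that relabeling trick on a toy 1-D trajectory. I’m assuming the -1/0 sparse reward convention mentioned above, states that are plain floats, and a replay buffer that is just a Python list; the function names are hypothetical, and the off-policy learner itself (e.g., DDPG) is omitted.

```python
def sparse_reward(state, goal, tolerance=1e-6):
    # 0 on success, -1 otherwise (the "non-success" reward convention).
    return 0.0 if abs(state - goal) <= tolerance else -1.0


def relabel_with_final_state(trajectory):
    """Pretend the final state s_T was the goal all along.

    trajectory: list of (state, action, next_state) tuples.
    Returns transitions (state, action, reward, next_state, goal).
    """
    new_goal = trajectory[-1][2]
    return [
        (s, a, sparse_reward(s_next, new_goal), s_next, new_goal)
        for (s, a, s_next) in trajectory
    ]


# A failed rollout: the agent wanted to reach 10.0 but only got to 3.0.
trajectory = [(0.0, 1.0, 1.0), (1.0, 1.0, 2.0), (2.0, 1.0, 3.0)]
original_goal = 10.0

replay_buffer = []
for s, a, s_next in trajectory:
    reward = sparse_reward(s_next, original_goal)
    replay_buffer.append((s, a, reward, s_next, original_goal))
replay_buffer.extend(relabel_with_final_state(trajectory))

print([t[2] for t in replay_buffer])  # [-1.0, -1.0, -1.0, -1.0, -1.0, 0.0]
```

The original transitions all carry the failure reward, while the relabeled copies contain one success, so the learner finally sees a non-trivial signal.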

In OpenAI’s recent blog post, they have a video describing their setup, and I encourage you to look at it along with the paper website; it’s way better than what I could describe. I’ll therefore refrain from discussing additional HER algorithmic details here, apart from providing a visual which I drew to help me better understand the algorithm:

My visualization of Hindsight Experience Replay.

There are a number of experiments that demonstrate the usefulness of HER. They perform experiments on three simulated robotics environments and then on a real Fetch robot. They find that:

-

DDPG with HER is vastly superior to DDPG without HER.

-

HER with binary rewards works better than HER with shaped rewards (!), providing additional evidence that reward shaping may not be fruitful.

-

The performance of HER depends on the sampling strategy for goals. In the example earlier, I suggested using just the last trajectory state \(s_T\) as the “fake” goal, but (I think) this would mean the transition \((s_{T-1},a_{T-1},r_{T-1},s_T)\) is the only one which contains the informative (success) reward \(r_{T-1}\); the other \(T-1\) transitions would still have the non-informative reward. There are alternative strategies, such as also sampling goals from other states visited in the trajectory, so that more transitions get relabeled. However, doing this too much has a downside in that “fake” goals can distract us from our true objective.

-

HER allows them to transfer a policy trained on a simulator to a real Fetch robot.
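On the goal sampling point, here is a rough sketch of one alternative to the final-state strategy: for each transition, draw \(k\) “fake” goals from states visited later in the same trajectory (the HER paper calls a strategy along these lines “future”). The function names, the value of `k`, and the toy integer states are my own assumptions for illustration, not the authors' implementation.

```python
import random

def sample_future_goals(states, t, k, rng):
    """Draw k "fake" goals from the states visited after step t in this episode."""
    future = states[t + 1:]  # s_{t+1}, ..., s_T (never empty for t < T)
    return [rng.choice(future) for _ in range(k)]

def relabel_future(states, actions, k=4, seed=0):
    """Relabel every transition k times; returns (s, a, r, s_next, g) tuples."""
    rng = random.Random(seed)
    transitions = []
    for t, a in enumerate(actions):
        s, s_next = states[t], states[t + 1]
        for g in sample_future_goals(states, t, k, rng):
            r = 0.0 if s_next == g else -1.0  # sparse reward w.r.t. the fake goal
            transitions.append((s, a, r, s_next, g))
    return transitions

# Toy episode with integer states 0..5 so that goal equality is exact.
states = list(range(6))
actions = [1] * 5
transitions = relabel_future(states, actions)
n_success = sum(1 for tr in transitions if tr[2] == 0.0)
print(len(transitions), "relabeled transitions,", n_success, "with a success reward")
```

Compared with using only \(s_T\), more of the relabeled transitions can carry a success reward, at the cost of filling the buffer with more goals that are not the one we actually care about.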

Overcoming Exploration in Reinforcement Learning with Demonstrations

This paper extends HER and benchmarks using similar environments with sparse rewards, but their key idea is that instead of trying to randomly explore with RL algorithms, we should use demonstrations from humans, which is safer and widely applicable.

The idea of combining demonstrations and supervised learning with reinforcement learning is not new, as shown in papers such as Deep Q-Learning From Demonstrations and DDPG From Demonstrations. However, they show several novel, creative ways to utilize demonstrations. Their algorithm, in a nutshell:

-

Collect demonstrations beforehand. In the paper, they obtain them from humans using virtual reality, which I imagine will be increasingly available in the near future. This information is then put into a replay buffer for the demonstrator data.

-

Their reinforcement learning strategy is DDPG with HER, with the basic sampling strategy (see discussion above) of only using the final state as the new goal. The DDPG+HER algorithm has its own replay buffer.

-

During learning, both replay buffers are sampled to get the desired proportion of supervisor data and data collected from environment interaction.

-

For the actor (i.e., policy) update in DDPG, they add the Behavior Cloning loss in addition to the normal gradient update for DDPG (function denoted as \(J\)):

\[\lambda_1 \nabla_{\theta_\pi}J - \lambda_2 \nabla_{\theta_\pi} \left\{ \sum_{i=1}^{N_D}\|\pi(s_i|\theta_\pi ) - a_i\|_2^2 \right\}\]

I can see why this is useful. Notice, by the way, that they are not just using the demonstrator data to initialize the policy. It’s continuously used throughout training.

-

There’s one problem with the above: what if we want to improve upon the demonstrator performance? The behavior cloning loss function prevents this from happening, so instead, we can use the Q-filter, a clever contribution:

\[L_{BC} = \sum_{i=1}^{N_D}\|\pi(s_i|\theta_\pi ) - a_i\|_2^2 \cdot \mathbb{1}_{\{Q(s_i,a_i)>Q(s_i,\pi(s_i))\}}.\]

The critic network determines \(Q\). If the demonstrator action \(a_i\) is better than the current actor’s action \(\pi(s_i)\), then we’ll use that term in the loss function. Note that this is entirely embedded within the training procedure: as the critic network \(Q\) improves, we’ll get better at distinguishing which terms to include in the loss function!

-

Lastly, they use “resets”. I initially got confused about this, but I think it’s as simple as occasionally starting episodes from within a demonstrator trajectory. This should increase the presence of relevant states and dense rewards during training.
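Two of the steps above are easy to sketch in a few lines: drawing a minibatch from both replay buffers, and computing the Q-filtered behavior cloning loss \(L_{BC}\). This is only my own illustration in plain Python. The function names, the `demo_fraction` parameter, and the hard-coded actor outputs and critic values are assumptions; in the real algorithm those numbers would come from the actor and critic networks.

```python
import random

def sample_mixed_batch(agent_buffer, demo_buffer, batch_size, demo_fraction, rng):
    """Draw a minibatch with a fixed share of demonstrator samples."""
    n_demo = int(round(batch_size * demo_fraction))
    n_agent = batch_size - n_demo
    return rng.choices(agent_buffer, k=n_agent), rng.choices(demo_buffer, k=n_demo)

def q_filtered_bc_loss(pi_actions, demo_actions, q_demo, q_pi):
    """Sum squared errors only where the critic prefers the demonstrator action.

    q_demo[i] stands for Q(s_i, a_i) and q_pi[i] for Q(s_i, pi(s_i)).
    """
    loss, mask = 0.0, []
    for a_pi, a_demo, qd, qp in zip(pi_actions, demo_actions, q_demo, q_pi):
        keep = qd > qp  # the indicator in L_BC
        mask.append(keep)
        if keep:
            loss += sum((x - y) ** 2 for x, y in zip(a_pi, a_demo))
    return loss, mask

# Mixed sampling: 8 samples, a quarter of them from the demonstrations.
rng = random.Random(0)
agent_batch, demo_batch = sample_mixed_batch(
    agent_buffer=list(range(100)), demo_buffer=list(range(100, 120)),
    batch_size=8, demo_fraction=0.25, rng=rng)

# Q-filter on three demonstrator samples with made-up actor/critic outputs.
pi_actions = [(0.0, 0.0), (1.0, 1.0), (2.0, 0.0)]
demo_actions = [(1.0, 0.0), (1.0, 3.0), (2.0, 0.0)]
q_demo = [1.0, 0.5, 2.0]
q_pi = [0.5, 0.9, 1.0]
loss, mask = q_filtered_bc_loss(pi_actions, demo_actions, q_demo, q_pi)
print(loss, mask)
```

In the toy numbers, the second sample has the largest disagreement with the demonstrator, but the critic rates the actor's own action higher there, so the filter drops it and the actor is free to deviate from the demonstration.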

I enjoyed reading about this algorithm. It raises important points about how best to interleave demonstrator data within a reinforcement learning procedure, and some of the concepts here (e.g., resets) can easily be combined with other algorithms.

Their experimental results are impressive, showing that with demonstrations, they outperform HER. In addition, they show that their method works on a complicated, long-horizon task such as block stacking.

Closing Thoughts

I thoroughly enjoyed both of these papers.

-

They make steps towards solving relevant problems in robotics: increasing sample efficiency, dealing with sparse rewards, learning long-horizon tasks, using demonstrator data, etc.

-

The algorithms are not insanely complicated and are fairly easy to understand, yet seem effective.

-

HER and some of the components within the “Overcoming Exploration” (OE) algorithm are modular and can easily be embedded into well-known, existing methods.

-

The ablation studies appear to be done correctly for the most part, and asking for more experiments would likely be beyond the scope of a single paper.

If there are any possible downsides, it could be that:

-

The HER paper had to cheat a bit on the pick-and-place environment by starting trajectories from when the gripper grips the block.

-

In the OE paper, their results which benchmark against HER (see Section 6.A, 6.B) were done with only one random seed, and that’s odd given that it’s entirely in simulation.

-

The OE paper’s claim that the method “can be done on real robot” needs additional evidence. That’s a bold statement. They argue that “learning the pick-and-place task takes about 1 million timesteps, which is about 6 hours of real world interaction time” but does that mean we can really execute the robot that often in 6 hours? I’m not seeing how the times match up, but I guess they didn’t have enough space to describe this in detail.
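As a rough sanity check on that quote (my own arithmetic, assuming the 6 hours are spent entirely on interaction and that each timestep corresponds to one robot action), the implied rate is

\[\frac{10^6 \text{ timesteps}}{6 \times 3600 \text{ s}} \approx 46 \text{ timesteps per second},\]

sustained for the entire six hours with no time spent on resets or anything else. That is the number I would want the paper to justify.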

For both papers, I was initially disappointed that there wasn’t code available. Fortunately, that has recently changed! (OK, with some caveats.) I’ll go over that in a future blog post.

-

I’m happy to see that Professor Ben Recht has a new batch of reinforcement learning blog posts, as he’s a brilliant, first-rate machine learning researcher. I’ve been devouring these posts, and I remain amused at his perspective on control theory versus reinforcement learning. He has a point in that RL seems silly if we deliberately constrain the knowledge we can provide to the environment (particularly with model-free RL). For instance, we wouldn’t deploy airplanes and other machines today without a deep understanding of the physics involved. But those are thoughts for another day. ↩

Why Does IEEE Charge Hundreds for Two Extra Pages?

My preprint on surgical debridement and robot calibration was accepted to the 2018 IEEE International Conference on Robotics and Automation (ICRA). It’s in Brisbane, Australia, which means I’ll be going to Australia for the second time in less than a year — last August, I went to Sydney for UAI 2017.

I’m excited about this opportunity and look forward to traveling to Brisbane in May. (That is, assuming Berkeley’s Disabled Students’ Program isn’t as slow as they were in August, but never mind.) I have already booked my travel reservations and registered for the conference.

Everyone knows that long-haul international travel is expensive, but what might not be clear to those outside academia is that conference registration fees can be just as high as those airfare fees. For ICRA, the cost of my registration came to be 1,171.36 AUD before taxes, and 1,275.00 AUD with taxes. That corresponds to 1,033.94 in US dollars. Ouch.

Fortunately, I’m going to get reimbursed, since Berkeley professors are not short on money, but I still wish that costs could be lower. The breakdown was: 31.36 AUD for a hotel deposit (I’ll pay the full hotel fees when I arrive in May), 600 AUD for the early-bird IEEE student membership registration, 100 AUD for the workshops/tutorials, and 440 AUD for the two extra page charge.

Wait, what was the last one?

Ah, I should clarify. The policy of ICRA, and for many IEEE conferences at that (hence the title of this blog post), is the following:

Papers to ICRA can be submitted through two channels:

- To ICRA. Six pages in standard ICRA format and a maximum of two additional pages can be purchased.

- To the IEEE Robotics and Automation Letters (RA-L) journal, and tick the option for presentation at ICRA. Six pages in standard ICRA format are allowed for each paper, including figures and references, but a maximum of two additional pages can be purchased. Details are provided on the RA-L webpage and FAQ.

All papers are submitted in PDF format and the page count is inclusive of figures and references. We strongly encourage authors to submit a video clip to complement the submission. Papers hosted on arXiv may be submitted to ICRA.

So, in short, we can have six pages, and can purchase two extra pages if needed.

This makes no sense.

Is it because of printing costs associated with the proceedings? It shouldn’t be. The proceedings, as far as I know, are those enormous books that concatenate all the papers from a conference.

They are also worthless and should never be printed outside of maybe one or two historical copies for IEEE’s book archives. No one should read directly from them. Who has the time? Academics are judged based on the papers they produce, not the papers they consume. This year, ICRA alone accepted 1030 papers (!!). Yes, over a thousand. It makes no sense to browse proceedings to search for a paper; just type in a search query on Google Scholar. If you think you might be missing a gem somewhere in the proceedings, I wouldn’t worry. Good papers will make themselves known eventually through word of mouth. They also tend to be widely accessible to all, such as being available on arXiv rather than being stuck behind an IEEE paywall. Most universities have IEEE subscriptions so it’s not generally a problem to download IEEE papers for free, but it’s still a bit of an unnecessary nuisance.

Speaking of arXiv, perhaps IEEE doesn’t follow a similar model due to hosting costs? That doesn’t seem like a good rationale, and in particular it doesn’t justify the steep jump in price from 6 to 8 pages. Why not have pages 1 through 6 charged accordingly? Or simply make the charge based on file size instead of page count, while obviously keeping a hard page limit to alleviate the load on reviewers. There seem to be way more rational price structures than the 220 AUD each for pages 7 and 8.

It seems like ICRA organizers would prefer to see 6 page papers, yet the problem is that everyone knows that if you allow 8 pages, then that becomes the effective lower bound on paper length. An 8-page paper has a better chance of being accepted to ICRA than a 7-page paper, which in turn has better odds than a 6-page paper. And so on. Indeed, if you look at ICRA papers nowadays, the vast majority hit the 8 page limit, many with barely a line to spare (such as my paper!). The trend is possibly even more pronounced with ICML, NIPS, and other AI conferences, from my anecdotal experience reading those papers.

Such a cost structure might needlessly disadvantage students and authors from schools without the money to easily pay the over-length fees. This is further exacerbated by ICRA’s single-blind policy, where reviewers can see the names of authors and thus be influenced by researcher fame and institution name.

In short, I’m not a fan of the two-page extra charge, and I would suggest that ICRA (and similar IEEE-based conferences) switch to a simple, hard, 8-page limit for papers. In addition, I would also like to see all accepted papers freely available for download in an arXiv-style format. If hosting costs are a burden, a more rational price structure would be to slightly increase the conference registration fees, or encourage authors to upload their papers on arXiv in lieu of being hosted by ICRA.

To be clear, I’m still extremely excited about attending ICRA, and I’m grateful to IEEE for organizing what I’ve heard is the preeminent conference on robotics. I just wish that they were a little bit clearer on why they have this two-page extra charge policy.

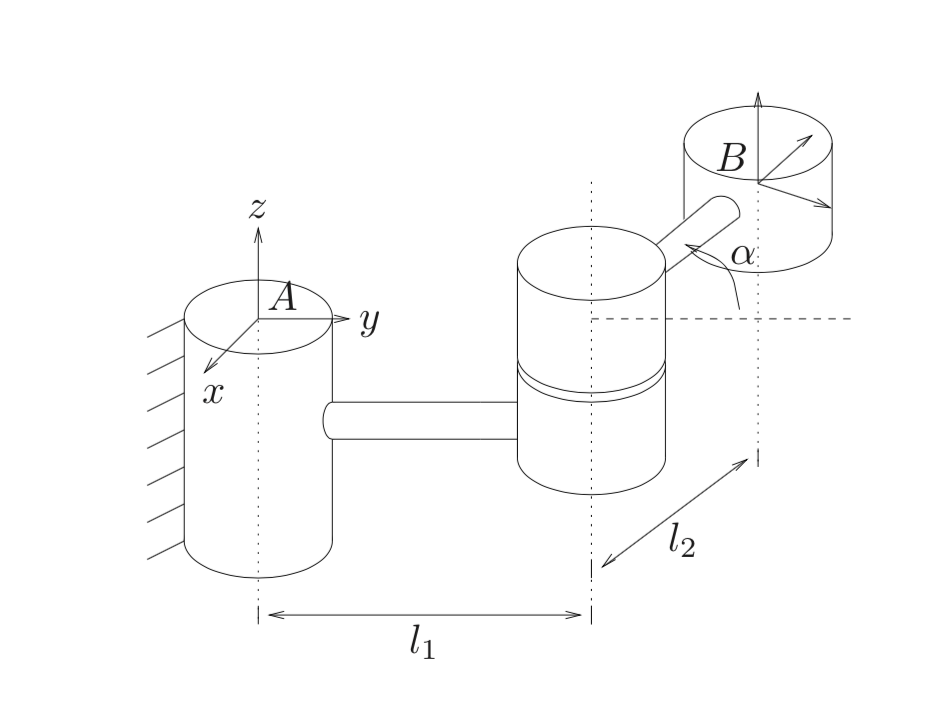

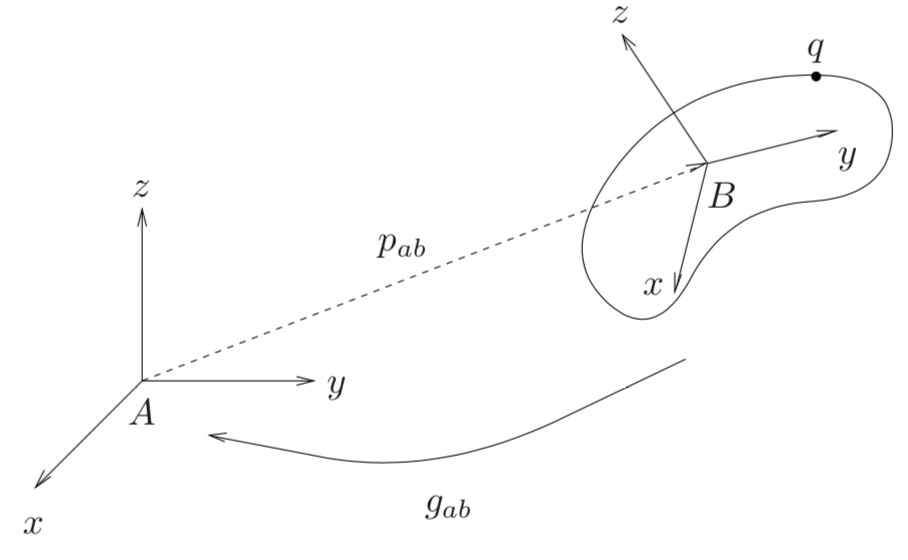

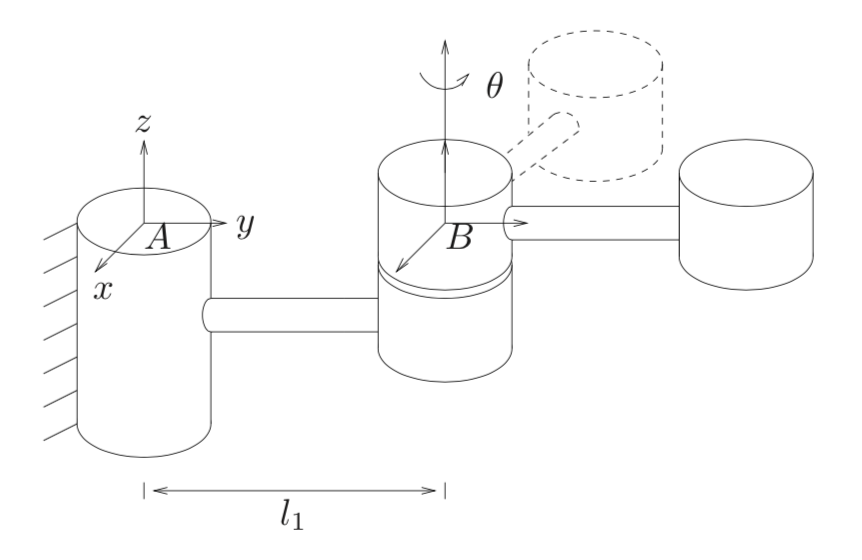

Twists and Exponential Coordinates

In this post, I build upon my previous one by further investigating fundamental concepts in Murray, Li, and Sastry’s A Mathematical Introduction to Robotic Manipulation. One of the challenges of their book is that there’s a lot of notation, so I first list the important bits here. I then review an example that uses some of this notation to better understand the meaning of twists and exponential coordinates.

Side comment: there is an alternative, more recent robotics book by Frank C. Park and Kevin M. Lynch called Modern Robotics. It’s available online and has its own wiki, and even has some lecture videos! Despite its 2017 publication date, the concepts it describes are very similar to Murray, Li, and Sastry, except that the presentation can be a bit smoother. The notation they use is similar, but with a few exceptions, so be aware of that if you’re reading their book.

Back to our target textbook. Here is relevant notation from Murray, Li, and Sastry:

-

The unit vector \(\omega \in \mathbb{R}^3\) specifies a direction of rotation, and \(\theta \in \mathbb{R}\) represents the angle of rotation (in radians).

An important fact is that any rotation can be represented as rotating by some amount about an axis vector, so we could write rotation matrices \(R\) as functions of \(\omega\) and \(\theta\), i.e., \(R(\omega, \theta)\). Murray, Li, and Sastry call this “Euler’s Theorem”.

Note: if you’re familiar with the Product of Exponentials formula, then \(\theta = (\theta_1, \ldots, \theta_J)\), which generalizes to the case when there are \(J\) joints in a robotic arm. Also, \(\theta_i\) doesn’t have to be an angle; it could be a displacement, which would be the case if we had a prismatic joint.

-

The cross product matrix \(\hat{\omega}\) satisfies \(\hat{\omega} p = \omega \times p\) for some \(p \in \mathbb{R}^3\), where \(\times\) indicates the cross product operation. More formally, we have