My Blog Posts, in Reverse Chronological Order


ASL Country Signs and Variation

Ever since I failed to recognize the sign for “Hungary” in a conversation several weeks ago, I have made it a personal goal to learn more ASL signs for countries. Related to that, I have looked into why certain countries have both “old” and “new” ASL signs.

China

The current and traditional signs for China are here. As the website explains, the new version was actually borrowed from China. (The sign language that ASL primarily borrowed from was French Sign Language.) The traditional version is similar to Korea’s traditional version, but does not seem to carry as many negative connotations as the latter did (see below). Interestingly enough, the first sign for China that I learned was actually the third sign seen in the link. I’ll sometimes slip up and use it in conversations when I really should not.

Korea

There are several variations of the current sign for Korea. The one I most frequently use starts with my right hand slightly above my head, with my fingertips touching my temple – think of doing a salute. Then I (quickly) bring my hand “out” to the right, then back “in” where my fingertips end up touching my lower cheek and my palm is facing down. This is generally the sign for South Korea; North Korea’s sign is the reverse of the South Korean sign, though I don’t quite see the need, as it is easy to first sign “north” or “south” before the “Korea” part. The old ASL sign is the second sign demonstrated here, with the middle finger “pulling” near the right eye. This sign started to disappear from common use due to the perception of it being a pejorative sign, aimed at deriding the many Asians who have long, narrow eyes. Even if that weren’t the case, I would still prefer the current sign as the second one causes my glasses to shift uncomfortably.

Russia

Russia’s current ASL sign is displayed here. As the caption insinuates, this sign grew out of the public perception that Russians drink heavily. The hand motion is there to wipe off the ale from your (er … I mean the Russian’s) mouth. For the old sign, you put your hands on your hips twice, palms facing downwards – click here to see. I am unsure why this change occurred, although I can tell you that I have never heard a Russian protest about the new sign. Perhaps they are proud of the hefty amount they can drink?

That’s all for now, but I’ll continue to comment about country signs in the future.

Technical Term Dilemma

The number one problem with American Sign Language for deaf students taking courses in technical fields is the lack of sign standardization. Want to know what the sign for surjective is? You might run into a problem in that no sign exists or there will be some unusual sign that hasn’t been adopted in standard practice. (By the way, simply spelling out the letters of a technical term is a big no-no in ASL unless it’s the first time an interpreter is expressing that word.) And I can’t blame ASL for that; most people won’t use technical terms like homomorphism or even the word cache on a regular basis. So there’s little motivation for ASL to include esoteric words in its common vocabulary.

So how can we fix this problem? Or, perhaps, is it even necessary to fix this problem? As someone taking a myriad of undergraduate math and science classes, I am fairly used to having my two ASL interpreters collaborate with me to decide on signs for a variety of technical terms. Typically, if we can’t agree on a sign for a term, we settle for a one-letter representation of that word. The word chromosome, for instance, would be signed by just slightly shaking the letter “C” in the dominant hand along with a lip motion of the word. But even if we were able to come up with a sign, it’s highly likely that it will differ from what another student composed at a different university, so there’s a clear lack of standardization.

How has this issue been addressed? Possibly the best single resource on the web is the ASL-STEM Forum, which has done a tremendous job recruiting sign language users to share and distribute signs for terms commonly used in math and science. But there are some glaring pitfalls.

That website allows multiple users to upload different signs, so you might see three or four different signs for the same term, which defeats the purpose of having one sign for one word. Another downside is that this website does not provide an index for subjects that are beyond the level of college underclassmen. Math, for instance, has nothing beyond calculus, which is often the first course required for math majors. This is not as big of a problem as it seems, though, since there probably is not a large enough segment of deaf students taking advanced math and science courses at the upper-undergraduate or graduate level – which is definitely a prominent factor in the lack of signs for technical terms.

But even worse is that we are still nowhere close to providing signs for all the technical terms. One look at the listing of terms in the “Algebra” subsection of the Mathematics section reveals that just five of the twenty-one words have signs! And this is a subject that I hope all deaf students take before graduating from high school.

The possible benefits of this website, though, leave me optimistic. It’s a user-contributed website, so its data could theoretically grow exponentially. I have contributed one sign to that website, and I hope to contribute more depending on my access to a PC. (For some reason, Macs cause issues with creating videos.) I’ll also try to spread the word to other people I know who may find the website a long-overdue resource.

What can I conclude? It would be nice to have the ASL-STEM Forum more widely known across the ASL community, and it would definitely be great if interpreters were required to know about that website as part of their job. They wouldn’t have to learn all the signs there – they would just need to keep the site in mind to act as a possible reference. Alternatively, perhaps only interpreters with the special R.I.D. (Registry of Interpreters for the Deaf) certification should be required to contribute or incorporate signs from that website since they are typically the ones interpreting for undergraduates, who take more advanced courses than secondary school children.

Ultimately, though, this is not going to make or break a deaf student’s undergraduate career. College students should generally be prepared to understand terms on their own and actively collaborate with others to make sure that no comprehension is lost when an ASL sign is missing.

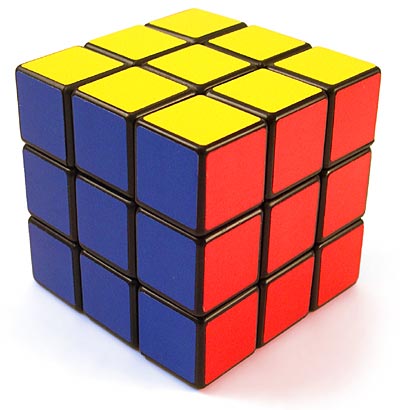

Rubik's Cube Orientations

A completely solved cube is one orientation of a Rubik’s Cube. Turning a face results in another … so how many total orientations of the Rubik’s Cube are there? To answer that, we need to look at how many possible combinations of corner and edge pieces are valid, in the sense that the cube is solvable. Later, I’ll discuss how many possible ways there are of combining the pieces randomly after disassembling the cube, which usually results in an unsolvable cube.

There are six center “pieces” that do not move; they are fixed in place. Then we have the corners and edges that compose the rest of each of the six faces of the cube. There are eight corner pieces, which have three colors, and twelve edge pieces, which have two colors. Take a look at a Rubik’s cube and make sure you understand the distinction.

We think of having eight corner pieces to “fit” into eight corner “slots” in the Rubik’s cube, so there are 8! ways to position the corners. Each individual corner, though, has three faces, and they can be oriented in different ways while maintaining the same actual position on the cube. Therefore, there are 3^7 ways of orienting the faces of the corners. Notice that it’s not 3^8 because if we orient the faces of the first seven corners, then the eighth corner is fixed in place – it cannot be modified without changing the position of the faces on the other corners.

So far, that gives us 8!*3^7 possible orientations. But we now have to consider our 12 edges. We have 12 edges so there are 12! ways of positioning them, along with 2^11 orientations for their two faces. Again, we use n-1 edges where n=12 since the twelfth edge is forced in place if we don’t want to alter the first 11 edges. This implies that if we have a completely “solved” Rubik’s cube with the exception of one edge that is in the wrong orientation, we won’t ever be able to solve that cube since there’s no algorithm that can adjust the faces of one edge without messing up another part of the cube. (Well, you could take the cube apart if you want, but that is cheating.)
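The "last piece is forced" idea can be sketched in a few lines of Python. This is only an illustration under an assumed bookkeeping convention that is not spelled out in the post: each corner gets a twist value of 0, 1, or 2 and each edge a flip value of 0 or 1, both measured against some fixed reference, and a reachable cube has twists summing to a multiple of 3 and flips summing to an even number. The function names are hypothetical.

```python
def forced_last_twist(first_seven_twists):
    """Twist (0, 1, or 2) the eighth corner must have, given the other seven.

    Assumed convention: the eight twists must sum to a multiple of 3.
    """
    return (-sum(first_seven_twists)) % 3


def forced_last_flip(first_eleven_flips):
    """Flip (0 or 1) the twelfth edge must have, given the other eleven.

    Assumed convention: the twelve flips must sum to an even number.
    """
    return sum(first_eleven_flips) % 2


def orientations_are_consistent(corner_twists, edge_flips):
    """Check only the twist and flip constraints, not the piece positions."""
    return sum(corner_twists) % 3 == 0 and sum(edge_flips) % 2 == 0


# A cube that is solved except for a single flipped edge fails the check.
print(orientations_are_consistent([0] * 8, [1] + [0] * 11))  # False
```

Because the last twist and the last flip are determined by the others, only 3^7 and 2^11 free choices remain, which matches the counts above.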

All together, we have 8!*3^7*12!*2^11 orientations of the cube. But this is actually double the true count, because we have to consider even vs. odd permutations. By this, we are talking about the number of transpositions we have to perform. A transposition here refers to swapping two corners without altering the rest of the cube, or swapping two edges without altering the rest of the cube. A key concept is that the overall “sign” for the group of edges and the group of corners must be the same; both can be even, both can be odd, but they cannot differ. A group, by the way, is a set with an operation that satisfies properties of associativity, identity and invertibility, but that is beyond the scope of this article because no abstract algebra knowledge is assumed.

What does this mean? Suppose we have a cube that is almost solved. Also suppose that if we can perform 3 transpositions (or swaps) of the corner pieces, we will have correctly oriented all the remaining corners. This means that, if the cube is solvable, the number of swaps needed for the edge pieces must be odd, so the overall parity is even. We might also need 3 swaps of the edges to solve the cube, or we could use just 1 swap if necessary. It is impossible to solve a cube that has an overall parity that is odd.
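For anyone who wants to test the parity rule on a concrete arrangement, here is a minimal Python sketch. It assumes a representation that is mine, not anything standard: `corner_perm[i]` is the index of the corner piece sitting in slot `i` (slots and pieces numbered 0 to 7), and likewise `edge_perm` for the 12 edges. It checks only the even-versus-odd condition, not how the pieces are twisted or flipped.

```python
def permutation_parity(perm):
    """Return 0 if perm is an even permutation, 1 if it is odd.

    A cycle of length k can be undone with k - 1 swaps, so we add up
    (cycle length - 1) over all cycles and take the result mod 2.
    """
    seen = [False] * len(perm)
    swaps = 0
    for start in range(len(perm)):
        if seen[start]:
            continue
        length = 0
        j = start
        while not seen[j]:
            seen[j] = True
            j = perm[j]
            length += 1
        swaps += length - 1
    return swaps % 2


def parity_allows_solution(corner_perm, edge_perm):
    """True when corners and edges are both even or both odd."""
    return permutation_parity(corner_perm) == permutation_parity(edge_perm)


solved_corners = list(range(8))
solved_edges = list(range(12))

# Swap two corners only: corners are odd, edges are even, so no solution.
two_corners_swapped = [1, 0, 2, 3, 4, 5, 6, 7]
print(parity_allows_solution(two_corners_swapped, solved_edges))  # False
```

Swapping two edges as well would make both parities odd, and the check would pass again.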

Therefore, we have to divide what we computed before by two. So the number of possible orientations of a Rubik’s cube, under the assumption that the cube is solvable, is (8!*3^7*12!*2^11)/2 = 43,252,003,274,489,856,000. If we wanted to see the total number of orientations, regardless of whether the cube is solvable, we multiply this by 12. Only a third of the corner orientations are solvable, and only half of the edge orientations are solvable. And, of course, we have the even vs. odd permutations as I explained above. All together, 2*2*3 = 12.
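The arithmetic is easy to double-check with a short Python sketch (the variable names are just labels I picked for the factors in the formula):

```python
from math import factorial

corner_positions = factorial(8)    # 8! ways to place the corners
corner_twists = 3 ** 7             # the eighth corner's twist is forced
edge_positions = factorial(12)     # 12! ways to place the edges
edge_flips = 2 ** 11               # the twelfth edge's flip is forced

# Halve the product because corner and edge parities must match.
solvable = corner_positions * corner_twists * edge_positions * edge_flips // 2

# Random reassembly: every corner and edge can be oriented freely.
all_assemblies = factorial(8) * 3 ** 8 * factorial(12) * 2 ** 12

print(f"{solvable:,}")             # 43,252,003,274,489,856,000
print(all_assemblies // solvable)  # 12
```

Python integers have arbitrary precision, so there is no overflow to worry about even though the result is far larger than a 64-bit integer can hold.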

The astute observer will realize that this means that if he has all the pieces of the Rubik’s cube scattered in front of him and assembles them randomly, he will have a 1 in 12 chance of creating a solvable Rubik’s Cube.

Meal Signs and a Shift from SEE to ASL

All my life, I’ve grown used to the English version of signing the words breakfast, lunch, and dinner. But I began to notice a change this past year when my ASL interpreters repeatedly signed those three words in a more “ASL-like” manner. You can view the differences in this video. I’m surprised that I never realized this distinction sooner. My “ASL” interpreters before college must have used the English version of those signs so frequently that I never thought of any alternative signs. (Yet another reason why college is better than high school, but that’s beyond the scope of my article.)

The three signs are fairly straightforward. They all start with “eat,” which makes sense since all three are meals. Then the dominant arm is used to indicate the time of the day: morning, noon, or evening. This is much, much more aesthetically pleasing to see than using single-letter versions of those meals that correspond to their first letter (b, l, and d). This is, again, part of a series of ASL signs that differ from their SEE, or Signed Exact English, counterparts by not using letters to represent a word. Using the first letter of a word as part of its sign is one of two distinct features of Signed Exact English. (The other, of course, is that SEE strives to be a direct word-for-word translation of English.) SEE, while not its own language like ASL, is often what students learn at first since it is easier to learn. After all, if one knows the English language well, he or she can just learn the signs of each English word. But that doesn’t mean SEE is better than ASL. In fact, the Deaf Community strongly discourages the use of SEE.

So what are the downsides of using SEE? In my opinion, there are three main points. The first and most important is that SEE cannot realistically replicate every single word in the English language. People can speak faster than they sign, even when accounting for time needed to breathe in the former. This means that a series of short words such as “tell me, is it a big one on my arm or is it not?” can really trip up a person trying to replicate words down to every single “a.” A person signing in ASL generally omits small words like “a” and “on.” An ASL equivalent for my sample question would probably be signing “Tell-me-big-small” and then pointing to one’s arm. Related to that, trying to translate every single word will likely tire people’s arms more quickly than ASL will. There’s a reason why many deaf students who use ASL are provided two interpreters per class (as I am) even for just a 50-minute lecture. Signing is a physically exhausting job if done with attention and precision, and there’s no need to exacerbate things by painstakingly signing every single “the.”

The second downside is that it interferes with ASL comprehension. I already gave you an example of true ASL signs that differ from their English versions. A similar case is with the word “red.” The ASL sign for red is performed by touching your index finger below your lips and stroking it down reasonably quickly. But it can also be done by crossing your middle finger over the index finger, and performing the same sign. That cross will form the letter “R” – see this. But why add needless complexity to our sign when we were perfectly fine with just one finger? Moreover, there’s no other sign, for a color or otherwise, that closely resembles red so much that we would want the extra cross for distinction. This word is one of many that differ between commonly performed ASL and SEE. Some, like red, don’t differ enough to cause much confusion in a conversation between an ASL proponent and an SEE proponent. But others, like breakfast, could prove problematic and would require interrupting a conversation to ask questions. But the interference with ASL comprehension goes beyond just mismatching signs. SEE is performed following English grammar since it’s a translation, but ASL follows different grammatical rules. The question “When was the last time I saw you” might be expressed in ASL as signing “Last-time-saw-you-WHEN?” The order of words, as well as what’s ultimately signed, can differ substantially. This creates an unfortunate chasm between ASL and SEE users. While such people can often understand each other regardless, it would be nice if they were actually signing under the same guidelines.

The third might be one of my minor nitpicks, but I find SEE simply not interesting. When done properly, ASL is a pleasing language, a sight to behold. But SEE, due to its constraint of being a translation, can’t match the fluidity of ASL, as it must follow the standard rules of English grammar. If you ever get a chance to see SEE users and ASL users in close proximity, compare the two kinds of signing. I am not arguing that ASL is so beautiful that it’s ineffable, but from my experience, ASL in general has fewer jerky and stopping motions than SEE does.

Of course, there are other opinions on SEE. I have seen several websites that condemn SEE on the basis that it excludes deaf people from the Deaf Community. This claim does seem reasonable since I would imagine that people want to follow the “exact” same language, but it’s difficult to prove something like this scientifically. Add that to the fact that SEE and ASL users can often understand each other, and I don’t think that SEE people are significantly excluded, if at all. I’ve been to many events organized by deaf people and I don’t think I’ve had much difficulty fitting in with other ASL users. That’s one of the beauties of fingerspelling, which is thankfully common to both users.

By the way, can you sign all letters of the alphabet in four seconds? I can do the entire alphabet faster than four seconds on both hands.

Mathematics of a Rubik’s Cube

In less than 6 hours, it will be 2012. And in less than 72 hours, I’ll be back in my Williams College room for the Winter Study period. I’m taking a course called “The Mathematics of a Rubik’s Cube” taught by a professor whose research focus is on algebra. That’s the only course I’m taking for the four-week period, which is great for me since I can focus all my time on learning about the cube. Solving a Rubik’s cube doesn’t require much thought since there are many algorithms available online. But I’m hoping that the math of the cube won’t rely much on rote review and memorization. As a bonus, I’ll also expect to be able to speed cube faster than I’ve ever done before. Obviously, I’m not planning to try and break any records; this is just for my own entertainment. Maybe I’ll even be able to solve 4x4x4, 5x5x5, 6x6x6, 7x7x7, and 4x4x4x4 cubes.

The 4x4x4x4 cube has an extra dimension. This is a correct video of such a cube, as well as a correct solution. Technically, I should call those shapes tesseracts, which are a classification of hypercubes dealing with four dimensions.

Expect to see more entries about the Rubik’s Cube in January. I’ll also be posting more about American Sign Language and hopefully updating the Seita Axioms one day. Meanwhile, Happy New Year!

Where Not to Study

I finished my last final for the fall 2011 semester on December 19. While I was studying over finals week, I also took notice of the studying patterns of my fellow classmates and myself. To do that, I looked at the study habits of students in the libraries. Williams College has two main libraries, Sawyer and Schow, the former being primarily for the humanities and the latter being primarily for the sciences. Clearly, those libraries were going to be packed with nervous students trying to get that A. But was I among those in the library?

During the two weeks or so that were dedicated to studying and taking finals, I don’t think I set foot once in those two main libraries.

Actually, I lied. I did go and enter Sawyer library just to print a document. But while I was doing that, I noticed students sitting at the main tables on the first floor of Sawyer. They had the necessary books and materials to study with, but they were also with other students or checking their phones. (Most likely, they were texting.)

There’s the problem. I asked myself: Why would these people who are trying to study for final exams or write lengthy final papers study with others at one of the main tables close to the entrance of Sawyer? This no doubt leads to friends entering the library, noticing those people immediately, and saying hello. Thus, these people “studying” face an endless stream of distractions. It didn’t help that there happened to be a rowdy game of Quidditch.

While it was no doubt thrilling to see that happen, especially from the perspective of someone like me who wasn’t distracted by the event, I can’t help but wonder if anyone was in the library and regretted it. Unless you can somehow get an isolated spot in the library – which is actually pretty tough in both of Williams’ main libraries – I’d recommend studying elsewhere. It’s one of the small things that can really improve academic performance. Obviously, there will be some exceptions, such as if you’ve got to work in a group for some reason. But the vast majority of study time should really be done in isolation. And preferably, with the phone off.

A picture of the main tables of Schow library, from the Williams College website. I have avoided studying in this area for months, despite its attractive architecture.

ASL Guidelines

I learned American Sign Language, which I’ll abbreviate to ASL from this point forward, when I was just two years old. I then took up English immediately after, and those are the only two languages that I’m fluent in today. (Here’s my preemptive apology to all the people fluent in Japanese; if you know me, you understand this.) My English is relatively better than my ASL, since I practice the former more, but I can still understand ASL well. For many years, I was cognizant of the various ways to emphasize signs to display various levels of expression. One of the things that I didn’t comprehend until just recently, however, was the amazing web of syntax and grammar rules in ASL. Many involve body movement and contortions of the English language that are not entirely instinctive.

I was formally introduced to ASL Syntax when I was a freshman at Williams College as a teaching assistant to the RUSS 12 – Introduction to American Sign Language course offered for Winter Study, the four-week period between the first and second semesters. Previously, I never had anyone tell me that the correct way to ask a yes or no question was with eyebrows pushed up. Similarly, signing a phrase with the translated English equivalent of “I teacher” was equivalent to the non-translated English equivalent of “I am a teacher.” (I knew this in middle and high school intrinsically; getting it described to me made it pleasantly clear.) This was all interesting to me, so I absorbed – and hopefully retained – the material just as well as the students in RUSS 12 if not more. Here are a handful of the rules that every person knowing ASL should follow. I’ll call them the 10 Seita Axioms, because it’s not illegal to do so.

Axiom I: Signing an English phrase word-by-word is discouraged.

Axiom II: Never sign the word “is.” This rule generally applies to other small words too, such as articles and many prepositions.

Axiom III: While asking yes/no questions, keep your eyebrows tilted up.

Axiom IV: While asking a who/what/where/when/why question, keep your eyebrows tilted down.

Axiom V: Use of classifiers is encouraged.

Axiom VI: Mouth the words that you sign, but do not use your voice.

Axiom VII: Emphasize the tone of your signs.

Axiom VIII: Make prudent use of indexing.

Axiom IX: The simplest way to manage personal pronouns is to point.

Axiom X: Use inflection to modify your signing; this aids brevity and clarity.

“Footnotes” for each axiom, which will probably need their own article.

Axiom I Footnote: If you do so, you’re signing Signed Exact English (abbreviated SEE).

Axiom II Footnote: There are other words that you shouldn’t sign, as I mention in the following sentence in the axiom, but “is” is probably the most incongruous of words to sign in ASL. I felt it was prudent to give it an axiom to itself.

Axiom III Footnote: Fairly self-explanatory.

Axiom IV Footnote: Think of it as a way of representing confusion. Sometimes it occurs instinctively when asking someone a question in English.

Axiom V Footnote: Classifiers here are signs that can represent the form, movement, or appearance of an object. For instance, to indicate someone’s walking, you could just slide your index finger across your body.

Axiom VI Footnote: If you speak while signing at the same time, it’s like you’re expressing two different languages simultaneously. While it can be helpful in situations when you’re communicating with a person who only knows ASL and another person who only knows English, it’s frowned upon in the deaf community.

Axiom VII Footnote: If you’re just a little mad, move your hands up quickly but briefly. If you’re at the level of madness where it’s not safe for someone to be within a one-mile radius of you … we need to see that in the sign.

Axiom VIII Footnote: If you’re talking about Bob and Sarah, point to the left if you want to describe Bob, and to the right for Sarah. Clearly, this becomes impractical with a large number of objects. In that case, just be sure to clarify what you’re signing beforehand.

Axiom IX Footnote: To sign the general word “he,” point your finger in the air.

Axiom X Footnote: If you’re very happy that something is done, instead of signing the cumbersome “very,” just emphasize the “very” when signing “happy.” Think of it as the difference between “I am happy” and “I am HAPPY.”

Above is, literally, my first attempt at creating a set of concise yet comprehensive ASL guidelines. I hope to eventually update to version 2, version 3, and so forth. I would copyright this, but I stole this idea from another deaf person who postulated these axioms (just kidding). © 2011 Daniel Seita. See? I can do this stuff. I now feel obligated to send my computer science professor a thank-you note for encouraging us to copyright all of our writing. Maybe I can get extra credit.

Before I end this post (which, sadly, coincides with the end of a Williams College class recess), I’d like to provide some references. A great website that contains much of what I said and more is Lifeprint, which was created by Bill Vicars. This website was used in RUSS 12. Additionally, there are numerous online dictionaries available that may include more signs. I’ve listed one below the Lifeprint link.

(I’ve had problems with the last link, but maybe it’s because I use a Macbook Pro.)

I did not include “knowing the alphabet” since that should be acquired before doing ANY sign language at all. Seriously, if you can’t sign the alphabet on one hand in less than ten seconds, review the signs. Meanwhile, I’m going to explore the Internet a bit more and see what revisions to make to my axioms.

The Night of Computer Science

Last Thursday night, I decided to finally start on my 3 computer science programs that I thought were due on Sunday night (they were actually due 2 days later … a big relief!). I figured that this wouldn’t take that long. I had to write a program that would spit out certain prime numbers to the user. Of course, there were more specifications, but that’s the general idea. Maybe it’s a bit hackneyed, but I decided to make a record of what happened in a diary format. It may be used by me as a way to laugh at myself.

Some background: I’m programming in the C language, and I’m using emacs to assist in compiling and input/output.

11:09 PM: I arrive in the computer science lab. It’s in an obscure room on the top floor of the science center (third floor). At least it’s (a) close to my dorm room and (b) has a nice view on both sides of the room of the science library below. I see that four other guys are there in the lab. They’re probably all in some 300 or 400-level class.

11:37 PM: After nearly a half hour of frustration with emacs, I finally figure out how to start typing my C program! Why couldn’t this be on a crystal-clear template? My “Intro to emacs” sheet lists commands, but it doesn’t have suggestions.

12:00 AM: It’s midnight, and one of the guys working near me keeps throwing a ball up in the air and cursing at his computer. I resume typing my program, which is going well so far.

12:15 AM: The guy who kept cursing and banging on his desk gives up. He tells his classmates that he’s had enough for the night. I find out that he’s in an advanced compilers class. He leaves, and one of his classmates follows suit.

12:32 AM: My code should now work for numbers (as input) that are less than or equal to two. Mission accomp … oh wait, I have to take into account input that’s greater than two?

1:00 AM: Still toiling in the lab. One of the guys who had left a half hour ago brings some pizza he got and offers it to me. It’s sausage and bacon-flavored. No thanks. I prefer cheese and buffalo chicken pizza.

1:26 AM: The second method I have is malfunctioning. Why?!?!? I decide to take a break and re-fill my water bottle.

1:39 AM: I think it works! I test out the program and it works for 0, 1, 2, 3, 4, 5, 6, 7, and …8?!? Crap, it works for all numbers OTHER than 8? And I was just about to leave.

1:48 AM: I “revised” my code. Now it doesn’t work for the number 3! (That’s not a factorial, by the way.)

1:59 AM: It doesn’t work with number 10, but it works with all other numbers. What is going on with 3, 8, and 10 tonight?

2:04 AM: Well, that’s it. I think I’m done for now. The program should now work for any number that’s inputted by the user. It’s not completely done, since I’ve got to take into account multiple integers as input, but I’ve done as much as I think I can manage. I need to sleep. I walk out of the building and in about 100 steps I get back to my room. Good night. I realize that if I were using Java, I would have finished before midnight. But Java sucks.

It’s a bit late now, but tomorrow, I have to finish up these programs.

Summer Academy

I spent much of the summer of 2011 at the University of Washington at Seattle as part of the Summer Academy for Advancing Deaf and Hard-of-Hearing in Computing. (Will they ever change that name so it isn’t a mouthful???) It’s a nine-week residential program that brings together 13 deaf and hard-of-hearing students, who take courses and attend talks together. I was one of those students, along with my brother. When I first heard about the program, I had mixed feelings. I didn’t consider myself a computer science person, and I felt like this program wouldn’t match my interests. But what was once a near last-choice summer experience may have unintentionally, yet incredibly, led me to gravitate towards computer science as a major.

You can read the description of the program on the web (alternatively, just Google search Summer Academy Deaf and Hard of Hearing) so I won’t explain everything. However, it wasn’t like being at Williams, since there were only 2 classes there compared with 4 at my college. What this program offered that I hadn’t experienced before was the opportunity to see what current computer science graduate students and workers were doing. Graduate students presented their topics, ranging from Android programs to touch-screens for blind people, while people in industry talked about their experience and jobs, which typically involved software engineering or information technology.

We’ll see how this program impacts me in the future. Check back in five years.

In the meantime, I’m going to read more about Minecraft, the computer game that is ultra-popular globally and not just at the Summer Academy, and its upcoming 1.8 version. I can’t wait….

Hello World!

Hello world!

Well, I guess that’s good enough for an introduction. No, wait, this isn’t a world of computer science, so I guess it’s not good enough. (Computer science people will understand the joke.) Actually, I have to retract the last statement: isn’t it already a world of computers? Anyway, I’m an eighteen-year-old male living in the United States. I’m a student at Williams College in Williamstown, Massachusetts, and my hometown is Guilderland, New York. I have also been deaf since birth.

I have two primary objectives in mind about this blog. The first is that I want to spread my knowledge of deafness and deaf culture to my readers. The second is that I also want to talk about what life is like as a college student, as well as in academia. I hope to land a job in academia within the next decade. By doing so, I’ll be one of the few deaf people I know who have an academic job. I know that there are numerous deaf professors at the National Technical Institute for the Deaf at RIT – maybe even fifty or so – but elsewhere, professors are scarce. It is my lifelong aim to rectify that.

And, naturally, there will also be some random posts about things just popping up in my head, or just random things because I want to write something down for the sake of writing something down. Or perhaps they’ll be something so important that I just can’t ignore them. What about the House just passing a deal to avoid a debt crisis on August 2nd? (Oh wait, that’s a random thought!) I’ll just include those in an “everything else” category. But hopefully, I’ll be able to focus mostly on academia and deafness.

More details about my aspirations, expectations, and interests will come in future posts when I get used to the WordPress platform. UPDATE: As of May 13, 2015, I have migrated to Jekyll.

I look forward to having a long, lasting blog!

-Daniel Seita

August 1, 2011

Why Academia?

I guess I’ll make things clear right away. Working in academia can be very, very difficult. It’s hard to get paid to do research at a top school, with all the competition from the freshly minted PhDs from last year and rock-star tenured professors in long-term positions. Politics are rife, and tenure can be a measure of how much your colleagues like you rather than the true quality of your research. You also have to deal with students, of which a select few will be whining at you, barraging you with complaints about grades …. Last, but not least, you don’t get to start being a professor (unless you’re extremely gifted and got a PhD at 25 or younger) until you’re almost thirty. So this raises the two-part question: Why do I want a career in academia, and why do I think it’s right for me?

The first is that, as a deaf student, I don’t think I’d function well in many fields that my classmates at Williams seem to be gravitating towards. Investment banking, finance, private equity, and consulting seem to be all the rage here, which probably reflects how popular economics is as a major. I’m sure I could get a decent job and a living following the finance route, but that requires so much communication between me and clients, and I’m not sure if many would enjoy a deaf person working with them, all other things being equal. I think that, due to my natural tendency to study a lot of material in depth, I’m more suited towards graduate school and the PhD track. I’m primarily studying computer science, economics, and mathematics at Williams, and I’m probably going to pursue a PhD in computer science. I don’t want a PhD in mathematics, since I’m not sure how good I’d be at conjuring new solutions to math problems, and I find computer science far more interesting. Economics is also interesting, but computer science may have more opportunities for me outside of academia should my quest to be a professor hit a severe gridlock. (I have backup plans!)

In that respect, Williams is a great place for me to start my prospective career. It’s a fantastic institution known for the quality of its research and the close interaction between students and faculty. The student-to-faculty ratio is seven to one. I haven’t gotten involved in true research yet, but I’m hoping to start as early as the fall 2011 semester. I’ll probably ask around the computer science department and see if there’s any interest in a research assistant to help them with some grunt work. After all, I need to start somewhere. And in the summer of 2012, I hope to land a research internship at a Research Experience for Undergraduates (REU) in computer science. Unfortunately, Williams does not have a computer science REU (it has a very prestigious REU in mathematics), so I’ll have to apply elsewhere. I’ll have to aim *wide* since REUs are super-competitive to get into. I would guess that almost all of them have acceptance rates of 10 percent or less for students who are not already in that school. Ouch!

That’s looking far ahead in the future, though. I’ll update this more later.