My Blog Posts, in Reverse Chronological Order


Before Robots Can Take Over the World, We Have to Deal With Calibration

Clockwise, starting from top left: the Da Vinci, the ABB YuMi, the PR2, and the Toyota HSR.


I now have several months of research experience in robotics. I am familiar with the following four robots, roughly in descending order of my knowledge of them:

- Da Vinci. Price: 2,000,000 USD (!!!). I’m not sure how much of the full set I use, though — I only use two of the arms, and the components might be cheaper versions. After all, even for well-funded Berkeley robotics labs, paying 2 million USD for a robot is impractical. Smaller hospitals also cannot afford the full Da Vinci.

- Toyota Human Support Robot (HSR). Price: ???. Oddly, I can’t find a price! In fact, I’m not even sure where to buy it.

- ABB YuMi. Price: 40,000 USD. At least this one is on the “cheap” end … I think?

- Personal Robot 2 (PR2), from Willow Garage1. Price: 280,000 USD. Yikes! And that’s the open source version – the raw sticker cost started as 400,000 USD when it was released in 2010. Given that Willow Garage no longer exists, I’m not sure if it’s possible to buy a PR2.

I have sadly never touched or worked with the YuMi or the PR2, though I’ve manipulated the Da Vinci on a regular basis. The one-sentence summary of the Da Vinci: it’s a surgical robotics system which is currently the only such system approved by the U.S. Food and Drug Administration.

This is interesting. Now let’s switch to another topic: suppose you talk to a layperson about working in robotics. One typical, half-serious conversation involves this question: when will your robots take over the world?

I would respond by pointing out the obvious restrictions placed on the Da Vinci. It’s fixed to a base, with arms that perform a strictly limited set of surgery-related functions. So … it can’t really “join forces” with other Da Vincis and somehow navigate the real world.

But perhaps, your conversationalist argues, we can take the arms of the Da Vinci and integrate them into a mobile robot (e.g. the Toyota HSR). If the Da Vinci works in surgical applications, then it must logically be very nimble-fingered2. Think of the things it can do! It can pick locks, use car keys, redirect electric wires, and so forth.

Alas, from my experience, it’s difficult to even get the robot arms to go where I want them to go. To make this more concrete, suppose we’re looking at an image of a flat surgical platform through the Da Vinci camera mounted above. When we look at the image, we can visually identify the area where we want the arm (or more precisely, the “end effectors”) to go, and we can figure out the pixel values. Mathematically, given \((x_p,y_p)\) in pixel space, with \(x_p,y_p\) positive integers bounded by the image resolution (e.g. 1920 by 1080), we want to find the corresponding six-dimensional robot coordinates \((x_r,y_r,z_r,\text{yaw}_r,\text{pitch}_r,\text{roll}_r)\), where I’ve added yaw, pitch, and roll along with the \(r\) subscript representing “robot.”

The problem is that we can’t directly convert from pixel to robot points. The best strategy I’ve used for dealing with this is to do some supervised learning. Given known \(x_p,y_p\) points, I can manually move the robot end effectors to where they should be. Then my code can record the robot coordinates. I repeat this process many times to get a dataset, then perform supervised learning (e.g. with a random forest) to find the mapping. Finally, I use that map in real experiments.
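Here is a minimal sketch of that calibration loop in plain Python. To keep it dependency-free, I swap the random forest for a k-nearest-neighbor regressor, which is a different (and simpler) non-parametric method. The function names, the calibration points, and the robot poses are all made up for illustration and are not from my actual Da Vinci code.

```python
import math


def fit_knn(pixels, robot_coords):
    """Store the calibration pairs; k-NN has no real training step."""
    if len(pixels) != len(robot_coords):
        raise ValueError("need one robot pose per pixel point")
    return list(zip(pixels, robot_coords))


def predict_knn(model, pixel, k=3):
    """Average the robot poses of the k calibration points nearest to `pixel`.

    Note: naively averaging yaw/pitch/roll is only reasonable when the
    angles are far from any wrap-around point.
    """
    nearest = sorted(model, key=lambda pair: math.dist(pair[0], pixel))[:k]
    dims = len(nearest[0][1])
    return tuple(
        sum(pose[i] for _, pose in nearest) / len(nearest) for i in range(dims)
    )


def position_error(predicted, actual):
    """Euclidean distance over the first three (x, y, z) coordinates."""
    return math.dist(predicted[:3], actual[:3])


# Hypothetical calibration data: pixel (x_p, y_p) -> robot
# (x, y, z, yaw, pitch, roll), with positions in meters.
pixels = [(100, 100), (100, 200), (200, 100), (200, 200)]
robot = [
    (0.010, 0.010, -0.150, 0.0, 0.0, 0.0),
    (0.010, 0.020, -0.150, 0.0, 0.0, 0.0),
    (0.020, 0.010, -0.150, 0.0, 0.0, 0.0),
    (0.020, 0.020, -0.150, 0.0, 0.0, 0.0),
]

model = fit_knn(pixels, robot)
prediction = predict_knn(model, (150, 150), k=4)
error_m = position_error(prediction, (0.015, 0.015, -0.150, 0.0, 0.0, 0.0))
```

In practice the held-out error from `position_error` is the number to watch, since that is where a multi-millimeter calibration error would show up.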

This is the process of calibration. And unfortunately, it doesn’t work that well. I’ve found that I consistently get errors of at least 4 millimeters, and for automated robot surgery that’s pretty dangerous. To be clear, I’m focused on automated surgery, not teleoperation, which is when a human expert surgeon controls some switches which then translate to movement of the Da Vinci arms.

Indeed, calibration is a significant enough problem that it can be part of a research paper on its own. For instance, here’s a 2014 paper from the IEEE International Conference on Automation Science and Engineering (CASE) which deals with the problem of kinematic control (which involves calibration).

Calibration — or more broadly, kinematic control — is one of those necessary evils for research. I will tolerate it because I enjoy working with robotics and with enough manual time, usually calibration becomes workable for running experiments.

I hope to continue working in robotics to make robots more autonomous. Sadly, they won’t be taking over the world.

1. Willow Garage also developed the ROS system, which is used in many robotics systems, including the Da Vinci and Toyota HSR. While Willow Garage is no longer around, it has a long history and is considered an iconic robotics company. Many companies have spawned from Willow Garage. I’m embarrassed to admit that I didn’t know about Willow Garage until a few months ago. I really need to read more about the tech industry; it might be more informative for me than skimming over the latest political insults hurled on The New York Times and The Wall Street Journal. ↩

2. I think a conscious Da Vinci might take some offense at the YuMi being advertised as “The Most Nimble-Fingered Robot Yet.” ↩

How I Organize My GitHub Repositories

I’ve been putting more of my work-related stuff in GitHub repositories and by now I have more or less settled on a reasonable workflow for utilizing GitHub. For those of you who are new to this, GitHub helps us easily visualize and share code repositories online, whether in public (visible to everyone) or private (visible only to those with permissions), though technically repositories don’t have to be strictly code-based. GitHub is built on top of git, the version control system that actually handles the technical machinery. It’s grown into the de facto place where computer scientists (particularly those in Artificial Intelligence) present their work. What follows is a brief description of what I use GitHub for; in particular, I have many public repositories along with a few private repositories.

For public repositories, I have the following:

- A Paper Notes repository, where I write notes for research papers. A few months ago, I wrote a brief blog post describing why I decided to do this. Fortunately, I have come back to this repository several times to see what I wrote for certain research papers. The more I’m doing this, the more useful it is! The same holds for running a blog; the more I find myself rereading it, the better!

- A repository for coding various algorithms. I actually have two repositories which carry out this goal: one for reinforcement learning and another for MCMC-related stuff. The goal of these is to help me understand existing algorithms; many of the state-of-the-art algorithms are tricky to implement precisely because they are state-of-the-art.

- A repository for miscellaneous personal projects, such as one for Project Euler problems (yes, I’m still doing that … um, barely!) and another for self-studying various courses and textbooks.

- A repository for preparing for coding interviews. I thought it might be useful to post some of my solutions to practice problems.

- A repository for my vimrc file. Right now my vimrc file is only a few lines, but it might get more complex. I’m using a number of computers nowadays (mostly via ssh), so one of the first steps to get started with a machine is to clone the repository and establish my vimrc.

- Last, but certainly not least, don’t forget that there’s a repository for my blog. That’s obviously the most important one!

On the other hand, there are many cases when it makes sense for individuals to use private repositories. (I’m using “individuals” here since it should be clear that all companies have their “critical” code in private version control.) Here are some of the private repositories I have:

- All ongoing research projects have their own private repository. This should be a no-brainer. You don’t want to get scooped, particularly with a fast-paced field such as Artificial Intelligence. Once such papers are ready to be posted to arXiv, that’s when the repository can be released to the public, or copied to a new public one to start fresh.

- I also have one repository that I’ll call a research sandbox. It contains multiple random ideas I have, and I run smaller-scale experiments here to test ideas. If any ideas look like they’ll work, I start a new repository to develop them further. On a side note, running quick experiments to test an idea before scaling it up is a skill that I need to work on!

- Finally, I have a repository for homework, which also includes class final projects. It’s particularly useful for when one has laptops that are relatively old (like mine) since the computer might die and thus all my work LaTeX-ing statistics homework might be lost. At this point, though, I think I’m done taking any real classes so I don’t know if I’ll be using this one anymore.

Well, this is a picture of how I manage my repositories. I am pleased with this configuration, and perhaps others who are starting out with GitHub might adapt some of these repositories for themselves.

Saving Neural Network Model Weights Using a Hierarchical Organization

Over the last two weeks, I have been using more Theano-based code for Deep Learning instead of TensorFlow, in part due to diving into OpenAI’s Generative Adversarial Imitation Learning code.

That code base has also taught me something that I have wondered about on occasion: what is the “proper” way to save and load neural network model weights? At the very least, how should we as programmers save weights in a way that’s robust, scalable, and easy to understand? In my view, there are two major steps to this procedure:

- Extracting or setting the model weights from a single vector of parameters.

- Actually storing that vector of weights in a file.

One way to do the first step is to save model weights in a vector, and use that vector to load the weights back to the model as needed. I do this in my personal reinforcement learning repository, for instance. It’s implemented in TensorFlow, but the main ideas still hold across Deep Learning software. Here’s a conceptually self-contained code snippet for setting model weights from a vector self.theta:

    self.theta = tf.placeholder(tf.float32, shape=[self.num_params], name="theta")
    start = 0
    updates = []
    for v in self.params:
        shape = v.get_shape()
        size = tf.reduce_prod(shape)
        # Note that tf.assign(ref, value) assigns `value` to `ref`.
        updates.append(
            tf.assign(v, tf.reshape(self.theta[start:start+size], shape))
        )
        start += size
    self.set_params_flat_op = tf.group(*updates)  # Performs all updates together.

In later code, I run TensorFlow sessions on self.set_params_flat_op and supply self.theta with the weight vector in the feed_dict. Each update extracts a segment of the self.theta vector and assigns it to the corresponding weight. The main thing to watch out for here is that self.theta actually contains the weights in the correct ordering.
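To make the start/size bookkeeping easier to see without TensorFlow, here is a rough plain-Python sketch of the same idea. The `(name, shape, values)` tuples and the two function names are hypothetical stand-ins for real variables, not part of my repository.

```python
from math import prod


def flatten_params(params):
    """Concatenate every parameter's values into one flat list, in list order."""
    theta = []
    for _, _, values in params:
        theta.extend(values)
    return theta


def set_params_flat(params, theta):
    """Slice `theta` back into per-parameter segments.

    The order of `params` must match the order used when flattening,
    otherwise weights silently land in the wrong place.
    """
    expected = sum(prod(shape) for _, shape, _ in params)
    if len(theta) != expected:
        raise ValueError("theta has %d entries, expected %d" % (len(theta), expected))
    start = 0
    updated = []
    for name, shape, _ in params:
        size = prod(shape)  # number of scalars in this parameter
        updated.append((name, shape, list(theta[start:start + size])))
        start += size
    return updated


# Hypothetical two-parameter model: a 2x3 weight matrix and a length-3 bias,
# each stored as a flat list alongside its shape.
params = [
    ("W0", (2, 3), [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]),
    ("b0", (3,), [0.1, 0.2, 0.3]),
]

theta = flatten_params(params)
restored = set_params_flat(params, [2 * t for t in theta])
```

The length check is the one safeguard I can add cheaply; it catches a vector of the wrong size, but not one whose segments are in the wrong order.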

I’m more curious about the second stage of this process, that of saving and loading weights into files. I used to use pickle files to save the weight vectors, but one problem is the incompatibility between Python 2 and Python 3 pickle files. Given that I sometimes switch back and forth between versions, and that I’d like to keep the files consistent across versions, this is a huge bummer for me. Another downside is the lack of organization. Again, I still have to be careful to ensure that the weights are stored in the correct ordering so that I can use self.theta[start:start+size].
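For what it’s worth, one common workaround for the version mismatch looks roughly like the sketch below, written for Python 3. The helper names and the weight dictionary are made up for illustration; the two relevant pieces are the `protocol=2` argument when writing and the `encoding="latin1"` argument when reading.

```python
import io
import pickle


def dump_weights(weights, fileobj):
    """Write with protocol 2, the highest pickle protocol Python 2 can read."""
    pickle.dump(weights, fileobj, protocol=2)


def load_weights(fileobj):
    """Read under Python 3; encoding="latin1" helps with pickles that were
    written by Python 2 and contain byte strings (e.g. NumPy arrays)."""
    return pickle.load(fileobj, encoding="latin1")


# Hypothetical weights, keyed by a path-like name.
weights = {"layer_0/W": [0.5, -1.25, 3.0], "layer_0/b": [0.0]}

buffer = io.BytesIO()  # stands in for a file opened in binary mode
dump_weights(weights, buffer)
buffer.seek(0)
restored = load_weights(buffer)
```

This keeps a single file readable from both versions, but it does nothing for the organization problem, which is what the hierarchical format discussed next addresses.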

After looking at how the GAIL code stores and loads model weights, I realized it’s different from saving single pickle or numpy arrays. I started by running their Trust Region Policy Optimization code (scripts/run_rl_mj.py) and observed that the code specifies neural network weights with a list of dictionaries. Nice! I was wondering about how I could better generalize my existing neural network code.

Moving on, what happens after saving the snapshots? (In Deep Learning it’s common to refer to weights after specific iterations as “snapshots” to be saved.) The GAIL code uses a TrainingLog class which utilizes PyTables and, by extension, the HDF5 file format. If I run the TRPO code I might get trpo_logs/CartPole-v0.h5 as the output file. It doesn’t have to end with the HDF5 extension .h5 but that’s the convention. Policies in the code are subclasses of a generic Policy class to handle the case of discrete versus continuous control. The Policy class is a subclass of an abstract Model class which provides an interface for saving and loading weights.

I decided to explore a bit more, this time using the pre-trained CartPole-v0 policy provided by GAIL:

    In [1]: import h5py

    In [2]: with h5py.File("expert_policies/classic/CartPole-v0.h5", "r") as f:
       ...:     print(f.keys())
       ...:
    [u'log', u'snapshots']

    In [3]: with h5py.File("expert_policies/classic/CartPole-v0.h5", "r") as f:
       ...:     print(f['log'])
       ...:     print(f['snapshots'])
       ...:
    <HDF5 dataset "log": shape (101,), type "|V80">
    <HDF5 group "/snapshots" (6 members)>

    In [4]: with h5py.File("expert_policies/classic/CartPole-v0.h5", "r") as f:
       ...:     print(f['snapshots/iter0000100/GibbsPolicy/hidden/FeedforwardNet/layer_0/AffineLayer/W'][()])
       ...:
    # value gets printed here ...

It took me a while to figure this out, but here’s how to walk through the nodes in the entire file:

    In [5]: def print_attrs(name, obj):
       ...:     print(name)
       ...:     for key, val in obj.attrs.items():
       ...:         print(" {}: {}".format(key, val))
       ...:

    In [6]: expert_policy = h5py.File("expert_policies/classic/CartPole-v0.h5", "r")

    In [7]: expert_policy.visititems(print_attrs)
    # Lots of stuff printed here!

PyTables works well for hierarchical data, which is nice for Deep Reinforcement Learning because there are many ways to form a hierarchy: snapshots, iterations, layers, weights, and so on. All in all, PyTables looks like a tremendously useful library. I should definitely consider using it to store weights. Furthermore, even if it would be easier to store with a single weight vector as I now do (see my TensorFlow code snippet from earlier), the generality of PyTables means it might have cross-over effects to other code I want to run in the future. Who knows?
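To illustrate why the hierarchy is convenient, here is a dependency-free sketch that mimics the path-based traversal above on ordinary nested dictionaries. It is only an analogy: `visit_items` is my own hypothetical helper rather than the h5py or PyTables API, and the tree contents are made up.

```python
def visit_items(group, callback, prefix=""):
    """Depth-first walk over nested dicts, calling callback(path, obj) per node."""
    for name in sorted(group):
        obj = group[name]
        path = prefix + "/" + name if prefix else name
        callback(path, obj)
        if isinstance(obj, dict):
            # Dicts play the role of groups; anything else is a leaf "dataset".
            visit_items(obj, callback, path)


# Hypothetical snapshot tree; the names echo the path used earlier,
# but the values are invented.
snapshots = {
    "snapshots": {
        "iter0000100": {
            "GibbsPolicy": {
                "hidden": {"W": [[0.1, 0.2]], "b": [0.0]},
            },
        },
    },
}

leaf_paths = []


def collect_leaves(path, obj):
    """Record the full path of every non-dict node."""
    if not isinstance(obj, dict):
        leaf_paths.append(path)


visit_items(snapshots, collect_leaves)
```

Because every weight is addressed by a full path, there is no need to remember the order in which the weights were packed into a single vector.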

Review of Theoretical Statistics (STAT 210B) at Berkeley

After taking STAT 210A last semester (and writing way too much about it), it made sense for me to take STAT 210B, the continuation of Berkeley’s theoretical statistics course aimed at PhD students in statistics and related fields.

The Beginning

Our professor was Michael I. Jordan, who is colloquially called the “Michael Jordan of machine learning.” Indeed, how does one begin to describe his research? Yann LeCun, himself an extraordinarily prominent Deep Learning researcher and considered as one of the three leaders in the field1, said this2 in a public Facebook post:

Mike’s research direction tends to take radical turns every 5 years or so, from cognitive psychology, to neural nets, to motor control, to probabilistic approaches, graphical models, variational methods, Bayesian non-parametrics, etc. Mike is the “Miles Davis of Machine Learning”, who reinvents himself periodically and sometimes leaves fans scratching their heads after he changes direction.

And Professor Jordan responded with:

I am particularly fond of your “the Miles Davis of machine learning” phrase. (While “he’s the Michael Jordan of machine learning” is amusing—or so I’m told—your version actually gets at something real).

As one would expect, he’s extremely busy, and I think he had to miss four lectures for 210B. Part of the reason might be because, as he mentioned to us: “I wasn’t planning on teaching this course … but as chair of the statistics department, I assigned it to myself. I thought it would be fun to teach.” The TAs were able to substitute, though it seemed like some of the students in the class decided to skip those lectures.

Just because his teaching 210B was somewhat “unplanned” doesn’t mean that it was easy, far from it! In the first minute of the first lecture, he said that 210B is the hardest course that the statistics department offers. Fortunately, he followed up with saying that the grading would be lenient, that he didn’t want to scare us, and so forth. Whew. We also had two TAs (or “GSIs” in Berkeley language) who we could ask for homework assistance.

Then we dived into the material. One of the first things we talked about was U-Statistics, a concept that can often trip me up because of my lack of intuition in internalizing expectations of expectations and how to rearrange related terms in clever ways. Fortunately, we had a homework assignment question about U-Statistics in 210A so I was able to follow some of the material. We also talked about the related Hájek projection.

Diving into High-Dimensional Statistics

We soon delved into the meat of the course. I consider this to be the material in our textbook for the course, Professor Martin Wainwright’s recent book High-Dimensional Statistics: A Non-Asymptotic Viewpoint.

For those of you who don’t know, Professor Wainwright is a faculty member in the Berkeley statistics and EECS departments who won the 2014 COPSS “Nobel Prize in Statistics” award due to his work on high dimensional statistics. Here’s the transcript of his interview, where he says that serious machine learning students must know statistics. As a caveat, the students he’s referring to are the kind that populate the PhD programs in schools like Berkeley, so he’s talking about the best of the best. It’s true that basic undergraduate statistics courses are useful for a broad range of students — and I wish I had taken more when I was in college — but courses like 210B are not needed for all but a handful of students in specialized domains.

First, what is “high-dimensional” statistics? Suppose we have a parameter \(\theta \in \mathbb{R}^d\) and \(n\) labeled data points \(\{(x_i,y_i)\}_{i=1}^n\) which we can use to estimate \(\theta\) via linear regression or some other procedure. In the classical setting, we can safely assume that \(n > d\), or that \(n\) is allowed to increase while the data dimension \(d\) is typically held fixed. This is not the case in high-dimensional (or “modern”) statistics where the relationship is reversed, with \(d > n\). Classical algorithms end up running into brick walls in these cases, so new theory is needed, which is precisely the main contribution of Wainwright’s research. It’s also the main focus of STAT 210B.
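As one concrete instance of such a brick wall, consider ordinary least squares. The design matrix \(X\) (whose rows are the \(x_i\)) and the response vector \(y\) are notation I’m introducing here for illustration; this is the standard textbook argument, not something specific to the course.

```latex
\[
\hat{\theta}_{\mathrm{OLS}} = (X^\top X)^{-1} X^\top y,
\qquad X \in \mathbb{R}^{n \times d},\; y \in \mathbb{R}^{n}.
\]
\[
\operatorname{rank}(X^\top X) = \operatorname{rank}(X) \le \min(n, d) = n < d
\quad\Longrightarrow\quad
X^\top X \in \mathbb{R}^{d \times d} \text{ is singular.}
\]
```

So when \(d > n\) the inverse does not exist and the least-squares solution is no longer unique, which is why extra structure (such as sparsity) has to be assumed.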

The most important material to know from Wainwright’s book is the stuff from the second chapter: sub-Gaussian random variables, sub-Exponential random variables, bounds from Lipschitz functions, and so on. We referenced back to this material all the time.
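For reference, here is the definition of a sub-Gaussian random variable as I understand it, together with the tail bound it implies. I’m writing \(\mu = \mathbb{E}[X]\) and calling \(\sigma\) the sub-Gaussian parameter; treat this as my summary rather than a quotation from the book.

```latex
\[
\mathbb{E}\bigl[e^{\lambda (X - \mu)}\bigr] \le e^{\sigma^2 \lambda^2 / 2}
\quad \text{for all } \lambda \in \mathbb{R},
\]
\[
\mathbb{P}\bigl(|X - \mu| \ge t\bigr) \le 2\, e^{-t^2 / (2\sigma^2)}
\quad \text{for all } t \ge 0.
\]
```

The second line follows from the first by applying the Chernoff bound to each tail and optimizing over \(\lambda\).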

We then moved away from Wainwright’s book to talk about entropy, the Efron-Stein Inequality, and related topics. Professor Jordan criticized Professor Wainwright for not including the material in this book. I somewhat agree with him, but for a different reason: I found this material harder to follow compared to other class concepts, so it would have been nice to see Professor Wainwright’s interpretation of it.

Note to future students: get the book by Boucheron, Lugosi, and Massart, titled Concentration Inequalities: a Nonasymptotic Theory of Independence. I think that’s the book Professor Jordan was reviewing when he gave these non-Wainwright-related lectures, because he was using the same exact notation as in the book.

How did I know about the book, which amazingly, wasn’t even listed on the course website? Another student brought it to the class and I peeked over the student’s shoulder to see the title. Heh. I memorized the title and promptly ordered it online. Unfortunately, or perhaps fortunately, Professor Jordan then moved on to exclusively material from Professor Wainwright’s book.

If any future students want to buy the Boucheron et al. book off me, send me an email.

After a few lectures, it was a relief to me when we returned to material from Wainwright’s book, which included:

- Rademacher and Gaussian Complexity (these concepts were briefly discussed in a Deep Learning paper I recently blogged about)

- Metric entropy, coverings, and packings

- Random matrices and high dimensional covariance matrix estimation

- High dimensional, sparse linear models

- Non-parametric least squares

- Minimax lower bounds, a “Berkeley specialty” according to Professor Jordan

I obtained a decent understanding of how these concepts relate to each other. The concepts appear in many chapters outside the ones where they’re formally defined, because they can be useful as “sub-routines” or as part of technical lemmas for other problems.

Despite my occasional complaint about not understanding details in Wainwright’s book (which I’ll bring up later in this blog post), I think the book is above-average in terms of clarity, relative to other textbooks aimed at graduate students. There were often enough high-level discussions so that I could see the big picture. One thing that needs to be fixed, though, is the typos. Professor Jordan frequently pointed these out during lecture, and would also sometimes ask us to confirm his suspicions that something was a typo.

Regarding homework assignments, we had seven of them, each of which was about five or so problems with multiple parts per problem. I was usually able to correctly complete about half of each homework by myself. For the other half, I needed to consult the GSIs, other students, or perform extensive online research to assist me with the last parts. Some of the homework problems were clearly inspired by Professor Wainwright’s research papers, but I didn’t have much success translating from research paper to homework solution.

For me, some of the most challenging homework problems pertained to material that wasn’t in Wainwright’s textbook. In part this is because some of the problems in Wainwright’s book have a similar flavor to exercises in the main text of the book, which were often accompanied with solutions.

The Final Exam

In one of the final lectures of the class, Professor Jordan talked about the final exam (that it would cover a range of questions, that it would be difficult, and so forth) but then he also mentioned that he could complete it in an hour. (Final exams in Berkeley are in three-hour slots.) While he quickly added “I don’t mean to disparage you…”, unfortunately I found the original comment about completing the exam in an hour quite disparaging. I’m baffled by why professors say that; it seems to be a no-win situation for the students. Furthermore, no student is going to question a Berkeley professor’s intelligence; I certainly wouldn’t.

That comment aside, the final exam was scheduled for Thursday at 8:00AM (!!). I was hoping we could keep this time slot, since I am a morning person and if other students aren’t, then I have a competitive advantage. Unfortunately, Professor Jordan agreed with the majority in the class that he hated the time, so we had a poll and switched to Tuesday at 3:00PM. Darn. At least we know now that professors are often more lenient towards graduate students than undergrads.

On the day of the final exam, I felt something really wrenching. And it wasn’t something that had to do with the actual exam, though that of course was also “wrenching.” It was this:

It looked like my streak of having all professors know me on a first-name basis was about to be snapped.

For the last seven years at Williams and Berkeley, I’m pretty sure I managed to be known on a first-name basis to the professors from all of my courses. Yes, all of them. It’s easier to get to know professors at Williams, since the school is small and professors often make it a point to know the names of every student. At Berkeley it’s obviously different, but graduate-level courses tend to be better about one-on-one interaction with students/professors. In addition, I’m the kind of student who frequently attends office hours. On top of it all, due to my deafness, I get some form of visible accommodation, either captioning (CART providers) or sign language interpreting services.

Yes, I have a little bit of an unfair advantage in getting noticed by professors, but I was worried that my streak was about to be snapped. It wasn’t for lack of trying; I had indeed attended office hours once with Professor Jordan (who promptly criticized me for my lack of measure theory knowledge) and yes, he was obviously aware of the sign language interpreters I had, but as far as I can tell he didn’t really know me.

So here’s what happened just before we took the final. Since the exam was at a different time slot than the “official” one, Professor Jordan decided to take attendance.

My brain orchestrated an impressive mental groan. It’s a pain for me to figure out when I should raise my hand. I did not have a sign language interpreter present, because why? It’s a three hour exam and there wouldn’t be (well, there better not be!) any real discussion. I also have bad memories because one time during a high school track practice, I gambled and raised my hand when the team captains were taking attendance … only to figure out that the person being called at that time had “Rizzuto” as his last name. Oops.

Then I thought of something. Wait … why should I even raise my hand? If Professor Jordan knew me, then surely he would indicate to me in some way (e.g. by staring at me). Furthermore, if my presence was that important to the extent that my absence would cause a police search for me, then another student or TA should certainly point me out.

So … Professor Jordan took attendance. I kept turning around to see the students who raised their hand (I sat in the front of the class. Big surprise!). I grew anxious when I saw the raised hand of a student whose last name started with “R”. It was the moment of truth …

A few seconds later … Professor Jordan looked at me and checked something off on his paper — without consulting anyone else for assistance. I held my breath mentally, and when another student whose last name was after mine was called, I grinned.

My streak of having professors know me continues! Whew!

That personal scenario aside, let’s get back to the final exam. Or, maybe not. I probably can’t divulge too much about it, given that some of the material might be repeated in future iterations of the course. Let me just say two things regarding the exam:

- Ooof. Ouch. Professor Jordan wasn’t kidding when he said that the final exam was going to be difficult. Not a single student finished early, though some were no doubt quadruple-checking their answers, right?

- Professor Jordan wasn’t kidding when he said that the class would be graded leniently.

I don’t know what else there is to say.

I am Dying to Know

Well, STAT 210B is now over, and in retrospect I am really happy I took the course. Even though I know I won’t be doing research in this field, I’m glad that I got a taste of the research frontier in high-dimensional statistics and theoretical machine learning. I hope that understanding some of the math here can transfer to increased comprehension of technical material more directly relevant to my research.

Possibly more than anything else, STAT 210B made me really appreciate the enormous talent and ability that Professor Michael I. Jordan and Professor Martin Wainwright exhibit in math and statistics. I’m blown away at how fast they can process, learn, connect, and explain technically demanding material. And the fact that Professor Wainwright wrote the textbook solo, and that much of the material there comes straight from his own research papers (often co-authored with Professor Jordan!) surely attests to why those two men are award-winning statistics and machine learning professors.

It makes me wonder: what do I lack compared to them? I know that throughout my life, being deaf has put me at a handicap. But if Professor Jordan or Professor Wainwright and I were to sit side-by-side and each read the latest machine learning research paper, they would be able to process and understand the material far faster than I could. Reading a research paper theoretically means my disability shouldn’t be a strike on me.

So what is it that prevents me from being like those two?

I tried doing as much of the lecture reading as I could, and I truly understood a lot of the material. Unfortunately, many times I would get bogged down by some technical item which I couldn’t wrap my head around, or I would fail to fill in missing steps to argue why some “obvious” conclusion is true. Or I would miss some (obvious?) mathematical trick that I needed to apply, which was one of the motivating factors for me writing a lengthy blog post about these mathematical tricks.

Then again, after one of the GSIs grinned awkwardly at me when I complained to him during office hours about not understanding one of Professor Wainwright’s incessant “putting together the pieces” comments without any justification whatsoever … maybe even advanced students struggle from time to time? And Wainwright does have this to say in the first chapter of his book:

Probably the most subtle requirement is a certain degree of mathematical maturity on the part of the reader. This book is meant for the person who is interested in gaining a deep understanding of the core issues in high-dimensional statistics. As with anything worthwhile in life, doing so requires effort. This basic fact should be kept in mind while working through the proofs, examples and exercises in the book.

(I’m not sure if a “certain degree” is a good description, more like “VERY HIGH degree” wouldn’t you say?)

Again, I am dying to know:

What is the difference between me and Professor Jordan? For instance, when we each read Professor Wainwright’s textbook, why is he able to process and understand the information at a much faster rate? Does his brain simply work on a higher plane? Do I lack his intensity, drive, and/or focus? Am I inherently less talented?

I just don’t know.

Random Thoughts

Here are a few other random thoughts and comments I have about the course:

- The course had recitations, which are once-a-week events when one of the TAs leads a class section to discuss certain class concepts in more detail. Attendance was optional, but since the recitations conflicted with one of my research lab meetings, I didn’t attend a single recitation. Thus, I don’t know what they were like. However, future students taking 210B should at least attend one section to see if such sessions would be beneficial.

- Yes, I had sign language interpreting services, which are my usual class accommodations. Fortunately, I had a consistent group of two interpreters who attended almost every class. They were kind enough to bear with such technically demanding material, and I know that one of the interpreters was sick once, but came to work anyway since she knew that whoever would be substituting would be scarred for life from the class material. Thanks to both of you3, and I hope to continue working with you in the future!

- To make things easier for my sign language interpreters, I showed up early to every class to arrange two seats for them. (In fact, beyond the first few weeks, I think I was the first student to show up to every class, since in addition to rearranging the chairs, I used the time to review the lecture material from Wainwright’s book.) Once the other students in the class got used to seeing the interpreters, they didn’t touch the two magical chairs.

-

We had a class Piazza. As usual, I posted way too many times there, but it was interesting to see that we had a lot more discussion compared to 210A.

-

The class consisted of mostly PhD students in statistics, mathematics, EECS, and mechanical engineering, but there were a few talented undergrads who joined the party.

Concluding Thoughts

I’d like to get back to that Facebook discussion between Yann LeCun and Michael I. Jordan from the beginning of this post. Professor Jordan’s final paragraph was a pleasure to read:

Anyway, I keep writing these overly-long posts, and I’ve got to learn to do better. Let me just make one additional remark, which is that I’m really proud to be a member of a research community, one that includes Yann Le Cun, Geoff Hinton and many others, where there isn’t just lip-service given to respecting others’ opinions, but where there is real respect and real friendship.

I found this pleasing to read because I often find myself thinking similar things. I too feel proud to be part of this field, even though I know I don’t have a fraction of the contributions of those guys. I feel privileged to be able to learn statistics and machine learning from Professor Jordan and all the other professors I’ve encountered in my education. My goal is to become a far better researcher than I am now so that I feel like I am giving back to the community. That’s indeed one of the reasons why I started this blog way back in August 2011 when I was hunched over a desk on the eighth floor of a dorm at the University of Washington. I wanted a blog in part so that I could discuss the work I’m doing and new concepts that I’ve learned, while hopefully making it accessible to many readers.

The other amusing thing that Professor Jordan and I have in common is that we both write overly long posts, him on his Facebook, and me on my blog. It’s time to get back to research.

-

The other two are Geoffrey Hinton and Yoshua Bengio. Don’t get me started with Jürgen Schmidhuber, though he’s admittedly a clear fourth. ↩

-

This came out of an interview that Professor Jordan had with IEEE back in 2014. However, it didn’t quite go as well as Professor Jordan wanted, and he criticized the title and hype (see the featured comments below at the article). ↩

-

While I don’t advertise this blog to sign language interpreters, a few years ago one of them said that there had been “some discussion” of my blog among her social circle of interpreters. Interesting … ↩

The BAIR Blog is Now Live

The word should now be out that BAIR — short for Berkeley Artificial Intelligence Research — now has a blog. The official BAIR website is here and the blog is located here.

I was part of the team which created and set up the blog. The blog was written using Jekyll so for the most part I was able to utilize my prior Jekyll knowledge from working on “Seita’s Place” (that name really sounds awful, sorry).

One neat thing that I learned throughout this process was how to design a Jekyll blog but then have it appear as a sub-directory inside an existing website like the BAIR website with the correct URLs. The key is to understand two things:

-

The _site folder generated when you build and preview Jekyll locally contains all you need to build the website using normal HTML. Just copy over the contents of this folder into wherever the server is located.

-

In order to get links set up correctly, it is first necessary to understand how “baseurl”s work for project pages, among other things. This blog post and this other blog post can clarify these concepts. Assuming you have correct site.url and site.baseurl variables, to build the website, you need to run

` JEKYLL_ENV=production bundle exec jekyll serve `

The production mode aspect will automatically configure the contents of _site to contain the correct links. This is extremely handy; otherwise, there would be a bunch of annoying http://localhost:4000 strings and we’d have to run cumbersome find-and-replace commands. The contents of this folder can then be copied over to where the server is located.
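To make the url/baseurl idea concrete, here is a minimal Python sketch of how the pieces combine into a full link. This is only an illustration of the concept, not Jekyll's actual implementation; the function name absolute_url and the example.edu address are made up for this example.

```python
def absolute_url(site_url, baseurl, path):
    """Join a site URL, a base URL, and a page path into one absolute URL."""
    # Strip stray slashes so the pieces join with exactly one "/" between them.
    parts = [site_url.rstrip("/")]
    if baseurl.strip("/"):
        parts.append(baseurl.strip("/"))
    parts.append(path.lstrip("/"))
    return "/".join(parts)


# Hypothetical values: a blog living in the /blog sub-directory of an existing site.
print(absolute_url("https://example.edu", "/blog", "/2017/06/20/first-post/"))
# The same page during a local preview, with no baseurl set.
print(absolute_url("http://localhost:4000", "", "/2017/06/20/first-post/"))
```

The point is that the same page path ends up under a different prefix depending on the two variables, which is why getting them right matters before copying _site to the server.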

Anyway, enough about that. Please check out our inaugural blog post, about an exciting concept called Neural Module Networks.

OpenAI's Generative Adversarial Imitation Learning Code

In an earlier blog post, I described how to use OpenAI’s Evolution Strategies code. In this post, I’ll provide a similar guide for their imitation learning code which corresponds to the NIPS 2016 paper Generative Adversarial Imitation Learning. While the code works and is quite robust (as I’ll touch upon later), there’s little documentation and on the GitHub issues page, people have asked variants of “please help me run the code!!” Thus, I thought I’d provide some insight into how the code works. Just like the ES code, it runs on a cluster, but I’ll specifically run it on a single machine to make life easier.

The code was written in early 2016, so it uses Theano instead of TensorFlow. The first task for me was therefore to install Theano on my Ubuntu 16.04 machine with a TITAN X GPU. The imitation code is for Python 2.7, so I also decided to install Anaconda. If I want to switch back to Python 3.5, then I think I can modify my .bashrc file to comment out the references to Anaconda, but maybe it’s better for me to use virtual environments. I don’t know.

I then followed the installation instructions to get the stable 0.9.0 version of Theano. My configuration looks like this:

[global]

floatX = float64

device = gpu

[cuda]

root = /usr/local/cuda-8.0

Unfortunately, I ran into some nightmares with installing Theano. I hope you’re not interested in the details; I wrote them here on their Google Groups. Let’s just say that their new “GPU backend” causes me more trouble than it’s worth, which is why I kept the old device = gpu setting. Theano still seems to complain and spews out warnings about the float64 setting I have here, but I don’t have much of a choice since the imitation code assumes double precision floats.

Yeah, I’m definitely switching back to TensorFlow as soon as possible.

Back to the code: how does one run it? By calling scripts/im_pipeline.py three times, as follows:

python scripts/im_pipeline.py pipelines/im_classic_pipeline.yaml 0_sampletrajs

python scripts/im_pipeline.py pipelines/im_classic_pipeline.yaml 1_train

python scripts/im_pipeline.py pipelines/im_classic_pipeline.yaml 2_eval

where the pipeline configuration file can be one of the four provided options (or something that you provide). You can put these three commands in a bash script so that they automatically execute sequentially.

If you run the commands one-by-one from the imitation repository, you should notice that the first one succeeds after a small change: get rid of the Acrobot-v0 task. That version no longer exists in OpenAI gym. You could train version 1 using their TRPO code, but I opted to skip it for simplicity.

That first command generates expert trajectories to use as input data for imitation learning. The second command is the heavy-duty part of the code: the actual imitation learning. It also needs some modification to get it to work for a sequential setting, because the code compiles a list of commands to execute in a cluster.

Those commands are all of the form python script_name.py [arg1] [arg2] .... I decided to put them together in a list and then run them sequentially, which can easily be done using this code snippet:

all_commands = [x.format(**y) for (x,y) in zip(cmd_templates,argdicts)]

for command in all_commands:

    subprocess.call(command.split(" "))

This is nifty: the x.format(**y) part looks odd, but x is a Python format string with arguments to be filled in by the values of y.
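If the format trick is unfamiliar, here is a small self-contained sketch of the same pattern. The template and the argument dictionaries are hypothetical stand-ins for what the pipeline script builds, and I use shlex.split instead of a plain split on spaces so that quoted arguments survive; that is my own substitution, not what the repository does.

```python
import shlex
import subprocess
import sys


def build_commands(cmd_templates, argdicts):
    """Fill each template's {placeholders} from the matching dictionary."""
    return [tmpl.format(**args) for tmpl, args in zip(cmd_templates, argdicts)]


def run_sequentially(commands):
    """Run the commands one at a time and collect their exit codes."""
    # shlex.split respects quoting, unlike a plain split on spaces.
    return [subprocess.call(shlex.split(cmd)) for cmd in commands]


# Hypothetical template and arguments; "-c pass" makes each command a no-op.
template = "{python} -c pass --alg={alg} --task={task}"
argdicts = [
    {"python": shlex.quote(sys.executable), "alg": "bclone", "task": "cartpole"},
    {"python": shlex.quote(sys.executable), "alg": "bclone", "task": "mountaincar"},
]
commands = build_commands([template] * len(argdicts), argdicts)
for command in commands:
    print(command)
print(run_sequentially(commands))
```

Each dictionary supplies the keyword arguments for one template, so the i-th command is built from the i-th template and the i-th dictionary.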

If running something like the above doesn’t quite work, you might want to check the following:

-

If you’re getting an error with pytables, it’s probably because you’re using version 3.x of the library, which changed getNode to get_node. Someone wrote a pull request for this which should probably get integrated ASAP. (Incidentally, pytables looks like a nice library for data management, and I should probably consider using it in the near future.)

-

If you’re re-running the code, you need to delete the appropriate output directories. It can be annoying, but don’t remove this functionality! It’s too easy to accidentally run a script that overwrites your old data files. Just manually delete them; it’s better.

-

If you get a lot of “Exception ignored” messages, go into environments/rlgymenv.py and comment out the __del__ method in the RLGymSim class. I’m not sure why that’s there. Perhaps it’s useful in clusters to save memory? Removing the method didn’t seem to adversely impact my code and it got rid of the warning messages, so I’m happy.

-

Someone else mentioned in this GitHub issue that he had to disable multithreading, but fortunately I didn’t seem to have this problem.

Hopefully, if all goes well, you’ll see a long list of compressed files containing relevant data for the runs. Here’s a snippet of the first few that I see, assuming I used im_classic_pipeline.yaml:

alg=bclone,task=cartpole,num_trajs=10,run=0.h5

alg=bclone,task=cartpole,num_trajs=10,run=1.h5

alg=bclone,task=cartpole,num_trajs=10,run=2.h5

alg=bclone,task=cartpole,num_trajs=10,run=3.h5

alg=bclone,task=cartpole,num_trajs=10,run=4.h5

alg=bclone,task=cartpole,num_trajs=10,run=5.h5

alg=bclone,task=cartpole,num_trajs=10,run=6.h5

alg=bclone,task=cartpole,num_trajs=1,run=0.h5

alg=bclone,task=cartpole,num_trajs=1,run=1.h5

alg=bclone,task=cartpole,num_trajs=1,run=2.h5

alg=bclone,task=cartpole,num_trajs=1,run=3.h5

alg=bclone,task=cartpole,num_trajs=1,run=4.h5

alg=bclone,task=cartpole,num_trajs=1,run=5.h5

alg=bclone,task=cartpole,num_trajs=1,run=6.h5

The algorithm here is behavioral cloning, one of the four that the GAIL paper benchmarked. The number of trajectories is 10 for the first seven files, then 1 for the others. These represent the “dataset size” quantities in the paper, so the next set of files appearing after this would have 4 and then 7. Finally, each dataset size is run seven times from seven different initializations, as explained in the very last sentence in the appendix of the GAIL paper:

For the cartpole, mountain car, acrobot, and reacher, these statistics are further computed over 7 policies learned from random initializations.
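Since the file names encode the algorithm, task, dataset size, and run index, they are easy to parse when organizing results. Here is a minimal sketch; the helper name parse_run_filename is my own and is not part of the imitation repository.

```python
def parse_run_filename(filename):
    """Split 'key=value,key=value.h5' into a dictionary of fields."""
    stem = filename[:-len(".h5")] if filename.endswith(".h5") else filename
    fields = dict(item.split("=", 1) for item in stem.split(","))
    # Convert the numeric fields so they sort and compare as numbers.
    for key in ("num_trajs", "run"):
        if key in fields:
            fields[key] = int(fields[key])
    return fields


print(parse_run_filename("alg=bclone,task=cartpole,num_trajs=10,run=0.h5"))
```

With the fields as a dictionary, grouping the seven runs for each dataset size is a matter of collecting entries that share the same alg, task, and num_trajs values.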

The third command is the evaluation portion, which takes the log files and compresses them all into a single results.h5 file (or whatever you called it in your .yaml configuration file). I kept the code exactly the same as it was in the original version, but note that you’ll need to have all the relevant output files as specified in the configuration or else you’ll get errors.

When you run the evaluation portion, you should see for each policy instance, its mean and standard deviation over 50 rollouts. For instance, with behavioral cloning, the policy that’s chosen is the one that performed best on the validation set. For the others, it’s whatever appeared at the final iteration of the algorithm.

The last step is to arrange these results and plot them somehow. Unfortunately,

while you can get an informative plot using scripts/showlog.py, I don’t think

there’s code in the repository to generate Figure 1 in the GAIL paper, so I

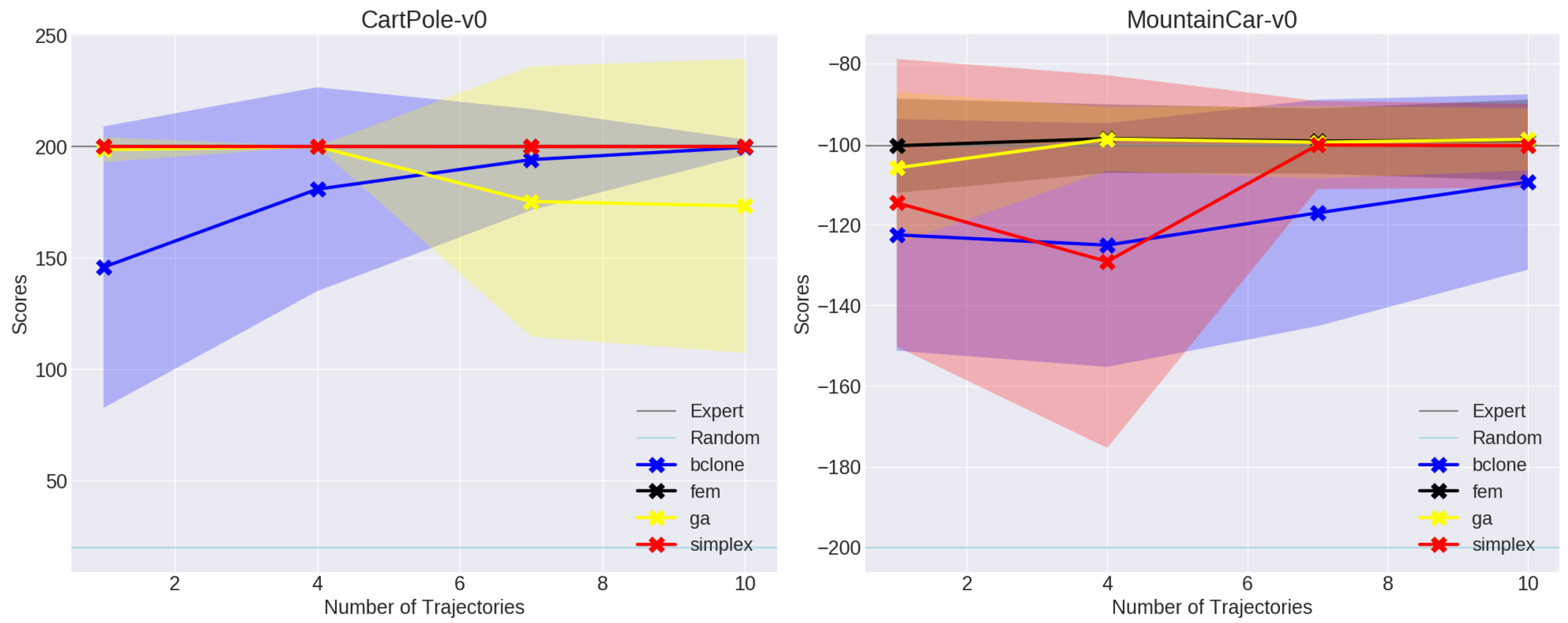

wrote some plotting code from scratch. For CartPole-v0 and MountainCar, I got

the following results:

These are comparable with what’s in the paper, though I find it interesting that GAIL seems to choke with the size 7 and 10 datasets for CartPole-v0. Hopefully this is within the random noise. I’ll test with the harder environments shortly.

Acknowledgments: I thank Jonathan Ho for releasing this code. I know it seems like sometimes I (or other users) complain about lack of documentation, but it’s still quite rare to see clean, functional code to exactly reproduce results in research papers. The code base is robust and highly generalizable to various settings. I also learned some new Python concepts from reading his code. Jonathan Ho must be an all-star programmer.

Next Steps: If you’re interested in running the GAIL code sequentially, consider looking at my fork here. I’ve also added considerable documentation.

AWS, Packer, and OpenAI's Evolution Strategies Code

I have very little experience with programming in clusters, so when OpenAI released their evolution strategies starter code which runs only on EC2 instances, I took this opportunity to finally learn how to program in clusters the way professionals do it.

Amazon Web Services

The first task is to get an Amazon Web Services (AWS) account. AWS offers a mind-bogglingly large amount of resources for doing all sorts of cloud computing. For our purposes, the most important feature is the Elastic Compute Cloud (EC2). The short description of EC2 instances is that they allow me to run code on heavily-customized machines that I don’t own. The only catch is that running code this way costs some money commensurate with usage, so watch out.

Note that joining AWS means we start off with one year of the free-tier option. This isn’t as good as it sounds, though, since many machines (e.g. those with GPUs) are not eligible for free tier usage. You still have to watch your budget.

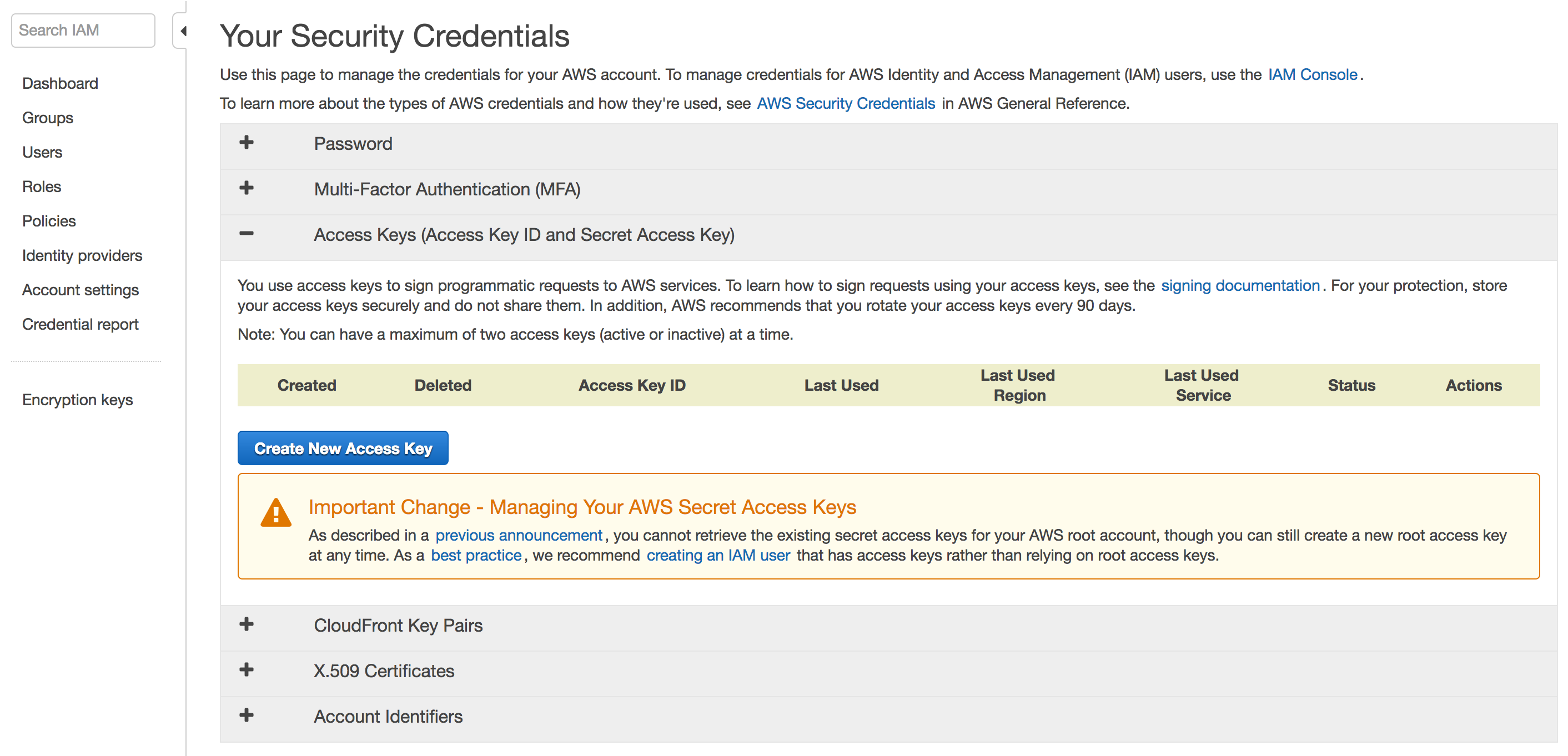

One immediate aspect of AWS to understand are their security credentials. They state (emphasis mine):

You use different types of security credentials depending on how you interact with AWS. For example, you use a user name and password to sign in to the AWS Management Console. You use access keys to make programmatic calls to AWS API actions.

To use the OpenAI code, I have to provide my AWS access key and secret access

keys, which are officially designated as AWS_ACCESS_KEY_ID and

AWS_SECRET_ACCESS_KEY, respectively. These aren’t initialized by default; we

have to explicitly create them. This means going to the Security Credentials

tab, and seeing:

You can create root access and secret access keys this way, but this is not the recommended way. To be clear, I took the above screenshot from the “root perspective,” so make sure you’re not seeing this on your computer. AWS strongly recommends instead creating a new user with administrative privileges, which effectively means it’s as good as the root account (minus the ability to view billing information). You can see their official instructions here to create groups with administrative privileges. The way I think of it, I’m a systems administrator and have to create a bunch of users for a computer. Except here, I only need to create one. So maybe this is a bit unnecessary, but I think it’s helpful to get used to the good practices as soon as possible. This author even suggests throwing away (!!) the root AWS password.

After following those instructions I had a “new” user and created the two access keys. These must be manually downloaded, where they’ll appear in a .csv file. Don’t lose them!

Next, we have to provide these credentials. When running packer code, as I’ll show in the next section, it suffices to either provide them as command line arguments, or use more secure ways such as adding them to your .bashrc file. I chose the latter. This page from AWS provides further information about how to provide your credentials, and the packer documentation contains similar instructions.
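Once the two variables are exported in .bashrc, any script can pick them up from the environment. Here is a minimal sketch of reading them with a clear error if one is missing. The function name load_aws_credentials is hypothetical, and the key values below are obvious placeholders rather than real credentials.

```python
import os


def load_aws_credentials(env=os.environ):
    """Return (access_key_id, secret_access_key), or raise if either is unset."""
    names = ("AWS_ACCESS_KEY_ID", "AWS_SECRET_ACCESS_KEY")
    missing = [name for name in names if not env.get(name)]
    if missing:
        raise RuntimeError("Missing environment variables: " + ", ".join(missing))
    return tuple(env[name] for name in names)


# Placeholder values for illustration only; never hard-code real keys.
fake_env = {
    "AWS_ACCESS_KEY_ID": "AKIAEXAMPLE",
    "AWS_SECRET_ACCESS_KEY": "example-secret",
}
access_key, secret_key = load_aws_credentials(fake_env)
print(access_key)
```

Calling load_aws_credentials() with no argument reads the real environment, so a forgotten export fails early with a readable message.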

On a final note regarding AWS, I had a hard time figuring out how to actually log in as the Administrator user, rather than the root. This StackOverflow question really helped out, but I’m baffled as to why this isn’t easier to do.

Installing and Understanding Packer

As stated in the OpenAI code, we must use something known as packer to run the code. After installing it, I went through their basic example. Notice that in their .json file, they have the following:

"variables": {

"aws_access_key": "",

"aws_secret_key": ""

},

where the access and secret keys must be supplied in some way. They could be hard-coded above if you want to type them in there, but as mentioned earlier, I chose to use environment variables in .bashrc.

Here are a couple of things to keep in mind when running packer’s basic example:

-

Be patient when the packer build command is run. It does not officially conclude until one sees:

==> Builds finished. The artifacts of successful builds are:

--> amazon-ebs: AMIs were created:

us-east-1: ami-19601070

where the last line will certainly be different if you run it.

-

The output, at least in this case, is an Amazon Machine Image (AMI) that I own. Therefore, I will have to start paying a small fee if this image remains active. There are two steps to deactivating this and ensuring that I don’t have to pay: “deregistering” the image and deleting the (associated) snapshot. For the former, go to the EC2 Management Console and see the IMAGES / AMIs drop-down menu, and for the latter, use ELASTIC BLOCK STORE / Snapshots. From my experience, deregistering can take several minutes, so just be patient. These have to happen in order, as deleting the snapshot first will result in an error which says that the image is still using it.

-

When launching (or even when deactivating, for that matter) be careful about the location you’re using. Look at the upper right corner for the locations. The “us-east-1” region is “Northern Virginia” and that is where the image and snapshot will be displayed. If you change locations, you won’t see them.

-

Don’t change the “region” argument in the “builders” list; it has to stay at “us-east-1”. When I first fired this up and saw that my image and snapshot were in “us-east-1” instead of the more-desirable “us-west-1” (Northern California) for me, I tried changing that argument and re-building. But then I got an error saying that the image couldn’t be found.

I think what happens is that the provided “source_ami” argument is the packer author’s fixed, base machine that he set up for the purposes of this tutorial, with packer installed (and maybe some other stuff). Then the .json file we have copies that image, as suggested by this statement in the docs (emphasis mine):

Congratulations! You’ve just built your first image with Packer. Although the image was pretty useless in this case (nothing was changed about it), this page should’ve given you a general idea of how Packer works, what templates are and how to validate and build templates into machine images.

In packer’s slightly more advanced example, we get to see what happens when we want to pre-install some software on our machines, and it’s here where packer’s benefits start to truly shine. In that new example, the “provisioners” list lets us run shell commands to install desired packages (e.g. sudo apt-get install blahblahblah). When I ssh-ed into the generated machine (a bit of a struggle at first since I didn’t realize the username to get in was actually ubuntu instead of ec2-user), I could successfully run redis-server on the command line and it was clear that the package had been installed.

In OpenAI’s code, they have a full script of commands which they load in. Thus, any image that we create from the packer build will have those commands run, so that our machines will have exactly the kind of software we want. In particular, OpenAI’s script installs TensorFlow, gym, the ALE, and so on. If we didn’t have packer, I think we would have to manually execute that script for all the machines. To give a sense of how slow that would be, the OpenAI ES paper said they once tested with 1,440 machines.

OpenAI’s Code

The final stage is to understand how to run OpenAI’s code. As mentioned earlier, there’s a dependency.sh shell script which will install stuff on our cloud-computing machines. Unfortunately, MuJoCo is not open source. (Fortunately, we might have an alternative with OpenAI’s RoboSchool; I hope to see that work out!) Thus, we have to add our own license. For me, this was a two-stage process.

First, in the configuration file, I added the following two file provisioners:

"provisioners": [

{

"type": "file",

"source": "/home/daniel/mjpro131",

"destination": "~/"

},

{

"type": "file",

"source": "/home/daniel/mjpro131/mjkey.txt",

"destination": "~/"

},

{

"type": "shell",

"scripts": [

"dependency.sh"

]

}

]

In packer, the elements in the “provisioners” array are executed in order of their appearance, so I wanted the files sent over to the home directory on the images so that they’d be there for the shell script later. The “source” strings are where MuJoCo is stored on my personal machine, the one which executes packer build packer.json.
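Because the order matters, a quick sanity check on the template can catch a misplaced entry before a thirty-minute build. Here is a minimal sketch that only inspects the JSON; the helper name files_precede_shell is my own, it is not a packer feature, and it assumes every provisioner has a "type" field as in the snippet above.

```python
import json


def files_precede_shell(template):
    """Check that every file provisioner comes before the first shell one."""
    types = [p["type"] for p in template.get("provisioners", [])]
    if "shell" not in types:
        return True
    first_shell = types.index("shell")
    return all(i < first_shell for i, t in enumerate(types) if t == "file")


# The same provisioners as in my configuration, written as a JSON string.
template = json.loads("""
{
  "provisioners": [
    {"type": "file", "source": "/home/daniel/mjpro131", "destination": "~/"},
    {"type": "file", "source": "/home/daniel/mjpro131/mjkey.txt", "destination": "~/"},
    {"type": "shell", "scripts": ["dependency.sh"]}
  ]
}
""")
print(files_precede_shell(template))
```

In practice one would load packer.json from disk with json.load instead of the inline string.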

Next, inside dependency.sh, I simply added the following two sudo mv commands:

#######################################################

# WRITE CODE HERE TO PLACE MUJOCO 1.31 in /opt/mujoco #

# The key file should be in /opt/mujoco/mjkey.txt #

# Mujoco should be installed in /opt/mujoco/mjpro131 #

#######################################################

sudo mv ~/mjkey.txt /opt/mujoco/

sudo mv ~/mjpro131 /opt/mujoco/

(Yes, we’re still using MuJoCo 1.31. I’m not sure why the upgraded versions don’t work.)

This way, when running packer build packer.json, the relevant portion of the output should look something like this:

amazon-ebs: + sudo mkdir -p /opt/mujoco

amazon-ebs: + sudo mv /home/ubuntu/mjkey.txt /opt/mujoco/

amazon-ebs: + sudo mv /home/ubuntu/mjpro131 /opt/mujoco/

amazon-ebs: + sudo tee /etc/profile.d/mujoco.sh

amazon-ebs: + sudo echo 'export MUJOCO_PY_MJKEY_PATH=/opt/mujoco/mjkey.txt'

amazon-ebs: + sudo tee -a /etc/profile.d/mujoco.sh

amazon-ebs: + sudo echo 'export MUJOCO_PY_MJPRO_PATH=/opt/mujoco/mjpro131'

amazon-ebs: + . /etc/profile.d/mujoco.sh

where the sudo mv commands have successfully moved my MuJoCo materials over to the desired target directory.

As an aside, I should also mention the other change I made to packer.json: in the “ami_regions” argument, I deleted all regions except for “us-west-1”, since otherwise images would be created in all the regions listed.

Running packer build packer.json takes about thirty minutes. Upon concluding, I saw the following output:

==> Builds finished. The artifacts of successful builds are:

--> amazon-ebs: AMIs were created:

us-west-1: ami-XXXXXXXX

where for security reasons, I have not revealed the full ID. Then, inside launch.py, I put in:

# This will show up under "My AMIs" in the EC2 console.

AMI_MAP = {

"us-west-1": "ami-XXXXXXXX"

}

The last step is to call the launcher script with the appropriate arguments. Before doing so, make sure you’re using Python 3. I originally ran this with Python 2.7 and was getting some errors. (Yeah, yeah, I still haven’t changed even though I said I would do so four years ago; blame backwards incompatibility.) One easy way to manage different Python versions on one machine is to use Python virtual environments. I started a new one with Python 3.5 and was able to get going after a few pip install commands.

You can find the necessary arguments in the main method of launch.py. To understand these arguments, it can be helpful to look at the documentation for boto3, the Python library that interfaces with AWS. In particular, reading the create_instances documentation will be useful.

I ended up using:

python launch.py ../configurations/humanoid.json \

--key_name="MyKeyPair" \

--s3_bucket="s3://put-name-here" \

--region_name="us-west-1" \

--zone="us-west-1b" \

--master_instance_type="m4.large" \

--worker_instance_type="t2.micro" \

--security_group="default" \

--spot_price="0.05"

A few pointers:

- Make sure you run sudo apt install awscli if you don’t have the package already installed.

- Double check the default arguments for the two access keys. They’re slightly different than what I used in the packer example, so I adjusted my .bashrc file.

- “MyKeyPair” comes from the MyKeyPair.pem file which I created via the EC2 console.

- The s3_bucket argument is based on AWS Simple Storage Service. I made my own unique bucket name via the S3 console, and to actually provide it as an argument, write it as s3://put-name-here where put-name-here is what you created.

- The region_name should be straightforward. The zone argument is similar, except we add letters at the end since they can be thought of as “subsets” of the regions. Not all zones will be available to you, since AWS adjusts what you can use so that it can more effectively achieve load balancing for its entire service.

- The master_instance_type and worker_instance_type arguments are the names of the instance types; see this for more information. It turns out that the master requires a more advanced (and thus more expensive) type due to EBS optimization. I chose t2.micro for the workers, which seems to work and is better for me since that’s the only type eligible for the free tier.

- The security_groups you have can be found in the EC2 console under NETWORK & SECURITY / Security Groups. Make sure you use the name, not the ID; the names are NOT the strings that look like “sg-XYZXYZXYZ”. Watch out!

- Finally, the spot_price indicates the maximum amount to bid, since we’re using “Spot Instances” rather than “On Demand” pricing. OpenAI’s README says:

It’s resilient to worker termination, so it’s safe to run the workers on spot instances.

The README says that because spot instances can be terminated if we are out-bid.
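Several of the pointers above are easy to get wrong, so here is a minimal sketch of a pre-flight check on the argument values. The function check_launch_args is hypothetical and not part of OpenAI’s launch.py, and it assumes a zone is always the region name followed by a single lowercase letter, which matches the zones I used but may not cover every AWS zone.

```python
import re


def check_launch_args(region_name, zone, s3_bucket, security_group):
    """Return a list of human-readable problems; an empty list means OK."""
    problems = []
    # Assumption: a zone is the region name plus one lowercase letter.
    if not re.fullmatch(re.escape(region_name) + r"[a-z]", zone):
        problems.append("zone should be the region name plus one letter")
    if not s3_bucket.startswith("s3://") or len(s3_bucket) <= len("s3://"):
        problems.append("s3_bucket should look like s3://put-name-here")
    if security_group.startswith("sg-"):
        problems.append("security_group looks like an ID; pass the group name")
    return problems


print(check_launch_args("us-west-1", "us-west-1b", "s3://put-name-here", "default"))
print(check_launch_args("us-west-1", "us-east-1a", "put-name-here", "sg-XYZXYZXYZ"))
```

None of this talks to AWS; it only catches formatting mistakes before the launcher makes any real requests.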

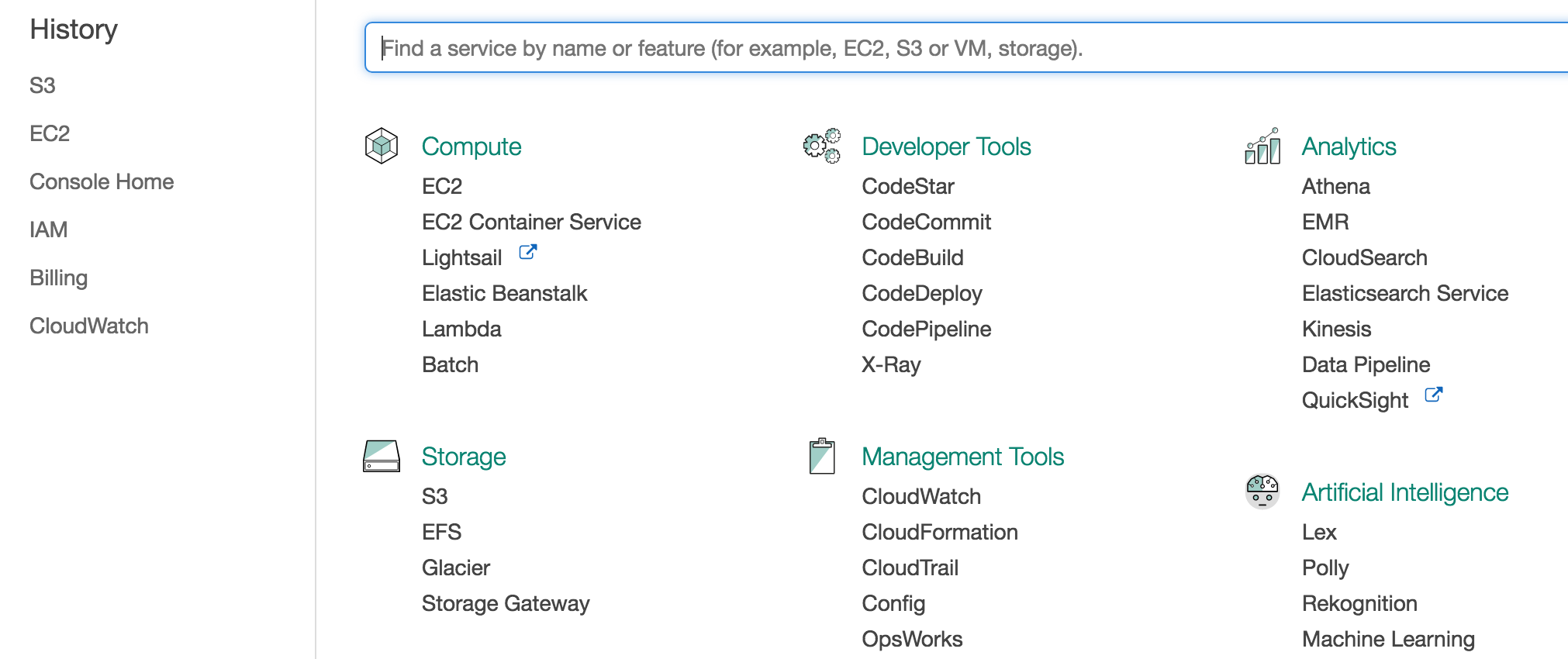

By the way, to be clear on what I mean when I talk about the “EC2 Console” and “S3 Console”, here’s the general AWS console:

The desired consoles can be accessed by clicking “EC2” or “S3” in the above.

If all goes well, you should see a message like this:

Scaling group created

humanoid_20170530-133848 launched successfully.

Manage at [Link Removed]

Copy and paste the link in your browser, and you will see your instance there, running OpenAI’s code.

Deep Reinforcement Learning (CS 294-112) at Berkeley, Take Two

Back in Fall 2015, I took the first edition of Deep Reinforcement Learning (CS 294-112) at Berkeley. As usual, I wrote a blog post about the class; you can find more about other classes I’ve taken by searching the archives.

In that blog post, I admitted that CS 294-112 had several weaknesses, and also that I didn’t quite fully understand the material. Fast forward to today, and I’m pleased to say that:

-

There has been a second edition of CS 294-112, taught this past spring semester. It was a three-credit, full semester course and therefore more substantive than the previous edition, which was two credits and lasted only eight weeks. Furthermore, the slides, homework assignments, and the lecture recordings are all publicly available online. Check out the course website for details. You can find the homework assignments in this GitHub repository (I had to search a bit for this).

-

I now understand much more about deep reinforcement learning and about how to use TensorFlow.

These developments go hand in hand, because I spent much of the second half of the Spring 2017 semester self-studying the second edition of CS 294-112. (To be clear, I was not enrolled in the class.) I know I said I would first self-study a few other courses in a previous blog post, but I couldn’t pass up such a prime opportunity to learn about deep reinforcement learning. Furthermore, the field moves so fast that I worried that if I didn’t follow what was happening now, I would never be able to catch up to the research frontier if I tried to do so in a year.

The class had four homework assignments, and I completed all of them with the exception of skipping the DAgger algorithm implementation in the first homework. The assignments were extremely helpful for me to understand how to better use TensorFlow, and I finally feel comfortable using it for my personal projects. If I can spare the time (famous last words) I plan to write some TensorFlow-related blog posts.

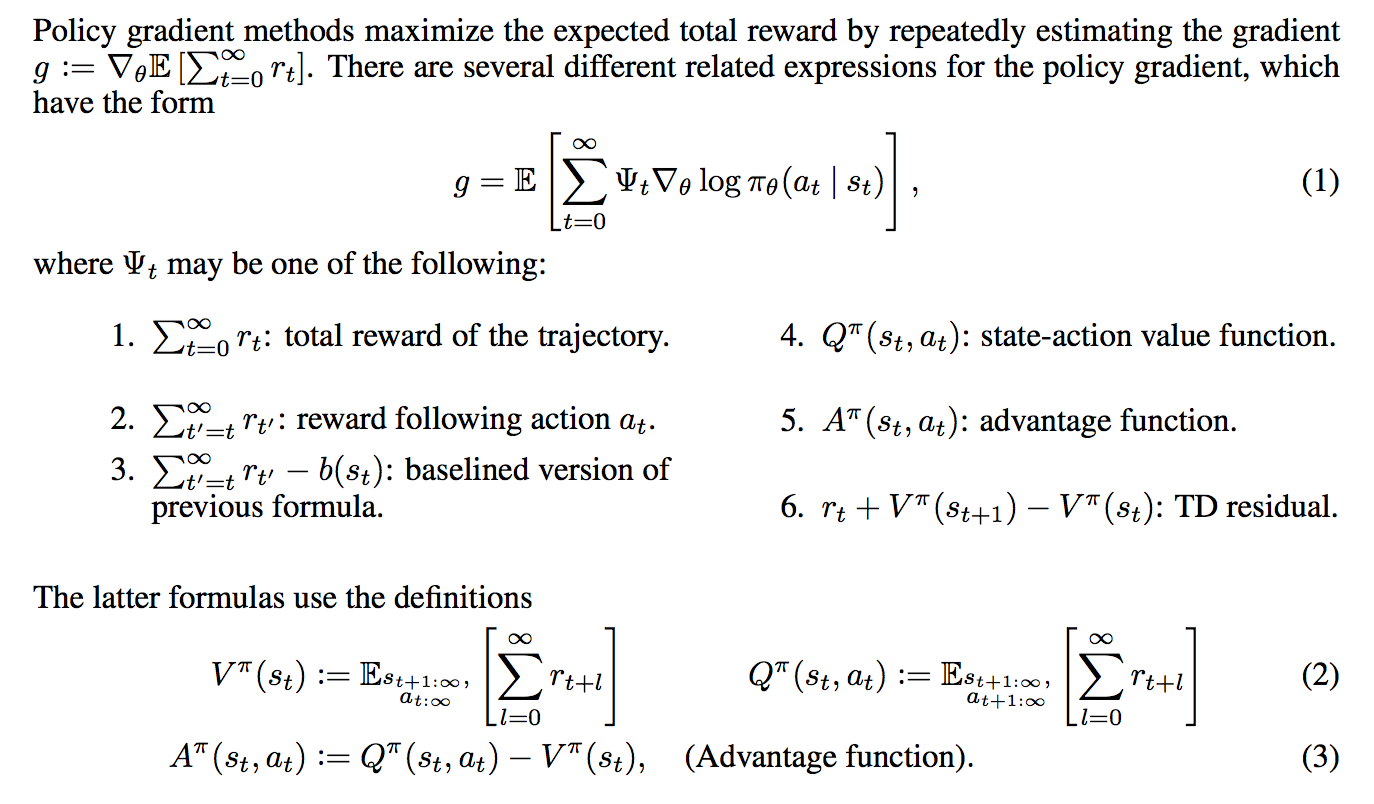

The video lectures were a nice bonus. I only watched a fraction of them, though. This was in part due to time constraints, but also in part due to the lack of captions. The lecture recordings are on YouTube, and in YouTube, I can turn on automatic captions, which help me follow the material. However, some of the videos didn’t enable that option, so I had to skip those and just read the slides since I wasn’t following what was being said. As far as I remember, automatic captions are provided as an option so long as whoever uploaded the video enables some setting, so maybe someone forgot to do so? Fortunately, the lecture video on policy gradients has captions enabled, so I was able to watch that one. Oh, and I wrote a blog post about the material.

Another possible downside to the course, though this one is extremely minor, is that the last few class sessions were not recorded, since those were when students presented their final projects. Maybe the students wanted some level of privacy? Oh well, I suppose there are way too many other interesting projects available anyway (by searching GitHub, arXiv preprints, etc.) to worry about this.

I want to conclude with a huge thank you to the course staff. Thank you for helping to spread knowledge about deep reinforcement learning with a great class and with lots of publicly available material. I really appreciate it.

Alan Turing: The Enigma

I finished reading Andrew Hodges’ book Alan Turing: The Enigma, otherwise known as the definitive biography of mathematician, computer scientist, and code breaker Alan Turing. I was inspired to read the book in part because I’ve been reading lots of AI-related books this year1 and in just about every one of those books, Alan Turing is mentioned in some form. In addition, I saw the film The Imitation Game, and indeed this is the book that inspired it. I bought the 2014 edition of the book, with The Imitation Game cover, during a recent visit to the National Cryptologic Museum.

The author is Andrew Hodges, who at that time was a mathematics instructor at the University of Oxford (he’s now retired). He maintains a website where he commemorates Alan Turing’s life and achievements. I encourage the interested reader to check it out. Hodges has the qualifications to write the book, being deeply versed in mathematics. He also appears to be gay himself.2

After reading the book, my immediate thoughts relating to the positive aspects of the book are:

-

The book is organized chronologically and the eight chapters are indicated with date ranges. Thus, for a biography of this size, it is relatively straightforward to piece together a mental timeline of Alan Turing’s life.

-

The book is detailed. Like, wow. The edition I have is 680 pages, not counting the endnotes at the back of the book which command an extra 30 or so pages. Since I read almost every word of this book (I skipped a few endnotes), and because I tried to stay alert when reading this book, I felt like I got a clear picture of Turing’s life, along with what life must have been like during the World War II-era.

-

The book contains quotes and writings from Turing that show just how far ahead of his time he was. For instance, even today people are still utilizing concepts from his famous 1936 paper On Computable Numbers, with an Application to the Entscheidungsproblem and his 1950 paper Computing Machinery and Intelligence. The former introduced Turing Machines, the latter introduced the famous Turing Test. Fortunately, I don’t think there was much exaggeration of Turing’s accomplishments, unlike in The Imitation Game. When I was reading his quotes, I often had to remind myself that “this is the 1940s or 1950s ….”

-

The book showcases the struggles of being gay, particularly during a time when homosexual activity was a crime. The book actually doesn’t seem to cover some of his struggles in the early 1950s as much as I thought it would, but it was probably difficult to find sufficient references for this aspect of his life. At the very least, readers today should appreciate how much our attitude towards homosexuality has improved.

That’s not to say there weren’t a few downsides. Here are some I thought of:

-

Related to what I mentioned earlier, it is long. It took me a month to finish, and the writing is in “1983-style” which makes it more difficult for me to understand. (By contrast, I read both of Richard Dawkins’ recent autobiographies, which combine to be roughly the same length as Hodges’ book, and Dawkins’ books were much easier to read.) Now, I find Turing’s life very interesting so this is more of a “neutral” factor to me, but I can see why the casual reader might be dissuaded from reading this book.

-

Much of the material is technical even to me. I understand the basics of Turing Machines but certainly not how the early computers were built. The hardest parts of the book to read are probably in chapters six and seven (out of eight total). I kept asking myself, “what’s a cathode ray?”

To conclude, the book is an extremely detailed overview of Turing’s life which at times may be technically challenging to read.

I wonder what Alan Turing would think about AI today. The widely-used AI undergraduate textbook by Stuart Russell and Peter Norvig concludes with the following prescient quote by Turing:

We can only see a short distance ahead, but we can see plenty there that needs to be done.

Earlier scientists have an advantage in setting their legacy in their fields since it’s easier to make landmark contributions. I view Charles Darwin, for instance, as the greatest biologist who has ever lived, and no matter how skilled today’s biologists are, I believe none will ever be able to surpass Darwin’s impact. The same goes today for Alan Turing, who (possibly along with John von Neumann) is one of the two preeminent computer scientists who have ever lived.

Despite all the talent that’s out there in computer science, I don’t think any one individual can possibly surpass Turing’s legacy on computer science and artificial intelligence.

-

Thus, the 2017 edition of my reading list post (here’s the 2016 version, if you’re wondering) is going to be very biased in terms of AI. Stay tuned! ↩

-

I only say this because people who are members of “certain groups” — where membership criteria is not due to choice but due to intrinsic human characteristics — tend to have more knowledge about the group than “outsiders.” Thus, a gay person by default has extra credibility when writing about being gay than would a straight person. A deaf person by default has extra credibility when writing about deafness than a hearing person. And so on. ↩

Understanding Deep Learning Requires Rethinking Generalization: My Thoughts and Notes

The paper “Understanding Deep Learning Requires Rethinking Generalization” (arXiv link) caused quite a stir in the Deep Learning and Machine Learning research communities. It’s the rare paper that seems to have high research merit (judging from being awarded one of three Best Paper awards at ICLR 2017) but is also readable. Hence, it got the most comments of any ICLR 2017 submission on OpenReview. It has also been discussed on reddit and was recently featured on The Morning Paper blog. I was aware of the paper shortly after it was uploaded to arXiv, but never found the time to read it in detail until now.

I enjoyed reading the paper, and while I agree with many readers that some of the findings might be obvious, the paper nonetheless seems deserving of the attention it has been getting.

The authors conveniently put two of their important findings in centered italics:

Deep neural networks easily fit random labels.

and

Explicit regularization may improve generalization performance, but is neither necessary nor by itself sufficient for controlling generalization error.

I will also quote another contribution from the paper that I find interesting:

We complement our empirical observations with a theoretical construction showing that generically large neural networks can express any labeling of the training data.

(I go through the derivation later in this post.)

Going back to their first claim about deep neural networks fitting random labels, what does this mean from a generalization perspective? (The generalization error is just the difference between training error and testing error.) It means that we cannot come up with a “generalization function” that can take in a neural network as input and output a generalization quality score. Here’s my intuition:

-

What we want: let’s imagine an arbitrary encoding of a neural network designed to give as much deterministic information as possible, such as the architecture and hyperparameters, and then use that encoding as input to a generalization function. We want that function to give us a number representing generalization quality, assuming that the datasets are allowed to vary. The worst case occurs when a fixed neural network gets excellent training error but, depending on the dataset, could get either the same testing error (awesome!) or test-set performance no better than random guessing (ugh!).

-

Reality: unfortunately, the best we can do seems to be no better than the worst case. We know of no function that can provide bounds on generalization performance across all datasets. Why? Let’s use the LeNet architecture and MNIST as an example. With the right architecture, generalization error is very small as both training and testing performance are in the high 90s in terms of percent accuracy. With a second data set that consists of the same MNIST digits, but with the labels randomized, that same LeNet architecture can do no better than random guessing on the test set, even though the training performance is extremely good (or at least, it should be). That’s literally as bad as we can get. There’s no point in developing a function to measure generalization when we know it can only tell us that generalization will be in between zero (i.e. perfect) and the difference between zero and random guessing (i.e. the worst case)!
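To make the “worst case” concrete, here is a minimal sketch using only the Python standard library. It is not the paper’s experiment and it does not use a neural network: I am swapping in a 1-nearest-neighbor memorizer as the simplest model that can fit any labeling, and the dataset (Gaussian inputs with uniformly random labels) and all function names are my own assumptions for illustration.

```python
import random

def squared_distance(a, b):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((ai - bi) ** 2 for ai, bi in zip(a, b))

def predict_1nn(train_x, train_y, x):
    """Return the label of the training point closest to x."""
    nearest = min(range(len(train_x)),
                  key=lambda i: squared_distance(train_x[i], x))
    return train_y[nearest]

def error_rate(train_x, train_y, xs, ys):
    """Fraction of points in (xs, ys) that the memorizer gets wrong."""
    wrong = sum(predict_1nn(train_x, train_y, x) != y for x, y in zip(xs, ys))
    return wrong / len(xs)

def random_dataset(rng, n, dim, num_classes):
    """Gaussian inputs with labels drawn uniformly at random (no signal)."""
    xs = [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(n)]
    ys = [rng.randrange(num_classes) for _ in range(n)]
    return xs, ys

rng = random.Random(0)
train_x, train_y = random_dataset(rng, n=300, dim=5, num_classes=10)
test_x, test_y = random_dataset(rng, n=300, dim=5, num_classes=10)

# Every training point is its own nearest neighbor, so training error is zero.
train_error = error_rate(train_x, train_y, train_x, train_y)
# The labels carry no information, so test error should sit near 1 - 1/10.
test_error = error_rate(train_x, train_y, test_x, test_y)
print(f"train error: {train_error:.2f}, test error: {test_error:.2f}")
```

The same memorizer would generalize reasonably well on a dataset where nearby points share labels, which is the point: knowing the model alone does not tell us which of the two outcomes we will get.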

As they later discuss in the paper, regularization can be used to improve generalization, but will not be sufficient for developing our desired generalization criteria.

Let’s briefly take a step back and consider classical machine learning, which provides us with generalization criteria such as VC-dimension, Rademacher complexity, and uniform stability. I learned about VC-dimension during my undergraduate machine learning class, Rademacher complexity during STAT 210B this past semester, and … actually I’m not familiar with uniform stability. But intuitively … it makes sense to me that classical criteria do not apply to deep networks. To take the Rademacher complexity example: a function class which can fit to arbitrary \(\pm 1\) noise vectors presents the trivial bound of one, which is like saying: “generalization is between zero and the worst case.” Not very helpful.
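To spell out that trivial bound, here is a short sketch assuming the standard definition of empirical Rademacher complexity for a function class \(\mathcal{H}\) with outputs in \([-1, 1]\). The symbols \(\mathcal{H}\), \(\sigma\), and \(\hat{\mathfrak{R}}_n\) are my notation, not something defined earlier in this post:

\[\hat{\mathfrak{R}}_n(\mathcal{H}) = \mathbb{E}_{\sigma}\left[\sup_{h \in \mathcal{H}} \frac{1}{n}\sum_{i=1}^n \sigma_i h(x_i)\right], \qquad \sigma_i \in \{-1, +1\} \text{ i.i.d. uniform}\]

If for every sign vector \(\sigma\) there is some \(h \in \mathcal{H}\) with \(h(x_i) = \sigma_i\) for all \(i\), then the supremum is attained by that \(h\):

\[\sup_{h \in \mathcal{H}} \frac{1}{n}\sum_{i=1}^n \sigma_i h(x_i) = \frac{1}{n}\sum_{i=1}^n \sigma_i^2 = 1 \quad \Longrightarrow \quad \hat{\mathfrak{R}}_n(\mathcal{H}) = 1\]

Since each term \(\sigma_i h(x_i)\) is at most one, this is the largest value the complexity can take, so a bound stated in terms of it says nothing.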

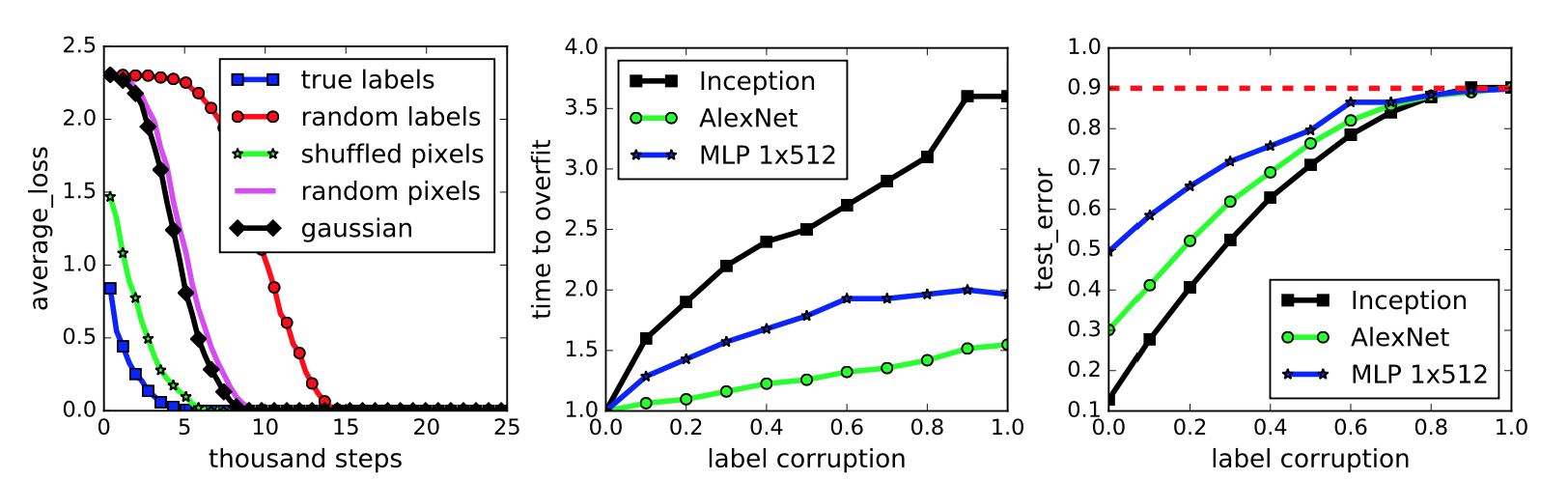

The paper then proceeds to describe their testing scenario, and packs some important results in the figure reproduced below:

This figure represents a neural network classifying the images in the widely-benchmarked CIFAR-10 dataset. The network the authors used is a simplified version of the Inception architecture.

-

The first subplot represents five different settings of the labels and input images. To be clear on what the “gaussian” setting means, they use a Gaussian distribution to generate random pixels (!!) for every image. The mean and variance of that Gaussian are “matched to the original dataset.” In addition, the “shuffled” and “random” pixels apply a random permutation to the pixels, with the same permutation to all images for the former, and different permutations for the latter.

We immediately see that the neural network can get zero training error on all the settings, but the convergence speed varies. Intuition suggests that the dataset with the correct labels and the one with the same shuffling permutation should converge quickly, and this indeed is the case. Interestingly enough, I thought the “gaussian” setting would have the worst performance, but that prize seems to go to “random labels.”

-

The second subplot measures training error when the amount of label noise is varied; with some probability \(p\), each image independently has its label corrupted and replaced with a draw from the discrete uniform distribution over the classes. The results show that more corruption slows convergence, which makes sense. By the way, using a continuum of something is a common research tactic and something I should try for my own work.

-

Finally, the third subplot measures generalization error under label corruption. As these data points were all measured after convergence, this is equivalent to the test error. The results here also make a lot of sense. Test set error should be approaching 90 percent because CIFAR-10 has 10 classes (that’s why it’s called CIFAR-10!).
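The label corruption procedure described in the second subplot can be sketched as follows. This is my own reading of the description, not the authors’ code; the function names and the synthetic label list are assumptions for illustration. Note that a uniform draw can land on the original label, so the fraction of labels that actually change is \(p(1 - 1/k)\) for \(k\) classes.

```python
import random

def corrupt_labels(labels, p, num_classes, rng):
    """With probability p, replace each label by a uniform draw over all classes."""
    corrupted = []
    for y in labels:
        if rng.random() < p:
            corrupted.append(rng.randrange(num_classes))
        else:
            corrupted.append(y)
    return corrupted

def fraction_changed(original, corrupted):
    """Fraction of positions where the corrupted label differs from the original."""
    return sum(a != b for a, b in zip(original, corrupted)) / len(original)

rng = random.Random(0)
num_classes = 10  # CIFAR-10 has 10 classes
# Synthetic stand-in labels; a real run would use the dataset's labels.
labels = [rng.randrange(num_classes) for _ in range(20000)]

results = {}
for p in (0.0, 0.5, 1.0):
    noisy = corrupt_labels(labels, p, num_classes, rng)
    results[p] = fraction_changed(labels, noisy)
    expected = p * (1 - 1 / num_classes)
    print(f"p={p:.1f}  changed={results[p]:.3f}  expected={expected:.3f}")
```

At \(p = 1\) the labels are independent of the images, so any classifier is expected to be wrong on a \(1 - 1/10\) fraction of test images, which is where the 90 percent figure comes from.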

My major criticism of this figure is not that the results, particularly in the second and third subplots, might seem obvious, but that the figure lacks error bars. Since it’s easy nowadays to program multiple calls in a bash script or something similar, I would expect at least three trials, with error bars (or “regions”) around each curve in this figure.

The next section discusses the role of regularization, which is normally applied to prevent overfitting to the training data. The classic example is with linear regression and a dataset of several points arranged in roughly a linear fashion. Do we try to fit a straight line through these points, which might have lots of training error, or do we take a high-degree polynomial and fit every point exactly, even if the resulting curve looks impossibly crazy? That’s what regularization helps to control. Explicit regularization in linear regression is the \(\lambda\) term in the following optimization problem:

\[\min_w \|Xw - y\|_2^2 + \lambda \|w\|_2^2\]I presented this in an earlier blog post.
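As a quick worked step (my own addition, using the same \(X\), \(y\), \(w\), and \(\lambda\) as above), setting the gradient of the objective to zero gives a closed-form solution:

\[\nabla_w \left( \|Xw - y\|_2^2 + \lambda \|w\|_2^2 \right) = 2X^T(Xw - y) + 2\lambda w = 0 \quad \Longrightarrow \quad w^\star = (X^TX + \lambda I)^{-1} X^T y\]

For \(\lambda > 0\) the matrix \(X^TX + \lambda I\) is positive definite and hence invertible, even when \(X^TX\) alone is not. Larger \(\lambda\) shrinks \(w^\star\) towards zero, which is the sense in which the \(\lambda\) term controls how wildly the fitted function can behave.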

To investigate the role of regularization in Deep Learning, the authors test with and without regularizers. Incidentally, the \(\lambda\) term above, which corresponds to weight decay in the neural network setting, is not the only type of regularization. There are also several others: data augmentation, dropout, early stopping (implicit) and batch normalization (implicit). These are standard tools in the modern Deep Learning toolkit.

They find that, while regularization helps to improve generalization performance, it is still possible to get excellent generalization even with no regularization. They conclude:

In summary, our observations on both explicit and implicit regularizers are consistently suggesting that regularizers, when properly tuned, could help to improve the generalization performance. However, it is unlikely that the regularizers are the fundamental reason for generalization, as the networks continue to perform well after all the regularizers [are] removed.

On a side note, the regularization discussion in the paper feels out of order and the writing sounds a bit off to me. I wish they had more time to fix this, as the regularization portion of the paper contains most of my English language-related criticism.

Moving on, the next section of the paper is about finite-sample expressivity, or understanding what functions neural networks can express given a finite number of samples. The authors state that the previous literature focuses on population analysis where one can assume an arbitrary number of samples. Here, instead, they assume a fixed set of \(n\) training points \(\{x_1,\ldots,x_n\}\). This seems easier to understand anyway.

They prove a theorem that relates to the third major contribution I quoted earlier: “that generically large neural networks can express any labeling of the training data.” Before proving the theorem, let’s begin with the following lemma:

Lemma 1. For any two interleaving sequences of \(n\) real numbers

\[b_1 < x_1 < b_2 < x_2 \cdots < b_n < x_n\]the \(n \times n\) matrix \(A = [\max\{x_i - b_j, 0\}]_{ij}\) has full rank. Its smallest eigenvalue is \(\min_i (x_i - b_i)\).

Whenever I see statements like these, my first instinct is to draw out the matrix. And here it is:

\[\begin{align} A &= \begin{bmatrix} \max\{x_1-b_1, 0\} & \max\{x_1-b_2, 0\} & \cdots & \max\{x_1-b_n, 0\} \\ \max\{x_2-b_1, 0\} & \max\{x_2-b_2, 0\} & \cdots & \max\{x_2-b_n, 0\} \\ \vdots & \ddots & \ddots & \vdots \\ \max\{x_n-b_1, 0\} & \max\{x_n-b_2, 0\} & \cdots & \max\{x_n-b_n, 0\} \end{bmatrix} \\ &\;{\overset{(i)}{=}}\; \begin{bmatrix} x_1-b_1 & 0 & 0 & \cdots & 0 \\ x_2-b_1 & x_2-b_2 & 0 & \cdots & 0 \\ \vdots & \ddots & \ddots & \ddots & \vdots \\ x_{n-1}-b_1 & x_{n-1}-b_2 & \ddots & \cdots & 0 \\ x_n-b_1 & x_n-b_2 & x_n-b_3 & \cdots & x_n-b_n \end{bmatrix} \end{align}\]where (i) follows from the interleaving sequence assumption. This matrix is lower-triangular, and moreover, all the non-zero elements are positive. We know from linear algebra that lower triangular matrices

- are invertible if and only if the diagonal elements are nonzero

- have their eigenvalues taken directly from the diagonal elements
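As a small numerical sanity check of the matrix above, here is a sketch in plain Python. The particular interleaving sequences `bs` and `xs` are hypothetical values I picked so the arithmetic is exact, and the helper names are mine. Forward substitution is included because it only works when every diagonal entry is nonzero, which is the invertibility fact in action.

```python
def relu_matrix(xs, bs):
    """Build A with A[i][j] = max(x_i - b_j, 0), as in Lemma 1."""
    return [[max(x - b, 0.0) for b in bs] for x in xs]

def is_lower_triangular(A):
    """True if every entry strictly above the diagonal is zero."""
    return all(A[i][j] == 0.0
               for i in range(len(A)) for j in range(i + 1, len(A)))

def forward_substitution(A, y):
    """Solve A w = y for a lower-triangular A with nonzero diagonal."""
    w = []
    for i, row in enumerate(A):
        partial = sum(row[j] * w[j] for j in range(i))
        w.append((y[i] - partial) / row[i])
    return w

# Hypothetical interleaving sequences: b_1 < x_1 < b_2 < x_2 < b_3 < x_3
bs = [0.0, 1.0, 2.0]
xs = [0.5, 1.25, 3.0]

A = relu_matrix(xs, bs)
diagonal = [A[i][i] for i in range(len(A))]  # entries x_i - b_i
smallest_eigenvalue = min(diagonal)          # eigenvalues are the diagonal entries

# Because A is invertible, any right-hand side y has a unique solution w.
y = [1.0, -1.0, 1.0]
w = forward_substitution(A, y)

print("A =", A)
print("lower triangular:", is_lower_triangular(A))
print("smallest eigenvalue:", smallest_eigenvalue)
print("solution w:", w)
```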

These two facts together prove Lemma 1. Next, we can prove: