My Blog Posts, in Reverse Chronological Order


My PhD Dissertation, and a Moment of Thanks

Back in May, I gave my PhD dissertation talk, which is the second-to-last major milestone in getting a PhD. The last one is actually writing it. I think most EECS PhD students give their talk and then file the written dissertation a few days afterwards. I had a summer-long gap, but the long wait is finally over. After seven (!) years at UC Berkeley, I have finally written up my PhD dissertation and you can download it here. It’s been the ride of a lifetime, from the first time I set foot at UC Berkeley during visit days in 2014 to today. Needless to say, so much has changed since that day. In this post, I discuss the process of writing up my dissertation and (for fun) I share the acknowledgments.

The act of writing the dissertation was pretty painless. In my field, producing the dissertation typically involves these steps:

- Take 3-5 of your prior (ideally first-author) papers and stitch them back-to-back, with one paper as one chapter.
- Do a find-and-replace to change all instances of “paper” to “chapter” (so that in the dissertation, the phrase “In this paper, we show …” turns into “In this chapter, we show …”).
- Add an introduction chapter and a conclusion chapter, both of which can be just a handful of pages long. The introduction explains the structure of the thesis, and the conclusion has suggestions for future work.
- Then the little (or, in my case, not so little) things: add an acknowledgments section at the beginning, make sure the title and LaTeX formatting all look good, and then get signatures from your committee.
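For the find-and-replace step, a plain global replacement can misfire on words that merely contain “paper” (e.g., “newspaper”). Here is a minimal Python sketch, assuming each chapter's LaTeX source is available as a string. The function name `paper_to_chapter` is hypothetical, and the sketch only handles “paper”/“papers” in lowercase or capitalized form:

```python
import re

# Match "paper" or "papers" only as whole words (so "newspaper" and
# "paperwork" are left alone), capturing the case of the first letter.
PAPER_RE = re.compile(r"\b([Pp])aper(s?)\b")

def paper_to_chapter(text):
    """Replace standalone 'paper'/'papers' with 'chapter'/'chapters', keeping capitalization."""
    def repl(m):
        first = "C" if m.group(1) == "P" else "c"
        return first + "hapter" + m.group(2)
    return PAPER_RE.sub(repl, text)

print(paper_to_chapter("In this paper, we show ..."))  # In this chapter, we show ...
```

A regex cannot tell “in this paper” apart from “prior papers have shown,” so a manual read-through is still needed afterwards.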

That’s the first-order approximation to writing the dissertation. Of course, the Berkeley Graduate Division claims that the chapters must be arranged and written around a “coherent theme,” but I don’t think people pay much attention to that rule in practice.

On my end, since I had already given a PhD talk, I basically knew I had the green light to write up the dissertation. My committee members were John Canny, Ken Goldberg, and Masayoshi Tomizuka, 3 of the 4 professors who were on my qualifying exam committee. I emailed them a few early drafts, and once they gave approval via email, it was a simple matter of uploading the PDF to ProQuest, as per instructions from the Berkeley Graduate Division. Unfortunately, the default option when uploading the PDF is to not make it open access (!!); making it open access requires an extra fee of USD 95.00. Yikes! Josh Tobin has a Twitter post about this, and I agree with him. I am baffled as to why this is the case. My advice, at least to Berkeley EECS PhD students, is to not pay ProQuest, because we already have a website which lists the dissertations open-access, as it should be done. Thank you, Berkeley EECS!

By the way, I am legitimately curious: how much money does ProQuest actually make from selling PhD theses? Does anyone pay for a dissertation??? A statistic would be nice to see.

I did pay for something that is probably a little more worthwhile: printed copies of the dissertation, just so that I can have a few books on hand. Maybe one day someone besides me will read through the content …

Well, that was how I filed the dissertation. Next, I want to restate what I wrote in the acknowledgments section of my dissertation. This section is the most personal one in the dissertation, and I enjoy reading what other students have to say. In fact, the acknowledgments are probably the part of theses that I read most often. I wrote a 9-page acknowledgments section, which is far longer than typical (but is not a record).

Without further ado, here are the acknowledgments. I hope you enjoy reading them!

When I reflect back on all these years as a PhD student, I find myself agreeing with what David Culler told me when I first came to Berkeley during visit days: “you will learn more during your years at Berkeley than ever before.” This is so true for me. Along so many dimensions, my PhD experience has been a transformative one. In the acknowledgments to follow, I will do my best to explain why I owe so many people a great debt. As with any acknowledgments, however, there is only so much that I can write. If you are reading this after the fact and wish that I had written more about you, please let me know, and I will treat you to some sugar-free boba tea or keto-friendly coffee, depending on your preferred beverage.

For a variety of reasons, I had one of the more unusual PhD experiences. However, as is perhaps true for many students, my PhD life first felt like a struggle but over time became a highly fulfilling endeavor.

When I arrived at Berkeley, I started working with John Canny. When I think of John, the following phrase comes to mind: “jack of all trades.” This is often paired with the somewhat pejorative “master of none,” but a more accurate conclusion for John would be “master of all.” John has done research in a wider variety of areas than is typical: robotics, computer vision, theory of computation, computational geometry, and human-computer interaction, and he has taught courses in operating systems, combinatorics, and social justice. When I came to Berkeley, John had already transitioned to machine learning. I have benefited tremendously from his advice throughout the years, first primarily on machine learning toolkits when we were working on BIDMach, a library for high-throughput algorithms. (I still don’t know how John, a highly senior faculty member, had the time and expertise to implement state-of-the-art machine learning algorithms with Scala and CUDA code.) Next, I got advice from John for my work in deep imitation learning and deep reinforcement learning, and John was able to provide technical advice for these rapidly emerging fields. As will be highlighted later, other members of his group work in areas as diverse as computer vision for autonomous driving, video captioning, natural language processing, generating sketches using deep learning, and protein folding; it sometimes seems as if all areas of Artificial Intelligence (and many areas of Human-Computer Interaction) are or were represented in his group.

A good illustration of John’s expertise is what happens when I ask for paper feedback. If I ask an undergrad, I expect them to point out minor typos. If I ask a graduate student, I expect minor questions about why I did not perform some small experiment. But if I ask John for feedback, he will quickly identify the key method in the paper, and its weaknesses. His advice also extended to giving presentations. For my first paper under his primary supervision, which we presented at the Conference on Uncertainty in Artificial Intelligence (UAI) in Sydney, Australia, I was surprised to see him make the long trip to attend the conference, as I had not known he was coming. Before I gave my 20-minute talk on our paper, he sat down with me in the International Convention Centre Sydney to go through the slides carefully. I am happy to have contributed one thing in return: right after I was handed the award for “Honorable Mention for Best Student Paper” from the conference chairs, I managed to get the room of 100-ish people to give a round of applause to John. In addition, John is helpful in fund-raising and supplying the necessary compute to his students. Towards the end of my PhD, when he served as chair of the computer science division, he provided assistance in helping me secure accommodations such as sign language interpreters for academic conferences.

I also was fortunate to work with Ken Goldberg, who would become a co-advisor and who helped me transition into a full-time roboticist. Ken is a highly energetic professor who, despite being a senior faculty member with so many demands on his time, is able to give some of the most detailed paper feedback that I have seen. When we were doing serious paper writing to meet a deadline, I would constantly refresh my email to see Ken’s latest comments, written using Notability on his iPad, and then immediately rush to address them. After he surprised me by generously giving me an iPad midway through my PhD, the first thing I thought of doing was to provide paper feedback in his style and to match his level of detail in the process. Ken also provides extremely detailed feedback on our research talks and presentations, which is invaluable given how important it is to communicate research effectively.

Ken’s lab, called the “AUTOLab,” was welcoming to me when I first joined. The Monday evening lab meetings are structured so that different lab members present research in progress while we all enjoy good food. Such meetings were one of the highlights of my weeks at Berkeley, as were the regular lab celebrations at his house. I also appreciate Ken’s assistance in networking across the robotics research community at various conferences, which has helped me feel more involved in the research community and also led to my collaborations with Honda and Google throughout my PhD. Ken is very active in vouching for his students and, like John, is able to supply the compute we need to do compute-intensive robot learning research. Ken was also helpful in securing academic accommodations at Berkeley and at international robotics conferences. Much of my recent, and hopefully future, research is based on what I have learned from being in Ken’s lab and interacting with his students.

To John and Ken, I know I was not the easiest student to advise, and I deeply appreciate your willingness to stick with me over all these years. I hope that in the end, I was able to show my own worth as a researcher. In academic circles, I am told that professors are sometimes judged based on what their students do, so I hope that I will be able to continue working on impactful research while confidently acting as a representative example for your academic descendants.

During my first week of work at Berkeley, I arrived at my desk in Soda Hall, and in the opposite corner of the shared office of six desks, I saw Biye Jiang hunched over his laptop working. We said “hi,” but this turned out to be the start of a long-time friendship with Biye. It resonated with me when I told him that because of my deafness, I found it hard to communicate with others in a large group setting with lots of background noise, and he said he sometimes felt the same but for a different reason, as an international student from China. I would speak regularly with him for four years over frequent lunches and dinners, discussing topics ranging from research to life in China. After he left to work for Alibaba in Beijing, China, he gave me a hand-written note saying: “Don’t just work harder, but also live better! Enjoy your life! Good luck ^_^” I know I am probably failing at this, but it is on my agenda!

Another person I spoke to in my early days at Berkeley was Pablo Paredes, who was among the older (if not the oldest!) PhD students at Berkeley. He taught me how to manage as a beginning PhD student, and gave me psychological advice when I felt like I was hitting research roadblocks. Others I spoke with through working with John include Haoyu Chen and Xinlei Pan, both of whom would play a major role in my first paper under John’s primary supervision, which I had the good fortune to present at UAI 2017 in Sydney, Australia. With Xinlei, I also got the opportunity to help him with his 2019 ICRA paper on robust reinforcement learning, and was honored to give the presentation for the paper in Montreal. My enthusiasm was somewhat tempered by how difficult it was for Xinlei to get visas to travel to other countries. It was partly through his experience that I recognized how difficult it could be for an international student in the United States, and I resolved to try to make the situation easier for them. I am also honored that Haoyu later gave a referral for me to interview at Waymo.

In November of 2015, when I had hit a rough patch in my research and felt like I had let everyone down, Florian Pokorny and Jeff Mahler were the first two members of Ken Goldberg’s lab that I got to speak to, and they helped me to get my first (Berkeley) paper, on learning-based approaches for robotics. Their collaboration became my route to robotics, and I am forever grateful that they were willing to work with me when it seemed like I might have little to offer. In Ken’s lab, I would later get to talk with Animesh Garg, Sanjay Krishnan, Michael Laskey, and Steve McKinley. With Animesh and Steve, I only wish I could have joined the lab earlier so that I could have collaborated with them more often. Near the end of Animesh’s time as a PhD student, he approached me after a lab meeting. He had read a blog post of mine and told me that I should have hung out with him more often, and I agree; I wish I had. I was honored when Animesh, now a rising star faculty member at the University of Toronto, invited me to apply for a postdoc with him. Once COVID-19 travel restrictions ease up, I promise that I will make the trip to Toronto to see Animesh, and similarly, to go to Sweden to see Florian.

Among those who I initially worked with in the AUTOLab, I want to particularly acknowledge Jeff Mahler’s help with all things related to grasping; Jeff is one of the leading minds in robotic manipulation, and his Dex-Net project is one of the AUTOLab’s most impactful projects, showing the benefit of using a hybrid analytic and learned model in an age when so many have turned to pure learning. I look forward to seeing what his startup, Ambi Robotics, is able to do. I also acknowledge Sanjay’s patience with me when I started working with the lab’s surgical robot, the da Vinci Research Kit (dVRK). Sanjay was effectively operating like a faculty member at that time, and had a deep knowledge of the literature in machine learning and robotics, and even databases (which was technically his original background and possibly his “official” research area; as Ken said, “he’s one of the few people who can do both databases and robotics”). His patience when I asked him questions was invaluable, and I often start research conversations by thinking about how Sanjay would approach the question. I thank Michael Laskey for his help in getting me started with the Human Support Robot and with imitation learning. The bed-making project that I took over with him would mark the start of a series of fruitful research papers on deformable object manipulation. Ah, those days of 2017 and 2018 were sweet, while Jeff, Michael, and Sanjay were all in the lab. Looking back, what I most looked forward to on Fridays were our lab “happy hours” in Etcheverry Hall. Rumor has it that we could get reimbursed by Ken for these purchases of corn chips, salsa, and beer, but I never bothered. I would be willing to pay far more to have these meetings happen again.

After Jeff, Michael, and Sanjay came the next generation of PhD students and postdocs. I enjoyed my conversations with Michael Danielczuk, who helped to continue much of the Dex-Net and YuMi-related projects after Jeff Mahler’s graduation. I will also need to make sure I never stop running so that I can inch closer and closer to his half-marathon and marathon times. I also enjoyed my conversations about research with Carolyn Matl and Matthew Matl over various lab meetings and dinners. I admire Carolyn’s research trajectory and her work on granular media and dough manipulation. I look forward to seeing Matthew’s leadership at Ambi Robotics, and I hope we shall have more Japanese burger dinners in the future.

With Roy Fox, we talked about some of the most interesting topics in generative modeling and imitation learning. There was a time in summer 2017 in our lab when the thing I looked forward to the most was a meeting with Roy to check that my code implementations were correct. Alas, we did not get a new paper from our ideas, but I still enjoyed the conversations, and I look forward to reading about his current and future accomplishments at UC Irvine. With our other postdoc from Israel, Ron Berenstein, I enjoyed our collaboration on the robotic bed-making project, which may have marked the turning point of my PhD experience, and I appreciate him reminding me that “your time is valuable” and that I should be wisely utilizing my time to work on important research.

Along with Roy and Ron, Ken continued to show his remarkable ability to recruit talented postdocs to his lab. Among those I was fortunate to meet were Ajay Kumar Tanwani, Jeff Ichnowski, and Minho Hwang. My collaboration with Ajay started with the robot bed-making project, and continued for our IROS 2020 and RSS 2020 fabric manipulation papers. Ajay has a deep knowledge of recent advances in reinforcement learning and machine learning, and played key roles in helping me frame the messaging in our papers. Jeff is an expert kinematician who understands how to perform trajectory optimization for robots, and we desperately needed him to improve the performance of our physical robots. I appreciated Minho’s help in getting the da Vinci Surgical Robot back in operation and with better performance than ever before. He is certainly, as Ken Goldberg proudly announced multiple times, “the lab’s secret weapon,” as should be evident from the large number of papers the AUTOLab has produced in recent years with the dVRK. I wish him the best as a faculty member at DGIST. I thank him for the lovely Korean tea that he gave me after our farewell sushi dinner at Akemi’s! I took a picture of the kind note Minho left me with the box of tea, so that, as with Biye’s note, it is part of my permanent record. During the time these postdocs were in the lab, I also acknowledge Jingyi Xu from the Technical University of Munich in Germany, who spent a half-year as a visiting PhD student, for her enthusiasm and creativity with robot grasping research.

To Ashwin Balakrishna and Brijen Thananjeyan, I’m not sure why you two are PhD students. You two are already at the level of faculty! If you ever want to discuss more ideas with me, please let me know. I will need to study how they operate to understand how to mentor a wide range of projects, as should be evident from the large number of AUTOLab undergraduates working with them. During the COVID-19 work-from-home period, it seemed as if one or both of them were part of all my AUTOLab meetings. I look forward to seeing their continued collaboration in safe reinforcement learning and similar topics, and maybe one day I will start picking up tennis so that running is not my only sport.

After I submitted the robot bed-making paper, I belatedly started mentoring new undergraduates in the AUTOLab. The first undergrad I worked with was Ryan Hoque, who had quickly singled me out as a potential graduate student mentor, while mentioning his interest in my blog (this is not an uncommon occurrence). He, and then later Aditya Ganapathi, were the first two undergraduates who I felt like I had mentored at least somewhat competently. I enjoyed working on and debugging the fabric simulator we developed, which would later form the basis of much of our subsequent work published at IROS, RSS, and ICRA. I am happy that Ryan has continued his studies as a PhD student in the AUTOLab, focusing on interactive imitation learning. Regarding the fabrics-related work in the AUTOLab, I also thank the scientists at Honda Research Institute for collaborating with us: Nawid Jamali, Soshi Iba, and Katsu Yamane. I enjoyed our semi-regular meetings in Etcheverry Hall where we could go over research progress and brainstorm some of the most exciting ideas in developing a domestic home robot.

While all this was happening, I was still working with John Canny, and trying to figure out the right work balance with two advisors. Over the years, John would work with PhD students David Chan, Roshan Rao, Forrest Huang, Suhong Moon, Jinkyu Kim, and Philippe Laban, along with a talented Master’s student, Chen (Allen) Tang. As befits someone like John, his students work on a wider range of research areas than is typical for a research lab. (There is no official name for John Canny’s lab, so we decided to be creative and called it … “the CannyLab.”) With Jinkyu and Suhong, I learned more about explainable AI and its application to autonomous driving, and on the non-science side, I learned more about South Korea. Philippe taught me about natural language processing and text summarization. His “NewsLens” project resonated with me, given the wide variety of news that I read these days, and I enjoyed the backstory of why he was originally motivated to work on it. David taught me about computer vision (video captioning), Roshan taught me about proteins, and Forrest taught me about sketching. Philippe, David, Roshan, and Forrest also helped me understand Google’s shiny new neural network architecture, the Transformer, as well as closely-related architectures such as OpenAI’s GPT models. I also acknowledge David’s help in setting up the servers for the CannyLab, and his advice on building a computer. Allen Tang’s master’s thesis on how to accelerate deep reinforcement learning played a key role in my final research projects.

All my life, I had wondered what it was like to intern at a company like Google, and I have long watched in awe as Google churned out impressive AI research results. I had applied to Google twice earlier in my PhD, but was unable to land an internship. So, when the great Andy Zeng sent me a surprise email in late 2019, after my initial shock and disbelief wore off, I quickly responded with my interest in interning with him. After my research scientist internship under his supervision, I can confirm that the rumors are true: Andy Zeng is a fantastic intern host, and I highly recommend him. The internship in 2020 was virtual, unfortunately, but I still enjoyed the work, and his frequent video calls helped ensure that I stayed focused on producing solid research during my internship. I also appreciated the other Google researchers who I got to chat with throughout the internship: Pete Florence, Jonathan Tompson, Erwin Coumans, and Vikas Sindhwani. Others in the AUTOLab (I’m looking at you, Aditya Ganapathi) have told me a rule that I have found to be a good one to follow: “think of something, and if Pete Florence and Andy Zeng like it, it’s good, and if they don’t like it, don’t work on it.” Thank you very much for the collaboration!

The last two years of my PhD have felt like the most productive of my life. During this time, I was collaborating (virtually) with many AUTOLab members. In addition to those mentioned earlier, I want to acknowledge undergraduate Haolun (Harry) Zhang for our work on dynamic cable manipulation, leading to the accurately-named paper Robots of the Lost Arc. I look forward to seeing Harry’s continued achievements at Carnegie Mellon University. I was also fortunate to collaborate more closely with Huang (Raven) Huang, Vincent Lim, and many other talented new students in Ken Goldberg’s lab. Raven seems like a senior PhD student instead of one just starting out, and Vincent is far more skilled than I could have imagined for a beginning undergraduate. Both have strong work ethics, and I hope that our collaboration shall one day lead to robots performing reliable lassoing and tossing. In addition, I also enjoyed my conversations with the newer postdocs in the AUTOLab, Daniel Brown and Ellen Novoseller, from whom I have learned a lot about inverse reinforcement learning and preference learning. Incoming PhD student Justin Kerr also played an enormous role in helping me work with the YuMi in my final days in the AUTOLab.

I also want to acknowledge the two undergraduates from John Canny’s lab who I collaborated with the most, Mandi Zhao and Abhinav Gopal. Given the intense pressure of balancing coursework and other commitments, I am impressed they were willing to stick around with me while we finalized our work with John Canny. With Mandi, I hope we can continue discussing research ideas and US-China relations over WeChat, and with Abhinav, I hope we can pursue more research ideas in offline reinforcement learning.

Besides those who directly worked with me, my experience at Berkeley was enriched by the various people from other labs who I got to interact with somewhat regularly. Largely through Biye, I got to know a fair number of Chinese international students, among them Hezheng Yin, Xuaner (Cecilia) Zhang, Qijing (Jenny) Huang, and Isla Yang. I enjoyed our conversations over dinners and I hope they enjoyed my cooking of salmon and panna cotta. I look forward to the next chapter in all of our lives. It’s largely because of my interactions with them that I decided I would do my best to learn more about anything related to China, which explains the many books on China in my iBooks app.

My education at Berkeley benefited a great deal from what other faculty taught me during courses, research meetings, and otherwise. I was fortunate to take classes from Pieter Abbeel, Anca Dragan, Daniel Klein, Jitendra Malik, Will Fithian, Benjamin Recht, and Michael I. Jordan. I also took the initial iteration of Deep Reinforcement Learning (RL), back when John Schulman taught it, and I thank John for kindly responding to questions I had regarding Deep RL. Among these professors, I would like to particularly acknowledge Pieter Abbeel, who has regularly served as inspiration for my research, and somehow remembers me and seems to have the time to reply to my emails even though I am not a student of his nor a direct collaborator. His online lecture notes and videos in robotics and unsupervised learning are among those that I have consulted the most.

In addition to my two formal PhD advisors, I thank Sergey Levine and Masayoshi Tomizuka for serving on my qualifying exam committee. The days leading up to that event were among the most stressful I had experienced in my life, and I thank them for taking the time to listen to my research proposal. I also enjoyed learning more about deep reinforcement learning through Sergey Levine’s course and online lectures.

I also owe a great deal to the administrators at UC Berkeley. The ones who helped me the most, especially during the two times in my PhD when I felt like I had hit rock bottom (in late 2015 and early 2018), were able to offer guidance and do what they could to help me stay on track to finish my PhD. I don’t know all the details about what they did behind the scenes, but thank you, to Shirley Salanio, Audrey Sillers, Angie Abbatecola, and the newer administrators to BAIR. Like Angie, I am an old timer of BAIR. I was even there when it was called the Berkeley Vision and Learning Center (BVLC), before we properly re-branded the organization as Berkeley Artificial Intelligence Research (BAIR). I also thank them for their help in getting the BAIR Blog up and running.

My research was supported initially by a university fellowship, and then later by a six-year Graduate Fellowship for STEM Diversity (GFSD), formerly called the National Physical Science Consortium (NPSC) Fellowship. At the time I received the fellowship, I was feeling stuck on several research projects. I don’t know precisely why they granted me the fellowship, but whatever their reasons, I am eternally grateful for the decision they made. One of the more unusual conditions of the GFSD fellowship is that recipients are to intern at the sponsoring agency, which for me was the National Security Agency (NSA). I went there for one summer in Laurel, Maryland, and got a partial peek past the curtain of the NSA. By design, the NSA is one of the most secretive United States government agencies, which makes it difficult for people to acknowledge the work they do. Being there allowed me to understand and appreciate the signals intelligence work that the NSA does on behalf of the United States. Out of my NSA contacts, I would like to particularly mention Grant Wagner and Arthur Drisko.

While I was initially apprehensive about Berkeley, I have now come to appreciate it for the best it has to offer. I will be thankful for the many cafes I spent time in around the city, along with the running trails I frequented, both on the streets and in the hills. I only wish that other areas of the country offered this many food and running options.

Alas, all things must come to an end. While my PhD itself is coming to a close, I look forward to working with my future supervisor, David Held, in my next position at Carnegie Mellon University. Throughout the time when I was searching for a postdoc, I thank other faculty who took the time out of their insanely busy schedules to engage with me and to offer research advice: Shuran Song of Columbia, Jeannette Bohg of Stanford, and Alberto Rodriguez of MIT. I am forever in awe of their research contributions, and I hope that I will be able to achieve a fraction of what they have done in their careers.

In a past life, I was an undergraduate at Williams College in rural Massachusetts, which boasts an average undergraduate student body of about 2000 students. When I arrived on campus on that fall day in 2010, I was clueless about computer science and how research worked in general. Looking back, Williams must have done a better job preparing me for the PhD than I expected. Among the professors there, I owe perhaps the most to my undergraduate thesis advisor, Andrea Danyluk, as well as the other Williams CS faculty who taught me at that time: Brent Heeringa, Morgan McGuire, Jeannie Albrecht, Duane Bailey, and Stephen Freund. I will do my best to represent our department in the research world, and I hope that the professors are happy with how my graduate trajectory has turned out. One day, I shall return in person to give a research talk, and will be able to (in the words of Duane Bailey) show off my shiny new degree. I also majored in math, and I similarly learned a tremendous amount from my first math professor, Colin Adams, who emailed me right after my final exam urging me to major in math. I also appreciate other professors who have left a lasting impression on me: Steven Miller, Mihai Stoiciu, Richard De Veaux, and Qing (Wendy) Wang. I appreciate their patience during my frequent visits to their office hours.

During my undergraduate years, I was extremely fortunate to benefit from two Research Experiences for Undergraduates (REUs), the first at Bard College with Rebecca Thomas and Sven Andersen, and the second at the University of North Carolina at Greensboro, with Francine Blanchet-Sadri. I thank the professors for offering to work with me. As with the Williams professors, I don’t think any of my REU advisors had anticipated that they would be helping to train a future roboticist. I hope they enjoyed working with me just as much as I enjoyed working with them. To everyone from those REUs, I am still thinking of all of you and wish you luck wherever you are.

I owe a great debt to Richard Ladner of the University of Washington, who helped me break into computer science. He and Rob Roth used to run a program called the “Summer Academy for Advancing Deaf and Hard of Hearing in Computing.” I attended one of the iterations of this program, and it showed me what it might be like to be a graduate student. Near the end of the program, I spoke with Richard one-on-one, and asked him detailed questions about what he thought of my applying to PhD programs. I remember him expressing enthusiasm, but also some reservation: “do you know how hard it is to get in a top PhD program?” he cautioned me. I thanked him for taking the time out of his busy schedule to give me advice. In the years that followed, I always remembered to work hard in the hopes of earning a PhD. (The next time I visited the University of Washington, years later, I raced to Richard Ladner’s office the minute I could.) Also, as a fun little history note, it was while I was there that I decided to start my (semi-famous?) personal blog, which seemingly everyone in Berkeley’s EECS department has seen, in large part because I felt like I needed to write about computer science in order to understand it better. I still feel that way today, and I hope I can continue writing.

Finally, I would like to thank my family for helping me persevere throughout the PhD. It is impossible for me to adequately put in words how much they helped me survive. My frequent video calls with family members helped me to stay positive during the most stressful days of my PhD, and they have always been interested in the work that I do and anything else I might want to talk about. Thank you.

Reframing Reinforcement Learning as Sequence Modeling with Transformers?

The Transformer Network, developed by Google and presented in a NeurIPS 2017 paper, comes from one of the few papers that can truly claim to have fundamentally transformed (pun intended) the field of Artificial Intelligence. Transformer Networks have become the foundation of some of the most dramatic performance advances in Natural Language Processing (NLP). Two prominent examples are Google’s BERT model, which uses a bidirectional Transformer, and OpenAI’s line of GPT models, which use a unidirectional Transformer. Both have substantially helped their respective companies’ bottom lines: BERT has boosted Google’s search capabilities to new tiers, and OpenAI uses GPT-3 for automatic text generation in its first commercial product.

For a solid understanding of Transformer Networks, it is probably best to read the original paper and try out sample code. However, the Transformer Network paper has also spawned a seemingly endless series of blog posts and tutorial articles, which can be solid references (though with high variance in quality). Two of my favorite posts are from well-known bloggers Jay Alammar and Lilian Weng, who serve as inspirations for my current blogging habits. Of course, I am also guilty of jumping on this bandwagon, since I wrote a blog post on Transformers a few years ago.

Transformers have changed the trajectory of NLP and other fields such as protein modeling (e.g., the MSA transformer) and computer vision. OpenAI has an ICML 2020 paper which introduces Image-GPT, and the name alone should be self-explanatory. But, what about the research area I focus on these days, robot learning? It seems like Transformers have had less impact in this area. To be clear, researchers have already tried to replace existing neural networks used in RL with Transformers, but this does not fundamentally change the nature of the problem, which is consistently framed as a Markov Decision Process where states follow the Markovian property of being a function of only the prior state and action.

That might now change. Earlier this month, two groups in BAIR released arXiv preprints that use Transformers for RL, and which do away with MDPs and treat RL as one big sequence modeling problem. They propose models called Decision Transformer and Trajectory Transformer. These have not yet been peer-reviewed, but judging from the format, it’s likely that both are under review for NeurIPS. Let’s dive into the papers, shall we?

Decision Transformer

This paper introduces the Decision Transformer, which takes a particular trajectory representation as input, and outputs action predictions at training time, or the actual actions at test time (i.e., evaluation).

First, how is a trajectory represented? In RL, trajectories are typically sequences of states, actions, and rewards. In this paper, however, the authors use the return-to-go in place of the raw reward:

\[\hat{R}_t = \sum_{t'=t}^{T} r_{t'}\]resulting in the full trajectory representation of:

\[\tau = (\hat{R}_1, s_1, a_1, \hat{R}_2, s_2, a_2, \ldots, \hat{R}_T, s_T, a_T)\]This already raises the question of why this representation is chosen. The reason is that at test time, the Decision Transformer must be paired with a desired performance, which is the cumulative episodic return. Given that as input, after each time step the agent receives the per-time-step reward from the environment emulator and decreases the desired performance by that amount. This revised desired performance is then passed in again as input, and the process repeats. The immediate questions I had were whether it would be possible to predict the return-to-go accurately, and whether the Decision Transformer could extrapolate beyond the best return-to-go in the training data. Spoiler alert: the paper reports experiments on this, finding a strong correlation between predicted and actual return, and it is possible to extrapolate beyond the best return in the data, but only by a little bit. That’s fair; it would be unrealistic to expect it to achieve any return-to-go that is feasible in the environment emulator.
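To make the bookkeeping concrete, here is a minimal pure-Python sketch of the two pieces described above: computing undiscounted returns-to-go from a reward sequence (a reversed cumulative sum, following the $\hat{R}_t$ formula), and the test-time loop that decrements the desired return after each observed reward. The function names and reward values are my own illustrative choices, not the paper’s code.

```python
def returns_to_go(rewards):
    """Undiscounted return-to-go: R_hat_t = sum of rewards from step t through T."""
    rtg = [0.0] * len(rewards)
    running = 0.0
    # Walk backwards so each entry adds its own reward to the suffix sum after it.
    for t in reversed(range(len(rewards))):
        running += rewards[t]
        rtg[t] = running
    return rtg


def update_target_return(target, reward):
    """Test time: subtract the reward just received from the desired return."""
    return target - reward


rewards = [1.0, 0.0, 2.0, 3.0]
print(returns_to_go(rewards))  # [6.0, 5.0, 5.0, 3.0]

# Test-time conditioning: start from a desired return and decrement it.
target = 10.0  # hypothetical desired episodic return
conditioned = [target]
for r in [1.0, 0.0, 2.0]:  # made-up rewards observed from the environment
    target = update_target_return(target, r)
    conditioned.append(target)
print(conditioned)  # [10.0, 9.0, 9.0, 7.0]
```

Note that the first return-to-go equals the full episodic return, which is why the initial conditioning value at test time plays the role of the desired performance.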

The input to the Decision Transformer is a subset of the trajectory $\tau$ consisting of the $K$ most recent time steps, each of which consists of a tuple with three items as noted above (the return-to-go, state, and action). Note how this differs from a DQN-style method, which, for each time step, takes in 4 stacked game frames but does not take in rewards or prior actions as input. Furthermore, in this paper, Decision Transformers use values such as $K=30$, so they consider a longer history.
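Here is a minimal sketch of how the $K$ most recent time steps might be flattened into a single token sequence of length $3K$, interleaving return-to-go, state, and action. The tuple tags (`"rtg"`, `"state"`, `"action"`) are my own stand-ins for the separate per-modality embedding layers; the real model embeds each token rather than passing raw values, and the data below is made up.

```python
def build_context(rtgs, states, actions, K):
    """Interleave the K most recent (return-to-go, state, action) triples.

    Produces (R_{t-K+1}, s_{t-K+1}, a_{t-K+1}, ..., R_t, s_t, a_t), i.e.,
    3 * min(K, T) tagged tokens, where T is the number of steps so far.
    """
    assert len(rtgs) == len(states) == len(actions)
    start = max(0, len(states) - K)  # drop everything older than K steps
    tokens = []
    for t in range(start, len(states)):
        tokens.append(("rtg", rtgs[t]))
        tokens.append(("state", states[t]))
        tokens.append(("action", actions[t]))
    return tokens


rtgs = [9.0, 8.0, 6.0, 3.0, 1.0]  # made-up returns-to-go
states = ["s0", "s1", "s2", "s3", "s4"]
actions = ["a0", "a1", "a2", "a3", "a4"]
print(build_context(rtgs, states, actions, K=2))
# [('rtg', 3.0), ('state', 's3'), ('action', 'a3'), ('rtg', 1.0), ('state', 's4'), ('action', 'a4')]
```

With DQN-style frame stacking, by contrast, the input would be only the last 4 observations with no rewards or actions. At test time, the action slot for the current step is not yet known; that is the token the model is asked to predict.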

Training the Decision Transformer simply requires predicting an action, so it can be trained with the usual cross-entropy or mean squared error loss functions, depending on whether the action is discrete or continuous.
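For reference, here are the two per-step losses in plain Python: cross entropy on the logits for a discrete action, and mean squared error for a continuous action vector. This is a sketch for a single prediction; in practice these would be averaged over the batch and the $K$ positions using an autodiff library.

```python
import math


def cross_entropy(logits, target_index):
    """Discrete actions: -log softmax(logits)[target_index], computed stably."""
    m = max(logits)  # subtract the max before exponentiating to avoid overflow
    log_z = m + math.log(sum(math.exp(x - m) for x in logits))
    return log_z - logits[target_index]


def mean_squared_error(pred, target):
    """Continuous actions: mean of squared differences over action dimensions."""
    return sum((p - q) ** 2 for p, q in zip(pred, target)) / len(pred)


print(cross_entropy([0.0, 0.0], 0))  # log(2), about 0.693
print(mean_squared_error([1.0, 2.0], [0.0, 0.0]))  # 2.5
```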

Now, what is the architecture for predicting or generating actions? Decision Transformers use GPT, an autoregressive model, meaning it handles probabilities of the form $p(x_t | x_{t-1}, \ldots, x_1)$, where the prediction at the current time is conditioned on all prior data. GPT uses this to generate (that’s what the “G” stands for) by sampling the $x_t$ term. In my notation, imagine that each $x_i$ represents a data tuple of (return-to-go, state, action); that’s what the GPT model deals with, and it produces the next predicted tuple. Well, technically they only need to predict the action, but I wonder if state prediction could be useful? From communicating with the authors, they didn’t get much performance benefit from predicting states, but it is doable.
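The autoregressive conditioning boils down to a causal attention mask: position $i$ may attend only to positions $j \le i$. The sketch below builds such a mask and lists what the output at a given token is allowed to see, using the interleaved (R, s, a) layout. Reading the action prediction off the state token’s output is my understanding of the paper’s Algorithm 1, so treat that detail as an assumption to check against the paper.

```python
def causal_mask(n):
    """mask[i][j] is True when position i may attend to position j, i.e., j <= i."""
    return [[j <= i for j in range(n)] for i in range(n)]


def visible_tokens(layout, position):
    """Tokens that the output at `position` is allowed to condition on."""
    row = causal_mask(len(layout))[position]
    return [tok for tok, allowed in zip(layout, row) if allowed]


layout = ["R0", "s0", "a0", "R1", "s1", "a1"]
# Predicting a1 from the output at the s1 token (index 4): a1 itself stays hidden.
print(visible_tokens(layout, 4))  # ['R0', 's0', 'a0', 'R1', 's1']
```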

There are also various embedding layers applied to the input before it is passed to the GPT model. I highly recommend looking at Algorithm 1 in the paper, which presents the model in nicely written pseudocode. The Appendix also clarifies the code bases that they build upon, and both are publicly available. Andrej Karpathy’s minGPT code looks nice and is self-contained.

That’s it! Notice how the Decision Transformer does not do bootstrapping to estimate value functions.

The paper evaluates on a suite of offline RL tasks, using environments from Atari (discrete control), from D4RL (continuous control), and from a “Key-to-Door” task. Fortunately for me, I had recently done a lot of reading on offline RL, and I even wrote a survey-style blog post about it a few months ago. The Decision Transformer is not specialized towards offline RL. It just happens to be the problem setting the paper considers, because not only is it very important, it is also a nice fit in that (again) the Decision Transformer does not perform bootstrapping, which is known to cause diverging Q-values in many offline RL contexts.

The results suggest that Decision Transformer is on par with state-of-the-art offline RL algorithms. It is a little worse on Atari, and a little better on D4RL. It seems to do a lot better on the Key-to-Door task but I’m not sufficiently familiar with that benchmark. However, since the paper is proposing an approach fundamentally different from most RL methods, it is impressive to get similar performance. I expect that future researchers will build upon the Decision Transformer to improve its results.

Trajectory Transformer

Now let us consider the second paper, which introduces the Trajectory Transformer. As with the prior paper, it departs from the usual MDP assumptions, and it also does not require dynamic programming or bootstrapped estimates. Instead, it directly uses properties from the Transformer to encode all the ingredients it needs for a wide range of control and decision-making problems. As it borrows techniques from language modeling, the paper argues that the main technical innovation is understanding how to represent a trajectory. Here, the trajectories $\tau$ are represented as:

\[\tau = \{ \mathbf{s}_t^0, \mathbf{s}_t^{1}, \ldots, \mathbf{s}_t^{N-1}, \mathbf{a}_t^0, \mathbf{a}_t^{1}, \ldots, \mathbf{a}_t^{M-1}, r_t \}_{t=0}^{T-1}\]My first reaction was that this looks different than the trajectory representation for Decision Transformers. There’s no return-to-go written here, but this is a little misleading. The Trajectory Transformer paper tests three decision-making settings: (1) imitation learning, (2) goal-conditioned RL, and (3) offline RL. The Decision Transformer paper focuses on applying the framework to offline RL only. For offline RL, the Trajectory Transformer actually uses the return-to-go as an extra component in each data tuple in $\tau$. So I don’t believe there is any fundamental difference in terms of the trajectory consisting of states, actions, and return-to-go, though the Trajectory Transformer seems to also take in the current scalar $r_t$ as input, so that could be one difference, and it also appears to use a discount factor in the return-to-go. Both seem minor.

Perhaps a more fundamental difference is with discretization. The Decision Transformer paper doesn’t mention discretization, and after contacting the authors, I confirmed that they did not discretize. So for continuous states and actions, the Decision Transformer likely just represents them as vectors in $\mathbb{R}^d$ for some suitable $d$ representing the state or action dimension. In contrast, Trajectory Transformers use discretized states and actions as input, and the paper helpfully explains how the indexing and offsets work. While this may be inefficient, the paper states, it allows them to use a more expressive model. My intuition for this phrase comes from histograms: in theory, histograms can represent arbitrarily complex 1D data distributions, whereas a 1D Gaussian must have a specific “bell-shaped” structure.
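To illustrate the indexing-with-offsets idea, here is a minimal sketch that discretizes each dimension independently into `num_bins` uniform bins and shifts dimension $i$ by `i * num_bins`, so each dimension occupies its own block of token ids. Uniform bins, the bounds, and this exact offset scheme are my simplifying assumptions; see the paper for the discretization it actually uses.

```python
def discretize(value, low, high, num_bins):
    """Uniformly bin a scalar in [low, high] into an integer in [0, num_bins)."""
    index = int((value - low) / (high - low) * num_bins)
    return min(max(index, 0), num_bins - 1)  # clip out-of-range values to edge bins


def tokenize(vector, lows, highs, num_bins):
    """Discretize each dimension independently, then offset dimension i by
    i * num_bins so every dimension gets its own block of token ids."""
    return [
        i * num_bins + discretize(v, lo, hi, num_bins)
        for i, (v, lo, hi) in enumerate(zip(vector, lows, highs))
    ]


def detokenize(tokens, lows, highs, num_bins):
    """Map each token back to the center of its bin (lossy)."""
    values = []
    for i, token in enumerate(tokens):
        bin_index = token - i * num_bins
        width = (highs[i] - lows[i]) / num_bins
        values.append(lows[i] + (bin_index + 0.5) * width)
    return values


lows, highs = [-1.0, 0.0], [1.0, 1.0]  # hypothetical per-dimension bounds
tokens = tokenize([0.0, 0.95], lows, highs, num_bins=10)
print(tokens)  # [5, 19]
print(detokenize(tokens, lows, highs, num_bins=10))  # approximately [0.1, 0.95]
```

Reconstruction error is bounded by half a bin width, which is the price paid for a categorical (histogram-like) output distribution per dimension.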

As with the Decision Transformer, the Trajectory Transformer uses a GPT as its backbone, and is trained to optimize log probabilities of states, actions, and rewards, conditioned on prior information in the trajectory. This enables test-time prediction by decoding from the trained model using what is known as beam search. This is another core difference between the Trajectory Transformer and Decision Transformer: the former uses beam search and the latter does not, probably because discretization may make multimodal reasoning easier.
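Here is a minimal, generic beam search sketch over a discrete vocabulary, the kind of decoding that discretized tokens make natural. It scores beams by cumulative log-probability, as in language modeling; the paper’s planning objective may differ, and this sketch does not attempt to reproduce it. `toy_model` is a made-up two-token model chosen so that greedy decoding (beam width 1) misses the most likely two-step sequence.

```python
import math


def beam_search(next_log_probs, prefix, steps, beam_width):
    """Keep the beam_width highest-scoring partial sequences at each step.

    next_log_probs(seq) must return log p(token | seq) for every token id.
    Scores are cumulative log-probabilities of the generated tokens.
    """
    beams = [(list(prefix), 0.0)]
    for _ in range(steps):
        candidates = []
        for seq, score in beams:
            for token, log_p in enumerate(next_log_probs(seq)):
                candidates.append((seq + [token], score + log_p))
        # Python's sort is stable, so ties keep their insertion order.
        candidates.sort(key=lambda c: c[1], reverse=True)
        beams = candidates[:beam_width]
    return beams


def toy_model(seq):
    """Made-up 2-token model: token 1 is less likely first but very likely after itself."""
    if not seq:
        return [math.log(0.6), math.log(0.4)]
    if seq[-1] == 0:
        return [math.log(0.5), math.log(0.5)]
    return [math.log(0.1), math.log(0.9)]


for width in (1, 2):
    seq, score = beam_search(toy_model, [], steps=2, beam_width=width)[0]
    print(width, seq, round(math.exp(score), 2))
# 1 [0, 0] 0.3
# 2 [1, 1] 0.36
```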

For quantitative results, they again test on D4RL for offline RL experiments. The results suggest that Trajectory Transformers are competitive with prior state-of-the-art offline RL algorithms. Again, as with Decision Transformers, the results aren’t significant improvements, but the fact that they’re able to get to this performance for the first iteration of this approach is impressive in its own right. They also show a nice qualitative visualization where their Trajectory Transformer can produce a long sequence of predicted trajectories of a humanoid, whereas a popular state-of-the-art model-based RL algorithm known as PETS makes significantly worse predictions.

The project website succinctly summarizes the comparisons between Trajectory Transformer and Decision Transformer as follows:

Chen et al concurrently proposed another sequence modeling approach to reinforcement learning. At a high-level, ours is more model-based in spirit and theirs is more model-free, which allows us to evaluate Transformers as long-horizon dynamics models (e.g., in the humanoid predictions above) and allows them to evaluate their policies in image-based environments (e.g., Atari). We encourage you to check out their work as well.

To be clear, the idea that Trajectory Transformer is model-based and that Decision Transformer is model-free is partly because the former predicts states, whereas the latter only predicts actions.

Concluding Thoughts

Both papers show that we can treat RL as a sequence modeling problem, where Transformers take in a long sequence of data and predict something. The two approaches can sidestep the “deadly triad” in RL since bootstrapping value estimates is not necessary. The use of Transformers also enables building upon the Transformer literature from other fields, which is very extensive even though Transformers are only 4 years old (the original paper has an absurd 22955 Google Scholar citations as of today)! The models use the same fundamental backbone, and I wonder if there are ways to merge the approaches. Would beam search, for example, be helpful in Decision Transformers, and would conditioning on return-to-go be helpful for Trajectory Transformers?

To reiterate, the results are not “out of this world” compared to current state-of-the-art RL using MDPs, but as a first step, these look impressive. Moreover, I am guessing that the research teams are busy extending the capabilities of these models. These two papers have very high impact potential. Assuming the research community is able to improve upon these models, this approach may even become the standard treatment for RL. I am excited to see what will come.

My PhD Dissertation Talk

The long wait is over. After many years, I am excited to share that I delivered my PhD dissertation talk. I gave it on May 13, 2021 via Zoom. I recorded the 45-minute talk and you can find the video above.

I had multiple opportunities to practice the PhD talk, as I gave several talks earlier with a substantial amount of overlap, such as the one “at” Toronto in March (see the blog post here). My PhD talk, like prior talks, heavily focuses on robot manipulation of deformables, and includes discussions of my IROS 2020, RSS 2020, and ICRA 2021 papers. However, I wanted the focus to be broader than deformable manipulation alone, so I structured the talk to feature “robot learning” prominently, with “deformable manipulation” as one particular example. Then, rather than go through the “Model-Free,” “Model-Based,” and “Transporter Network” sections from my prior talks, I chose to title the talk sections as follows: “Simulated Interactions,” “Architectural Priors,” and “Curricula.” This also gave me the chance to feature some of my curriculum learning work with John Canny.

The audience had some questions at the end, but they were generally not too difficult to answer. Perhaps in years past, it was typical to have very challenging questions at the end of a dissertation talk, and students may have failed if they couldn’t answer well enough. Nowadays, every Berkeley EECS PhD student who gives a dissertation talk is expected to pass. I’m not aware of anyone failing after giving the talk.

I want to thank everyone who helped me get to this point today, especially when earlier in my PhD, I thought I would never reach this point. Or at the very least, I thought I would not have as strong a research record as I now have. A proper and more detailed set of acknowledgments will come at a later date.

I am not a “Doctor” yet, since I still need to write up the actual dissertation itself, which I will do this summer by “stitching” together my 4-5 most relevant first-author papers. Nonetheless, giving this talk is a huge step forward in finishing up my PhD, and I am hugely relieved that it’s out of the way.

I will also be starting a postdoc position in a few months. More on that to come later …

Inverse Reinforcement Learning from Preferences

It’s been a long time since I engaged in a detailed read-through of an inverse reinforcement learning (IRL) paper. In the standard reinforcement learning problem, an agent explores to get samples and finds a policy to maximize the expected sum of discounted rewards. In IRL, we are instead given data already, and must determine the reward function. After this reward function is learned, one can then learn a new policy by running standard reinforcement learning, but where the reward for each state (or state-action pair) is determined by the learned reward function. As a side note, since this appears to be quite common and “part of” IRL, I’m not sure why IRL is often classified as an “imitation learning” algorithm when reinforcement learning has to be run as a subroutine. Keep this in mind when reading papers on imitation learning, which often categorize algorithms into supervised learning (e.g., behavioral cloning) approaches vs IRL approaches, such as in the introduction of the famous Generative Adversarial Imitation Learning paper.

In the rest of this post, we’ll cover two closely-related works on IRL that cleverly and effectively rely on preference rankings among trajectories. They also have similar acronyms: T-REX and D-REX. The T-REX paper presents the Trajectory-ranked Reward Extrapolation algorithm, which is also used in the D-REX paper (Disturbance-based Reward Extrapolation). So we shall first discuss how reward extrapolation works in T-REX, and then we will clarify the difference between the two papers.

T-REX and D-REX

The motivation for T-REX is that in IRL, most approaches rely on defining a reward function which explains the demonstrator data and makes it appear optimal. But, what if we have suboptimal demonstrator data? Then, rather than fit a reward function to this data, it may be better to instead figure out the appropriate features of the data that convey information about the underlying intentions of the demonstrator, which may be extrapolated beyond the data. T-REX does this by working with a set of demonstrations which are ranked.

To be concrete, denote a sequence of $m$ ranked trajectories:

\[\mathcal{D} = \{ \tau_1, \ldots, \tau_m \}\]where if $i<j$, then $\tau_i \prec \tau_j$, or in other words, trajectory $\tau_i$ is worse than $\tau_j$. We’ll assume that each $\tau_i$ consists of a series of states, so that neither demonstrator actions nor the reward are needed (a huge plus!):

\[\tau_i = (s_0^{(i)}, s_1^{(i)}, \ldots, s_T^{(i)})\]and we can also assume that the trajectory lengths are all the same, though this isn’t a strict requirement of T-REX (since we can normalize based on length) but probably makes it more numerically stable.

From this data $\mathcal{D}$, T-REX will train a learned reward function $\hat{R}_\theta(s)$ such that:

\[\sum_{s \in \tau_i} \hat{R}_\theta(s) < \sum_{s \in \tau_j} \hat{R}_\theta(s) \quad \mbox{if} \quad \tau_i \prec \tau_j\]To be clear, in the above equation there is no true environment reward at all. It’s just the learned reward function $\hat{R}_\theta$, along with the trajectory rankings. That’s it! One may, of course, use the true reward function to determine the rankings in the first place, but that is not required, and that’s a key flexibility advantage for T-REX – there are many other ways we can rank trajectories.

In order to train $\hat{R}_\theta$ so that the above criterion is satisfied, we can use the cross entropy loss function. Most people probably first encounter the cross entropy loss in the context of classification tasks, where the neural network outputs some “logits” and the loss function tries to “get” the logits to match a true one-hot vector distribution. In this case, the logic is similar. The summed outputs of the reward network over each trajectory act as (un-normalized) logits, and a softmax over the two sums gives the probability that one trajectory is preferable to another:

\[P(\hat{J}_\theta(\tau_i) < \hat{J}_\theta(\tau_j)) \approx \frac{\exp \sum_{s \in \tau_j} \hat{R}_\theta(s) }{ \exp \sum_{s \in \tau_i}\hat{R}_\theta(s) + \exp \sum_{s \in \tau_j}\hat{R}_\theta(s) }\]which we then use in this loss function:

\[\mathcal{L}(\theta) = - \sum_{\tau_i \prec \tau_j } \log \left( \frac{\exp \sum_{s \in \tau_j} \hat{R}_\theta(s) }{\exp \sum_{s \in \tau_i} \hat{R}_\theta(s)+ \exp \sum_{s \in \tau_j}\hat{R}_\theta(s) } \right)\]Let’s deconstruct what we’re looking at here. The loss function $\mathcal{L}(\theta)$ for training $\hat{R}_\theta$ is binary cross entropy, where the two “classes” involved here are whether $\tau_i \succ \tau_j$ or $\tau_i \prec \tau_j$. (We can easily extend this to include cases when the two are equal, but let’s ignore for now.) Above, the true class corresponds to $\tau_i \prec \tau_j$.

If this isn’t clear, recall the definition of cross entropy (e.g., from this source): between a true distribution “$p$” and a predicted distribution “$q$”, it is $-\sum_x p(x) \log q(x)$, where the sum over $x$ iterates through all possible classes; in this case we only have two classes. The true distribution is $p=[0,1]$ if we interpret the two components as expressing the class $\tau_i \succ \tau_j$ at index 0, or $\tau_i \prec \tau_j$ at index 1. In all cases, we assign the “class” at index 1 by design. The predicted distribution comes from the output of the reward function network:

\[q = \Big[1 - P(\hat{J}_\theta(\tau_i) < \hat{J}_\theta(\tau_j)), \; P(\hat{J}_\theta(\tau_i) < \hat{J}_\theta(\tau_j)) \Big]\]and putting this together, the cross entropy term reduces to $\mathcal{L}(\theta)$ as shown above, for a single training data point (i.e., a single training pair $(\tau_i, \tau_j)$). We would then sample many of these pairs during training for each minibatch.

To handle cases when the two trajectories are ambiguous, you can set the “target” distribution to be $[0.5, 0.5]$. This is made explicit in this NeurIPS 2018 paper from DeepMind, which uses the same loss function.
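Here is a minimal pure-Python sketch of the per-pair loss. It sums the learned per-state rewards of each trajectory, treats the two sums as logits, and computes cross entropy against a target distribution, so the ambiguous-pair case is just `target=(0.5, 0.5)`. In a real implementation $\hat{R}_\theta$ would be a neural network and the gradient would come from an autodiff library; the function name is hypothetical.

```python
import math


def trex_pair_loss(rewards_i, rewards_j, target=(0.0, 1.0)):
    """Cross entropy for one trajectory pair (tau_i, tau_j).

    rewards_i, rewards_j: learned rewards R_hat(s) for each state in tau_i, tau_j.
    target: (p[tau_i preferred], p[tau_j preferred]); the default (0, 1)
            encodes tau_i < tau_j, and (0.5, 0.5) encodes an ambiguous pair.
    """
    logit_i, logit_j = sum(rewards_i), sum(rewards_j)
    m = max(logit_i, logit_j)  # log-sum-exp trick for numerical stability
    log_z = m + math.log(math.exp(logit_i - m) + math.exp(logit_j - m))
    log_q_i, log_q_j = logit_i - log_z, logit_j - log_z
    return -(target[0] * log_q_i + target[1] * log_q_j)


# Equal predicted returns: the model is maximally unsure, so the loss is log(2).
print(trex_pair_loss([0.5, 0.5], [0.0, 1.0]))
# A higher predicted return for the preferred tau_j lowers the loss (about 0.127).
print(trex_pair_loss([0.0, 0.0], [1.0, 1.0]))
```

A minibatch loss would average this over many sampled pairs.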

The main takeaway is that this process will learn a reward function assigning greater total return to higher ranked trajectories. As long as there are features associated with higher return that are identifiable from the data, then it may be possible to extrapolate beyond the data.

Once the reward function is learned, T-REX then runs policy optimization with reinforcement learning, which in both papers here is Proximal Policy Optimization. This is done in an online fashion, but instead of data coming in as $(s,a,r,s')$ tuples, they will be $(s,a,\hat{R}_\theta(s),s')$, where the reward comes from the learned reward function.
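The only change to the policy-optimization data is the reward slot. A minimal sketch, assuming transitions are plain `(s, a, r, s_next)` tuples and `learned_reward` is any callable standing in for $\hat{R}_\theta$ (both hypothetical; real PPO code would do this inside an environment wrapper or rollout buffer):

```python
def relabel_transition(transition, learned_reward):
    """Replace the environment reward in (s, a, r, s_next) with R_hat(s)."""
    s, a, _env_reward, s_next = transition  # the true reward is discarded
    return (s, a, learned_reward(s), s_next)


def relabel_batch(transitions, learned_reward):
    """Relabel every transition collected during an online rollout."""
    return [relabel_transition(t, learned_reward) for t in transitions]


def toy_reward(s):
    """Hypothetical learned reward that prefers states near 1.0."""
    return -abs(s - 1.0)


batch = [(0.0, 1, 5.0, 0.5), (0.5, 0, -2.0, 1.0)]
print(relabel_batch(batch, toy_reward))  # [(0.0, 1, -1.0, 0.5), (0.5, 0, -0.5, 1.0)]
```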

This makes sense, but as usual, there are a bunch of practical tips and tricks to get things working. Here are some for T-REX:

-

For many environments, “trajectories” often refer to “episodes”, but these can last for a large number of time steps. To perform data augmentation, one can subsample snippets of the same length from each trajectory in a pair $\tau_i$ and $\tau_j$.

-

Training an ensemble of reward functions for $\hat{R}_\theta$ often helps, provided the individual components have values at roughly the same scale.

-

The reward used for the policy optimization stage might need some extra “massaging” to it. For example, with MuJoCo, the authors use a control penalty term that gets added to $\hat{R}_\theta(s)$.

-

To check if reward extrapolation is feasible, one can plot a graph that shows ground truth returns on the x-axis and predicted returns on the y-axis. If there is a strong correlation between the two, then that’s a sign extrapolation is more likely to happen.
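The first and last tips are easy to sketch. Below, snippets are drawn with uniformly random start indices (my simplification; the papers may constrain how start indices are chosen), and the extrapolation check is a Pearson correlation between true and predicted returns, which is one reasonable way to quantify the “strong correlation” in such a plot. All data values are made up for illustration.

```python
import math
import random


def sample_snippet_pair(traj_i, traj_j, length, rng):
    """Subsample equal-length snippets from a ranked pair where tau_i is worse.

    The label is inherited from the full trajectories: the snippet from
    traj_i is still treated as worse than the snippet from traj_j.
    """
    start_i = rng.randrange(len(traj_i) - length + 1)
    start_j = rng.randrange(len(traj_j) - length + 1)
    return traj_i[start_i:start_i + length], traj_j[start_j:start_j + length]


def pearson(xs, ys):
    """Pearson correlation, e.g., between true and predicted returns."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    return cov / math.sqrt(vx * vy)


rng = random.Random(0)
worse, better = list(range(100)), list(range(100, 250))  # made-up state ids
snip_i, snip_j = sample_snippet_pair(worse, better, length=20, rng=rng)
print(len(snip_i), len(snip_j))  # 20 20

true_returns = [10.0, 20.0, 30.0, 40.0]  # made-up ground-truth returns
predicted = [1.2, 2.1, 2.9, 4.3]         # made-up predicted returns
print(round(pearson(true_returns, predicted), 3))
```

Only the ranking of predicted returns matters for the loss, so a correlation check (rather than matching the true scale) is the natural diagnostic.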

In both T-REX and D-REX, the authors experiment with discrete control and continuous control using standard environments from Atari and MuJoCo, respectively, and find that overall, their two-stage approach of (1) finding $\hat{R}_\theta$ from preferences and (2) running PPO on top of this learned reward function works better than competing baselines such as Behavior Cloning and Generative Adversarial Imitation Learning, and can exceed the performance of the demonstration data.

The above is common to both T-REX and D-REX. So what’s the difference between the two papers?

-

T-REX assumes that we have rankings available ahead of time. This can be from a number of sources. Maybe they were “ground truth” rankings based on ground truth rewards (i.e., just sum up the true reward within the $\tau_i$s), or they might be noisy rankings. An easy way to test noisy rankings is to rank trajectories based on the time in training history if we extract trajectories from an RL agent’s history. Another, but more cumbersome way (since it relies on human subjects) is to use Amazon Mechanical Turk. The T-REX paper does a splendid job testing these different rankings – it’s one reason I really like the paper.

-

In contrast, D-REX assumes these rankings are not available ahead of time. Instead, the approach involves training a policy from the provided demonstration data via Behavior Cloning, then taking that resulting snapshot and rolling it out in the environment with different noise levels. This naturally provides a ranking for the data, and only relies on the weak assumption that the Behavior Cloning agent will be better than a purely random policy. Then with these automatic rankings, D-REX can just do exactly what T-REX did!

-

D-REX makes a second contribution on the theoretical side to better understand why preferences over demonstrations can reduce reward function ambiguity in IRL.
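A minimal sketch of the automatic-ranking idea, assuming discrete actions and epsilon-greedy noise injected into the Behavior Cloning policy (my choice of noise model for illustration; check the D-REX paper for its exact noise schedule). Each noise level would be used for a batch of rollouts; the ranking itself only needs the noise levels, since every rollout at a noisier level is labeled worse than every rollout at a cleaner level.

```python
import random
from itertools import combinations


def noisy_action(bc_action, num_actions, epsilon, rng):
    """Epsilon-greedy noise: random action with prob. epsilon, else the BC action."""
    if rng.random() < epsilon:
        return rng.randrange(num_actions)
    return bc_action


def ranked_pairs(noise_levels):
    """Automatic (worse, better) pairs: rollouts with more noise are assumed worse."""
    ordered = sorted(noise_levels, reverse=True)  # noisiest first
    return list(combinations(ordered, 2))  # each pair is (noisier, cleaner)


print(ranked_pairs([0.0, 0.5, 1.0]))  # [(1.0, 0.5), (1.0, 0.0), (0.5, 0.0)]
```

Each pair of noise levels can then be turned into trajectory pairs and fed into exactly the same preference loss that T-REX uses.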

Some Theory in D-REX

Here’s a little more on the theory from D-REX. We’ll follow the notation from the paper and state Theorem 1 here (see the paper for context):

If the estimated reward function is $\;\hat{R}(s) = w^T\phi(s),\;$ the true reward function is \(\;R^*(s) = \hat{R}(s) + \epsilon(s)\;\) for some error function \(\;\epsilon : \mathcal{S} \to \mathbb{R}\;\) and \(\;\|w\|_1 \le 1,\;\) then extrapolation beyond the demonstrator, i.e., \(\; J(\hat{\pi}|R^*) > J(\mathcal{D}|R^*),\;\) is guaranteed if:

\[J(\pi_{R^*}^*|R^*) - J(\mathcal{D}|R^*) > \epsilon_\Phi + \frac{2\|\epsilon\|_\infty}{1 - \gamma}\]where \(\;\pi_{R^*}^* \;\) is the optimal policy under $R^*$, \(\;\epsilon_\Phi = \| \Phi_{\pi_{R^*}^*} - \Phi_{\hat{\pi}}\|_\infty,\;\) and \(\|\epsilon\|_\infty = {\rm sup}\{ | \epsilon(s)| : s \in \mathcal{S} \}\).

To clarify the theorem, $\hat{\pi}$ is some learned policy for which we want to outperform the average episodic return in the demonstration data $J(\mathcal{D}|R^*)$. We begin by considering the difference in return between the optimal policy under the true reward (which can’t be exceeded w.r.t. that reward by definition) and the expected return of the learned policy (also under that true reward):

\[\begin{align} J(\pi_{R^*}^*|R^*) - J(\hat{\pi}|R^*) \;&{\overset{(i)}=}\;\; \left| \mathbb{E}_{\pi_{R^*}^*} \Big[ \sum_{t=0}^\infty \gamma^t R^*(s_t) \Big] - \mathbb{E}_{\hat{\pi}} \Big[ \sum_{t=0}^\infty \gamma^t R^*(s_t) \Big] \right| \\ \;&{\overset{(ii)}=}\;\; \left| \mathbb{E}_{\pi_{R^*}^*} \Big[ \sum_{t=0}^\infty \gamma^t (w^T\phi(s_t)+\epsilon(s_t)) \Big] - \mathbb{E}_{\hat{\pi}} \Big[ \sum_{t=0}^\infty \gamma^t (w^T\phi(s_t)+\epsilon(s_t)) \Big] \right| \\ \;&{\overset{(iii)}=}\; \left| w^T\Phi_{\pi_{R^*}^*} + \mathbb{E}_{\pi_{R^*}^*} \Big[ \sum_{t=0}^\infty \gamma^t \epsilon(s_t) \Big] - w^T\Phi_{\hat{\pi}} - \mathbb{E}_{\hat{\pi}} \Big[ \sum_{t=0}^\infty \gamma^t \epsilon(s_t) \Big] \right| \\ \;&{\overset{(iv)}\le}\;\; \left| w^T(\Phi_{\pi_{R^*}^*} -\Phi_{\hat{\pi}}) + \mathbb{E}_{\pi_{R^*}^*} \Big[ \sum_{t=0}^\infty \gamma^t \sup_{s\in \mathcal{S}} \epsilon(s) \Big] - \mathbb{E}_{\hat{\pi}} \Big[ \sum_{t=0}^\infty \gamma^t \inf_{s \in \mathcal{S}} \epsilon(s) \Big] \right| \\ \;&{\overset{(v)}=}\;\; \left| w^T(\Phi_{\pi_{R^*}^*} -\Phi_{\hat{\pi}}) + \Big( \sup_{s\in \mathcal{S}} \epsilon(s) - \inf_{s \in \mathcal{S}} \epsilon(s) \Big) \sum_{t=0}^{\infty} \gamma^t \right| \\ \;&{\overset{(vi)}\le}\;\; \left| w^T(\Phi_{\pi_{R^*}^*} -\Phi_{\hat{\pi}}) + \frac{2 \|\epsilon\|_\infty}{1-\gamma} \right| \\ \;&{\overset{(vii)}\le}\;\; \left| w^T(\Phi_{\pi_{R^*}^*} -\Phi_{\hat{\pi}})\right| + \frac{2 \|\epsilon\|_\infty}{1-\gamma} \\ \;&{\overset{(viii)}\le}\; \|w\|_1 \|\Phi_{\pi_{R^*}^*} -\Phi_{\hat{\pi}}\|_\infty + \frac{2 \|\epsilon\|_\infty}{1-\gamma} \\ \;&{\overset{(ix)}\le}\; \epsilon_\Phi + \frac{2\|\epsilon\|_\infty}{1 - \gamma} \end{align}\]where

-

in (i), we apply the definition of the terms and put absolute values around them. I don’t think this is necessary since the LHS must be nonnegative, but it doesn’t hurt.

-

in (ii), we substitute $R^*$ with the theorem’s assumption about both the error function and how the estimated reward is a linear combination of features.

-

in (iii) we move the weights $w$ outside the expectation as they are constants and we can use linearity of expectation. Then we use the paper’s definition of $\Phi_\pi$ as the expected feature counts for given policy $\pi$.

-

in (iv) we move the two $\Phi$ terms together (notice how this matches the theorem’s $\epsilon_\Phi$ definition), and we then make this an inequality by looking at the expectations and applying “sup”s and “inf”s to each time step. This is saying that if we have $A-B$, then let’s make the $A$ term larger and the $B$ term smaller. Since we’re doing this for an infinite number of time steps, I am somewhat worried that this is a loose bound.

-

in (v) we see that since the “sup” and “inf” terms no longer depend on $t$, we can move them outside the expectations. In fact, we don’t even need expectations anymore, since all that’s left is a sum over discounted $\gamma$ terms.

-

in (vi) we apply the geometric series formula to get rid of the sum over $\gamma$, and then the inequality results from replacing the “sup”s and “inf”s with the \(\| \epsilon \|_\infty\) from the theorem statement; the “2” helps to cover the extremes of a large positive error and a large negative error (note the absolute value in the definition of \(\| \epsilon \|_\infty\); that’s important).

-

in (vii) we apply the Triangle Inequality.

-

in (viii) we apply Hölder’s inequality.

-

finally, in (ix) we apply the theorem’s assumptions: $\|w\|_1 \le 1$ and the definition of $\epsilon_\Phi$.

We now take that final inequality and subtract the average demonstration data return on both sides:

\[\underbrace{J(\pi_{R^*}^*|R^*)- J(\mathcal{D}|R^*)}_{\delta} - J(\hat{\pi}|R^*) \le \epsilon_\Phi + \frac{2\|\epsilon\|_\infty}{1 - \gamma} - J(\mathcal{D}|R^*)\]Now we finally invoke the “if” condition in the theorem. If it holds, then $\delta > \epsilon_\Phi + \frac{2\|\epsilon\|_\infty}{1 - \gamma}$, so replacing $\delta$ above with that quantity strictly reduces the LHS:

\[\epsilon_\Phi + \frac{2\|\epsilon\|_\infty}{1 - \gamma} - J(\hat{\pi}|R^*) < \epsilon_\Phi + \frac{2\|\epsilon\|_\infty}{1 - \gamma} - J(\mathcal{D}|R^*)\]which implies:

\[- J(\hat{\pi}|R^*) < - J(\mathcal{D}|R^*) \quad \Longrightarrow \quad J(\hat{\pi}|R^*) > J(\mathcal{D}|R^*),\]showing that $\hat{\pi}$ has extrapolated beyond the data.

What’s the intuition behind the theorem? The LHS of the theorem’s condition is the difference in return between the optimal policy and the demonstration data. By definition of optimality, the LHS is at least 0, but it can get very close to 0 if the demonstration data is very good. That leaves little room for extrapolation, and hence the condition for outperforming the demonstrator is less likely to hold (which makes sense). Focusing on the RHS, we see that its value is larger if the maximum error in $\epsilon$ is large. This might be a very restrictive condition, since it considers the maximum absolute error over the entire state set $\mathcal{S}$. Since there are infinitely many states in many practical applications, even one large error might cause the inequality in the theorem statement to fail.
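To get a feel for how restrictive the condition is, here is a tiny helper that evaluates the theorem’s inequality for made-up values of the return gap, $\epsilon_\Phi$, $\|\epsilon\|_\infty$, and $\gamma$. The helper name is hypothetical. Note how the $1/(1-\gamma)$ factor amplifies a modest worst-case reward error as $\gamma$ approaches 1.

```python
def extrapolation_guaranteed(j_opt, j_demo, eps_phi, eps_inf, gamma):
    """Sufficient condition from Theorem 1:
    J(pi*|R*) - J(D|R*) > eps_Phi + 2 * ||eps||_inf / (1 - gamma).

    Returning False does NOT mean extrapolation fails; the theorem only
    gives a sufficient condition.
    """
    return j_opt - j_demo > eps_phi + 2.0 * eps_inf / (1.0 - gamma)


# Made-up numbers: a 40-point gap between the optimal policy and the demos.
print(extrapolation_guaranteed(100.0, 60.0, eps_phi=5.0, eps_inf=0.1, gamma=0.9))   # True
print(extrapolation_guaranteed(100.0, 60.0, eps_phi=5.0, eps_inf=0.1, gamma=0.99))  # True
print(extrapolation_guaranteed(100.0, 60.0, eps_phi=5.0, eps_inf=0.5, gamma=0.99))  # False
```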

The proof also relies on the assumption that the estimated reward function is a linear combination of features (that’s what $\hat{R}(s)=w^T\phi(s)$ means), but $\phi$ could contain arbitrarily complex features, so I suspect it is a weak assumption (which is good), though I am not sure.

Concluding Remarks

Overall, the T-REX and D-REX papers are nice IRL papers that rely on preferences between trajectories. The takeaways I get from these works:

-

While reinforcement learning may be very exciting, don’t forget about the perhaps lesser-known task of inverse reinforcement learning.

-

Taking subsamples of trajectories is a helpful way to do data augmentation when doing anything at the granularity of episodes.

-

Perhaps most importantly, I should understand when and how preference rankings might be applicable and beneficial. In these works, preferences enable them to train an agent to perform better than demonstrator data without strictly requiring ground truth environment rewards, and potentially without even requiring demonstrator actions (though D-REX requires actions).
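As a rough illustration of the subsampling idea, here is a minimal Python sketch that creates labeled training pairs by cutting equal-length snippets from ranked trajectories, with each snippet inheriting the preference of its full trajectory. The function names are hypothetical, and this is a simplified version: it does not reproduce the exact snippet-selection rules used in the T-REX or D-REX code.

```python
import random

def sample_snippet_pair(traj_a, traj_b, a_is_better, snippet_len, rng):
    """Cut one random, contiguous snippet of length snippet_len from each trajectory.

    Returns (snippet_a, snippet_b, label), where label is 0 if snippet_a comes from
    the preferred trajectory and 1 otherwise.
    """
    if snippet_len > min(len(traj_a), len(traj_b)):
        raise ValueError("snippet_len is longer than one of the trajectories")
    start_a = rng.randrange(len(traj_a) - snippet_len + 1)
    start_b = rng.randrange(len(traj_b) - snippet_len + 1)
    label = 0 if a_is_better else 1
    return traj_a[start_a:start_a + snippet_len], traj_b[start_b:start_b + snippet_len], label

def augment(ranked_trajs, num_pairs, snippet_len, seed=0):
    """ranked_trajs is sorted from worst to best; returns num_pairs labeled snippet pairs."""
    rng = random.Random(seed)
    pairs = []
    for _ in range(num_pairs):
        i, j = rng.sample(range(len(ranked_trajs)), 2)  # two distinct trajectories
        pairs.append(sample_snippet_pair(ranked_trajs[i], ranked_trajs[j], i > j, snippet_len, rng))
    return pairs

# Three dummy trajectories of 50 "observations" each, ranked worst to best.
trajs = [list(range(100 * k, 100 * k + 50)) for k in range(3)]
pairs = augment(trajs, num_pairs=4, snippet_len=10)
```

Even with only three ranked trajectories, the number of distinct (pair, start index, start index) combinations is large, which is what makes this work as data augmentation.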

I hope you found this post helpful. As always, thank you for reading, and stay safe.

Papers covered in this blog post:

-

Daniel S. Brown, Wonjoon Goo, Prabhat Nagarajan, Scott Niekum. Extrapolating Beyond Suboptimal Demonstrations via Inverse Reinforcement Learning from Observations, ICML 2019.

-

Daniel S. Brown, Wonjoon Goo, Scott Niekum. Better-than-Demonstrator Imitation Learning via Automatically-Ranked Demonstrations, CoRL 2019.

Research Talk at the University of Toronto on Robotic Manipulation

A video of my talk at the University of Toronto with the Q-and-A at the end.

Last week, I was very fortunate to give a talk “at” the University of Toronto in their AI in Robotics Reading Group. It gives a representative overview of my recent research in robotic manipulation. It’s a technical research talk, but still somewhat high-level, so hopefully it should be accessible to a broad range of robotics researchers. I normally feel embarrassed when watching recordings of my talks, since I realize I should have done X instead of Y in so many places. Fortunately I think this one turned out reasonably well. Furthermore, and to my delight, the YouTube / Google automatic captions captured my audio with a high degree of accuracy.

My talk covers these three papers in order:

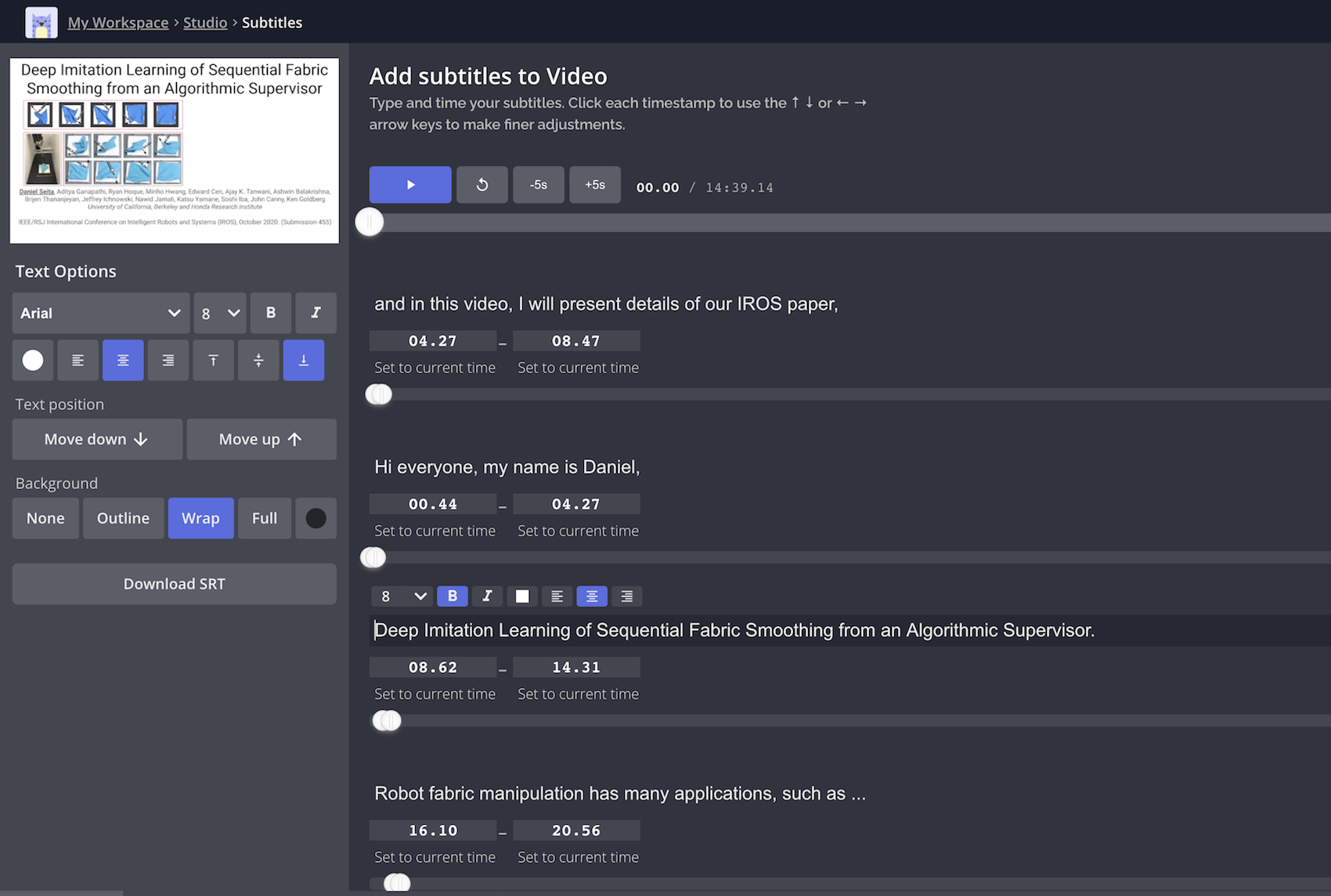

- Deep Imitation Learning of Sequential Fabric Smoothing From an Algorithmic Supervisor, IROS 2020.

- VisuoSpatial Foresight for Multi-Step, Multi-Task Fabric Manipulation, RSS 2020.

- Learning to Rearrange Deformable Cables, Fabrics, and Bags with Goal-Conditioned Transporter Networks, ICRA 2021.

We covered the first two papers in a BAIR Blog post last year. I briefly mentioned the last one in a personal blog post a few months ago, with the accompanying backstory behind how we developed it. A joint Google AI and BAIR Blog post is in progress … I promise!

Regarding that third paper (for ICRA 2021), when making this talk in Keynote, I was finally able to create the kind of animation that shows the intuition for how a Goal-Conditioned Transporter Network works. Using Google Slides is great for drafting talks quickly, but I think Keynote is better for formal presentations.

I thank the organizers (Homanga Bharadhwaj, Arthur Allshire, Nishkrit Desai, and Professor Animesh Garg) for the opportunity, and I also thank them for helping to arrange the two sign language interpreters for my talk. Finally, if you found this talk interesting, I encourage you to view the talks from the other presenters in the series.

Getting Started with SoftGym for Deformable Object Manipulation

This is a regularly updated post, last updated October 05, 2023.

Visualization of the PourWater environment from SoftGym. The animation is from the project website.

Over the last few years, I have enjoyed working on deformable object manipulation for robotics. In particular, it was the focus of my Google internship work, and before that I did some work with deformables, highlighted in our BAIR Blog post here. In this post, I’d like to discuss the SoftGym simulator, developed by researchers from Carnegie Mellon University in their CoRL 2020 paper. I’ve been exploring this simulator to see if it might be useful for my future projects, and I am impressed by the simulation quality and by its support for fluid simulation. The project website has more information and includes impressive videos. This blog post is similar in spirit to one I wrote almost a year ago about a different code base (rlpyt): the focus here is on the installation steps for SoftGym.

Installing SoftGym

The first step is to install SoftGym. The provided README has some information but it wasn’t initially clear to me, as shown in my GitHub issue report. As I stated in my post on rlpyt, I like making long and detailed GitHub issue reports that are exactly reproducible.

The main thing to understand when installing is that if you’re using an Ubuntu 16.04 machine, you (probably) don’t have to use Docker. (However, Docker is incredibly useful in its own right, so I encourage you to learn how to use it if you haven’t done so already.) If you’re using Ubuntu 18.04, then you definitely have to use Docker. However, Docker is only used to compile PyFleX, which has the physics simulation for deformables. The rest of the repository can be managed through a standard conda environment.

Here’s a walk-through of my installation and compilation steps on an Ubuntu 18.04 machine, and I assume that conda is already installed. If conda is not installed, I encourage you to check another blog post which describes my conda workflow.

So far, the code has worked for me on a variety of CUDA and NVIDIA driver versions. You can find the CUDA version by running:

```
seita@mason:~$ nvcc --version
nvcc: NVIDIA (R) Cuda compiler driver
Copyright (c) 2005-2018 NVIDIA Corporation
Built on Sat_Aug_25_21:08:01_CDT_2018
Cuda compilation tools, release 10.0, V10.0.130
```

For example, the above means I have CUDA 10.0. Similarly, the driver version can be found by running nvidia-smi.
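If you check versions on several machines, a small Python helper can collect both numbers at once. This is a minimal sketch with hypothetical function names: it parses the “release X.Y” line from nvcc --version (as in the output above) and queries the driver with nvidia-smi --query-gpu=driver_version --format=csv,noheader, returning None for anything it cannot find.

```python
import re
import subprocess

def parse_nvcc_release(nvcc_output):
    """Return the CUDA toolkit release (e.g. '10.0') from `nvcc --version` output, or None."""
    match = re.search(r"release (\d+\.\d+)", nvcc_output)
    return match.group(1) if match else None

def _run(cmd):
    """Run a command and return its stdout, or None if it is missing or fails."""
    try:
        return subprocess.run(cmd, capture_output=True, text=True, check=True).stdout
    except (OSError, subprocess.CalledProcessError):
        return None

def query_versions():
    """Return (cuda_toolkit_release, nvidia_driver_version); entries are None if unavailable."""
    nvcc_out = _run(["nvcc", "--version"])
    smi_out = _run(["nvidia-smi", "--query-gpu=driver_version", "--format=csv,noheader"])
    toolkit = parse_nvcc_release(nvcc_out) if nvcc_out else None
    # nvidia-smi prints one line per GPU; the first GPU's driver version is enough here.
    driver = smi_out.strip().splitlines()[0] if smi_out and smi_out.strip() else None
    return toolkit, driver

# Example: print(query_versions())
```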

Now let’s get started by cloning the repository and then creating the conda environment:

```
git clone https://github.com/Xingyu-Lin/softgym.git
cd softgym/
conda env create -f environment.yml
```

This command will create a conda environment that has the necessary packages with their correct versions. However, there’s one more package to install, pybind11, so I would install that after activating the environment:

```
conda activate softgym
conda install pybind11
```

At this point, the conda environment should be good to go.
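Before moving on, a quick sanity check can catch two easy-to-miss steps: activating the environment and installing pybind11. This minimal Python sketch (the function name is hypothetical) assumes the environment created from environment.yml is named softgym, as the conda activate command above suggests, and relies on conda setting the CONDA_DEFAULT_ENV variable when an environment is activated.

```python
import importlib.util
import os

def check_softgym_env(env=None):
    """Return a list of problems with the current Python environment (empty if none found)."""
    env = os.environ if env is None else env
    problems = []
    active = env.get("CONDA_DEFAULT_ENV")
    if active != "softgym":
        problems.append(f"expected conda env 'softgym' to be active, found {active!r}")
    # find_spec returns None when the package cannot be imported from this interpreter.
    if importlib.util.find_spec("pybind11") is None:
        problems.append("pybind11 is not importable; run `conda install pybind11`")
    return problems

# Example: for problem in check_softgym_env(): print("WARNING:", problem)
```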

Next we have the most interesting part, where we use Docker. Here’s the installation guide for Ubuntu machines in case it’s not installed on your machine yet. I’m using Docker version 19.03.6. A quick refresher on terminology: Docker has images and containers. An image is like a recipe, whereas a container is an instance of it. StackOverflow has a more detailed explanation. Now run this command:

```
docker pull xingyu/softgym
```

This downloads the authors’ pre-built Docker image, and it should be listed if you type in docker images on the command line:

```
seita@mason:~$ docker images
REPOSITORY       TAG      IMAGE ID       CREATED        SIZE
xingyu/softgym   latest   2cbcd6a50965   3 months ago   2.44GB
```

If you’re running into issues with requiring “sudo”, you can mitigate this by adding yourself to a “Docker group” so that you don’t have to type it in each time. This Ask Ubuntu post might be helpful.

Next, we have to run a command to start a container. Here, we’re using nvidia-docker since this requires CUDA, as one would expect given that FleX is from NVIDIA. nvidia-docker is not installed when you install Docker, so please refer to this page for installation instructions. Once that’s done, to be safe, I would check that nvidia-docker -v works on your command line and that the version matches what’s printed from docker -v. I don’t know if it is necessary for the two versions to match.

As mentioned earlier, we have to start a container. Here is the command I use:

```
(softgym) seita@mason:~/softgym$ nvidia-docker run \
    -v /home/seita/softgym:/workspace/softgym \
    -v /home/seita/miniconda3:/home/seita/miniconda3 \
    -v /tmp/.X11-unix:/tmp/.X11-unix \
    --gpus all \
    -e DISPLAY=$DISPLAY \
    -e QT_X11_NO_MITSHM=1 \
    -it xingyu/softgym:latest bash
```

Here’s an explanation:

- The first -v will mount /home/seita/softgym (i.e., where I cloned softgym) to /workspace/softgym inside the Docker container’s file system. Thus, when I enter the container, I can change directory to /workspace/softgym and it will look as if I am in /home/seita/softgym on the original machine. The /workspace directory seems to be the default directory we start in for Docker containers.

- A similar thing happens with the second mounting command for miniconda. In fact I’m using the same exact directory before and after the colon, which means the directory structure is the same inside the container.

- The -it and bash portions will create an environment in the container which lets us type in things on the command line, like with normal Ubuntu machines. Here, we will be the root user. The Docker documentation has more information about these arguments. Note that -it is shorthand for -i -t.

- The other commands are copied from the SoftGym Docker README.

October 2023 update: if you are running Ubuntu 22.04, I have gotten the compilation working with a few modifications. First, I use docker instead of nvidia-docker since it seems like a separate nvidia-docker installation is not necessary. Second, I omit the --gpus all argument.
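The two variants of the container command differ only in those two changes, so they can be captured in a small Python helper that builds the argument list. This is a minimal sketch: the function name softgym_docker_cmd is hypothetical, the example paths are the ones from my machine, and the flags are exactly those in the command above.

```python
import shlex

def softgym_docker_cmd(softgym_dir, conda_dir, display, ubuntu_2204=False):
    """Build the `docker run` argument list for starting the SoftGym container.

    Ubuntu 22.04 variant: plain `docker` and no `--gpus all`.
    Otherwise: `nvidia-docker` with `--gpus all`.
    """
    cmd = ["docker" if ubuntu_2204 else "nvidia-docker", "run",
           "-v", f"{softgym_dir}:/workspace/softgym",
           "-v", f"{conda_dir}:{conda_dir}",  # same path inside and outside the container
           "-v", "/tmp/.X11-unix:/tmp/.X11-unix"]
    if not ubuntu_2204:
        cmd += ["--gpus", "all"]
    cmd += ["-e", f"DISPLAY={display}", "-e", "QT_X11_NO_MITSHM=1",
            "-it", "xingyu/softgym:latest", "bash"]
    return cmd

# Print the 22.04 variant as a copy-pasteable shell command.
print(shlex.join(softgym_docker_cmd("/home/seita/softgym", "/home/seita/miniconda3", ":0", ubuntu_2204=True)))
```

Returning a list rather than a single string means it can also be passed directly to subprocess.run without worrying about shell quoting.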

Running the command means I enter a Docker container as a “root” user, and you should be able to see this container listed if you type in docker ps in another tab (outside of Docker), since that shows the active container IDs. At this point, we should go to the softgym directory and run the scripts to (1) prepare paths and (2) compile PyFleX:

```
root@82ab689d1497:/workspace# cd softgym/
root@82ab689d1497:/workspace/softgym# export PATH="/home/seita/miniconda3/bin:$PATH"
root@82ab689d1497:/workspace/softgym# . ./prepare_1.0.sh
(softgym) root@82ab689d1497:/workspace/softgym# . ./compile_1.0.sh
```

The above should compile without errors. That’s it! One can then exit Docker (just type in “exit”), though I would actually recommend keeping that Docker tab/window open in your terminal: any changes to the C++ code will require re-compiling it, so having Docker already set up to compile with one command makes things easier. Adjusting the C++ code is (almost) necessary if you wish to create custom environments.

If you are using Ubuntu 16.04, the steps should be similar but also much simpler, and here is the command history that I have when using it:

```
git clone https://github.com/Xingyu-Lin/softgym.git
cd softgym/
conda env create -f environment.yml
conda activate softgym
. ./prepare_1.0.sh
. ./compile_1.0.sh
cd ../../..
```

The last cd command is there because the compile script changes my working directory. Just go back to the softgym/ directory and you’ll be ready to run.

Code Usage

Back in our normal Ubuntu 18.04 command line setting, we should make sure our conda environment is activated, and that paths are set up appropriately:

```
(softgym) seita@mason:~/softgym$ export PYFLEXROOT=${PWD}/PyFlex
(softgym) seita@mason:~/softgym$ export PYTHONPATH=${PYFLEXROOT}/bindings/build:$PYTHONPATH
(softgym) seita@mason:~/softgym$ export LD_LIBRARY_PATH=${PYFLEXROOT}/external/SDL2-2.0.4/lib/x64:$LD_LIBRARY_PATH
```

To make things easier, you can use a script like their provided prepare_1.0.sh to adjust paths for you, so that you don’t have to keep typing in these “export” commands manually.

Finally, we have to turn on headless mode for SoftGym if running over a remote machine. This was a step that tripped me up for a while, even though I’m usually good about remembering this after having gone through similar issues using the Blender simulator (for rendering fabric images remotely). Commands like this should hopefully work, which run the chosen environment and have the agent take random actions:

```
(softgym) seita@mason:~/softgym$ python examples/random_env.py --env_name ClothFlatten --headless 1
```

If you are running on a local machine with a compatible GPU, you can remove the headless option to have the animation play in a new window. Be warned, though: the window size should remain fixed throughout, since the code appends frames together, so don’t drag and resize the window. You can right-click with the mouse to change the camera angle, and use the W-A-S-D keys to navigate.

The given script might give you an error about a missing directory; if so, just create it with mkdir data/.
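If you would rather not depend on shell state, the three export commands, the headless flag, and the mkdir data/ step can be bundled into a small Python launcher. This is a minimal sketch: the function names pyflex_env and run_random_env are hypothetical, the paths mirror the export commands above, and it assumes softgym_dir is your clone of the repository with PyFleX already compiled.

```python
import os
import subprocess
import sys

def pyflex_env(softgym_dir, base_env=None):
    """Copy base_env (default: os.environ) and add the PyFleX paths from the export commands."""
    env = dict(os.environ if base_env is None else base_env)
    root = os.path.join(softgym_dir, "PyFlex")
    env["PYFLEXROOT"] = root
    prepend = {
        "PYTHONPATH": os.path.join(root, "bindings", "build"),
        "LD_LIBRARY_PATH": os.path.join(root, "external", "SDL2-2.0.4", "lib", "x64"),
    }
    for var, path in prepend.items():
        old = env.get(var)
        env[var] = path + os.pathsep + old if old else path  # prepend, like the shell exports
    return env

def run_random_env(softgym_dir, env_name="ClothFlatten", headless=True):
    """Run examples/random_env.py in a fresh interpreter with the PyFleX paths set."""
    os.makedirs(os.path.join(softgym_dir, "data"), exist_ok=True)  # avoids the missing-directory error
    cmd = [sys.executable, "examples/random_env.py", "--env_name", env_name]
    if headless:
        cmd += ["--headless", "1"]
    # LD_LIBRARY_PATH is read by the dynamic loader at process start, so use a child process.
    subprocess.run(cmd, cwd=softgym_dir, env=pyflex_env(softgym_dir), check=True)

# Example, from the softgym/ directory with the conda env active:
# run_random_env(os.getcwd())
```

Launching a child process is the main design choice here: changing os.environ inside an already-running interpreter would not affect how its shared libraries are located.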

Long story short, SoftGym contains one of the nicest looking physics simulators I’ve seen for deformable objects. I also really like the support for liquids. I can imagine future robots transporting boxes and bags of liquids.

Working and Non-Working Configurations

I’ve tried installing SoftGym on a number of machines. To summarize, here are all the working configurations, which are tested by running the examples/random_env.py script:

- Ubuntu 16.04, CUDA 9.0, NVIDIA 440.33.01, no Docker at all.

- Ubuntu 18.04, CUDA 10.0, NVIDIA 450.102.04, only use Docker for installing PyFleX.

- Ubuntu 18.04, CUDA 10.1, NVIDIA 430.50, only use Docker for installing PyFleX.

- Ubuntu 18.04, CUDA 10.1, NVIDIA 450.102.04, only use Docker for installing PyFleX.

- Ubuntu 18.04, CUDA 11.1, NVIDIA 455.32.00, only use Docker for installing PyFleX.

To clarify, when I list the above “CUDA” versions, I am getting them from typing the command nvcc --version, and when I list the “NVIDIA” driver versions, they are from nvidia-smi. The latter command also lists a “CUDA Version”, but that is for the driver, not the runtime, and these two CUDA versions can be different (on my machines they usually do not match).
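To see the two CUDA numbers side by side, a small Python helper can pull them from the two commands’ text output. This is a minimal sketch with hypothetical function names; it assumes the nvcc output has a “release X.Y” line (as shown earlier) and that the nvidia-smi header contains “Driver Version:” and “CUDA Version:” fields. As far as I know, the latter is the highest CUDA version the installed driver supports, which is why it need not equal the nvcc number.

```python
import re

def _first(pattern, text):
    """Return the first capture group of pattern in text, or None."""
    match = re.search(pattern, text)
    return match.group(1) if match else None

def compare_cuda_versions(nvcc_output, nvidia_smi_output):
    """Compare the nvcc (toolkit/runtime) CUDA version with the one nvidia-smi reports."""
    nvcc_cuda = _first(r"release (\d+\.\d+)", nvcc_output)
    driver = _first(r"Driver Version:\s*([\d.]+)", nvidia_smi_output)
    driver_cuda = _first(r"CUDA Version:\s*([\d.]+)", nvidia_smi_output)
    return {
        "nvcc_cuda": nvcc_cuda,
        "driver": driver,
        "driver_cuda": driver_cuda,
        "match": nvcc_cuda is not None and nvcc_cuda == driver_cuda,
    }

# Example: compare_cuda_versions(output_of_nvcc_version, output_of_nvidia_smi)
```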

Unfortunately, I have run into a case where SoftGym does not seem to work:

- Ubuntu 16.04, CUDA 10.0, NVIDIA 440.33.01, no Docker at all. The only difference from a working setting above is that it’s CUDA 10.0 instead of 9.0. This setting results in:

```
Waiting to generate environment variations. May take 1 minute for each variation...
*** stack smashing detected ***: python terminated
Aborted (core dumped)
```

I have yet to figure out how to fix this. If you’ve found a fix, it would be nice to inform the code maintainers.