My Blog Posts, in Reverse Chronological Order

subscribe via RSS or by signing up with your email here.

Batch Constrained Deep Reinforcement Learning

An interesting paper that I am reading is Off-Policy Deep Reinforcement Learning without Exploration. You can find the latest version on arXiv, where it clearly appears to be under review for ICML 2019. An earlier version was under review at ICLR 2019 under the earlier title Where Off-Policy Deep Reinforcement Learning Fails. I like the research contribution of the paper, as it falls in line with recent work on how to make deep reinforcement learning slightly more practical. In this case, “practical” refers to how we have a batch of data, from perhaps a simulator or an expert, and we want to train an agent to learn from it without exploration, which would do wonders for safety and sample efficiency.

As is clear from the abstract, the paper introduces the batch-constrained RL algorithm:

We introduce a novel class of off-policy algorithms, batch-constrained reinforcement learning, which restricts the action space in order to force the agent towards behaving close to on-policy with respect to a subset of the given data.

This is clear. We want the set of states the agent experiences to be similar to the set of states from the batch, which might be from an expert (for example). This reminded me of the DART paper (expanded in a BAIR Blog post) that the AUTOLAB developed:

- DART is about applying noise to expert states, so that behavior cloning can see a “wider” distribution of states. This was an imitation learning paper, but the general theme of increasing the variety of states seen has appeared in past reinforcement learning research.

- This paper, though, is about restricting the actions so that the states the agent sees match those of the expert’s by virtue of taking similar actions.

Many of the most successful modern (i.e., “deep”) off-policy algorithms use some variant of experience replay, but the authors claim that this only works when the data in the buffer is correlated with the data induced by the current agent’s policy. This does not work if there is what the authors define as extrapolation error, which is when there is a mismatch between the two datasets. Yes, I agree. Though experience replay is actually designed to break correlation among samples, the most recent information is put into the buffer, bumping older stuff out. By definition, that means some of the data in the experience replay is correlated with the agent’s policy.

But more generally, we might have a batch of data where nothing came from the current agent’s policy. The more I think about it, the more an action restriction makes sense. With function approximation, unseen state-action pairs $(s,a)$ might be more or less attractive than seen pairs. But, aren’t there more ways to be bad than there are to be good? That is, it’s easy to get terrible reward in environments, but harder to get the highest reward, which one can verify by mathematically assigning the probabilities of each random sequence of actions. This paper is about restricting the actions so that we keep funneling the agent towards the high-quality states in the batch.

To be clear, here’s what “batch reinforcement learning” means, and its advantages:

Batch reinforcement learning, the task of learning from a fixed dataset without further interactions with the environment, is a crucial requirement for scaling reinforcement learning to tasks where the data collection procedure is costly, risky, or time-consuming.

You can also view this through the lens of imitation learning, because the simplest form, behavior cloning, does not require environment interaction.1 Furthermore, one of the fundamental aspects of reinforcement learning is precisely environment interaction! Indeed, this paper benchmarks with behavior cloning, and freely says that “Our algorithm offers a unified view on imitation and off-policy learning.”2

Let’s move on to the technical and algorithmic contribution, because I’m rambling too much. Their first foray is to try and redefine the Bellman operator in finite, discrete MDPs in the context of reducing extrapolation error so that the induced policy will visit the state-action pairs that more closely correspond with the distribution of state-action pairs from the batch.

A summary of the paper’s theory is that batch-constrained learning still converges to an optimal policy for deterministic MDPs. Much of the theory involves redefining or inducing a new MDP based on the batch, and then deferring to standard Q-learning theory. I wish I had time to go through some of those older classical papers, such as this one.

For example, the paper claims that normal Q-learning on the batch of data will result in an optimal value function for an alternative MDP, $M_{\mathcal{B}}$, based on the batch $\mathcal{B}$. A related and important definition is the tabular extraploation error $\epsilon_{\rm MDP}$, defined as discrepancy between the value function computed with the batch versus the value function computed with the true MDP $M$:

\[\epsilon_{\rm MDP}(s,a) = Q^\pi(s,a) - Q_{\mathcal{B}}^\pi(s,a)\]This can be computed recursively using a Bellman-like equation (see the paper for details), but it’s easier to write as:

\[\epsilon_{\rm MDP}^\pi = \sum_{s} \mu_\pi(s) \sum_a \pi(a|s) |\epsilon_{\rm MDP}(s,a)|\]By using the above, they are able to derive a new algorithm: Batch-Constrained Q-learning (BCQL) which restricts the possible actions to be in the batch:

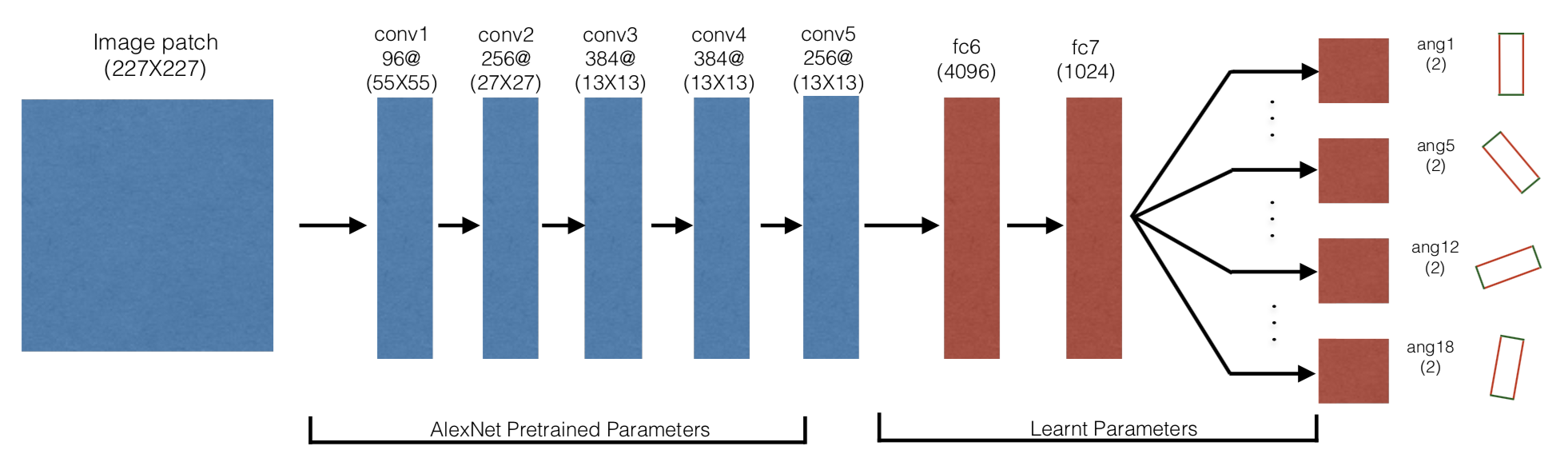

\[Q(s,a) \leftarrow (1 - \alpha ) Q(s,a) + \alpha\left( r + \gamma \left\{ \max_{a' \;{\rm s.t.}\; (s',a') \in \mathcal{B}} Q(s',a') \right\} \right)\]Next, let’s introduce their practical algorithm for high-dimensional, continuous control: Batch-Constrained deep Q-learning (BCQ). It utilizes four parameterized networks.

-

A Generative model $G_\omega(s)$ which, given the state as input, produces an action. Using a generative model this way assumes we pick actions using:

\[\operatorname*{argmax}_{a} \;\; P_{\mathcal{B}}^G(a|s)\]or in other words, the most likely action given the state, with respect to the data in the batch. This is difficult to model in high dimensional continuous control environments, so they approximate it with a variational autoencoder. This is trained along with the policy parameters during each for loop iteration.

-

A Perturbation model $\xi_\phi(s,a,\Phi)$ which aims to “optimally perturb” the actions, so that they don’t need to sample too much from $G_\omega(s)$. The perturbation applies noise in $[-\Phi,\Phi]$. It is updated via a deterministic policy gradient rule:

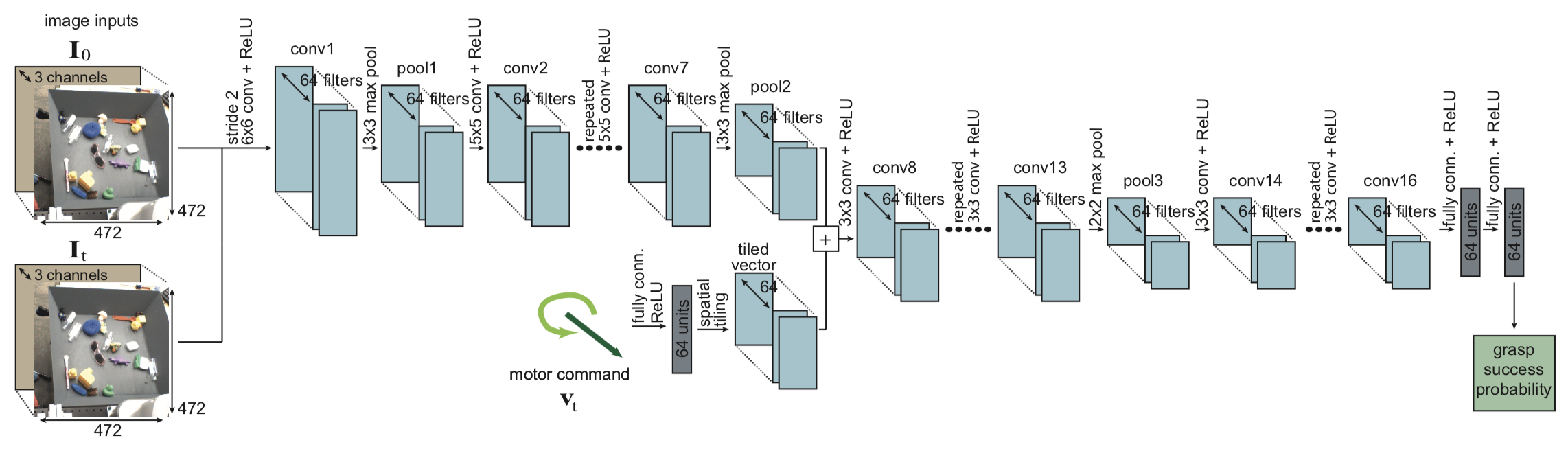

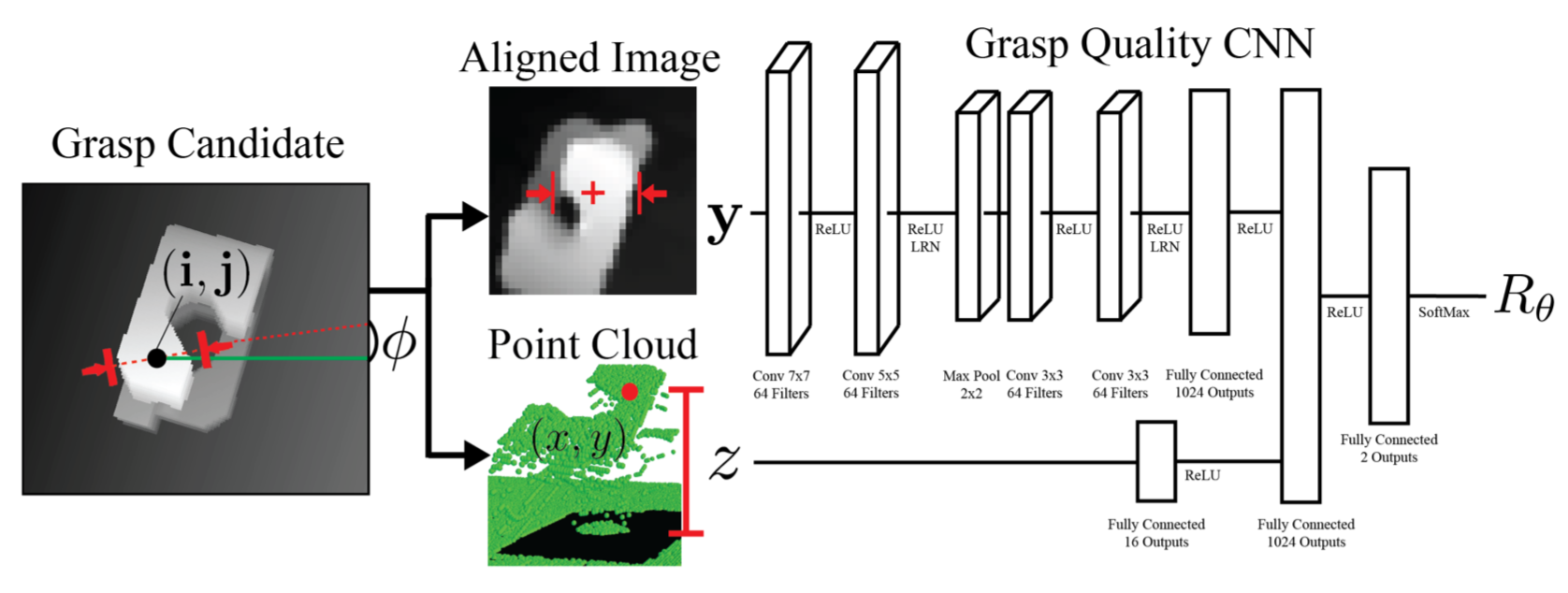

\[\phi \leftarrow \operatorname*{argmax}_\phi \;\; \sum_{(s,a) \in \mathcal{B}} Q_\theta\Big( s, a+\xi_\phi(s,a,\Phi)\Big)\]The above is a maximization problem over a sum of Q-function terms. The Q-function is differentiable as we parameterize it with a deep neural network, and stochastic gradient descent methods will work with stochastic inputs. I wonder, is the perturbation model overkill? Is it possible to do a cross entropy method, like what two of these papers do for robotic grasping?

-

Two Q-networks $Q_{\theta_1}(s,a)$ and $Q_{\theta_2}(s,a)$, to help push their policy to select actions that lead to “more certain data.” This is based on their ICML paper last year, which proposed the (now popular!) Twin-Delayed DDPG (TD3) algorithm. OpenAI’s SpinningUp has a helpful overview of TD3.

All networks other than the generative model also consist of target networks, following standard DDPG practices.

All together, their algorithm uses this policy:

\[\pi(s) = \operatorname*{argmax}_{a_i+\xi_\phi(s,a_i,\Phi)} \;\; Q_\theta\Big(s, a_i+\xi_\phi(s,a_i,\Phi)\Big) \quad \quad \{a_i \sim G_\omega(s) \}_{i=1}^n\]To be clear, they approximate this maximization by sampling $n$ actions each time step, and picking the best one. The perturbation model, as stated earlier, increases the diversity of the sampled actions. Once again, it would be nice to confirm that this is necessary, such as via an experiment that shows the VAE collapses to a mode. (I don’t see justification in the paper or the appendix.)

There is a useful interpretation of how this algorithm is a continuum between behavior cloning (if $n=1$ and $\Phi=0$) and Q-learning ($n\to \infty$ and $\Phi \to a_{\rm max}-a_{\rm min}$).

All right, that was their theory and algorithm — now let’s discuss the experiments. They test with DDPG under several different conditions. They assume that there is a “behavioral DDPG” agent which generates the batch of data, for which an “off-policy DDPG” agent learns from, without exploration. Their goal is to improve the learning of the “off-policy DDPG.” (Don’t get confused with the actor-critic framework of normal DDPG … just think of the behavioral DDPG as the thing that generates the batch in “batch-constrained RL.”)

-

Final Buffer. They train the behavioral DDPG agent from scratch for 1 million steps, adding more noise than usual for extra exploration. Then all of its experience is pooled inside an experience replay. That’s the “batch”. Then, they use it to train the off-policy DDPG agent. That off-policy agent does not interact with the environment — it just draws samples from the buffer. Note that this will result in widespread state coverage, including potentially the early states when the behavioral agent was performing poorly.

-

Concurrent. This time, as the behavioral DDPG agent learns, the off-policy one learns as well, using data from the behavioral agent. Moreover, the original behavioral DDPG agent is also learning from the same data, so both agents learn from identical datsets. To be clear: this means the agents have almost identical training settings. The only differences I can think of are: (a) noise in initial parameters and (b) noise in minibatch sampling. Is that it?

-

Imitation. After training the behavioral DDPG agent, they run it for 1 million steps. Those experiences are added to the buffer, from which the off-policy DDPG agent learns. Thus, this is basically the imitation learning setting.

-

Imperfect Demonstrations. This is the same as the “imitation” case, except some noise is added to the data, through Gaussian noise on the states and randomness in action selection. Thus, it’s like adding more coverage to an expert data.

The experiments use … MuJoCo. Argh, we’re still using it as a benchmark. They test with HalfCheetah-v1, Hopper-v1, and Walker2d-v1. Ideally there would be more, at least in the main part of the paper. The Appendix has some limited Pendulum-v0 and Reacher-v1 results. I wonder if they tried on Humanoid-v1.

They actually performed some initial experiments before presenting the theory, which justifies the need to correct for extrapolation error. The most surprising fact there was that the off-policy DDPG agent failed to match the behavioral agent even in the concurrent learning paradigm. That’s surprising!

This was what motivated their Batch-Constrained deep Q-learning (BCQ) algorithm, discussed above.

As for their results, I am a little confused after reading Figure 2. They say that:

Only BCQ matches or outperforms the performance of the behavioral policy in all tasks.

Being color-blind, the BCQ and VAE-BC colors look indistinguishable to me. (And the same goes for the DQN and DDPG baselines, which look like they are orange and orange, respectively.) I wish there was better color contrast, perhaps with light purple and dark blue for the former, and yellow and red for the latter. Oh well. I assume that their BCQ curve is the highest one on the rewards plot … but this means it’s not that much better than the baselines on Hopper-v1 except for the imperfect demonstrations task. Furthermore, the shaded area is only half of a standard deviation, rather than one. Finally, in the imitation task, simple behavior cloning was better. So, it’s hard to tell if these are truly statistically significant results.

While I wish the results were more convincing, I still buy the rationale of their algorithm. I believe it is a valuable contribution to the research community.

-

More advanced forms of imitation learning might require substantial environment interaction, such as Generative Adversarial Imitation Learning. (My blog post about that paper is here.) ↩

-

One of the ICLR reviewers brought up that this is more of an imitation learning algorithm than it is a reinforcement learning one … ↩

Deep Learning and Importance Sampling Review

This semester, I am a Graduate Student Instructor for Berkeley’s Deep Learning class, now numbered CS 182/282A. I was last a GSI in fall 2016 for the same course, so I hope my teaching skills are not rusty. At least I am a GSI from the start, and not an “emergency appointment” like I was in fall 2016. I view my goal as helping Professor Canny stuff as much Deep Learning knowledge into the students as possible so that they can use the technology to be confident, go forth, and change the world!

All right, that was cheesy, and admittedly there is a bit too much hype. Nonetheless, Deep Learning has been a critical tool in a variety of my past and current research projects, so my investment in learning the technology over the last few years has paid off. I have read nearly the entire Deep Learning textbook, but for good measure, I want to officially finish digesting everything from the book. Thus, (most of) my next few blog posts will be technical, math-oriented posts that chronicle my final journey through the book. In addition, I will bring up related subjects that aren’t explicitly covered in the book, including possibly some research paper summaries.

Let’s start with a review of Chapter 17. It’s about Monte Carlo sampling, the general idea of using samples to approximate some value of interest. This is an extremely important paradigm, because in many cases sampling is the best (or even only) option we have. A common way that sampling arises in Deep Learning is when we use minibatches to approximate a full-data gradient. And even for that, the full data gradient is really one giant minibatch, as Goodfellow nicely pointed out on Quora.

More formally, assume we have some discrete, vector-valued random variable $\bf{x}$ and we want the following expectation:

\[s = \sum_{x} p(x)f(x) = \mathbb{E}_p[f(\bf{x})]\]where $x$ indicates the possible values (or “instantiations” or “realizations” or … you get the idea) of random variable $\bf{x}$. The expectation $\mathbb{E}$ is taken “under the distribution $p$” in my notation, where $p$ must clearly satisfy the definition of being a (discrete) probability distribution. This just means that $\bf{x}$ is sampled based on $p$.

This formulation is broad, and I like thinking in terms of examples. Let’s turn to reinforcement learning. The goal is to find some parameter $\theta^* \in \Theta$ that maximizes the objective function

\[J(\theta) = \mathbb{E}_{\tau \sim \pi_\theta(\tau)}[R(\tau)]\]where $\tau$ is a trajectory induced by the agent’s policy $\pi_\theta$; that probability is $\pi_\theta(\tau) = p(s_1,a_1,\ldots,s_T,a_T)$, and $R(\tau) = \sum_{t=1}^T R(s_t,a_t)$. Here, the objective plays the role of $\mathbb{E}_p[f(\bf{x})]$ from earlier with the trajectory $\tau$ as the vector-valued random variable.

But how would we exactly compute $J(\theta)$? The process would require us to explicitly enumerate all possible trajectories that could possibly arise from the environment emulator, and then weigh them all accordingly by their (log) probabilities, and compute the expectation from that. The number of trajectories is super-exponential, and this computation would be needed for every gradient update we need to perform on $\theta$, since the distribution of trajectories directly depends on $\pi_\theta(\tau)$.

You can see why sampling is critical for us to make any headway.

(For background on this material, please consult my older post on policy gradients, and an even older post on the basics of Markov Decision Processes.)

The solution is to take a small set of samples \(\{x^{(1)}, \ldots, x^{(n)}\}\) from the distribution of interest, to obtain our estimator

\[\hat{s}_n = \frac{1}{n} \sum_{i=1}^n f(x^{(i)})\]which is unbiased:

\[\mathbb{E}[\hat{s}_n] = \frac{1}{n}\sum_{i=1}^n \mathbb{E}[x^{(i)}] = \frac{1}{n}\sum_{i=1}^ns = s\]and converges almost surely to the expected value, so long as several mild assumptions are met regarding the samples.

Now consider importance sampling. As the book nicely points out, when using $p(x)f(x)$ to compute the expectation, the decomposition does not have to be uniquely set at $p(x)$ and $f(x)$. Why? We can introduce a third function $q$:

\[p(x)f(x) = q(x)\frac{p(x)f(x)}{q(x)}\]and we can sample from $q$ and average $\frac{pf}{q}$ and get our importance sampling estimator:

\[\hat{s}_p = \frac{1}{n} \sum_{i=1,\bf{x}^{(i)}\sim p}^n f(x^{(i)}) \quad \Longrightarrow \quad \hat{s}_q = \frac{1}{n} \sum_{i=1,\bf{x}^{(i)}\sim q}^n \frac{p(x^{(i)})f(x^{(i)})}{q(x^{(i)})}\]which was sampled from $q$. (The $\hat{s}_p$ is the same as $\hat{s}_n$ from earlier.) In importance sampling lingo, $q$ is often called the proposal distribution.

Think about what just happened. We are still computing the same quantity or sample estimator, and under expectation we still get $\mathbb{E}_q[\hat{s}_q] = s$. But we used a different distribution to get our actual samples. The whole $\bf{x}^{(i)}\sim p$ or $\bf{x}^{(i)}\sim q$ notation is used to control the set of samples that we get for approximating the expectation.

We employ this technique primarily to (a) sample from “more interesting regions” and (b) to reduce variance. For (a), this is often motivated by referring to some setup as follows:

We want to use Monte Carlo to compute $\mu = \mathbb{E}[X]$. There is an event $E$ such that $P(E)$ is small but $X$ is small outside of $E$. When we run the usual Monte Carlo algorithm the vast majority of our samples of $X$ will be outside $E$. But outside of $E$, $X$ is close to zero. Only rarely will we get a sample in $E$ where $X$ is not small.

where I’ve quoted this reference. I like this intuition – we need to find the more interesting regions via “overweighting” the sampling distribution there, and then we adjust the probability accordingly for our actual Monte Carlo estimate.

For (b), given two unbiased estimators, all other things being equal, the better one is the one with lower variance. The variance of $\hat{s}_q$ is

\[{\rm Var}(\hat{s}_q) = \frac{1}{n}{\rm Var} \left(\frac{p(\bf{x}) f(\bf{x})}{q(\bf{x})}\right)\]The optimal choice inducing minimum variance is $q^*(x) \propto p(x)|f(x)|$ but this is not usually attained in practice, so in some sense the task of importance sampling is to find a good sampling distribution $q$. For example, one heuristic that I’ve seen is to pick a $q$ that has “fatter tails”, so that we avoid cases where $q(x) \ll p(x)|f(x)|$, which causes the variance of $\frac{p(x)f(x)}{q(x)}$ to explode. (I’m using absolute values around $f(x)$ since $p(x) \ge 0$.) Though, since we are sampling from $q$, normally the case where $q(x)$ is very small shouldn’t happen, but anything can happen in high dimensions.

In a subsequent post, I will discuss importance sampling in the context of some deep learning applications.

I Will Make a Serious Run for Political Office by January 14, 2044

I have an official announcement. I am giving myself a 25-year deadline for making a serious run for political office. That means I must begin a major political campaign no later than January 14, 2044.

Obviously, I can’t make any guarantees about what the world will be like then. We know there are existential threats about which I worry. My health might suddenly take a nosedive due to an injury or if I somehow quit my addiction to salads and berries. But for the sake of this exercise, let’s assume away these (hopefully unlikely) cases.

People are inspired to run for political office for a variety of reasons. I have repeatedly been thinking about doing so, perhaps (as amazing as it sounds) even moreso than I think about existential threats. The tipping point for me making this declaration is our ridiculous government shutdown, now the longest in history.

This shutdown is unnecessary, counterproductive, and is weakening the United States of America. As many as 800,000 federal workers are furloughed or being forced to work without pay. On a more personal note, government cuts disrupt American science, a worrying sign given how China is investing vast sums of money in Artificial Intelligence and other sciences.

I do not know which offices I will target. It could be national or state-wide. Certain environments are far more challenging for political newcomers, such as those with powerful incumbents. But if I end up getting lucky, such as drawing a white supremacist like Steve King as my opponent … well, I’m sure I could position myself to win the respect of the relevant group of voters.

I also cannot state with certainty regarding my future political party affiliation. I am a terrible fit for the modern-day GOP, and an awkward one for the current Democratic party. But, a lot can change in 25 years.

To avoid distracting myself from more pressing circumstances, I will not discuss this in future blog posts. My primary focus is on getting more research done; I currently have about 20 drafts of technical posts to plow through in the next few months.

But stay tuned for what the long-term future may hold.

What Keeps Me Up at Night

For most of my life, I have had difficulty sleeping, because my mind is constantly whirring about some topic, and I cannot shut it down. I ponder about many things. In recent months, what’s been keeping me up at night are existential threats to humanity. Two classic categories are nuclear warfare and climate change. A more recent one is artificial intelligence.

The threat of civilization-ending nuclear warfare has been on the minds of many thinkers since the days of World War II.

There are nine countries with nuclear weapons: the United States, Russia, United Kingdom, France, China, India, Pakistan, Israel, and North Korea.

The United States and Russia have, by far, the largest nuclear weapons stockpiles. The Israeli government deliberately remains ambiguous about its nuclear arsenal. Iran is close to obtaining nuclear weapons, and it is essential that this does not happen.

I am not afraid of Putin ordering nuclear attacks. I have consistently stated that Russia (essentially, that means Putin) is America’s biggest geopolitical foe. This is not the same as saying that they are the biggest existential threat to humanity. Putin may be an dictator who I would never want to live under, but he is not suicidal.

North Korea is a different matter. I have little faith in Kim Jong Un’s mental acuity. Unfortunately, his regime still shows no signs of collapse. America must work with China and persuade them that it is in the interest of both countries for China to end their support of the Kim regime.

What about terrorist groups? While white supremacists have, I think, killed more Americans in recent years than radical Islamists, I don’t think white supremacist groups are actively trying to obtain nuclear weapons more as they want a racially pure society to live in, which by necessity requires some land usable and fallout-free.

But Islamic State, and other cult-like terrorist groups, could launch suicide attacks by stealing nuclear weapons. Terrorist groups lack homegrown expertise to build and launch such weapons, but they may purchase, steal, bribe, or extort. It is imperative that our nuclear technicians and security guards are well-trained, appropriately compensated, and have no Edward Snowdens hidden among them. It would also be prudent to assist countries such as Pakistan so that they have stronger defenses of their nuclear weapons.

Despite all the things that could go wrong, we are still alive today with no nuclear warfare since World War II. I hope that cool heads continue to prevail among those in possession of nuclear weapons.

A good overview of the preceding issues can be found in Charles D. Ferguson’s book. There is also a nice op-ed by elder statesmen George Shultz, Henry Kissinger, William Perry, and Sam Nunn on a world without nuclear weapons.

Climate change is a second major existential threat.

The good news is that the worst-case predictions from our scientists (and, ahem, Al Gore) have not materialized. We are still alive today, and the climate, at least from my personal experience — which cannot be used as evidence against climate change since it’s one data point — is not notably different from years past. The increasing use of natural gas has substantially slowed down the rate of carbon emissions. Businesses are aiming to be more energy-efficient. Scientists continue to track worldwide temperatures and to make more accurate climate predictions aided by advanced computing hardware.

The bad news is that carbon emissions will continue to grow. As countries develop, they naturally require more energy for the higher-status symbols of civilization (more cars, more air travel, and so on). Their citizens will also want more meat, causing more methane emissions and further strains on our environment.

Moreover, the recent Artificial Intelligence and Blockchain developments are computationally-heavy, due to Deep Learning and mining (respectively). Artificial Intelligence researchers and miners therefore have a responsibility to be frugal about their energy usage.

It would be ideal if the United States could take the lead in fighting climate change in a sensible way without total economic shutdown, such as by applying the carbon tax plan proposed by former Secretary of State George Shultz and policy entrepreneur Ted Halstead. Unfortunately, we lack the willpower to do so, and the Republican party in recent years has placed lower priorities on climate change, with their top politician even once Tweeting the absurd and patently false claim that global warming was a “hoax invented by the Chinese to make American manufacturing less competitive.” That most scientists are Democrats can be attributed in large part because of attacks on climate change (and the theory of evolution, I’d add), not because they are anti-capitalism. I bet most of us recognize the benefits of a capitalistic society like I do.

While I worry about carbon and temperature, they are not the only things that matter. Climate change can cause more extreme weather, such as droughts which have plagued the Middle East, exacerbating the current refugee crisis and destabilizing governments throughout the world. Droughts are also stressing supplies in South Africa, and even America, as we have sadly seen in California.

A more recent existential threat pertains to artificial intelligence.

Two classes of threats I ponder are (a) autonomous weapons, and a broad category that I call (b) the risks of catastrophic misinformation. Both are compounding factors that contribute to nuclear warfare or a more drastic climate trend.

The danger of autonomous weapons has been widely explored in recent books, such as Army of None (on my TODO list) and in generic Artificial Intelligence books such as Life 3.0 (highly recommended!). There are a number of terrifying ways in which these weapons could wreak havoc among populations throughout the world.

For example, one could also think of autonomous weapons merging with biological terrorism, perhaps via a swarm of “killer bee robots” spreading a virus. Fortunately, as summarized by Steven Pinker in the existential threats chapter of Enlightenment Now, biological agents are actually ill-suited for widespread terrorism and pandemics in the modern era. But autonomous weapons could easily be used for purposes that we can’t even imagine now.

Autonomous weapons will be applied on specially designed hardware. These won’t be like the physical, humanoid robots that Toyota is developing for home robots, because robotic motion that mimics human-like motion is too slow and cumbersome to cause an existential threat. Recent AI advances have been primarily from software. Nowhere was this more apparent to me from AlphaGo, which astonished the world by defeating a top Go player … but a DeepMind employee, following AlphaGo’s instructions, placed the stones on the board. The irony is that something as “primitive” as finely placing stones on a game board is beyond the ability of current robots. This means that I do not consider situations where a robot must physically acquire resources with its own hardware to be an existential threat.

The second aspect of AI that I worry about is, as stated earlier, “catastrophic misinformation.” What do I mean by this? I refer to how AI might be trained to create material that can drastically mislead a group of people, which might cause them to be belligerent with others, hence increasing the chances of nuclear or widespread warfare.

Consider a more advanced form of AI that can generate images (and perhaps videos!) far more complex than those that the NVIDIA GAN can create. Even today, people have difficulty distinguishing between fake and real news, as noted in LikeWar. A future risk for humanity might involve a world-wide “PizzaGate” incident where misled leaders go at war with each other, provoked by AI-generated misinformation from a terrorist organization running open-source code.

Even if we could count on citizens to hold their leaders accountable, (a) some countries simply don’t have accountable leaders or knowledgeable citizens, and (b) even “educated” people can be silently nudged to support certain issues. North Korea has brainwashed their citizens to obey their leaders without question. China is moving beyond blocking “Tiananmen Square massacre”-like themes on the Internet; they can determine social credit scores, automatically tracked via phone apps and Big Data. China additionally has the technical know-how, hardware, and data, to utilize the latest AI advances.

Imagine what authoritarian leaders could do if they wanted to rouse support for some controversial issue … that they learned via fake-news AI. That succinctly summarizes my concerns.

Nuclear warfare, climate change, and artificial intelligence, are currently keeping me up at night.

How to be Better: 2019 and Earlier Resolutions

I have written New Year’s resolutions since 2014, and do post-mortems to evaluate my progress. All of my resolutions are in separate text documents in my laptop’s desktop, so I see them every morning.

In the past I’ve only blogged about the 2015 edition, where I briefly covered my resolutions for the year. That was four years ago, so how are things looking today?

The good news: I have maintained tracking New Year’s resolutions throughout the years, and have achieved many of my goals. Some resolutions are specific, such as “run a half marathon in under 1:45”, but others are vague, such as “run consistently on Tuesdays and Thursdays”, so I don’t keep track of the number of successes or failures. Instead, I jot down several “positive,” “neutral,” and “negative” conclusions at each year’s end.

Possibly because of my newfound goals and ambitions, my current resolutions are much longer than they were in 2015. My 2019 resolutions are split into six categories: (1) reading books, (2) blogging, (3) academics, education, and work, (4) physical fitness and health, (5) money and finances, and (6) miscellaneous. Each is further sub-divided as needed.

Probably the most notable change I’ve made since 2015 is my book reading habit, which has rapidly turned into my #1 non-academic activity. It’s the one I default to during my evenings, my vacations, my plane rides, and on Saturdays when I generally do not work in order to recharge and to preserve my sanity.

Ultimately, much of my future career/life will depend on how well I meet my goals under class (3) above, in the academics, education, and work category, At a high level, the goals here (which could be applied to my other categories, but I view them mostly under the lens of “work”) are:

-

Be Better At Minimizing Distractions. I am reasonably good at this, but there is still a wide chasm between where I’m at and my ideal state. I checked email way too often this past year, and need to cut that down.

-

Be Better At Reading Research Papers. Reading academic papers is hard. I have read many, as evident by my GitHub paper notes repository. But not all of those notes have reflected true understanding, and it’s easy to get bogged down into irrelevant details. I also need to be more skeptical of research papers, since no paper is perfect.

-

Be Better At Learning New Concepts. When learning new concepts (examples: reading a textbook, self-studying an online course, understanding a new code base), apply deliberate practice. It’s the best way to quickly get up to speed and rapidly attain the level of expertise I require.

I hope I make a leap in 2019. Feel free to contact me if you’ve had some good experiences or insights from forming your own New Year’s resolutions!

Books Read in 2018

[Warning: Long Read]

As I did in 2016 and then in 2017, I am reporting the list of books that I read this past year1 along with brief summaries and my colorful commentary. This year, I read 34 books, which is similar to the amount in past years (35 and 43, respectively). This page will have any future set of reading list posts.

Here are the categories:

- Business, Economics, and Technology (9 books)

- Biographies and Memoirs (9 books)

- Self-Improvement (6 books)

- History (3 books)

- Current Events (3 books)

- Miscellaneous (4 books)

All books are non-fiction, and I drafted the summaries written below as soon as I had finished reading each book.

As usual, I write the titles below in bold text, and the books that I especially enjoyed reading have double asterisks (**) surrounding the titles.

Group 1: Business, Economics, and Technology

I’m lumping these all together because the business/econ books that I read tend to be about “high tech” industries.

-

** Crossing the Chasm: Marketing and Selling High-Tech Products to Mainstream Customers ** is Geoffrey A. Moore’s famous book (published in 1991, revised 1999 and 2014) aimed as a guide to high-tech start-up firms. Moore argues that start-ups initially deal with an early set of customers – the “visionaries” – but in order to survive long-term, they must transition to a mainstream market with pragmatist and conservative customers with different expectations and purchasing practices. Moore treats the gap between early and mainstream customers a chasm that many high-tech companies fail to cross. This is a waste of potential, and hence this book is a guide on how to successfully enter the mainstream market, which is when the company ideally stabilizes and rakes in the profits. Moore describes the solution with an analogy with D-Day: “Our long-term goal is to enter and take control of a mainstream market (Western Europe) that is currently dominated by an entrenched competitor (the Axis). For our product to wrest the mainstream market from this competitor, we must assemble an invasion force comprising other products and companies (the Allies) […]”. This is cheesy, but I admit it helped my understanding of Moore’s arguments, and on the whole, the advice in this book seems accurate, at least as good as one can expect to get in the business world. One important caveat: Crossing the Chasm is aimed at B2B (Business to Business) companies and not B2C (Business to Consumer) companies, so it might be slightly harder intuitively interpret “normal” business activity in B2B-land. Despite my lack of business-related knowledge, however, this book was highly readable. I learned more about business jargon which should make it easier for me to discuss and debate relevant topics with friends and colleagues. I read this book after it was recommended by Andrew Ng.2 While I don’t have any plans to create a start-up, Ng has recently founded Deeplearning.ai and Landing.ai, and serves as chairman of Woebot. For Landing.ai, which seems to be his main “B2B company,” I will see if I can interpret the company’s actions in the context of Crossing the Chasm.

-

How Asia Works: Success and Failure in the World’s Most Dynamic Region is a 2013 book by Joe Studwell, who I would classify as a “business journalist” (there isn’t much information about him, but he has a blog). Studwell attempts to identify why certain Asian countries have succeeded economically and technologically (Japan, Taiwan, South Korea, and now China, which takes up a chapter all on its own) while others have not (Thailand, Indonesia, Malaysia, and the Philippines). Studwell’s argument is split into three parts. The first is agriculture: successful states promote agriculture for farms instead of “efficient” large-scale businesses, since low-income countries have lots of low-skill laborers who can be effective farmers.3 The second step is to focus on manufacturing with the state providing political and economic support to get small companies to develop export discipline (something he brings up A LOT). The third part is on finance: governments need to support agriculture and manufacturing as discussed earlier, rather than lavish money towards real estate. The fourth chapter is about China. (In the first three chapters, he has five “journey” tales: Japan, Philippines, South Korea, Malaysia, and Indonesia. These were really interesting!) There are several broader takeaways. First, he repeatedly makes the case for more government intervention when the country is just developing, and not deregulation as the World Bank and United States keep saying. Of course, later deregulation is critical, but don’t do it too early! Studwell repeatedly criticizes economists who don’t understand history. But I doubt lots of government intervention is helpful. What about the famines in China and ethnic wars that were entirely due to government policy? To be fair, I agree that if governments follow his recipe, then countries are likely to succeed, but the recipe — though easy to describe — is astoundingly hard to achieve in practice due to a variety of reasons. The book is a bit dry and I wish some content had been cut off since it still wasn’t clear to me what happened to make agriculture so important in Japan and other countries, but I had to spend lots of time interrupting my reading to look up online about facts about Asia that I didn’t know. I wholeheartedly agree with Bill Gates’ final words: “How Asia Works is not a gripping page-turner aimed at general audiences, but it’s a good read for anyone who wants to understand what actually determines whether a developing economy will succeed. Studwell’s formula is refreshingly clear—even if it’s very difficult to execute.” Whatever my disagreements with Studwell, we can all agree that it is easy to fail and hard to succeed.

-

Blockchain Revolution: How the Technology Behind Bitcoin and Cryptocurrencies is Changing the World (2016, later updated in 2018) by father-son author team Don Tapscott and Alex Tapscott, describes how the blockchain technology will change the world. To be clear, blockchain already has done that (to some extent), but the book is mostly about the future and its potential. The technology behind blockchain, which has enabled bitcoin, was famously introduced in 2008 by Satoshi Nakamoto, whose true identity remains unknown. Blockchain Revolution gives an overview of Nakamoto’s idea, and then spends most of its ink describing problems that could be solved or ameliorated with blockchain, such as excess centralization of power, suppression of citizens under authoritarian governments, inefficiencies in payment systems, and so forth. This isn’t the book’s main emphasis, but I am particularly intrigued by the potential for combining blockchain technology with artificial intelligence; the Tapscotts are optimistic about automating things with smart devices. I still have lots of questions about blockchain, and to better understand it, I will likely have to implement a simplified form of it myself. That being said, despite the book’s optimism, I remain concerned for a few reasons. The first is that I’m worried about all the energy that we need for mining — isn’t that going to counter any efficiency gains from blockchain technology (e.g., due to smart energy grids)? Second, will this be too complex for ordinary citizens to understand and benefit, leaving the rich to get the fruits? Third, are we really sure that blockchain will help protect citizens from authoritarian governments, and that there aren’t any unanticipated drawbacks? I remain cautiously optimistic. The book is great at trying to match the science fiction potential with reality, but still, I worry that the expectations for blockchain are too high.

-

** The Industries of the Future ** is an engaging, informative book written by Alec Ross in 2016. Ross’ job description nowadays mostly consists of being an advisor to someone: advisor to Secretary of State Hillary Clinton, and to various other CEOs and organizations, and he’s a visiting scholar and an author (with this book!). It’s a bit unclear to me how one arrives at that kind of “advisor” position,4 but his book shows to me that he knows his stuff. Born in West Virginia, he saw the decline of coal and how opportunities have dwindled for those who have fallen behind in the new, information, data, and tech-based economy. In this book, Ross’ goal is to predict what industries will be “hot” in the next 20 years (2016-2036). He discusses robotics and machine learning (yay!), genomics, cryptocurrency and other currency, code wars, and so on. Amazingly, he cites Ken Goldberg and the work the lab has done in surgical robotics, which is impressive! (I was circling the citations and endnotes with my pencil, grinning from ear-to-ear when reading the book.) Now, Ross’ predictions are not exactly bold. There are a lot of people saying the same thing about future industries — but that also means Ross is probably more likely to be right than wrong. Towards the end of the book, he discusses how to best prepare ourselves for the industries of the future. His main claim is that leaders cannot be a control freak. (He also mentions the need to have women be involved in the industries of the future.) People such as Vladimir Putin and other leaders that want control will fall behind in such a world, so instead of “capitalism vs communism” of the 20th century, we have “open vs closed” of the 21st century. Of course, this happens on a spectrum. Some countries are closed politically but open economically (China is the ultimate case) and some are open politically (in the sense of democracy, etc.) but closed economically (India).5 Unfortunately I think he underestimated how much authoritarian leaders can retain control over their citizens and steal technologies (see LikeWar below). While his book is about predictions, not about policy solutions, Ross ran for Governor of Maryland in the Democratic primaries. Unfortunately, he got clobbered in the primaries, finishing 7th out of 9th. Well, we know by now that the most qualified candidate doesn’t always get the job…

-

** Driverless: Intelligent Cars and the Road Ahead ** is an academic book by Hod Lipson, Professor of Mechanical Engineering at Columbia, and Melba Kurman, a tech writer. I have always heard news about self-driving cars — I mean, look at my BARS 2018 experience — but never got around to understanding how they are actually retrofitted. Hence, why I read this book. It provides a decent overview of the history on self-driving cars, from the early, promising (but overly optimistic) 1950s era, to today, when they are now becoming more of a reality due to deep learning and other supporting technologies. The authors are advocates of self-driving cars, and for fully automatic cars (like with Google is trying to do), and not a gradual change from human-to-automatic (like what car manufacturers would like). They make a compelling case: if we try to develop self-driving cars by gradually transitioning to automation but keeping the human in the loop, it won’t work. Humans can’t suddenly jerk back to attention and take over when needed. It’s different in airfare, as David Mindell describes in Our Robots, Ourselves: Robotics and the Myths of Autonomy where there can be a sufficient mix of human and autonomy. Flying a plane is, parodoxically, actually easier in some ways than driving to have human-in-the-loop automation.6 While this might sound bad for car manufacturers, the good news is that the tech companies with the software powering the self-driving cars will need to partner with one of the manufacturers for the hardware. Later, Driverless book discusses the history of competitions such as DARPA, which I’ve seen in prior books. What distinguishes Driverless from prior books I’ve read is that they describe how modern self-driving cars are retrofitted, which was new to me. And then, finally, they talk about Deep Learning. That’s the final piece in the puzzle, and what really excites me going forward.

-

** Platform Revolution: How Networked Markets Are Transforming the Economy and How to Make Them Work for You ** is a recent 2016 book co-authored by a three-team of Geoffrey G. Parker, Marshall W. Van Alstyne, and Sangeet Paul Choudary. The first two are professors (of (management) engineering and business, respectively) and the third is a well-regarded platform business insider. Platform Revolution describes how traditional “pipeline” businesses are either transforming into or rapidly being usurped by competitors following a platform business model. They define a platform as “A business based on enabling value-creating interactions between external producers and consumers” and emphasize the differences between that and pipeline businesses where the value chain starts from the firm designing a product and soliciting materials, to consumers purchasing it at the end. Understanding platforms for business people and others is of paramount importance for an obvious reason: platform businesses have revolutionized the economy by tapping into previously dormant sources of value and innovation. It helps that many of the examples in this book are familiar: Uber, Lyft, Amazon, Google, Facebook, LinkedIn, dating apps, and so forth, but I also learned about lesser-known businesses. For me, key insights included how best to design platforms to enable rapid growth, how to create high-quality interactions, the challenges of regulating them, and of course, how platforms make money. For example, I already knew that Facebook makes a ton of money due to advertisements (and not for user sign-ups, thankfully), but what about lesser-known platforms? I will strive to recall the concepts in Platform Revolution if (more likely when) I enter the world of business. I agree with Andrew McAfee’s (author of “Machine Platform Crowd”, see below) praise that “you can either read [Platform Revolution] or try to keep it out of the hands of your competitors – present and future. I think it’s an easy call.”

-

** Machine Platform Crowd: Harnessing our Digital Future ** is the most recent book jointly authored by Brynjolfsson and McAfee. It was published in 2017, and I was excited to read it after thoroughly enjoying their 2014 book The Second Machine Age. The title implies that it overlaps with the previous book, and it does: on platforms, the effect of two-sided markets, and how they are disrupting businesses. But there’s also two other core aspects: the machine and the crowd. In the former (my favorite part, for obvious reasons), they talk about how AI and machine learning have been able to overcome “Polyani’s Paradox”, discussing DeepMind’s AlphaGo – yay! Key insight: experts are often incorrect, and it’s best to leave many decisions to machines. The other part is the crowd, and how the core of many participants can do better than a smaller group of so-called experts. One of the more interesting aspects is the debate on Bitcoin as an alternative to cash/currency, and the underlying Blockchain structure to help enforce contracts. However, they say that companies are not going obsolete, in part because contracts can never fully specify everything in the possible world, so companies can claim to do anything that’s not specified there if they own an asset, etc. Brynjolfsson and McAfee argue that while the pace of today’s world is incredible, companies will still have a role to play, and so will people and management, since they help to provide a conducive environment or mission to get things done. Overall, these themes combine together to form a splendid presentation in, effectively, how to understand all three of these aspects (the machine, the platform, and the crowd) in the context of our world today. Sure, one can’t know everything from reading a book, but it gives a tremendous starting point, hence why I enjoyed it very much.

-

** Hit Refresh: The Quest to Rediscover Microsoft’s Soul and Imagine a Better Future for Everyone ** is a recent 2017 book by Microsoft CEO Satya Nadella and co-authored with Greg Shaw and Jill Tracie Nichols. Nadella is the third CEO in Microsoft’s history, the others being Steve Ballmer and (of course) Bill Gates himself. (Hit Refresh is listed on Bill Gates’ book blog, which I should have anticipated like I expect the sun to rise tomorrow.) In this book, Nadella uses the analogy of “hitting refresh” to describe his approach to being a CEO: just as how hitting refresh changes a webpage on your computer but also preserves some of the existing internal structure, Nadella as CEO wanted to change some aspects of Microsoft but maintain what worked well. The main Microsoft-related takeaway I got was that, at the time Nadella took the reins, Microsoft was behind on the mobile and cloud computing markets, and had a somewhat questionable stance towards open source code. Fast forward just a few years later, and all of a sudden it’s like we’re seeing a new Microsoft, with its stock price tripled in just four years. Microsoft’s Azure cloud computing platform is now a respectable competitor to Amazon Web Services, and Microsoft’s acquisitions of Minecraft and – especially – GitHub show its commitment to engaging in the communities of gamers and open-source programmers. In the future, Nadella predicts that mixed reality, artificial intelligence, and quantum computing will be the key technologies going forward, and Microsoft should play a key role in ensuring that such developments benefit humanity. This book is also partly about Nadella’s background: how he went from Hyderabad, India, to Redmond, Microsoft, and I find the story inspiring, and wish I can replicate some of his success in my future, post-Berkeley career. Overall, Hit Refresh is a refreshing (pun intended) book to read, and I was happy to get an insider’s view of how Microsoft works, and Microsoft’s vision for the near future.

-

** Reinventing Capitalism in the Age of Big Data ** is a 2018 book by Oxford professor Viktor Mayer-Schönberger and writer Thomas Ramge, that describes their view of how capitalism works today. In particular, they focus on comparing markets versus firms in a manner similar to books such as Platform Revolution (see my comments above), but with perhaps an increased discussion over the role of prices. Historically, humans lacked all the data we have today, and condensing everything about an item for purchase in a single quantity made sense for the sake of efficiency. Things have changed in today’s Big Data world, where data can better connect producers and consumers. In the past, a firm could control data and coordinate efforts, but this advantage has declined over time, causing the authors to argue that markets are making a “comeback” against the firm, while the decline of the firm means we need to rethink our approaches towards employment since stable jobs are less likely. Reinventing Capitalism doesn’t discuss much about policies to pursue, but one that I remember they suggested is a data tax (or any “data-sharing mandate” for that matter) to help level the playing field, where data effectively plays the role of money from earlier, or fuel in the case of Artificial Intelligence applications. Obviously, this won’t be happening any time soon (and especially not with the Republican party in control of our government) but it’s certainly thought-provoking to consider what the future might bring. I feel that, like a Universal Basic Income (UBI), a data tax is inevitable, but will come too late for most of its benefits to kick in due to delays in government implementation. It’s an interesting book, and I would recommend it along with the other business-related books I’ve read here. For another perspective, see David Leonhardt’s favorable review in The New York Times.

Group 2: Biographies and Memoirs

This is rapidly becoming a popular genre within nonfiction for me, because I like knowing more about accomplished people who I admire. It helps drive me to become a better person.

-

** Lee Kuan Yew: The Grand Master’s Insights on China, the United States, and the World ** is a book consisting of a series of quotes from Lee Kuan Yew (LKY), either through his writing or interviews throughout his long life. LKY was the first Prime Minister of Singapore from 1959 to 1990, and the transformation was nothing short of astonishing: taking a third-world country to a first-world one with skyscrapers and massive wealth, all despite being literally the size of a single city! Written two years before LKY’s death in 2015, this book covers a wide range of topics: the future of the United States and China, the future of Radical Islam, the future of globalization, how leadership and democracy works, and so on. Former Secretary of State Henry Kissinger wrote the introduction to this book, marveling at LKY’s knowledge. The impression I get from this book is that LKY simply is the definition of competent. Many books and articles I read about economics, democracy, and nation-building cite Singapore as a case where an unusually competent government can bring a nation from the third world to the first in a single generation.7 Part of the reason why I like the book is that LKY shares many of the insights I’ve worked out through my own extensive reading over the last few years. He, like I would describe myself, considers himself a classical liberal who supports democracy, the free market, and a sufficient — but not overblown — welfare state to support the lower class who lose out on the free market. I also found many of his comments remarkably prescient. He was making comments in 2000 about the dangers of globalization and the gap between rural and urban residents, and other topics that became “household” ones after the 2016 US Presidential Election. He’s also right in that weaknesses of the US system include gridlock and an inability to control spending (despite the Republicans in power now). He additionally (and again, this was quite before 2016) commented that the strength of America is that it takes in a number of immigrants (unlike East Asian countries) but also that the US would face issues with the rise of minorities such as Hispanics. He describes himself as correct — not politically correct — though some of his comments could be taken as caustic. I admire that he describes his goal of governance as maximizing the collective good of the greatest amount of people, and that he doesn’t have a theory — he just wants to get things done and then he will do things that work, and he’ll “let others extract the principles from my successful solutions”, the “real life test.” Though he passed away in 2015, his legacy of competence continues to be felt in Singapore.

-

** Worthy Fights: A Memoir of Leadership in War and Peace ** is Leon Panetta’s memoir, co-written with Jim Newton. I didn’t know much about Panetta, but after reading this engaging story of his life, I’m incredibly amazed by his career and how Panetta has made the United States and the world better off. The memoir starts at his father’s immigration from Italy to the United States, and then discusses Panetta’s early career in Congress (first as an assistant to a Congressman, then as a Congressman himself), and then his time at the Office of Management and Budget, and then President Clinton’s Chief of Staff, and then (yes, there’s more!) Director of the CIA, and finally, President Obama’s Secretary of Defense. Wow — that’s a lot to absorb already, and I wish I could have a fraction of the success and impact that Panetta has had on the world. I appreciate Panetta for several reasons. First, he repeatedly argues for the importance of balancing budgets, something which I believe isn’t a priority for either political party; despite what some may say (especially in the Republican party), their actions suggest otherwise (let’s build a wall!!!). Panetta, though, actually helped to balance the federal budget. Second, I appreciated all the effort that he and the CIA did to find and kill Osama bin Laden — that was one of the best things to happen from the CIA over the last decade, and their efforts should be appreciated. The raid on Osama bin Laden’s fortress was the most thrilling part of the memoir by far, and I could not put the book down. Finally, and while this may just be me, I personally find Panetta to be just the kind of American that we need the most. His commitment to the country is evident by the words in the book, and I can only hope that we see more people like him — whether in politics or not — instead of the ones who try to run government shutdowns8 and deliberately provoke people for the sake of provocation. After Enlightenment Now (see below), this was my second favorite book of 2018.

-

** My Journey at the Nuclear Brink ** is William Perry’s story of his coming of age in the nuclear era. For those who don’t know him (admittedly, this included me before reading this book!) he served as the Secretary of Defense for President Clinton from February 1994 to January 1997. Before that he held an “undersecretary” position in government, and before that he was an aspiring entrepreneur and a mathematician, and earlier still, he was in the military. The book can be admittedly dry at times, but I still liked it and Perry recounts several occasions when he truly feared that the world would delve into nuclear warfare, most notably during the Cuban Missile Crisis. During the Cold War, as expected, Perry’s focus was on containing possible threats from the Soviet Union. Later, as Secretary of Defense, Perry was faced with a new challenge: the end of the Cold War meant that the Soviet Union dissolved into 15 countries, but this meant that nuclear weapons were spread out among different entities, heightening the risks. It is a shame that few people understand how essential Perry was (along with then-Georgia Senator Sam Nunn) in defusing this crisis by destroying or dis-assembling nuclear silos. It is also a shame that, as painfully recounted by Perry, Russia-U.S. relations have sunk to their lowest point since the high at 1996-1997 that Perry helped to facilitate. Relations sank in large part due to the expansion of NATO to include Eastern European countries. This was an important event discussed by Michael Mandelbaum in Mission Failure, and while Perry argued forcefully against NATO expansion, Clinton overrode his decision by listening to … Al Gore, of all people. Gaaah. In more recent years, Perry has teamed up with Sam Nunn, Henry Kissinger, and George Shultz to spread knowledge on the dangers of nuclear warfare. These four men aim to move towards a world without nuclear weapons. I can only hope that we achieve that ideal.

-

** The Art of Tough: Fearlessly Facing Politics and Life ** is Barbara Boxer’s memoir, published in 2016 near the end of her fourth (and last) term as U.S. Senator of California. Before that, she was in the House of Representatives for a decade. Earlier still, Boxer held some local positions while taking part in several other political campaigns. Before moving to California in 2014, I didn’t know about Barbara Boxer, so I learned more about her experiences in the previously mentioned positions; I got a picture of what it’s like to run a political campaign and then later to be a politician. The stories of the Senate are most riveting, since it’s a highly exclusive body that acts as a feeder for presidents. It’s also constantly under public scrutiny — a good thing! In the Senate, Boxer emphasizes the necessity of developing working relationships among colleagues (are you listening, Ted Cruz?). She also emphasizes the importance of being tough (hence the book’s title), particularly due to being one of the few women in the Senate. Another example of “being tough” is staking out a minority, unpopular political position, such as her vote against the Iraq war in 2002, which was the correct thing to do in hindsight. She concludes the memoir emphasizing that she didn’t retire because of hyper-partisanship, but rather because she thought she could be more effective outside the Senate and that California would produce worthy successors to her. Indeed, her successor Kamala Harris holds very similar political positions. The book was a quick, inspiring read, and I now want to devour more memoirs by famous politicians. My biggest complaint, by far, is that during the 1992 Senate election, Boxer described herself as “an asterisk in the polls” and said even as recently as a few months before the Democratic primary election, she was thinking of quitting. But then she won … without any explanation for how she overcame the other contestants. I mean, seriously? One more thing: truthfully, one reason why I read The Art of Tough was that I wanted to know how people actually get to the House of Representatives or the Senate. In Boxer’s case, her predecessor actually knew her and recommended that she run for his seat. Thus, it seems like I need to know more politically powerful people.

-

** Hillbilly Elegy: A Memoir of a Family and Culture in Crisis ** is a famous 2016 memoir by Venture Capitalist JD Vance. He grew up poor, in Jackson, Kentucky and Middletown, Ohio and describes poverty, alcoholicism, a missing Dad, and a drug-addicted Mom complete with a revolving door of male figures. He found hope with his grandparents (known as “Mamaw and Papaw”) whom he credits for helping him recover academically. Vance then spent several years in the Marines, because he admitted he wasn’t ready for higher education. But the years in the Marines taught him well, and he went to Ohio State University and then Yale Law School, where one of his professors, Amy Chua, would encourage him to write this book. Hillbilly Elegy is great; in the beginning, you have to reread a few of these names to understand the family tree, but once you do, you can get a picture of what life must have been like — and how even though Vance was able to break out of poverty, he still has traces of his past. For instance, he still sometimes storms away from his current wife (another Yale Law grad) since that’s what the men in his family would often do, and he still has to watch his mother who continues to cycle in and out of drug abuse. Today, Vance is a Venture Capitalist investing in the mid-west and other areas that he thinks have been neglected.9 Hillbilly Elegy became popular after the 2016 presidential election, because in many ways it encapsulated rural, mid-Western white America’s shift from Democratic to Republican. As expected, Vance is a conservative, but says he voted for a third party. But here’s what I don’t get. He often blames the welfare state, but then he also fully admits that many of the members of his party believe in myths such as Obama being a Muslim and so forth, and says politics cannot help them. Well then, what shall we do? I also disagree with him about the problem of declining church attendance. I would never do some of the things the men in his family would do, despite my lack of church attendance — it’s something other than church attendance that’s the problem. According to his Twitter feed, he considered running for a Senate seat in Ohio, but elected not to!10 For another piece on his views, see his great opinion piece in The New York Times about finding hope from Barack Obama.

-

** Alibaba: The House that Jack Ma Built ** is an engaging biography of Jack Ma by longtime friend Duncan Clark. Jack Ma is the co-founder and face of Alibaba, which has led to his net worth of about 35 billion today. Ma has an unusual background as an English teacher in China, with little tech knowledge (he often jokes about this), despite Alibaba being the biggest e-commerce thing in China. In Alibaba, Clark describes Ma’s upbringing from Zhejiang province in China, with pictures from his childhood. He then describes how the business-minded reforms of China allowed Ma to try his hand at entrepreneurship, and particularly at e-commerce due to the spread of the Internet in China. Alibaba wasn’t Ma’s first company — his first ended up not doing much, but like so many eventually successful businessmen, he learned and was able to co-found Alibaba, fighting off e-Bay in China and joining with Yahoo to skyrocket in wealth. Of course, Alibaba wasn’t a guaranteed success, and like many books about entrepreneurs, Clark goes over the difficulties Ma had, and times when things just seemed hopeless. But, here we are today, and Ma — despite sacrificing lots of equity to other employees and investors — somehow has billions of dollars. It is a rousing and inspiring success story for someone with an unusual background for entrepreneurship, which is one of the reasons (along with Jack Ma’s oral skill) why people find his story inspiring. Clark’s book was written in 2016, and a number of interesting things have happened since the book was published. First, John Canny has had this student and this student join Alibaba, who (as far as I know) apply Deep Learning techniques there. Second, Ma has stepped down (!!) from Alibaba and was revealed to be a member of the Communist party. Duncan Clark, the author of this book, was cited, quoting that this is a sign that the government may be exercising too much control. For the sake of business (and the usual human rights issues) in China, I hope that is not the case.

-

** Churchill and Orwell: The Fight for Freedom ** is a thrilling 2017 book by Thomas E. Ricks, a longtime reporter specializing in military and national security issues, and who writes the Foreign Policy blog Best Defense. Churchill and Orwell, provides a dual biography of these two Englishmen, first discussing them independently before weaving together their stories and then combining their legacies. By the end of the 20th Century, as the book correctly points out, both Churchill and Orwell would be considered as two of the most influential figures in protecting the rights and freedoms of people from intrusive state governments and outside adversaries. Churchill, obviously, was the Prime Minister of England during World War II and guided the country through blood and tears to victory versus the decidedly anti-freedom Nazi Germany. Orwell initially played a far lesser role in the fight for freedom, and was still an unknown quantity even during the 1940s as he was writing his two most influential works: Animal Farm and 1984. However, no one could ever have anticipated at the time of his death in 1950 (one year after publishing 1984) that those books would become two of the most wildly successful novels of all time11. As mentioned earlier, this book was published last year, but I think if Ricks had extra time, he would have mentioned Kellyanne Conway’s infamous “alternative facts” statement and how 1984 once again became a bestseller … decades after it was originally published. I’m grateful to Ricks for writing such an engaging book, but of course, I’m even more grateful for what Churchill and Orwell have done. Their legacies have a permanent spot in my heart.

-

** A Higher Loyalty: Truth, Lies, and Leadership ** is the famous 2018 memoir of James Comey, former FBI director and detested by Democrats and Republicans alike. I probably have a (pun intended) higher opinion of him than almost all “serious” Democrats and Republicans, given my sympathy towards people who work in intelligence and military jobs that are supposed to be non-political. I was interested in why Comey discussed Clinton’s emails they way he did, and also how he managed his interactions with Trump. Note that the Robert Mueller thing is largely classified, so there’s nothing in A Higher Loyalty about that, but his interactions with others at the highest levels of American politics is fascinating. Comey’s book, however, starts early, with a harrowing story about how Comey and his brother were robbed at gunpoint while in high school, an event which he would remember forever and which spurred him to join law enforcement. Among other great stories in the book (before the Clinton/Trump stuff) is when he threatened to resign as (deputy) Attorney General. That was when George Bush wanted to renew StellarWind, a program which would surge into public discourse upon Edward Snowden’s leaks. I knew about this, but Comey’s writing made this story thrilling: a race to try and protect a dying Attorney General’s approval to renew a law which Comey and other lawyers thought was completely indefensible. (It was criticized by WSJ writer Karl Rove as “melodramatic flair”). Regarding the Clinton emails, Comey did a good job explaining to me what needed to happen in order to prosecute Clinton, and I think the explanation he gave was fair. Now, about his renewal of the news 11 days before the election … Comey said either he could not say anything (and destroy the reputation of the FBI if the email investigation was found to continue) or say something (and get hammered now). One of the things that I’m most impressed about the book is Comey’s praise towards Obama, and oddly, Obama said he still thought highly of him at the end of 2016 when Comey was universally pilloried in the press. A Higher Loyalty is another book in my collection of those who have served in high levels of office (Leon Panetta, William Perry, Michael Hayden, Barbara Boxer, Sonia Sotomayor, etc.) so you can tell that there’s a trend here. The WSJ slammed him for being “more like Trump than he admits” but I personally can’t agree with that statement.

-

Faith: A Journey for All is one of former President James (“Jimmy”) Carter’s many books,12 this one published in 2018. I discussed it in this earlier blog post.

Group 3: Self-Improvement and Skills Development

I have long enjoyed reading these books because I want to use them to become a highly effective person who can change the world for the better.

-

** Stress Free For Good: 10 Scientifically Proven Life Skills for Health and Happiness ** is a well-known 2005 book13 co-authored by professors Fred Luskin and Kenneth R. Pelletier. The former is known for writing Forgive for Good and his research on forgiveness, while the latter is more on the medical side. In this book, they discuss two types of stress: Type I and Type II. Type I stress occurs when the stress source is easily identified and resolved, while Type II stress is (as you might guess) when the source cannot be easily resolved. Not all stress is bad — somewhat contradicting the title itself! – as humans clearly need stress and its associated responses if it is absolutely necessary for survival (e.g., running away from a murderer). But this is not the correct response for a chronic but non-lethal condition such as deteriorating familial relationships, challenging work environments, and so forth. Thus, Luskin and Pelletier go through 10 skills, each dedicated to its own chapter. Skills include the obvious, such as smiling, and the not-so-obvious, such as … belly-breathing?!? Yes, really. The authors argue that each skill is scientifically proven and back each with anecdotes from their patients. I enjoyed the anecdotes, but I wonder how much scientific evidence qualifies as “proven”. Stress Free For Good does not formally cite any papers, and instead concisely describes work done by groups of researchers. Certainly, I don’t think we need dozens of papers to tell us that smiling is helpful, but I think other chapters (e.g., belly breathing) need more evidence. Also, like most self-help books, it suffers from the medium of the written word. Most people will read passively, and likely forget about the skills. I probably will be one of them, even though I know I should practice these skills. The good news is, while I have lots of stress, it’s not the kind (at least right now, thankfully) that is enormously debilitating and wears me down. For those in worse positions than me, I can see this book being, if not a literal life saver, at least fundamentally useful.

-

** The Start-Up of You: Adapt to the Future, Invest in Yourself, and Transform Your Career ** is more about self-improvement rather than business. It’s a 2012 book by LinkedIn founder Reid Hoffman and entrepreneur Ben Casnocha. Regarding Hoffman, he left academia and joined the tech industry despite little tech background, starting at Apple for two years and then going to Fujitsu for product management. He founded an online dating company which didn’t work out, before experiencing success with PayPal’s team, and then of course, as the founder of the go-to social network for professionals, LinkedIn. And for Casnocha, I need to start reading his blog if I want to learn more about business. But anyway, this book is about how to improve yourself to better adapt to modern times, which (as we all know) is fast-paced and makes it less likely that one can hold one career for life. To drill this home, Hoffman and Casnocha start off by discussing Detroit and the auto industry. They criticize the “passion first, then job hunt” mantra a la Cal Newport — who applauds the book on his blog, though I’m guessing he wouldn’t like the social media aspects. Hoffman and Casnocha urge the reader to utilize LinkedIn, Facebook, and Twitter to network, of but course Hoffman wants us to utilize LinkedIn!! Less controversially (at least to me), the authors talk about having a Plan A, B, and Z (!!), and show examples of pivoting. For example, the Flickr team, Sheryl Sandberg, and Reid Hoffman ended up in wildly different areas than they would have expected. Things change and one cannot plan everything. In addition, they also suggest working on a team. I agree! Look at high-tech start-ups today. They are essentially all co-founded. In addition to anecdotes and high-level advice, Hoffman and Casnocha have some more specific suggestions that they list at the end of chapters. One explicitly tells the reader to reach out to five people who work in adjacent niches and ask for coffee. I’ve never been a fan of this kind of advice, but perhaps I should start trying at least something? What I can agree with is this: lifelong learning. Yes, I am a lifelong learner.

-

How to Invest Your Time Like Money is a brief 2015 essay by time coach Elizabeth Grace Saunders, and I found out about it by reading (no surprise here!) a blog post from Cal Newport. I bought this on my iBooks app while trying to pass the time at a long airport layover in Vancouver when I was returning from ICRA 2018. Like many similarly-themed books, she urges the reader to drop activities that aren’t high on the priority list and won’t have a huge impact (meetings!!), and to set aside sufficient time for relaxing and sleeping. The main distinction between this book and others in the genre is that Saunders tries to provide a full-blown weekly schedule to the reader, urging them to fill in the blanks with what their schedule will look like. The book also proffers formulaic techniques to figure out which activity should go where. This is the part that I’m not a fan of — I never like having to go that far in detail in my scheduling and I doubt the effectiveness of applying formulas to figure out my activities. I can usually reduce my work days to one or two critical things that I need to do, and block off huge amounts of flexible time blocks. A fixed, rigid schedule (as in, stop working on task A at 10:00am and switch to task B for two hours) rarely works for me, so I am not much of a fan of this book.

-

** Peak: Secrets from the New Science of Expertise ** is a 2016 book by Florida State University psychologist Anders Ericsson and science writer Robert Pool. Ericsson is well-known for his research on deliberate practice, a proven technique for rapidly improving one’s ability in some field,14 and this book presents his findings to educate the lay audience. Ericsson and Pool define deliberate practice as a special type of “purposeful practice” in which there are well-defined goals, immediate feedback, total focus, and where the practitioner is slightly outside his or her comfort zone (but not too much!). This starkly contrasts with the kind of ineffective practice where one repeats the same activity over and over again. Ericsson and Pool demonstrate how the principles of deliberate practice were derived not only from “the usual”15 fields of chess and music, but also from seemingly obscure tasks such as memorizing a string of numerical digits. They provide lessons on developing mental representations for deliberate practice. Ericsson and Pool critique Malcolm Gladwell’s famous “10,000-hour rule” and, while they agree that it is necessary to invest ginormous amounts of time to become an expert, that time must consist of deliberate practice rather than “ordinary” practice. A somewhat controversial topic that appears later is the notion of “natural talent.” Ericsson and Pool claim that it doesn’t exist except for height and body size for sports, and perhaps a few early advantages associated with IQ for mental tasks. They back their argument with evidence of how child prodigies (e.g., Mozart) actually invested lots of meaningful practice beforehand. And thus lies the paradox for me: I’m happy that there isn’t a “natural talent” for computer science and AI research, but I’m not happy that I got a substantially late start in developing my math, programming and AI skills compared to my peers. That being said, this book proves its worth as an advocate for deliberate practice and for its appropriate myth-busting. I will do my best to apply deliberate practice to my work and physical fitness.

-