My Blog Posts, in Reverse Chronological Order


International Symposium on Robotics Research (ISRR) 2019, Day 2 of 5

A view of Hanoi's buildings and restaurants at night, after the second day of ISRR 2019.

Before going to the conference room, I ate an amazing breakfast at the hotel’s buffet, which was on par with the breakfast from the Sydney hotel I was at for UAI 2017. I always face a dilemma for these cases as to when I should make yet another trip to get a new serving of fresh food. I voraciously ate the exotic fruits, such as dragon fruit and the super ripe, Vietnam-style mangoes, which are different from the mangoes I eat in Berkeley, California. Berries are the main fruits that I eat on a regular basis, but I put that on hold while I was here. I also picked up copies of an English-language newspaper about Vietnam, and would read those every morning during my stay.

After breakfast, I went to the main ISRR conference room at the hotel. I was 30 minutes early and among the first in the room, but that was because (a) I wanted to get a seating spot at the front, and (b) I needed to test my remote captioning system. I wanted to test the system with a person from Berkeley, where it was evening at the time. For this, I put a microphone at the table where the speakers would present, and set up my iPad to wirelessly connect to it. I next logged into a “meeting group” via an app on my iPad, and the captions would appear on a separate website URL on my iPad. After a few minutes, we agreed that it was ready.

Oussama Khatib, of Stanford University, started off the conference with a 30-minute talk about his research. I am aware of some of his work and was able to follow the slides reasonably well. The captioners immediately told me they had trouble with his accent. I was curious where Khatib was from, so I looked him up. He was raised in Aleppo, Syria, the city made famous by its recent destruction and warfare.

I see. A Stanford Professor was able to emerge from Aleppo in the 1950s and 1960s. I don’t know how this could happen today, and it’s sad when the government of Syria ruins opportunities for its own citizens to become renowned world leaders. It is completely unacceptable that Bashar al-Assad is still in power. I know this phrase has gotten politically unpalatable in some circles, but regime change must happen in Syria.

Some of the subsequent talks were easier for the captioners to understand. Unfortunately we ran into a few more technical issues (not counting the “accent” one), such as:

- WiFi that sometimes disconnected.

- Audio that was nearly inaudible, with lots of “coughs” and “people nearby” according to the captioners, even though at the time they told me this, the current speaker was a foot away from the microphone I had placed on the table at the front, and no one else was within 10 feet of the microphone, let alone coughing.

- Audio that seemed to have lots of feedback, before the captioners realized that they had to do something on their end to mute a microphone.

Technical difficulties are the main downside of remote captioning systems, and have happened every time I use remote captioning. I am not sure why there isn’t some kind of checklist for addressing common cases.

Anyway, ISRR 2019 has three main conference days, each of which consists of a series of 30-minute faculty talks and two sets of 10 talks corresponding to accepted research papers. (Each paper talk is just 5 minutes.) After each set of 10 talks, we had “interactive sessions,” which are similar to poster sessions. There were six of these sessions, so 6 times 10 means there were 60 papers total at ISRR 2019. It’s a lot smaller than ICRA!

ISRR also has a notable “bimodal” age distribution of its attendees. Most of the paper presenters were young graduate students, and most of the faculty were senior. There was a notable lack of younger faculty. Also, of the 100-150 attendees that were there, my guess is that the gender distribution was roughly 15% female, 85% male. The racial composition was probably 50% White, 40% Asian, and 10% “Other”.

I couldn’t get the remote captioning working on the interactive sessions (there was a “pin” I was supposed to use, but it was not turning on no matter what I tried), so I mostly walked around and observed the posters. I also ate a lot of the great food at the interactive sessions, including more dragon fruit. The lunch after that was similarly scrumptious. Naturally, it was a buffet. ISRR definitely doesn’t shy away from providing high quality food!

For talks, the highlight of the day was, as expected, Prof. Ken Goldberg’s keynote talk. Ken’s talk had a slightly different style from the other faculty talks; it wove together his interests in art, philosophy, agriculture, robotics, and AI ethics.

Our lab also presented a paper that day on area contact models for grasping; Michael Danielczuk presented this work. I don’t know too much about the technical details, unfortunately. It seems like the kind of paper that Ken Goldberg and John Canny might have collaborated on if they were graduate students.

The conference did not provide dinner that night, but fortunately, a group of about 24 students gathered at the hotel lobby, and someone found a Vietnamese restaurant that was able to accommodate all of us. Truth be told, I was too full from all the food the conference provided, so I just ordered a small pork spring roll dish. It was piping hot that night, and the restaurant did not have adequate air conditioning, so I was feeling the heat. After we ate, I went and wandered around the lake near the hotel, snapping pictures with my phone. I wanted to make the most of my experience here.

International Symposium on Robotics Research (ISRR) 2019, Travel and Day 1 of 5

A random 25-second video I took with my iPhone of the traffic in Hanoi, Vietnam (sound included).

I just attended the 2019 International Symposium on Robotics Research (ISRR) conference in Vietnam. It was a thrilling and eye-opening experience. I was there to present the robot bed-making paper, but I also wanted to make sure I got a taste of what Vietnam is like, given the once-in-a-lifetime opportunity. I will provide a series of blog posts which describe my experience at ISRR 2019, in a similar manner as I did for UAI 2017 and ICRA 2018.

There are no direct flights from San Francisco to Vietnam; most routes stop at one of the following cities: Seoul, Hong Kong, Taipei, or Singapore. I chose the Seoul route (technically, this means stopping at Incheon International Airport) due to cost and ideal timing. I was fortunate not to pick Hong Kong, given the current protests, and my presence there as a Westerner would definitely not ameliorate the situation.

I arrived in Incheon at 4:00AM and it was nearly deserted. After roaming around a bit to explore the airport, which is regarded as one of the best in the world, I found a food court to eat at, and ordered a beef stew dish. When I got it, there was a small side dish that looked like noodles, but had a weird taste. I asked the waitress about the food. She excused herself to bring a phone, which showed the English translation: squid.

Aha! I guess this is how I will start eating food that I would ordinarily not be brave enough to eat.

I used my Google Translate Pro app to tell her “Thank You”. I had already downloaded Google Translate and signed up for the 7 day free trial. That way, I could use the offline translation from English to Korean or English to Vietnamese.

I next realized that I could actually shower at Incheon for free, even as a lowly economy passenger. I showered, and then explored the “resting area” in the international terminal. This is an entire floor with a nap area, lots of desks and charging stations, some small museum-like exhibits, and a “SkyDeck” lounge that anyone (even in economy class) can attend. I should also note that passengers do not need to go through immigration at Incheon if connecting to another international flight. I remember having to go through immigration in Vancouver even though I was only stopping there to go to Brisbane. Keep that in mind in case you are using Incheon airport. It’s a true international hub.

I flew on Asiana Airlines, which is one of the two main airlines from South Korea, with the other being Korean Air. According to some Koreans I know, they are roughly equal in quality, but Korean Air is perhaps slightly better. All the flight attendants I spoke to were fluent in English, as that seems to be a requirement for the job.

As I began to board my flight to Hanoi, I looked through the vast windows of the terminal to see mountains and clouds. The scene looked peaceful. It’s hard to believe that just a few miles north lies North Korea, led by the person who I consider to be the worst modern leader today, Kim Jong Un.

I will never tire of telling people how much I disapprove of Kim Jong Un.

I finally arrived in Hanoi, Vietnam on Saturday October 5. I withdrew some Vietnamese Dong from an ATM, and spoke (in English) with a travel agent to book a taxi to my hotel. We were able to arrange the details for a full round trip. It cost 38 USD, which is a bargain compared to how much a similar driving distance would cost in the United States.

The first thing I noticed after starting the taxi ride was: Vietnam’s traffic!! There were motorcycles galore, brushing up just a few centimeters away from the taxi and other cars on the road. Both car drivers and motorcyclists seemed unfazed at driving so close to each other.

I asked the taxi driver how many years he has been driving. He initially appeared confused by my question, but then responded with: two.

Well, two is better than zero, right?

The taxi driver resumed driving to the hotel, whisking out his smart phone to make a few calls along the way. I also saw a few nearby motorcyclists looking at their smartphones. Uh oh.

And then there is the honking. Wow. By my own estimation, I have been on about 150 total Uber or Lyft rides in my life, and in that single taxi ride to the hotel in Hanoi, I experienced more honks than all those Uber or Lyft rides combined.

I thought, in an only half-joking sense, that if I were in Nguyễn Phú Trọng’s position, the first thing I would do is to strictly enforce traffic laws.

We survived the ride and arrived at the hotel: the Sofitel Legend Metropole, a 5-star luxury hotel with French roots. I was quickly greeted by a wonderful hostess who led me to my room. She spoke flawless English. Along the way, I asked her where Kim Jong Un and Donald Trump had met during their second (and unsuccessful) nuclear summit.

She pointed to the room that we had just walked by, saying that they met there and ate dinner.

I didn’t have much to do that day, as it was approaching late afternoon and I was tired from my travel, so I went to bed early. I generally prefer going to bed early on the first day, since it’s easy to sleep a few extra hours to adjust to a new time zone.

The following day, Sunday October 6, was officially the first day of the conference, but the only event was a welcome reception in the evening (at the hotel). Thus, I explored Hanoi for most of the day. And, apparently, I lucked out: despite the stifling heat, there was a parade and celebration happening in the streets. Some of it may have had to do with the timing of October 10, 2019 as the 65th anniversary of Vietnam’s liberation from French rule.

On the streets, only one local talked to me that day; a boy who looked about twelve years old asked “Do you speak English?” I said yes, but unfortunately the parade in the background meant it was too noisy for me to understand most of the words he was saying, so I politely declined to continue the conversation, and the boy left to find a person nearby who did not look Vietnamese. And there were a lot of us that day. Incidentally, walking across the streets was much easier than usual, because the police had blocked off the roads from traffic. Otherwise, we would have had a nightmare trying to navigate through a stream of incoming motorcyclists, most of whom do not slow down when they see a pedestrian in front of them.

After enough time in the heat, I cooled down by exploring an air-conditioned museum: the Vietnamese Women’s Museum. The museum described the traditional ways of family life in Vietnam, with the obligatory (historical) marriage and family rituals. It also honored Vietnamese women who served in the American War. We, of course, call this the Vietnam War.

I finally attended the Welcome Reception that evening. It was cocktail style, with mostly meat dishes. (Being a vegetarian in Asia — with the exception of India — is insanely difficult.) I spoke with the conference organizers that day, who seemed to already know me. Perhaps it was because Ken Goldberg had mentioned me, or perhaps because I had asked them about some conference details so that I could effectively use a remote captioning system that Berkeley would provide me, as I will discuss in the posts to come.

Two Projects, The Year's Plan, and BAIR Blog Posts

Yikes! It has been a while since being active on this blog. The reason for my posting delay is, as usual, research deadlines. As I comment here, I still have a blogging addiction, but I force myself to prioritize research when appropriate. In order to keep my monthly blogging streak alive, here are three relevant updates. First, I recently wrapped up and made public two research projects. Second, I have, hopefully, a rough agenda for what I aim to accomplish this year. Third, there are several new BAIR Blog posts that we should read.

The two research projects are:

- Deep Transfer Learning of Pick Points on Fabric for Robot Bed-Making, to appear at the International Symposium on Robotics Research (ISRR) 2019. We describe a system for robot bed-making on a quarter-scale bed. This is the rare academic project that I can actively advertise and describe to those outside of computer science academia, and it’s nice to see both academics and non-academics amused by robot bed-making.

- Deep Imitation Learning of Sequential Fabric Smoothing Policies. We introduce the problem of fabric smoothing from a highly rumpled and wrinkled configuration. We develop an in-house fabric simulator and generate lots of data for fabric smoothing in simulation. Then, we show how to transfer this to a physical da Vinci surgical robot. I encourage you to watch the videos to see what the robot can do.

The bed-making paper will be at ISRR 2019, October 6 to 10. In other words, it is happening very soon! It will be in Hanoi, Vietnam, which is exciting as I have never been there. The only Asian country I have visited before is Japan.

We recently submitted the other project, on fabric smoothing, to arXiv. Unfortunately, we got hit with the dreaded “on hold” flag, so it may be a few more days before it gets officially released. (This sometimes happens for arXiv submissions, and we are not told the reason why.)

I spent much of 2018 and early 2019 on the bed-making project, and then the first nine months of 2019 on fabric smoothing. These projects took an enormous amount of my time, and I learned several lessons, two of which are:

- Having good experimental code practices is a must. The stuff in my linked blog post has helped me constantly throughout my research, which is why I have it on record here for future reference. I’m amazed that I rarely employed them (except perhaps version control) before coming to Berkeley.

- Don’t start with deep reinforcement learning if imitation learning has not been tried. In the second project on fabric smoothing, I sank about three months of research time into attempting to get deep reinforcement learning to work. Then, with lackluster results, I switched to using DAgger, and voila, that turned out to be good enough for the project!

You can find details on DAgger from the official AISTATS 2011 paper, though much of the paper is for theoretical analysis on bounding regret. The actual algorithm is dead simple. Using the notation from the Berkeley DeepRL course, we can define DAgger as a four-step cycle that gets repeated until convergence:

- Train $\pi_\theta(\mathbf{a}_t \mid \mathbf{o}_t)$ from demonstrator data $\mathcal{D} = \{\mathbf{o}_1, \mathbf{a}_1, \ldots, \mathbf{o}_N, \mathbf{a}_N\}$.

- Run $\pi_\theta(\mathbf{a}_t \mid \mathbf{o}_t)$ to get an on-policy dataset $\mathcal{D}_\pi = \{\mathbf{o}_1, \ldots, \mathbf{o}_M\}$.

- Ask a demonstrator to label $\mathcal{D}_\pi$ with actions $\mathbf{a}_t$.

- Aggregate $\mathcal{D} \leftarrow \mathcal{D} \cup \mathcal{D}_{\pi}$ and train again.

The DeepRL class uses a human as the demonstrator, but we use a simulated one, and hence we nicely avoid the main drawback of DAgger.

That’s it! DAgger is far easier to use and debug compared to reinforcement learning. As a general rule of thumb, imitation learning is easier than reinforcement learning, though it does require a demonstrator.
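To make the four-step cycle concrete, here is a minimal sketch in Python with NumPy. Everything in it is a toy assumption for illustration and is not the setup from the fabric smoothing paper: the 1-D environment, the simulated demonstrator `expert_action`, and the linear least-squares policy are all hypothetical stand-ins.

```python
import numpy as np


def expert_action(obs):
    """Simulated demonstrator (hypothetical): push the state toward zero."""
    return -0.5 * obs


def rollout(policy, horizon, rng):
    """Run `policy` in a toy 1-D environment; return the visited observations."""
    obs = rng.uniform(-5.0, 5.0)
    visited = []
    for _ in range(horizon):
        visited.append(obs)
        act = policy(obs)
        # Toy dynamics: the action shifts the state, plus a little noise.
        obs = obs + act + 0.1 * rng.standard_normal()
    return np.array(visited)


def fit_policy(observations, actions):
    """Supervised learning step: least-squares fit of a = w * o + b."""
    features = np.stack([observations, np.ones_like(observations)], axis=1)
    weights, *_ = np.linalg.lstsq(features, actions, rcond=None)
    w, b = weights
    return (lambda obs: w * obs + b), (w, b)


def dagger(num_iters=5, horizon=20, seed=0):
    rng = np.random.default_rng(seed)
    # Step 1: train on demonstrator data D.
    data_obs = rollout(expert_action, horizon, rng)
    data_act = expert_action(data_obs)
    policy, params = fit_policy(data_obs, data_act)
    for _ in range(num_iters):
        # Step 2: run the learned policy to get on-policy observations D_pi.
        new_obs = rollout(policy, horizon, rng)
        # Step 3: ask the (simulated) demonstrator to label D_pi.
        new_act = expert_action(new_obs)
        # Step 4: aggregate D <- D U D_pi and train again.
        data_obs = np.concatenate([data_obs, new_obs])
        data_act = np.concatenate([data_act, new_act])
        policy, params = fit_policy(data_obs, data_act)
    return params, len(data_obs)


params, dataset_size = dagger()
print("learned (w, b):", params, "dataset size:", dataset_size)
```

Because the toy demonstrator is itself linear, the fitted policy recovers it almost exactly; with a neural network policy and image observations, the same loop applies but the supervised learning step does the heavy lifting.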

For the 2019-2020 academic year, I have many research goals, most of which build upon the prior two works or my other ongoing (not yet published) projects. I hope to at least know more about the following:

- Simulator Quality and Structured Domain Randomization. I think simulation-to-real transfer is one of the most exciting topics in robotics. There are two “sub-topics” within this that I want to investigate. First, given the inevitable mismatch between simulator quality and the real world, how do we properly choose the “right” simulator for sim-to-real? During the fabric smoothing project, one person suggested I use ARCSim instead of our in-house simulator. We tried ARCSim briefly, but it was too difficult to implement grasping. If we use lower quality simulators, then I also want to know if there are ways to improve the simulator in a data-driven way.

The second sub-topic I want to know more about is the kind of specific, or “structured”, domain randomization that should be applied for tasks. In the fabric smoothing project, I randomized camera pose, colors, and brightness, but this was done in an entirely heuristic manner. I wonder if there are principled ways to decide on what randomization to use given a computational budget. If we had enough computational power, then of course, we can just try everything.

- Combining Imitation Learning (IL) and Reinforcement Learning (RL). From prior blog posts, it is hopefully clear that I enjoy combining these two fields. I want to better understand how to optimize this combination of IL and RL to accelerate training of new agents and to reduce exploration requirements. For applications of these algorithms, I have gravitated towards fabric manipulation. It fits both of the two research projects described earlier, and it may be my niche.

For 2019-2020, I also aim to be more actively involved in advising undergraduate research. This is a new experience for me; thus far, my interaction with undergraduate researchers has been with the fabric smoothing paper where they helped me implement chunks of our code base. But now, there are so many ideas I want to try with simulators, IL, and RL, and I do not have time to do everything. It makes more sense to have undergraduates take on a lead role for some of the projects.

Finally, there wasn’t much of a post-project deadline reprieve because I needed to release a few BAIR Blog posts, which requires considerable administration. We have had several posts released in a close span over the last two weeks. The posts were ready for a long time (minus the formatting needed to get them on the actual website), but I was so consumed with the projects, to the tune of working 14-15 hours a day, that I had to ask blog post authors to postpone. My apologies!

Here are some recent posts that are worth reading:

- A Deep Learning Approach to Data Compression by Friso Kingma. I don’t know much about the technical details, unfortunately, but data compression is an important application.

- rlpyt: A Research Code Base for Deep Reinforcement Learning in PyTorch by Adam Stooke. I am really interested in trying this new code base. By default, I use OpenAI baselines for reinforcement learning. While I have high praise for the project overall, baselines has disappointed me several times. You can see my obscenely detailed issue reports here and here to see why. The new code base, rlpyt, (a) uses the more debugging-friendly PyTorch, (b) also has parallel environment support, (c) supports more algorithms than baselines, and (d) may be more optimized in terms of speed (though I will need to benchmark).

- Sample Efficient Evolutionary Algorithm for Analog Circuit Design by Kourosh Hakhamaneshi. Circuit design is unfortunately not in my area, but it is amazing to see how Deep Learning and evolutionary algorithms can be used in many fields. If there is any remaining low-hanging fruit in Deep Learning research, it is probably in applications to areas that are, on the surface, far removed from machine learning.

As a sneak preview, there are at least two more BAIR blog posts that we will be releasing next week.

Hopefully this year will be a fruitful one for research and advising. Meanwhile, if you are attending ISRR 2019 soon and want to chat, please contact me.

Sutton and Barto's Reinforcement Learning Textbook

It has been a pleasure reading through the second edition of the reinforcement learning (RL) textbook by Sutton and Barto, freely available online. From my day-to-day work, I am familiar with the vast majority of the textbook’s material, but there are still a few concepts that I have not fully internalized, or “grokked” if you prefer that terminology. Those concepts sometimes appear in the research literature that I read, and while I have intuition, a stronger understanding would be preferable.

Another motivating factor for me to read the textbook is that I work with function approximation and deep learning nearly every day, so I rarely get the chance to practice, or even review, the exact, tabular versions of the algorithms I’m using. I also don’t get to review the theory on those algorithms, because I work in neural network space. I always fear I will forget the fundamentals. Thus, during some of my evenings, weekends, and travels, I have been reviewing Sutton and Barto, along with other foundational textbooks in similar fields. (I should probably update my old blog post about “friendly” textbooks!)

Sutton and Barto’s book is the standard textbook in reinforcement learning, and for good reason. It is relatively easy to read, and provides sufficient justification and background for the algorithms and concepts presented. The organization is solid. Finally, it was thankfully updated in 2018 to reflect more recent developments. To be clear: it is not a deep reinforcement learning textbook, but knowing basic reinforcement learning is a prerequisite before applying deep neural networks, so it is better to have one textbook devoted to foundations.

Thus far, I’ve read most of the first half of the book, which covers bandit problems, the Markov Decision Process (MDP) formulation, and methods for solving (tabular) MDPs via dynamic programming, Monte Carlo, and temporal difference learning.

I appreciated a review of bandit problems. I knew about the $k$-armed bandit problem from reading papers such as RL-squared, which is the one that Professor Abbeel usually presents at the start of his meta-RL talks, but it was nice to see it in a textbook. Bandit problems are probably as far from my research as an RL concept can get, even though I think they are more widely used in industry than “true” RL problems. Nonetheless, I’ll briefly discuss them here, because why not?

Suppose we have an agent which is taking actions in an environment. There are two cases:

- The agent’s action will not affect the distribution of the subsequent situation it sees. This is a bandit problem. (I use “situation” to refer to both states and the reward distribution in $k$-armed bandit problems.) These can further be split up as nonassociative or associative. In the former, there is only one situation in the environment. In the latter, there are multiple situations, and this is often referred to as contextual bandits. A simple example would be if an environment has several $k$-armed bandits, and at each time, one of them is drawn at random. Despite the seeming simplicity of the bandit problem, there is already a rich exploration-exploitation problem because the agent has to figure out which of $k$ actions (“arms”) to pull. Exploitation is optimal if we have one time step left, but what if we have 1000 left? Fortunately, this simple setting allows for theory and extensive numerical simulations.

- The agent’s action will affect the distribution of subsequent situations. This is a reinforcement learning problem.

If the second case above is not true for a given task, then do not use RL. A lot of problems can be formulated as RL — I’ve seen cases ranging from protein folding to circuit design to compiler optimization — but that is different from saying that all problems make sense in a reinforcement learning context.
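For the first case, the exploration-exploitation trade-off in a nonassociative $k$-armed bandit can be simulated in a few lines. This is only a sketch under my own assumptions: an $\epsilon$-greedy agent with sample-average value estimates, Gaussian reward noise with unit variance, and made-up arm means. The function name `run_bandit` is hypothetical.

```python
import numpy as np


def run_bandit(true_means, epsilon, num_steps, seed=0):
    """Epsilon-greedy agent on a k-armed bandit with Gaussian rewards."""
    rng = np.random.default_rng(seed)
    k = len(true_means)
    estimates = np.zeros(k)          # sample-average estimate per arm
    counts = np.zeros(k, dtype=int)  # number of pulls per arm
    total_reward = 0.0
    for _ in range(num_steps):
        if rng.random() < epsilon:
            arm = int(rng.integers(k))        # explore: uniformly random arm
        else:
            arm = int(np.argmax(estimates))   # exploit: current best estimate
        # The pulled arm does not change the situation: the reward
        # distributions stay fixed, which is what makes this a bandit.
        reward = true_means[arm] + rng.standard_normal()
        counts[arm] += 1
        # Incremental update of the sample average for this arm.
        estimates[arm] += (reward - estimates[arm]) / counts[arm]
        total_reward += reward
    return estimates, counts, total_reward / num_steps


estimates, counts, avg_reward = run_bandit([0.0, 0.5, 2.0], epsilon=0.1, num_steps=5000)
print("estimates:", estimates, "pull counts:", counts, "average reward:", avg_reward)
```

With $\epsilon = 0$ the agent can lock onto a suboptimal arm early, and with a large $\epsilon$ it wastes pulls on arms it already knows are poor, which is the trade-off in miniature.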

We now turn to reinforcement learning. I list some of the relevant notation and equations. As usual, when reading the book, it’s good practice to try and work out the definitions of equations before they are actually presented.

- They use $R_{t+1}$ to indicate the reward due to the action at time $t$, i.e., $A_t$. Unfortunately, as they say, both conventions are used in the literature. I prefer $R_t$ as the reward at time $t$, partially because I think it’s the convention at Berkeley. Maybe people don’t want to write another “+1” in LaTeX.

- The lowercase “$r$” is used to represent functions, and it can be a function of state-action pairs $r : \mathcal{S} \times \mathcal{A} \to \mathbb{R}$, or state-action-state triples $r : \mathcal{S} \times \mathcal{A} \times \mathcal{S} \to \mathbb{R}$, where the second state is the successor state. I glossed over this in my August 2015 post on MDPs, where I said: “In general, I will utilize the second formulation [the $r(s,a,s')$ case], but the formulations are not fundamentally different.” Actually, what I probably should have said is that either formulation is valid and the difference likely comes down to whether it “makes sense” for a reward to directly depend on the successor state.

In OpenAI gym-style implementations, it can go either way, because we usually call something like `new_obs, rew, done, info = env.step(action)`, so the new observation `new_obs` and reward `rew` are returned simultaneously. The environment code therefore decides whether it wants to make use of the successor state or not in the reward computation.

In Sutton and Barto’s notation, the reward function can be this for the first case:

\[r(s,a) = \mathbb{E}\Big[ R_t \mid S_{t-1}=s, A_{t-1}=a \Big] = \sum_{r \in \mathcal{R}} r \cdot p(r | s,a) = \sum_{r \in \mathcal{R}} r \cdot \sum_{s' \in \mathcal{S}} p(s', r | s,a)\]

where we first directly apply the definition of a conditional expectation, and then do an extra marginalization over the $s'$ term because Sutton and Barto define the dynamics in terms of the function $p(s',r | s,a)$ rather than the $p(s'|s,a)$ that I’m accustomed to using. Thus, I used the function $p$ to represent (in a slight abuse of notation) the probability mass function of a reward, or reward and successor state combination.

Similarly, the second case can be written as:

\[r(s,a,s') = \mathbb{E}\Big[ R_t \mid S_{t-1}=s, A_{t-1}=a, S_{t}=s' \Big] = \sum_{r \in \mathcal{R}} r \cdot \frac{p(s', r | s,a)}{p(s' | s,a)}\]

where now we have the $s'$ given to us, so there’s no need to sum over it. If we are summing over possible reward values, we will also use $r$.

- The return is $G_t$, and the agent wants to maximize its expectation. I haven’t seen this notation used very often, and I think I only remember it because it appeared in the Rainbow DQN paper. Most papers just write something similar to $\mathbb{E}[\sum_{t=0}^{\infty} \gamma^tR_t]$, where the sum starts at 0 because that’s where the agent starts.

Formally, we have:

\[G_t = \sum_{k=t+1}^{T} \gamma^{k-t-1} R_k\]

where we might have $T=\infty$, or $\gamma=1$, but both cannot be true. This is their notation for combining episodic and infinite-horizon tasks.

Suppose that $T=\infty$. Then we can write the return $G_t$ in a recursive fashion:

\[G_t = R_{t+1} + \gamma (R_{t+2} + \gamma R_{t+3} + \gamma^2 R_{t+4} + \cdots) = R_{t+1} + \gamma G_{t+1}.\]

From skimming various proofs in RL papers, recursion frequently appears, so it’s probably a useful skill to master. The geometric series is also worth remembering, particularly when the reward is a fixed number at each time step, since then the return is a sum with a “common ratio” of $\gamma$ between successive terms.

- Finally, we have the all-important value functions in reinforcement learning. These are usually state values or state-action values, but others are possible, such as advantage functions. The book’s notation is to use lowercase letters, i.e., $v_\pi(s)$ and $q_\pi(s,a)$ for state-value and action-value functions. Sadly, the literature often uses $V_\pi(s)$ and $Q_\pi(s,a)$ instead, but as long as we know what we’re talking about, the notation gets abstracted away. These functions are:

\[v_\pi(s) = \mathbb{E}_\pi\Big[G_t \mid S_t=s\Big]\]

for all states $s$, and

\[q_\pi(s,a) = \mathbb{E}_\pi\Big[G_t \mid S_t=s, A_t=a\Big]\]

for all state-action pairs $(s,a)$. Note the need to have $\pi$ under the expectation!

That’s all I will bring up for now. I encourage you to check the book for a more complete treatment of notation.
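As a small numerical companion to the notation above, the sketch below computes $r(s,a)$ and $r(s,a,s')$ from a four-argument dynamics table $p(s',r \mid s,a)$ for one fixed $(s,a)$ pair, and then evaluates returns with the recursion $G_t = R_{t+1} + \gamma G_{t+1}$. The probability table and reward values are made up for illustration, and the helper name `discounted_returns` is my own.

```python
import numpy as np

# Hypothetical dynamics p(s', r | s, a) for ONE fixed (s, a) pair:
# rows index successor states s', columns index the possible reward values.
rewards = np.array([0.0, 1.0, 5.0])
p_joint = np.array([
    [0.10, 0.30, 0.00],  # s' = 0
    [0.20, 0.00, 0.40],  # s' = 1
])

# r(s, a) = sum_r r * sum_{s'} p(s', r | s, a)
r_sa = float(np.sum(rewards * p_joint.sum(axis=0)))

# p(s' | s, a) = sum_r p(s', r | s, a)
p_next = p_joint.sum(axis=1)

# r(s, a, s') = sum_r r * p(s', r | s, a) / p(s' | s, a)
r_sas = (p_joint @ rewards) / p_next


def discounted_returns(reward_seq, gamma):
    """Return G_t for every t, where reward_seq[t] plays the role of R_{t+1}.

    Uses the recursion G_t = R_{t+1} + gamma * G_{t+1}, with G_T = 0.
    """
    returns = np.zeros(len(reward_seq) + 1)
    for t in reversed(range(len(reward_seq))):
        returns[t] = reward_seq[t] + gamma * returns[t + 1]
    return returns[:-1]


print("r(s,a) =", r_sa)
print("r(s,a,s') =", r_sas)
print("returns for rewards [1, 1, 1], gamma = 0.5:", discounted_returns([1.0, 1.0, 1.0], 0.5))
```

Averaging $r(s,a,s')$ over $p(s' \mid s,a)$ recovers $r(s,a)$, and with a constant reward of 1 and $\gamma = 0.9$ over a long horizon, `discounted_returns` approaches the geometric series value $1/(1-\gamma) = 10$.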

A critical concept to understand in reinforcement learning is the Bellman equation. This is a recursive equation that defines a value function in terms of itself, effectively providing a “self consistency” condition (if that makes sense). We can write the Bellman equation for the most interesting policy, the optimal one $\pi_*$, as

\[\begin{align} v_*(s) &= \mathbb{E}_{\pi_*}\Big[G_t \mid S_t=s\Big] \\ &{\overset{(i)}=}\;\; \mathbb{E}_{\pi_*}\Big[R_{t+1} + \gamma G_{t+1} \mid S_t=s\Big] \\ &{\overset{(ii)}=}\; \sum_{a,r,s'} p(a,r,s'|s) \Big[r + \gamma \mathbb{E}_{\pi_*}[G_{t+1} \mid S_{t+1}=s']\Big] \\ &{\overset{(iii)}=}\; \sum_{a} \pi_*(a|s) \sum_{r,s'} p(r,s'|a,s) \Big[r + \gamma \mathbb{E}_{\pi_*}[G_{t+1} \mid S_{t+1}=s']\Big] \\ &{\overset{(iv)}=}\; \max_{a} \sum_{r,s'} p(r,s'|a,s) \Big[r + \gamma \mathbb{E}_{\pi_*}[G_{t+1} \mid S_{t+1}=s']\Big] \\ &{\overset{(v)}=}\; \max_{a} \sum_{s',r} p(r,s'|s,a) \Big[r + \gamma v_*(s')\Big] \end{align}\]

where

- in (i), we apply the recurrence on $G_t$ as described earlier.

- in (ii), we convert the expectation into its definition in the form of a sum over all possible values of the probability mass function $p(a,r,s'|s)$ and the subsequent value being taken under the expectation. The $r$ is now isolated and we condition on $s'$ instead of $s$ since we’re dealing with the next return $G_{t+1}$.

- in (iii) we use the chain rule of probability to split the probability mass function $p$ into the policy $\pi_*$ and the “rest of” $p$ in an abuse of notation (sorry), and then push the sums as far to the right as possible.

- in (iv) we use the fact that the optimal policy will take only the action that maximizes the value of the subsequent expression, i.e., the expected value of the reward plus the discounted value after that.

- finally, in (v) we convert the expectation of $G_{t+1}$ into the equivalent $v_*(s')$ expression.

In the above, I use $\sum_{x,y}$ as shorthand for $\sum_x\sum_y$.

When trying to derive these equations, I think the tricky part comes when figuring out when it’s valid to turn a random variable (in capital letters) into one of its possible instantiations (a lowercase letter). Here, we’re dealing with policies that determine an action given a state. The environment subsequently generates a reward and a successor state, so these are the values we can sum over (since we assume a discrete MDP). The return $G_t$ cannot be summed over and must remain inside an expectation, or be converted to an equivalent definition.
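One way to see the Bellman optimality equation in action is to apply its right-hand side repeatedly as an update, which is value iteration. Below is a minimal sketch on a made-up two-state, two-action MDP. I assume the reward is deterministic given $(s,a,s')$, so the sum over $r$ collapses and the dynamics can be stored as arrays `P[s, a, s']` and `R[s, a, s']`; these names and the MDP itself are hypothetical.

```python
import numpy as np


def value_iteration(P, R, gamma, tol=1e-10):
    """Iterate v(s) <- max_a sum_{s'} P[s,a,s'] * (R[s,a,s'] + gamma * v(s'))."""
    num_states = P.shape[0]
    v = np.zeros(num_states)
    while True:
        # q[s, a] = sum_{s'} P[s, a, s'] * (R[s, a, s'] + gamma * v[s'])
        q = np.einsum("ijk,ijk->ij", P, R + gamma * v[None, None, :])
        v_new = q.max(axis=1)
        if np.max(np.abs(v_new - v)) < tol:
            return v_new, q.argmax(axis=1)
        v = v_new


# Toy MDP (made up): action 0 stays in the current state, action 1 switches.
P = np.zeros((2, 2, 2))
P[0, 0, 0] = 1.0  # state 0, stay
P[0, 1, 1] = 1.0  # state 0, switch to state 1
P[1, 0, 1] = 1.0  # state 1, stay
P[1, 1, 0] = 1.0  # state 1, switch to state 0

R = np.zeros((2, 2, 2))
R[0, 1, 1] = 1.0  # reward for moving from state 0 to state 1
R[1, 0, 1] = 2.0  # reward for staying in state 1

v_star, pi_star = value_iteration(P, R, gamma=0.9)
print("v_* =", v_star, "greedy policy =", pi_star)
```

For this MDP the fixed point can be checked by hand: staying in state 1 forever gives $v_*(1) = 2/(1-0.9) = 20$, and moving there from state 0 gives $v_*(0) = 1 + 0.9 \cdot 20 = 19$.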

In the following chapter on dynamic programming techniques, the book presents the policy improvement theorem. It’s one of the few theorems with a proof in the book, and relies on similar “recursive” techniques as shown in the Bellman equation above.

Suppose that $\pi$ and $\pi’$ are any pair of deterministic policies such that, for all states $s \in \mathcal{S}$, we have $q_\pi(s,\pi’(s)) \ge v_\pi(s)$. Then the policy $\pi’$ is as good as (or better than) $\pi$, which equivalently means $v_{\pi’}(s) \ge v_\pi(s)$ for all states. Be careful about noticing which policy is under the value function.

The proof starts from the given and ends with the claim. For any $s$, we get:

\[\begin{align} v_\pi(s) &\le q_\pi(s,\pi'(s)) \\ &{\overset{(i)}=}\;\; \mathbb{E}\Big[ R_{t+1} + \gamma v_\pi(S_{t+1}) \mid S_t=s, A_t=\pi'(s) \Big] \\ &{\overset{(ii)}=}\; \mathbb{E}_{\pi'}\Big[ R_{t+1} + \gamma v_\pi(S_{t+1}) \mid S_t=s \Big] \\ &{\overset{(iii)}\le}\; \mathbb{E}_{\pi'}\Big[ R_{t+1} + \gamma q_\pi(S_{t+1}, \pi'(S_{t+1})) \mid S_t=s \Big] \\ &{\overset{(iv)}=}\; \mathbb{E}_{\pi'}\Big[ R_{t+1} + \gamma \cdot \mathbb{E}\Big[R_{t+2} + \gamma v_\pi(S_{t+2}) \mid S_{t+1}, A_{t+1}=\pi'(S_{t+1})\Big] \mid S_t=s \Big] \\ &{\overset{(v)}=}\;\; \mathbb{E}_{\pi'}\Big[ R_{t+1} + \gamma R_{t+2} + \gamma^2 v_\pi(S_{t+2}) \mid S_t=s \Big] \\ &{\overset{(vi)}\le}\; \mathbb{E}_{\pi'}\Big[ R_{t+1} + \gamma R_{t+2} + \gamma^2 R_{t+3} + \gamma^3 v_\pi(S_{t+3}) \mid S_t=s \Big] \\ &\vdots \\ &\le\; \mathbb{E}_{\pi'} \Big[ R_{t+1} + \gamma R_{t+2} + \gamma^2 R_{t+3} + \gamma^3R_{t+4} + \cdots \mid S_t = s\Big] \\ &=\; v_{\pi'}(s)... \\ \end{align}\]where

- in (i) we expand $q_\pi$ by definition, and in particular, the action we condition on for the Q-value comes from $\pi’(s)$. I’m not taking an expectation w.r.t. a given policy because the action is already given to us, hence the “density” here is from the environment dynamics.

- in (ii) we remove the conditioning on the action in the expectation, and make the expectation w.r.t. the policy $\pi’$ now. Intuitively, this is valid because by taking an expectation w.r.t. the (deterministic) $\pi’$, given that the state is already conditioned upon, the policy will deterministically provide the same action $A_t=\pi’(s)$ as in the previous line. If this is confusing, think of the expectation under $\pi’$ as creating an outer sum $\sum_{a}\pi’(a|s)$ before the rest of the expectation. However, since $\pi’$ is deterministic, it will be equal to one only under one of the actions, the “$\pi’(s)$” we’ve been writing.

- in (iii) we apply the theorem’s assumption.

- in (iv) we do a similar thing as (i) by expanding $q_\pi$, and conditioning on random variables rather than a fixed instantiation $s$ since we are not given one.

- in (v) we apply a similar trick as earlier, by moving the conditioning on the action under the expectation, so that the inner expectation turns into “$\mathbb{E}_{\pi’}$”. To simplify, we move the inner expectation out to merge with the outermost expectation.

- in (vi) we recursively expand based on the inequality of (ii) vs (v).

- then finally, after repeated application, we get to the claim.

One obvious implication of the proof above is that, if we have two policies that are exactly the same, except for one state where $\pi’(s) \ne \pi(s)$, then if the condition in the theorem above holds with strict inequality at that state, $\pi’$ is a strictly better policy.
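The theorem is easy to check numerically on a toy problem. The sketch below uses a made-up two-state, two-action MDP (the arrays `P` and `R` and the helper names are my own assumptions, not from the book), evaluates a deterministic policy exactly by solving a linear system, builds a greedy $\pi'$ so that the theorem's condition holds, and then compares the two value functions.

```python
import numpy as np

# Hypothetical 2-state, 2-action MDP (illustrative numbers only).
P = np.array([
    [[0.9, 0.1], [0.2, 0.8]],
    [[1.0, 0.0], [0.0, 1.0]],
])  # P[s, a, s2]
R = np.array([
    [0.0, 1.0],
    [0.0, 2.0],
])  # R[s, a]
gamma = 0.9
num_states = P.shape[0]

def evaluate(policy):
    """Solve v = R_pi + gamma * P_pi v exactly for a deterministic policy."""
    idx = np.arange(num_states)
    P_pi = P[idx, policy]  # (num_states, num_states)
    R_pi = R[idx, policy]  # (num_states,)
    return np.linalg.solve(np.eye(num_states) - gamma * P_pi, R_pi)

def q_values(v):
    """q_pi(s, a) = R[s, a] + gamma * sum_{s'} P[s, a, s'] v(s')."""
    return R + gamma * (P @ v)

pi = np.array([0, 0])                    # an arbitrary starting policy
v_pi = evaluate(pi)
pi_new = q_values(v_pi).argmax(axis=1)   # greedy, so q_pi(s, pi'(s)) >= v_pi(s)
v_pi_new = evaluate(pi_new)
```

Comparing `v_pi_new` against `v_pi` elementwise shows the improved policy is at least as good in every state, as the theorem promises.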

The generalized policy iteration subsection in the same chapter is worth reading. It describes, in one page, the general idea of learning policies via interaction between policy evaluation and policy improvement.

I often wished the book had more proofs of its claims, but then I realized it wouldn’t be suitable as an introduction to reinforcement learning. For the theory, I’m going through Chapter 6 of Dynamic Programming and Optimal Control by Dimitri P. Bertsekas.

It’s a pleasure to review Sutton and Barto’s book and compare how much more I know now than I did when first studying reinforcement learning in a clumsy on-and-off way from 2013 to 2016. Coming up next will be, I promise, discussion of the more technical and challenging concepts in the textbook.

Domain Randomization Tips

Domain randomization has been a hot topic in robotics and computer vision since 2016-2017, when the first set of papers about it was released (Sadeghi et al., 2016, Tobin et al., 2017). The second one was featured in OpenAI’s subsequent blog post and video. They would later follow up with some impressive work on training a robot hand to manipulate blocks. Domain randomization has thus quickly become a standard tool in our toolkit. In retrospect, the technique seems obviously useful. The idea, as I’ve seen Professor Abbeel state in so many of his talks, is to effectively randomize aspects of the training data (e.g., images a robot might see) in simulation, so that the real world looks just like another variation. Lilian Weng, who was part of the team behind OpenAI’s block-manipulating robot, has a good overview of domain randomization if you want a little more detail, but I highly recommend reading the actual papers as well, since most are relatively quick reads by research paper standards. My goal in this post is not to simply rehash the definition of domain randomization, but to go over concepts and examples that perhaps might not be obvious at first thought.

My main focus is on OpenAI’s robotic hand, or Dactyl as they call it, and I lean heavily on their preprint. Make sure you cite that with OpenAI as the first author! I will also briefly reference other papers that use domain randomization.

-

In Dactyl there is a vision network and a control policy network. The vision network takes Unity-rendered images as input, and outputs the estimated object pose (i.e., a quaternion). The pose then gets fed into the control policy, which also takes as input the robot fingertip data. This is important: they are NOT training their policy directly from images to actions, but from fingertips and object pose to action. Training PPO — their RL algorithm of choice — directly on images would be horrendous. Domain randomization is applied in both the vision and control portions.

I assume they used Unity due to ease of programmatically altering images. They might have been able to do this in MuJoCo, which comes with rendering support, but I’m guessing it is harder. The lesson is that, whatever rendering software one is using, it should be easy to programmatically change images.

-

When performing domain randomization for some physical parameter, the mean of the range should correspond to reasonable physical values. If one thinks that friction is really 0.7 (whatever that means), then one should code the domain randomization using something like:

`friction = np.random.uniform(0.7-eps, 0.7+eps)`

where `eps` is a tunable parameter. Real-world calibration and/or testing may be needed to find this “mean” value. OpenAI did this by running trajectories and minimizing mean squared error. I think they had to do this for at least the 264 MuJoCo parameters.

-

It may help to add correlated noise to observations (i.e., pose and fingertip data) and physical parameters (e.g., block sizes and friction) that gets sampled at the beginning of each episode, but is kept fixed for the episode. This may lead to better consistency in the way noise is applied. Intuitively, if we consider the real world, the distribution of various parameters may vary from that in simulation, but it’s not going to vary during a real-world episode. For example, the size of a block is going to stay the same throughout the robotic hand’s manipulation. An interesting result from their paper was that an LSTM memory-augmented policy could learn the kind of randomization that was applied.
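A minimal sketch of this per-episode scheme, extending the friction sampling above: physical parameters and an observation offset are drawn once at reset and held fixed, while a smaller independent noise term is redrawn at every step. All names and numeric ranges here (`eps=0.2`, a 7-dimensional observation, the noise scales) are hypothetical placeholders, not values from the OpenAI paper.

```python
import numpy as np

rng = np.random.default_rng(0)

def sample_episode_params(rng, friction_mean=0.7, eps=0.2, obs_dim=7, bias_std=0.01):
    """Draw quantities once per episode; they stay fixed until the next reset."""
    return {
        "friction": rng.uniform(friction_mean - eps, friction_mean + eps),
        # Correlated observation noise: one offset shared by every step of the episode.
        "obs_bias": rng.normal(0.0, bias_std, size=obs_dim),
    }

def noisy_observation(true_obs, params, rng, step_std=0.001):
    """Apply the fixed per-episode offset plus fresh per-step noise."""
    return true_obs + params["obs_bias"] + rng.normal(0.0, step_std, size=true_obs.shape)

params = sample_episode_params(rng)   # called at the start of each episode
true_obs = np.zeros(7)                # stand-in for pose and fingertip data
episode = [noisy_observation(true_obs, params, rng) for _ in range(5)]
```

Within one episode every observation shares the same `obs_bias`, so a policy with memory has a chance to infer it, while a new call to `sample_episode_params` gives the next episode a different offset and friction.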

-

Actor-Critic methods use an actor and a critic. The actor is the policy, and the critic estimates a value function. A key insight is that only data passed to the actor needs to be randomized during training. Why? The critic’s job is to accurately assess the value of a state so that it can assist the actor. During deployment, only the trained actor is needed, which gets real-world data as input. Adding noise to the critic’s input will make its job harder.

This reminds me of another OpenAI product, Asymmetric Actor-Critic (AAC), where the critic gets different input than the actor. In AAC, the critic gets a lower-dimensional state representation instead of images, which makes it easier to accurately assess the value of a state, and it’s fine for training because, again, the value network is not what gets deployed. Oh, and surprise surprise, the Asymmetric Actor-Critic paper also used domain randomization, and mentioned that randomizing colors should be applied independently (or separately) for each object. I agree.

-

When applying randomization to images, adding uniform, Gaussian, and/or “salt and pepper noise” is not sufficient. In our robot bed-making paper, I used these forms of noise to augment the data, but data augmentation is not the same as domain randomization, which is applied to cases when we train in simulation and transfer to the real world. In our paper, I was using the same real-world images that the robot saw. With domain randomization, we want images that look dramatically different from each other, but which are also realistic and similar from a human’s perspective. We can’t do this with Gaussian noise, but we can do this by randomizing hue, saturation, value, and colors, along with lighting and glossiness. OpenAI only applied per-pixel Gaussian noise at the end of this process.

Another option, which produces some cooler-looking images, is to use procedural generation of image textures. This is the approach taken in these two papers from Imperial College London (ICL), which use “Perlin noise” to randomize images. I encourage you to check out the papers, particularly the first one, to see the rendered images.

-

Don’t forget camera randomization. OpenAI randomized the positions and orientations with small uniform noise. (They actually used three images simultaneously, so they have to adjust all of them.) Both of the ICL papers said camera randomization was essential. Unfortunately the sim-to-real cloth paper did not precisely explain their camera randomization parameters, but I’m assuming it is the same as their prior work. Camera randomization is also used in the Dexterity Network project. From communicating with the authors (since they are in our lab), I think they used randomization before it was called “domain randomization.”

I will keep these and other tricks in mind when applying domain randomization. I agree with OpenAI in that it is important for deploying machine learning based robotics in the real world. I know there’s a “Public Relations” aspect to everything they promote, but I still think that the technique matters a lot, and will continue to be popular in the near future.

Apollo 11, Then and Now

Today, we celebrate the 50th anniversary of the first humans walking on the moon during the Apollo 11 mission on July 20, 1969. It was perhaps the most complicated and remarkable technological accomplishment the world had ever seen at that time. I can’t imagine the complexity in doing this with the knowledge we had back in 1969, and without the Internet.

I wasn’t alive back then, but I have studied some of the history and am hoping to read more about it. More importantly, though, I also want to understand how we can encourage a similar kind of “grand mission” for the 21st century, but this one hopefully cooperative among several nations rather than viewed through the lens of “us versus them.” I know this is not easy. Competition is an essential ingredient for accelerating technological advances. Had the Soviet Union not launched Sputnik in 1957, perhaps we would not have had a Space Race at all, and NASA might not exist.

I also understand the contradictions of the 1960s. My impression from reading various books and news articles is that trust and confidence in government was generally higher back then than it is today, which seems justified in the sense that America was able to somehow muster the political will and pull together so many resources for the Space Race. But it also seems strange to me, since that era also saw the Civil Rights protests and the assassination of Martin Luther King Jr in 1968, and then the Stonewall Inn riots of 1969. Foreign policy was rapidly turning into a disaster with the Vietnam War, leading Lyndon Johnson to avoid running for president in 1968. Richard Nixon would be the president who called Neil Armstrong and Buzz Aldrin when they landed on the moon and fulfilled John F. Kennedy’s vision from earlier — and we all know what happened to Nixon in 1974.

I have no hope that our government can replicate a feat like Apollo 11. I don’t mean to phrase this as an entirely negative statement; on the contrary, that our government largely provides insurance instead of engaging in expensive, bombastic missions has helped to stabilize or improve the lives of many. Other factors that affect my thinking here, though, are less desirable: it’s unlikely that the government will be able to accomplish what it did 50 years ago due to high costs, soaring debt, low trust, and little sense of national unity.

Investment and expertise in math, science, and education show some disconcerting trends. The Space Race created heavy interest and investment in science, and was one of the key factors that helped motivate, for example, RPI President Shirley Ann Jackson to study physics. Yet, as UC Berkeley economist Enrico Moretti describes in The New Geography of Jobs (the most recent book I’ve read), young American students are average in math and science compared to students in other advanced countries. Fortunately, the United States in the age of Trump continues to have an unprecedented ability to recruit highly skilled immigrants from other countries. This is a key advantage the United States has over other countries, and it would be wise not to relinquish it, but neither does it provide a pass for the poor state of math and science education in many parts of the country.

What has improved in the last 50 years is the strength and technological leadership of the private sector. Among the American computer science students here at Berkeley, there is virtually no interest in working for the public sector. For PhDs, with the exception of those who pursue careers in academia, almost all work for a big tech company or a start-up. It makes sense, because the most exciting advancements, particularly in my fields of AI and robotics, have come from companies like Google (AI agents for Go and Starcraft), and “capped-profits” like OpenAI (Dota2). Google (via Waymo), Tesla, and many other companies are accelerating the development of self-driving cars. Other companies perform great work in computer vision, such as Facebook, and in natural language processing, with Google and Microsoft among the leaders. Those of us advocating to break up these companies should remember that they are the ones pioneering the technologies of this century.

NASA wants to send more humans to the moon by 2024. That would be inspiring, but I argue that we need to focus on two key technologies of our time: AI and clean energy. Recent AI advances are extraordinarily energy-hungry, and we have a responsibility not to consume too much energy, or at the very least to utilize cleaner energy sources more often. I don’t necessarily mean “green” energy, because I am a strong proponent of nuclear energy, but hopefully my point is clear. Perhaps the Apollo 11 of this century could use AI for better management of energy of all sorts, and could be pursued by various company alliances spanning multiple countries. For example, think Google and Baidu aligning with energy companies in their home countries to extract more value from wind energy. Such achievements have the potential to help people all across the world.

AI will probably be great, and let’s ensure we use it wisely to create a better future for all.

Understanding Prioritized Experience Replay

Prioritized Experience Replay (PER) is one of the most important and conceptually straightforward improvements for the vanilla Deep Q-Network (DQN) algorithm. It is built on top of experience replay buffers, which allow a reinforcement learning (RL) agent to store experiences in the form of transition tuples, usually denoted as $(s_t,a_t,r_{t},s_{t+1})$ with states, actions, rewards, and successor states at some time index $t$. In contrast to consuming samples online and discarding them thereafter, sampling from the stored experiences means they are less heavily “correlated” and can be re-used for learning.

Uniform sampling from a replay buffer is a good default strategy, and probably the first one to attempt. But prioritized sampling, as the name implies, will weigh the samples so that “important” ones are drawn more frequently for training. In this post, I review Prioritized Experience Replay, with an emphasis on relevant ideas or concepts that are often hidden under the hood or implicitly assumed.

I assume that PER is applied with the DQN framework because that is what the original paper used, but PER can, in theory, be applied to any algorithm which samples from a database of items. As most Artificial Intelligence students and practitioners probably know, the DQN algorithm attempts to find a policy \(\pi\) which maps a given state $s_t$ to an action $a_t$ such that it maximizes the expected cumulative reward of the agent \(\mathbb{E}_{\pi}\Big[ \sum_{t=0}^\infty r_t \Big]\) from some starting state $s_0$. DQN obtains \(\pi\) implicitly by calculating a state-action value function $Q_\theta(s,a)$ parameterized by $\theta$, which measures the goodness of the given state-action pair with respect to some behavioral policy. (This is a critical point that’s often missed: state-action values, or state-values for that matter, don’t make sense unless they are also attached to some policy.)

To find an appropriate $\theta$, which then determines the final policy $\pi$, DQN performs the following optimization:

\[{\rm minimize}_{\theta} \;\; \mathbb{E}_{(s_t,a_t,r_t,s_{t+1})\sim D} \left[ \Big(r_t + \gamma \max_{a \in \mathcal{A}} Q_{\theta^-}(s_{t+1},a) - Q_\theta(s_t,a_t)\Big)^2 \right]\]where $(s_t,a_t,r_t,s_{t+1})$ are batches of samples from the replay buffer $D$, which is designed to store the past $N$ samples (usually $N=1,000,000$ for Atari 2600 benchmarks). In addition, $\mathcal{A}$ represents the set of discrete actions, $\theta$ is the current or online network, and $\theta^-$ represents the target network. Both networks use the same architecture, and we use $Q_\theta(s,a)$ or $Q_{\theta^-}(s,a)$ to denote which of the two is being applied to evaluate $(s,a)$.

The target network starts off by getting matched to the current network, but remains frozen (usually for thousands of steps) before getting updated again to match the current network. The process repeats throughout training, with the goal of increasing the stability of the targets $r_t + \gamma \max_{a \in \mathcal{A}} Q_{\theta^-}(s_{t+1},a)$.

I have an older blog post here if you would like an intuitive perspective on DQN. For more background on reinforcement learning, I refer you to the standard textbook in the field by Sutton and Barto. It is freely available (God bless the authors) and updated to the second edition for 2018. Woo hoo! Expect future blog posts here about the more technical concepts from the book.

Now, let us get started on PER. The intuition of the algorithm is clear, and the Prioritized Experience Replay paper (presented at ICLR 2016) is surprisingly readable. They say:

In particular, we propose to more frequently replay transitions with high expected learning progress, as measured by the magnitude of their temporal-difference (TD) error. This prioritization can lead to a loss of diversity, which we alleviate with stochastic prioritization, and introduce bias, which we correct with importance sampling. Our resulting algorithms are robust and scalable, which we demonstrate on the Atari 2600 benchmark suite, where we obtain faster learning and state-of-the-art performance.

The paper was written in 2015 and submitted to ICLR 2016, so straight-up PER with DQN is definitely not state of the art performance. For example, the Rainbow DQN algorithm is superior. Everything else is correct, though. The PER idea reminds me of “hard negative mining” in the supervised learning setting. The magnitude of the TD error (squared) is what we want to minimize in the Bellman equation. Hence, pick the samples with the largest error so that our neural network can minimize it!

To clarify a somewhat implied point (for those who did not read the paper), and to play some devil’s advocate, why do we prioritize by the magnitude of the TD error? Ideally we would sample with respect to some mysterious function $f( (s_t,a_t,r_t,s_{t+1}) )$ that exactly tells us the “usefulness” of sample $(s_t,a_t,r_t,s_{t+1})$ for fastest learning to get maximum reward. But since this magical function $f$ is unknown, we use absolute TD error because it appears to be a reasonable approximation to it. There are other options, and I encourage you to read the discussion in Appendix A. I am not sure how many alternatives to TD error magnitude have been implemented in the literature. Since I have not seen any (besides a KL-based one in Rainbow DQN), it suggests that DeepMind’s choice of absolute TD error was the right one. The TD error for vanilla DQN is:

\[\delta_i = r_t + \gamma \max_{a \in \mathcal{A}} Q_{\theta^-}(s_{t+1},a) - Q_\theta(s_t,a_t)\]and for Double DQN, it would be:

\[\delta_i = r_t + \gamma Q_{\theta^-}(s_{t+1},{\rm argmax}_{a \in \mathcal{A}} Q_\theta(s_{t+1},a)) - Q_\theta(s_t,a_t)\]and either way, we use $| \delta_i |$ as the magnitude of the TD error. Negative versus positive TD errors are combined into one case here, but in principle we could consider them as separate cases and add a bonus to whichever one we feel is more important to address.
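For concreteness, here is a small sketch of both TD errors for a single transition. The Q-values are made-up numbers, the function names are my own, and the `done` argument is an assumed terminal flag that disables bootstrapping.

```python
import numpy as np

def td_error_dqn(r, q_online_sa, q_target_next, gamma=0.99, done=False):
    """delta = r + gamma * max_a Q_target(s', a) - Q_online(s, a)."""
    bootstrap = 0.0 if done else gamma * np.max(q_target_next)
    return r + bootstrap - q_online_sa

def td_error_double_dqn(r, q_online_sa, q_online_next, q_target_next, gamma=0.99, done=False):
    """The online network picks the action; the target network evaluates it."""
    a_star = int(np.argmax(q_online_next))
    bootstrap = 0.0 if done else gamma * q_target_next[a_star]
    return r + bootstrap - q_online_sa

# Made-up Q-values at the successor state for a problem with three actions.
q_online_next = np.array([1.0, 3.0, 2.0])
q_target_next = np.array([2.5, 2.0, 4.0])

delta_dqn = td_error_dqn(r=1.0, q_online_sa=2.0, q_target_next=q_target_next, gamma=0.5)
delta_ddqn = td_error_double_dqn(r=1.0, q_online_sa=2.0, q_online_next=q_online_next,
                                 q_target_next=q_target_next, gamma=0.5)
priority_dqn, priority_ddqn = abs(delta_dqn), abs(delta_ddqn)
```

With these numbers the two variants disagree because the online network prefers a different successor action than the one with the largest target value, so the same transition would receive a different priority under each.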

This provides the absolute TD error, but how do we incorporate this into an RL algorithm?

First, we can immediately try to assign the priorities ($| \delta_i |$) as components to add to the samples. That means our replay buffer samples are now $(s_{t},a_{t},r_{t},s_{t+1}, | \delta_t |)$. (Strictly speaking, they should also have a “done” flag $d_t$ which tells us if we should use the bootstrapped estimate of our target, but we often omit this notation since it is implicitly assumed. This is yet another minor detail that is not clear until one implements DQN.)

But then here’s a problem: how is it possible to keep a tally of all the magnitude of TD errors updated? Replay buffers might have a million elements in them. Each time we update the neural network, do we really need to update each and every $\delta_i$ term, which would involve a forward pass through $Q_\theta$ (and possibly $Q_{\theta^-}$ if it was changed) for each item in the buffer? DeepMind proposes a far more computationally efficient alternative of only updating the $\delta_i$ terms for items that are actually sampled during the minibatch gradient updates. Since we have to compute $\delta_i$ anyway to get the loss, we might as well use those to change the priorities. For a minibatch size of 32, each gradient update will change the priorities of 32 samples in the replay buffer, but leave the (many) remaining items alone.

That makes sense. Next, given the absolute TD terms, how do we get a probability distribution for sampling? DeepMind proposes two ways of getting priorities, denoted as $p_i$:

-

A rank based method: $p_i = 1 / {\rm rank}(i)$ which sorts the items according to $| \delta_i |$ to get the rank.

-

A proportional variant: $p_i = | \delta_i | + \epsilon$, where $\epsilon$ is a small constant ensuring that the sample has some non-zero probability of being drawn.

During exploration, the $p_i$ terms are not known for brand-new samples because those have not been evaluated with the networks to get a TD error term. To get around this, PER initializes $p_i$ to the maximum priority of any sample seen thus far, thus favoring those terms during sampling later.

From either of these, we can easily get a probability distribution:

\[P(i) = \frac{p_i^\alpha}{\sum_k p_k^\alpha}\]where $\alpha$ determines the level of prioritization. If $\alpha \to 0$, then there is no prioritization, because all $p_i^\alpha = 1$. If $\alpha \to 1$, then we get to, in some sense, “full” prioritization, where sampling data points is more heavily dependent on the actual $\delta_i$ values. Now that I think about it, we could increase $\alpha$ above one, but that would likely cause dramatic problems with over-fitting as the distribution could become heavily “pointy” with low entropy.
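Here is a short sketch of the two priority definitions and the resulting distribution $P(i)$. The TD errors are made-up numbers and the function names are my own; note that rank 1 is assigned to the largest $| \delta_i |$.

```python
import numpy as np

def proportional_priorities(td_errors, eps=1e-6):
    """p_i = |delta_i| + eps."""
    return np.abs(td_errors) + eps

def rank_based_priorities(td_errors):
    """p_i = 1 / rank(i), where rank 1 is the largest |delta_i|."""
    order = np.argsort(-np.abs(td_errors))  # indices from largest to smallest
    ranks = np.empty(len(td_errors), dtype=np.int64)
    ranks[order] = np.arange(1, len(td_errors) + 1)
    return 1.0 / ranks

def sampling_probabilities(priorities, alpha):
    """P(i) = p_i^alpha / sum_k p_k^alpha."""
    scaled = priorities ** alpha
    return scaled / scaled.sum()

td_errors = np.array([0.5, -2.0, 0.1, 1.0])  # made-up TD errors
P_uniform = sampling_probabilities(proportional_priorities(td_errors), alpha=0.0)
P_prop = sampling_probabilities(proportional_priorities(td_errors), alpha=0.6)
P_rank = sampling_probabilities(rank_based_priorities(td_errors), alpha=0.6)
```

Setting `alpha=0.0` recovers uniform sampling, and in both prioritized variants the transition with the largest absolute TD error gets the largest probability.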

We finally have our actual probability $P(i)$ of sampling the $i$-th data point for a given minibatch, which would be (again) $(s_t,a_t,r_t,s_{t+1},| \delta_t |)$. During training, we can draw these simply by weighting all samples in the $N$-sized replay buffer by $P(i)$.

Since the buffer size $N$ can be quite large (e.g., one million), DeepMind uses special data structures to reduce the time complexity of certain operations. For the proportional-based variant, which is what OpenAI implements, a sum-tree data structure is used to make both updating and sampling $O(\log N)$ operations.
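To give a rough idea of why a sum-tree gives $O(\log N)$ updates and sampling, here is a minimal array-based sketch. It is my own simplified version (it assumes the capacity is a power of two and that the stored priorities are already raised to $\alpha$), not the OpenAI or DeepMind implementation.

```python
import numpy as np

class SumTree:
    """Binary tree whose leaves hold priorities and whose internal nodes hold sums."""

    def __init__(self, capacity):
        # Assumes capacity is a power of two to keep the indexing simple.
        self.capacity = capacity
        # tree[1] is the root; the leaves occupy indices capacity .. 2*capacity - 1.
        self.tree = np.zeros(2 * capacity)

    def update(self, index, priority):
        """Set the priority of leaf `index` and refresh the sums on the path to the root."""
        node = index + self.capacity
        self.tree[node] = priority
        node //= 2
        while node >= 1:
            self.tree[node] = self.tree[2 * node] + self.tree[2 * node + 1]
            node //= 2

    def total(self):
        """Sum of all priorities, stored at the root."""
        return self.tree[1]

    def find(self, mass):
        """Return the leaf whose slice of [0, total) contains `mass`."""
        node = 1
        while node < self.capacity:
            left = 2 * node
            if mass < self.tree[left]:
                node = left
            else:
                mass -= self.tree[left]
                node = left + 1
        return node - self.capacity

tree = SumTree(capacity=4)
for i, p in enumerate([1.0, 3.0, 2.0, 4.0]):  # made-up priorities
    tree.update(i, p)

rng = np.random.default_rng(0)
samples = [tree.find(rng.uniform(0.0, tree.total())) for _ in range(5)]
```

Both `update` and `find` walk a single root-to-leaf path, so their cost grows with the depth of the tree rather than with the number of stored items.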

Is that it? Well, not quite. There are a few technical details to resolve, but probably the most important one (pun intended) is an importance sampling correction. DeepMind describes why:

The estimation of the expected value with stochastic updates relies on those updates corresponding to the same distribution as its expectation. Prioritized replay introduces bias because it changes this distribution in an uncontrolled fashion, and therefore changes the solution that the estimates will converge to (even if the policy and state distribution are fixed). We can correct this bias by using importance-sampling (IS) weights.

This makes sense. Here is my intuition, which I hope is useful. I think the distribution DeepMind is talking about (“same distribution as its expectation”) above is the distribution of samples that are obtained when sampling uniformly at random from the replay buffer. Recall the expectation I wrote above, which I repeat again for convenience:

\[{\rm minimize}_{\theta} \;\; \mathbb{E}_{(s_t,a_t,r_t,s_{t+1})\sim D} \left[ \Big(r_t + \gamma \max_{a \in \mathcal{A}} Q_{\theta^-}(s_{t+1},a) - Q_\theta(s_t,a_t)\Big)^2 \right]\]Here, the “true distribution” for the expectation is indicated with this notation under the expectation:

\[(s_t,a_t,r_{t},s_{t+1})\sim D\]which means we uniformly sample from the replay buffer. Since prioritization means we are not doing that, then the distribution of samples we get is different from the “true” distribution using uniform sampling. In particular, PER over-samples those with high priority, so the importance sampling correction should down-weight the impact of the sampled term, which it does by scaling the gradient term so that the gradient has “less impact” on the parameters.

To add yet more confusion, I don’t even think the uniform sampling is the “true” distribution we want, in the sense that it is the distribution under the expectation for the Q-learning loss. What I think we want is the actual set of samples that are induced by the agent’s current policy, so that we really use:

\[(s_t,a_t,r_{t},s_{t+1})\sim \pi\]where $\pi$ is a policy induced from the agent’s current Q-values. Perhaps it is greedy for simplicity. So what effectively happens is that, due to uniform sampling, there is extra bias and over-sampling towards the older samples in the replay buffer. Despite this, we should be OK because Q-learning is off-policy, so it shouldn’t matter in theory where the samples come from. Thus it’s unclear what a “true distribution of samples” should be like, if any exists. Incidentally, the off-policy aspect of Q-learning and why it does not take expectations “over the policy” appears to be the reason why importance sampling is not needed in vanilla DQN. (When we add an ingredient like importance sampling to PER, it is worth thinking about why we had to use it in this case, and not in others.) Things might change when we talk about $n$-step returns, but that raises the complexity to a new level … or we might just ignore importance sampling corrections, as this StackExchange answer suggests.

This all makes sense intuitively, but there has to be a nice, rigorous way to formalize it. The “TL;DR” is that the importance sampling in PER is to correct the over-sampling with respect to the uniform distribution.

Hopefully this is clear. Feel free to refer back to an earlier blog post about importance sampling more generally; I was hoping to follow it up right away with this current post, but my blogging plans never go according to plan.

How do we apply importance sampling? We use the following weights:

\[w_i = \left( \frac{1}{N} \cdot \frac{1}{P(i)} \right)^\beta\]and then further scaled in each minibatch so that $\max_i w_i = 1$ for stability reasons; generally, we don’t want weights to be wildly large.

Let’s dissect this term. The $N$ in the $1/N$ part is the current number of items in the experience replay. To clarify: this is NOT the same as the capacity of the buffer, and it only becomes equivalent to it once we hit the capacity and have to start overwriting samples. The $P(i)$ represents the probability of sampling data point $i$ according to priorities. It is this key term that scales the weights, in inverse proportion. As $P(i) \to 1$ (which really should never happen) the weight gets smaller, with an extreme down-weighting of the sample’s impact. As $P(i) \to 0$, the weight gets larger. If $P(i) = 1/N$ for all $i$, then we get uniform sampling with the $1/N$ term canceling out $1/(1/N)$.

Don’t forget the $\beta$ term in the exponent, which controls how much of the importance sampling correction to apply. They argue that training is highly unstable at the beginning, and that importance sampling corrections matter more near the end of training. Thus, $\beta$ starts small (values of 0.4 to 0.6 are commonly used) and anneals towards one.

We finally “fold” this weight together with the $\delta_i$ TD error term during training, with $w_i \delta_i$, because the $\delta_i$ is multiplied with the gradient $\nabla_\theta Q_\theta(s_t,a_t)$ following the chain rule.
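The weight computation can be sketched in a few lines. The probabilities and TD errors below are made-up numbers, and the linear schedule for $\beta$ is just one plausible choice, with `beta_start=0.4` taken from the range mentioned above.

```python
import numpy as np

def importance_weights(probs, buffer_size, beta):
    """w_i = (1/N * 1/P(i))^beta, rescaled so the largest weight in the batch is 1."""
    weights = (1.0 / (buffer_size * probs)) ** beta
    return weights / weights.max()

def linear_beta(step, total_steps, beta_start=0.4):
    """Anneal beta linearly from beta_start to 1 (one possible schedule)."""
    fraction = min(step / total_steps, 1.0)
    return beta_start + fraction * (1.0 - beta_start)

# Made-up sampling probabilities P(i) for items drawn from a buffer holding N = 4 items.
probs = np.array([0.1, 0.4, 0.25, 0.25])
td_errors = np.array([0.5, -2.0, 0.1, 1.0])

w = importance_weights(probs, buffer_size=4, beta=linear_beta(step=0, total_steps=100))
weighted_td = w * td_errors  # w_i * delta_i, the term that scales the gradient of Q_theta(s_t, a_t)
```

The item with the largest $P(i)$ ends up with the smallest weight, and if every $P(i)$ equals $1/N$ all the weights come out as one.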

The PER paper shows that PER+(D)DQN outperforms uniform sampling on 41 out of 49 Atari 2600 games, though exactly which 8 games it did not improve on is unclear. From looking at Figure 3 (which uses Double DQN, not DQN), perhaps Robotank, Defender, Tutankham, Boxing, Bowling, BankHeist, Centipede, and Yar’s Revenge? I wouldn’t get too bogged down with the details; the benefits of PER are abundantly clear.

As a testament to the importance of prioritization, the Rainbow DQN paper showed that prioritization was perhaps the most essential extension for obtaining high scores on Atari games. Granted, their prioritization was based not on absolute TD error but based on a Kullback-Leibler loss because of their use of distributional DQNs, but the main logic might still apply to TD error.

Prioritization can be applied to other applications of experience replay. For example, suppose we wanted to add extra samples to the buffer from some “demonstrator” as in Deep Q-Learning from Demonstrations (blog post here). We can keep the same replay buffer code as earlier, but reserve the first $k$ items in the list for demonstrator samples. Then our indexing for overwriting older samples from the current agent must skip over the first $k$ items. It might be simplest to record this by adding a flag $f_t$ to the sample indicating whether it is a demonstrator or current agent sample. You can probably see why researchers prefer to write $(s_t,a_t,r_t,s_{t+1})$ without all the annoying flags and extra terms! To apply prioritization, one can adjust the raw values $p_i$ to increase those from the demonstrator.
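A minimal sketch of the indexing idea, assuming a plain Python list as the buffer and a boolean flag in place of $f_t$. The class and its names are hypothetical, and priorities are left out to keep the focus on how overwriting skips the first $k$ slots.

```python
class DemoReplayBuffer:
    """Ring buffer whose first k slots hold demonstrator data and are never overwritten."""

    def __init__(self, capacity, demos):
        assert len(demos) < capacity
        self.capacity = capacity
        self.k = len(demos)
        # Each item is (transition, is_demo); the flag plays the role of f_t.
        self.items = [(d, True) for d in demos]
        self.next_index = self.k

    def add(self, transition):
        """Insert an agent transition, overwriting the oldest agent slot when full."""
        if len(self.items) < self.capacity:
            self.items.append((transition, False))
        else:
            self.items[self.next_index] = (transition, False)
        # Advance within [k, capacity), skipping the protected demonstrator slots.
        self.next_index = self.k + (self.next_index - self.k + 1) % (self.capacity - self.k)

buffer = DemoReplayBuffer(capacity=5, demos=["demo0", "demo1"])
for t in range(7):
    buffer.add(f"agent{t}")
```

After seven agent insertions into five slots, the two demonstrator entries are still in place and only the agent slots have been recycled.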

I hope this was an illuminating overview of prioritized experience replay. For details, I refer you (again) to the paper and to an open-source implementation from OpenAI. Happy reading!

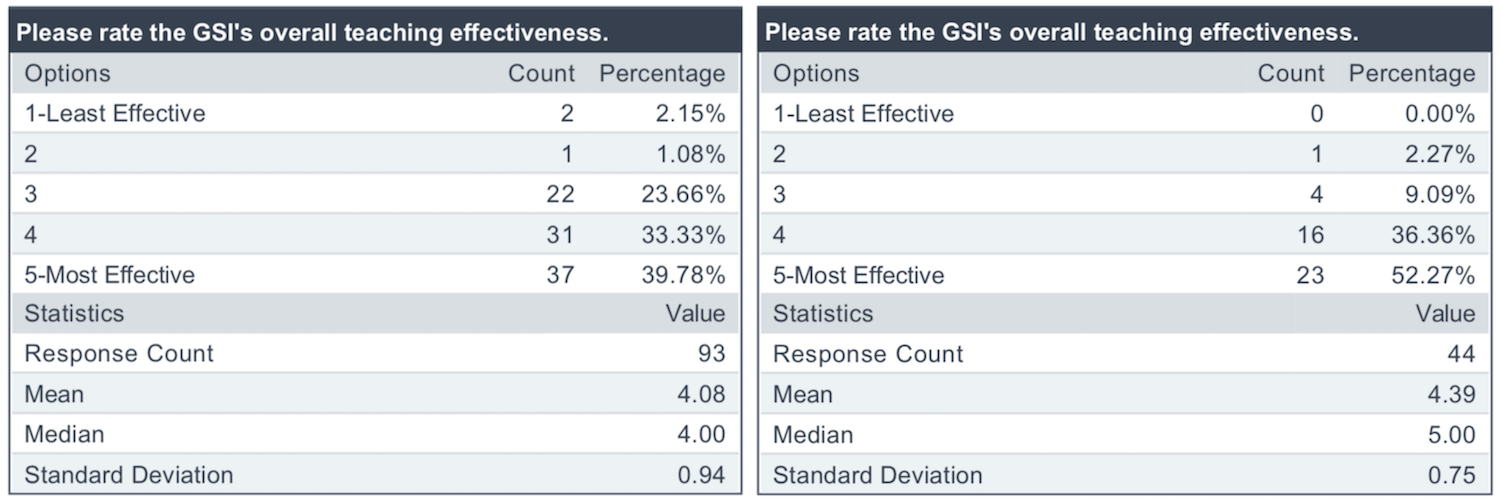

My Second Graduate Student Instructor Experience for CS 182/282A (Previously 194/294-129)

In Spring 2019, I was the Graduate Student Instructor (i.e., Teaching Assistant) for CS 182/282A, Designing, Visualizing, and Understanding Deep Neural Networks, taught by Professor John Canny. The class was formerly numbered CS 194/294-129, and recently got “upgraded” to have its own three-digit numbers of 182 and 282A for the undergraduate and graduate versions, respectively. The convention for Berkeley EECS courses is that new ones are numbered 194/294-xyz where xyz is a unique set of three digits, and once the course has proven that it is worthy of being treated as a regular course, it gets upgraded to a unique number without the “194/294” prefix.

Judging from my conversations with other Berkeley students who are aware of my blog, my course reviews seem to be a fairly popular category of posts. You can find the full set in the archives and in this other page I have. While most of these course reviews are for classes that I have taken, one of the “reviews” is actually my GSI experience from Fall 2016, when I was the GSI for the first edition of Berkeley’s Deep Learning course. Given that the class will now be taught on a regular basis, and that I just wrapped up my second GSI experience for it, I thought it would be nice to once again dust off my blogging skills and discuss my experience as a course staff member.

Unlike last time, when I was an “emergency” 10-hour GSI, I was a 20-hour GSI for CS 182/282A from the start. At Berkeley, EECS PhD students must GSI for a total of “30 hours.” The “hours” designation means that students are expected to work for that many hours per week in a semester as a GSI, and the sum of all the hours across all semesters must be at least 30. Furthermore, at least one of the courses must be an undergraduate course.1 As a 20-hour GSI for CS 182/282A and a 10-hour GSI for the Fall 2016 edition, I have now fulfilled my teaching requirements for the UC Berkeley EECS PhD program.

That being said, let us turn to the course itself.

Course Overview and Logistics

CS 182/282A can be thought of as a mix between 231n and 224n from Stanford. Indeed, I frequently watched lectures or reviewed the notes from those courses to brush up on the material here. Berkeley’s on a semester system, whereas Stanford has quarters, so we are able to cover slightly more material than 231n or 224n alone. We cover some deep reinforcement learning in 182/282A, but that is also a small part of 231n.

In terms of course logistics, the bad news was obvious when the schedule came out. CS 182/282A had lectures on Mondays and Wednesdays, at 8:00am. Ouch. That’s hard on the students; I wish we had a later time, but I think the course was added late to the catalog so we were assigned to the least desirable time slot. My perspective on lecture times is that as a student, I would enjoy an early time because I am a morning person, and thus earlier times fit right into my schedule. In contrast, as a course staff member who hopes to see as many students attend lectures as possible, I prefer early afternoon slots when it’s more likely that we get closer to full attendance.

Throughout the semester, there were only three mornings when the lecture room was crowded: on day one, and on the two in-class midterms. That’s it! Lecture attendance for 182/282A was abysmal. I attended nearly all the lectures, and by the end of the semester, I observed we were only getting about 20 students per lecture, out of a class size of (judging by the number listed on the course evaluations) perhaps 235 students!

Incidentally, the reason for everyone showing up on day one is that I think students on the waiting list have to show up on the first day if they want a chance of getting in the class. The course staff got a lot of requests from students asking if they could get off the waiting list. Unfortunately I don’t think I or anyone on the course staff had control over this, so I was unable to help. I really wish the EECS department had a better way to state unequivocally whether a student can get in a class or not, and I am somewhat confused as to why students constantly ask this question. Do other classes have course staff members deal with the waiting list?

One logistical detail that is unique to me is sign language interpreting services. Normally, Berkeley’s Disabled Students’ Program (DSP) pays for sign language services for courses and lab meetings, since this is part of my academic experience. Since I was getting paid by the EECS department, however, DSP told me that the EECS department had to do the payment. Fortunately, this detail was quickly resolved by the excellent administrators at DSP and EECS, and the funding details were abstracted away from me.

Discussion Sections

Part of our GSI duties for 182/282A is that we need to host discussions or sections; I use the terms interchangeably, and sometimes together, and another term is “recitations” as in this blog post from 4.5 years ago. Once a week, each GSI was in charge of two discussions, which are each a 50-minute lecture we give to a smaller audience of students. This allows for a more intimate learning environment, where students may feel more comfortable asking questions as compared to the normal lectures (with a terrible time slot).

The discussions did not start well. They were scheduled only on Mondays, with some overlapping time slots, which seemed like a waste of resources. We first polled the students to see the best times for them, and then requested the changes to the scheduling administrators in the department. After several rounds of ambiguous email exchanges, we got a stern and final response from one of them, who said the students “were not six year olds” and were responsible for knowing the discussion schedule since it was posted ahead of time.

To the students who had scheduling conflicts with the sections, I apologize, but we tried.

We also got off to a rocky start with the material we chose to present. The first discussion was based on Michael I. Jordan’s probabilistic graphical models notes2 to describe the connection between Naive Bayes and Logistic Regression. Upon seeing our discussion material, John chastised us for relying on graduate-level notes, and told us to present his simpler ideas instead. Sadly, I did not receive his email until after I had already given my two discussion sections, since he sent it while I was presenting.

I wish we had started off a bit easier to help some students gradually get acclimated to the mathematics. Hopefully after the first week, the discussion material was at a more appropriate difficulty level. I hope the students enjoyed the sections. I certainly did! It was fun to lecture and to throw in the occasional (OK, frequent) joke.

Preparing for the sections meant that I needed to know the material and anticipate the questions students might ask. I dedicated my entire Sundays (from morning to 11:00pm) to preparing for the sections by reviewing the relevant concepts. Each week, one GSI took the lead in forming the notes, which dramatically helped to simplify the workload.

At the end of the course, John praised us (the GSIs) for our hard work on the notes, and said he would reuse them in future iterations of the course.

Piazza and Office Hours

I had ZERO ZERO ZERO people show up to office hours throughout the ENTIRE semester in Fall 2016. I don’t even know how that is humanly possible.

I did not want to repeat that “accomplishment” this year. I had high hopes that in Spring 2019, with the class growing larger, more students would come to office hours. Right? RIGHT?!?

It did not start off well. Not a single student showed up to my office hours after the first discussion. I was fed up, so at the beginning of my section the next week, I wrote down on the board: “number of people who showed up to my office hours”. “Anyone want to guess?” I asked the students. When no one answered, I wrote a big fat zero on the board, eliciting a few chuckles.

Fortunately, once the homework assignments started, a nonzero number of students showed up to my office hours, so I no longer had to complain.

Students were reasonably active on Piazza, which is expected for a course this large with many undergraduate students. One thing that was also expected — this one not so good — was that many students ran into technical difficulties when doing the homework assignments, and posted incomplete reports on Piazza. Their reports were written in a way that made it hard for the course staff to adequately address them.

This has happened in previous iterations of the course, so John wrote this page on the course website which has a brief and beautiful high-level explanation of how to properly file an issue report. I’m copying some of his words here because they are just so devastatingly effective:

If you have a technical issue with Python, EC2 etc., please follow these guidelines when you report an issue in Piazza. Most issues are relatively easy to resolve when a good report is given. And the process of creating a good Issue Report will often help you fix the problem without getting help - i.e. when you write down or copy/paste the exact actions you took, you will usually discover if you made a slip somewhere.

Unfortunately many of the issue reports we get are incomplete. The effect of this is that a simple problem becomes a major issue to resolve, and staff and students go back-and-force trying to extract more information.

[…]

Well said! Whenever I felt stressed throughout the semester due to teaching or other reasons, I would often go back and read those words on that course webpage, which brought me a dose of sanity and relief. Ahhhhh.

The above is precisely why I have very few questions on StackOverflow and other similar “discussion forums.” The following has happened to me so frequently: I draft a StackOverflow post and structure it by saying that I tried this and that and … oh, I just realized I solved what I wanted to ask!

For an example of how I file (borderline excessive) issue reports, please see this one that I wrote for OpenAI baselines about how their DDPG algorithm does not work. (But what does “does not work” mean?? Read the issue report to find out!)

I think I was probably spending too much time on Piazza this semester. The problem is that I get this uncontrollable urge to respond to student questions.3 I had the same problem when I was a student, since I was constantly trying to answer Piazza questions that other students had. I am proud to have accumulated a long list of “An instructor […] endorsed this answer” marks.

The advantage of my heavy Piazza scrutiny was that I was able to somewhat gauge which students should get a slight participation bonus for helping others on Piazza. Officially, participation was 10% of the grade, but in practice, none of us actually knew what that meant. Students were constantly asking the course staff what their “participation grade” actually meant, and I was never able to get a firm answer from the other course staff members. I hope this is clarified better in future iterations of 182/282A.

Near the end of the grading period, we finally decided that part of participation would consist of slight bonuses to the top few students who were most helpful on Piazza. It took me four hours to scan through Piazza and to send John a list of the students who got the bonus. This was a binary bonus: students could get either nothing or the bonus. Obviously, we didn’t announce this to the students, because we would get endless complaints from those who felt that they were near the cutoff for getting credit.

Homework Assignments

We had four challenging homework assignments for 182/282A, all of which were bundled with Python and Jupyter notebooks:

- The first two came straight from the 231n class at Stanford, but we actually took their second and third assignments, and skipped their first one. Last I checked, the first assignment for 231n is mostly an introduction to machine learning and taking gradients, the second is largely about convolutional neural networks, and the third is about recurrent neural networks with a pinch of Generative Adversarial Networks (GANs). Since we skipped the first homework assignment from 231n, this might have made our course relatively harder, but fortunately for the students, we did not ask them to do the GANs part of Stanford’s assignment.

- The third homework was on NLP and the Transformer architecture (see my blog post here). One of the other GSIs designed this from the ground up, so it was unique for the class. We provided a lot of starter code for the students, and asked them to implement several modules for the Transformer. Given that this was the first iteration of the assignment, we got a lot of Piazza questions about code usage and correctness. I hope this was educational to the students! Doing the homework myself (to stress test it beforehand) was certainly helpful for me.

- The fourth homework was on deep reinforcement learning, and I designed this one. It took a surprisingly long time, even though I borrowed lots of the code from elsewhere. My original plan was actually to get the students to implement Deep Q-Learning from Demonstrations (blog post here) because that’s an algorithm that nicely combines imitation and reinforcement learning, and I have an implementation (actually, two) in a private repository which I could adapt for the assignment. But John encouraged me to keep it simple, so we stuck with the usual “Intro to DeepRL” combination of Vanilla Policy Gradients and Deep Q-learning.